What is an API Waterfall? Explained Simply

In the intricate world of modern software development, where applications are increasingly built from modular, interconnected services, the concept of an API is paramount. APIs, or Application Programming Interfaces, serve as the fundamental contracts that allow different software components to communicate and interact. They are the invisible sinews that bind together the disparate parts of a complex digital ecosystem, from mobile apps fetching data from a backend server to microservices orchestrating a multi-step business process. However, as systems grow in complexity and functionality, these individual API calls rarely stand in isolation. Instead, they often form a series of interconnected dependencies, where the outcome of one API call directly influences or necessitates subsequent calls. This sequential, often cascading pattern of API interactions, where data flows from one service to another, creating a chain of operations, is what we metaphorically refer to as an "API Waterfall."

The term "API Waterfall" isn't a formally standardized technical definition found in RFCs or academic papers. Instead, it's an intuitive metaphor that perfectly encapsulates the reality of how many modern applications function. Much like a natural waterfall where water cascades from one level to the next, gaining momentum and transforming as it flows, an API waterfall describes a scenario where data and requests flow through a series of APIs. Each step in this cascade performs a specific function, processes data, and then passes the modified or augmented information down to the next API in the sequence. This intricate dance of data and logic forms the backbone of almost every dynamic application we use daily, yet it introduces significant challenges in terms of performance, reliability, and management. Understanding the nature of an API waterfall, its implications, and the architectural solutions designed to manage it, such as an API Gateway, is crucial for anyone involved in designing, developing, or maintaining robust digital systems.

The digital landscape is a vast ocean of interconnected services, and APIs are the currents that drive the flow of information. Imagine a user placing an order on an e-commerce website. This seemingly simple action triggers a cascade of API calls: first, to authenticate the user, then to check inventory levels for the requested items, followed by processing the payment, updating the order status, generating a shipping label, and finally, sending a confirmation email. Each of these steps might involve interacting with a different microservice or even an external third-party service. The successful completion of the payment API call, for instance, is a prerequisite for the shipping label generation API call. If any one of these steps fails or introduces undue latency, the entire process is disrupted, leading to a poor user experience or even system failure. This sequence of interdependent operations, where each API call builds upon the previous one, is the very essence of an API waterfall. It is a powerful pattern that enables complex functionalities but also presents a formidable management challenge, necessitating sophisticated solutions to ensure seamless operation.

The Core Concept of an API Waterfall: Chained Dependencies in Action

At its heart, an API waterfall is about dependency. It describes a situation where a single user request or application process necessitates multiple distinct API calls, executed in a specific order, often with data flowing from the output of one call into the input of the next. This isn't just about parallel requests; it's about a sequential, often conditional, execution flow that creates a dependency chain.

Consider a practical example beyond e-commerce: a social media application. When you open your feed, it’s not a single API call that magically materializes all the content. Instead, an API Waterfall unfolds:

- User Authentication API Call: First, the application needs to verify your identity and session. This involves an API call to an authentication service, which might return a user ID and access token.

- Profile Data API Call: With your authenticated user ID, the application then makes an API call to a profile service to fetch your basic information (name, avatar, preferences).

- Friends List API Call: Simultaneously or subsequently, another API call goes to a social graph service to retrieve a list of your friends or connections.

- Content Aggregation API Call: Using the friends list, the application might then make multiple API calls to a post service, retrieving recent updates from each of your friends. This could involve filtering based on your preferences fetched earlier.

- Media Processing API Call: If posts contain images or videos, further API calls might go to a media service for transcoding, resizing, or content delivery network (CDN) links.

- Recommendation Engine API Call: Based on your past interactions and preferences, an API call to a recommendation engine might fetch suggestions for new content or people to follow.

- Advertisement Service API Call: Finally, an API call to an advertising service might fetch targeted ads to be displayed alongside the content.

Each of these steps relies on the successful completion and data output of preceding steps. For instance, you can't fetch posts from friends until you know who your friends are, and you can't know who your friends are until you're authenticated. This interconnectedness creates a delicate balance. A delay or failure in the authentication api call can cascade down the entire chain, preventing the feed from loading or displaying incorrect information. This complex orchestration of calls, often invisible to the end-user, is precisely what an API waterfall entails.
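The dependency chain in the feed example can be sketched in a few lines of Python. This is only an illustration: the three service functions, their return values, and the short sleeps standing in for network hops are all hypothetical.

```python
import asyncio

# Hypothetical stand-ins for backend services; each sleep mimics one network hop.
async def authenticate(session_token: str) -> dict:
    await asyncio.sleep(0.01)
    return {"user_id": "u1", "access_token": "t-" + session_token}

async def fetch_friends(user_id: str) -> list[str]:
    await asyncio.sleep(0.01)
    return ["u2", "u3"]

async def fetch_posts(friend_id: str) -> list[str]:
    await asyncio.sleep(0.01)
    return [f"post from {friend_id}"]

async def load_feed(session_token: str) -> list[str]:
    # Step 1 must finish first: the friends call needs the user ID.
    auth = await authenticate(session_token)
    # Step 2 must finish next: the post calls need the friend IDs.
    friends = await fetch_friends(auth["user_id"])
    feed: list[str] = []
    for friend_id in friends:
        # Step 3 runs once per friend, one call after another.
        feed.extend(await fetch_posts(friend_id))
    return feed
```

Calling `asyncio.run(load_feed("abc"))` walks the whole chain. If `authenticate` raised an exception, none of the later calls would run, which is the cascading failure described above.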

The proliferation of microservices architectures has only intensified the prevalence of API waterfalls. In a monolithic application, different modules might communicate directly within the same process. However, in a microservices setup, even internal communication between components typically occurs via api calls over a network. This distributed nature, while offering immense benefits in scalability and maintainability, inherently introduces the challenges of managing these waterfalls. Each service becomes an api endpoint, and complex business logic is often decomposed into a sequence of calls across these independent services. The challenges arising from these dependencies are multifaceted, including:

- Increased Latency: Each hop in the waterfall adds network latency, processing time, and serialization/deserialization overhead. A chain of ten sequential API calls, each taking 50ms, adds up to roughly 500ms, a noticeable delay for the end-user even before considering network fluctuations.

- Error Propagation: A failure in one API call in the middle of the sequence can cause the entire waterfall to collapse. Without proper error handling, a small glitch in a single service can bring down a larger application feature.

- Complexity for Client Applications: Client applications (mobile apps, web browsers) might need to manage the orchestration of these multiple API calls themselves. This complicates client-side development, increases bandwidth usage, and makes the client more susceptible to backend changes.

- Security Concerns: Each API call represents a potential attack vector. Managing authentication and authorization across a series of interdependent services can be challenging and prone to misconfigurations if not handled centrally.

- Monitoring and Troubleshooting: Diagnosing issues in an API Waterfall can be incredibly difficult. Pinpointing which specific API call in a long chain is causing a problem requires sophisticated logging, tracing, and monitoring tools to track requests as they traverse multiple services.

These challenges highlight the critical need for a robust architectural component that can effectively manage and optimize these complex API Waterfalls. This is precisely where the API Gateway steps in, acting as a crucial intermediary to simplify, secure, and accelerate the flow of data through these cascading API interactions.

The Indispensable Role of an API Gateway in Managing the Waterfall

Given the inherent complexities and potential pitfalls of an API Waterfall, a strategic architectural pattern has emerged as the cornerstone for managing these distributed interactions: the API Gateway. An API Gateway is essentially a single entry point for all client requests, acting as a reverse proxy that sits in front of multiple backend services. Instead of client applications having to directly interact with numerous individual api endpoints, they communicate solely with the API Gateway. This gateway then takes on the responsibility of routing requests to the appropriate backend service, aggregating responses, applying security policies, and performing various other cross-cutting concerns before sending a unified response back to the client.

The significance of an API Gateway in the context of an API Waterfall cannot be overstated. It transforms a client's perspective of a convoluted series of interdependent api calls into a single, simplified interaction. For instance, in our social media feed example, instead of the mobile app making seven or eight distinct api calls to different microservices, it might make just one api call to the API Gateway for "get_my_feed." The gateway then orchestrates all the underlying api waterfall calls, collects the results, and constructs a consolidated response tailored for the client.

This centralization of the entry point offers a multitude of benefits, directly addressing the challenges posed by API Waterfalls:

- Request Routing and Load Balancing: The API Gateway intelligently directs incoming requests to the correct backend service. It can employ sophisticated load balancing algorithms to distribute traffic evenly across multiple instances of a service, enhancing performance and resilience. If a particular service is overloaded or fails, the gateway can reroute requests to healthy instances, preventing a complete collapse of the API waterfall.

- Authentication and Authorization: Instead of each backend service needing to implement its own authentication and authorization logic, the API Gateway can handle this centrally. It verifies access tokens, checks permissions, and can inject user context into requests before forwarding them to backend services. This simplifies security management, reduces the attack surface, and ensures consistent security policies across all API calls in the waterfall.

- Rate Limiting and Throttling: To protect backend services from being overwhelmed by excessive requests, the API Gateway can enforce rate limits. It can prevent specific clients or IP addresses from making too many API calls within a given timeframe, effectively containing a potential "denial of service" scenario and ensuring stability for all services in the API waterfall.

- Request/Response Transformation: Client applications often require data in a specific format, which might differ from the native format produced by backend services. The API Gateway can transform request payloads or response bodies on the fly, tailoring the data to suit the client's needs. This capability is invaluable for managing versioning, supporting different client types (e.g., mobile vs. web), and abstracting underlying service changes from consumers.

- Caching: To reduce latency and lighten the load on backend services, the API Gateway can cache responses to frequently requested API calls. When a subsequent identical request arrives, the gateway can serve the cached response immediately, bypassing the entire API waterfall for that particular data, significantly improving performance.

- Monitoring and Logging: As the single point of entry, the API Gateway is an ideal place to collect comprehensive metrics, logs, and traces for all API calls. This central observability provides unparalleled insights into the health, performance, and usage patterns of the entire system, making it much easier to identify bottlenecks or troubleshoot issues within an API waterfall.

The API Gateway serves as a vital control point, not just for individual api calls, but for the entire API Waterfall. By centralizing common concerns, it allows backend services to focus purely on their specific business logic, leading to more cohesive, scalable, and manageable architectures. In essence, it takes the burden of orchestrating complex api sequences away from client applications and places it on a dedicated, robust infrastructure component.
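To make one of these cross-cutting concerns concrete, here is a minimal sketch of rate limiting with a token bucket, one common algorithm for the job. It is not how any particular gateway implements throttling; the class name, parameters, and injectable clock are assumptions chosen for illustration.

```python
import time

class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock  # injectable so the behaviour can be tested without sleeping
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # the caller would typically answer with HTTP 429
```

A gateway would usually keep one bucket per client or API key and check `allow()` before forwarding a request into the waterfall.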

For organizations dealing with a high volume of diverse api calls, especially those incorporating cutting-edge technologies like Artificial Intelligence, the selection of a capable API Gateway becomes a strategic decision. Products like APIPark stand out in this evolving landscape. APIPark, an open-source AI gateway and API management platform, is specifically designed to address the challenges of integrating and managing both traditional RESTful services and modern AI models. Its capabilities, such as quick integration of 100+ AI models, unified api format for AI invocation, and prompt encapsulation into REST APIs, directly contribute to simplifying and optimizing even the most complex API Waterfalls that involve AI processing. By providing end-to-end api lifecycle management, from design to decommissioning, and enabling features like team service sharing and independent tenant permissions, APIPark helps enterprises to not only manage the "waterfall" of conventional apis but also to navigate the emerging complexities of AI-driven api interactions with greater efficiency and security.

Deep Dive into API Gateway Capabilities for Waterfall Optimization

To truly appreciate the power of an API Gateway in taming the API Waterfall, it's essential to delve deeper into its advanced capabilities that specifically contribute to optimizing these chained interactions. These features are not merely about routing requests; they are about intelligently managing the flow, enhancing resilience, and providing crucial insights into the performance of interconnected services.

1. Request Aggregation and Composition

One of the most potent features of an API Gateway for API Waterfalls is its ability to perform request aggregation and composition. Instead of a client making multiple individual api calls to fetch related pieces of data (e.g., user profile, order history, payment methods), the gateway can expose a single, aggregated endpoint. When a client calls this endpoint, the API Gateway internally fans out the request to several backend services concurrently or sequentially, orchestrating the api waterfall itself. It then collects all the individual responses, combines them, potentially transforms them, and returns a single, unified response to the client.

This capability significantly reduces the "chattiness" between the client and the backend, minimizing network overhead and overall perceived latency. For example, a mobile application displaying a user dashboard might need data from five different microservices. Without a gateway for aggregation, the client makes five separate api calls, each with its own handshake, latency, and parsing overhead. With aggregation, the client makes one call to the gateway, which then handles the five internal api waterfall calls efficiently, perhaps even in parallel where possible, before sending back a consolidated JSON object. This not only simplifies client-side development but also drastically improves performance for resource-constrained devices or high-latency networks.
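A rough sketch of such an aggregated endpoint, using `asyncio.gather` from the Python standard library to fan out to independent backends concurrently. The three backend functions and the shape of the combined response are hypothetical.

```python
import asyncio

# Hypothetical backend calls; the sleeps stand in for network round trips.
async def get_profile(user_id: str) -> dict:
    await asyncio.sleep(0.05)
    return {"name": "Ada"}

async def get_orders(user_id: str) -> list:
    await asyncio.sleep(0.05)
    return [{"order_id": 1}]

async def get_payment_methods(user_id: str) -> list:
    await asyncio.sleep(0.05)
    return ["card-1234"]

async def get_dashboard(user_id: str) -> dict:
    # None of the three calls needs another's output, so they can run concurrently.
    profile, orders, payments = await asyncio.gather(
        get_profile(user_id),
        get_orders(user_id),
        get_payment_methods(user_id),
    )
    # One consolidated object goes back to the client.
    return {"profile": profile, "orders": orders, "payment_methods": payments}
```

Because the calls overlap, the aggregated request takes about as long as the slowest backend rather than the sum of all three.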

2. Protocol Translation and Abstraction

In complex enterprise environments, it's common to find a mix of communication protocols. Some services might expose RESTful APIs over HTTP, others might use GraphQL, SOAP, or binary RPC protocols such as gRPC. An API Gateway can act as a universal translator, abstracting these underlying protocol differences from the client. It can accept requests in one protocol (e.g., REST from a web browser) and translate them into another (e.g., gRPC for a backend microservice), and then reverse the process for the response.

This capability is invaluable for API Waterfalls that span legacy systems, new microservices, and third-party integrations. It allows developers to choose the most appropriate protocol for each service without forcing clients to deal with the heterogeneity. The gateway essentially normalizes the communication, providing a consistent api interface to consumers, regardless of the diverse technologies powering the various steps in the waterfall. This significantly reduces integration complexity and future-proofs client applications against changes in backend technologies.

3. Centralized Security Policies and Enforcement

As mentioned earlier, security is paramount. In an API Waterfall involving numerous services, ensuring consistent security across all api calls can be a nightmare. The API Gateway centralizes this responsibility. Beyond basic authentication and authorization, it can enforce more sophisticated security policies:

- OAuth2/OIDC Token Validation: Validating JWTs, refreshing tokens, and managing sessions centrally.

- API Key Management: Issuing, revoking, and validating API keys.

- IP Whitelisting/Blacklisting: Controlling access based on source IP addresses.

- Data Masking/Redaction: Preventing sensitive information from leaving the gateway or being logged unnecessarily.

- Threat Protection: Detecting and mitigating common web vulnerabilities like SQL injection or cross-site scripting (XSS) at the gateway level.

By enforcing these policies at the gateway, all api calls in the waterfall benefit from a consistent security posture. This eliminates the need for each backend service to implement and maintain complex security logic, reducing development effort and the risk of security vulnerabilities propagating through the system. For features like resource access requiring approval, as offered by APIPark, the gateway can prevent unauthorized api calls until administrators grant explicit subscription approval, adding another layer of robust access control.
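As a small illustration of central enforcement, the sketch below combines two of the listed policies, API key validation and IP allow-listing, in one check that would run before any backend call. The key store, network range, and status messages are made up for the example; a real deployment would load them from configuration or a secrets store.

```python
import hmac
import ipaddress

# Hypothetical policy data for illustration only.
API_KEYS = {"client-a": "s3cr3t-a"}
ALLOWED_NETWORKS = [ipaddress.ip_network("10.0.0.0/8")]

def authorize(client_id: str, api_key: str, source_ip: str) -> tuple[int, str]:
    """Return an (HTTP status, message) pair for the incoming request."""
    expected = API_KEYS.get(client_id)
    # compare_digest avoids leaking key contents through timing differences.
    if expected is None or not hmac.compare_digest(expected.encode(), api_key.encode()):
        return 401, "invalid API key"
    address = ipaddress.ip_address(source_ip)
    if not any(address in network for network in ALLOWED_NETWORKS):
        return 403, "source IP not allowed"
    return 200, "ok"
```

Only requests that come back with status 200 would be forwarded into the waterfall, so the backend services never see unauthenticated traffic.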

4. Observability and Monitoring for the Waterfall

Understanding the performance and health of individual api calls within a complex API Waterfall is critical for troubleshooting and optimization. An API Gateway serves as an ideal vantage point for comprehensive observability:

- Request Logging: It can log every incoming and outgoing request, including headers, payloads, timestamps, and response codes. This detailed logging is essential for auditing, debugging, and understanding API usage patterns. APIPark, for instance, provides comprehensive logging capabilities, recording every detail of each API call, allowing businesses to quickly trace and troubleshoot issues.

- Metrics Collection: The gateway can collect a wealth of metrics, such as request counts, error rates, latency distribution, and throughput for each API endpoint. These metrics provide real-time insights into the gateway's performance and the health of the underlying services it interacts with.

- Distributed Tracing: Integrating with distributed tracing systems (e.g., OpenTelemetry, Jaeger) allows the API Gateway to inject correlation IDs into requests. These IDs propagate through every API call in the waterfall across various backend services, enabling developers to visualize the entire request flow, identify bottlenecks, and pinpoint exactly where latency or errors occur in the cascade.

- Powerful Data Analysis: By analyzing historical call data, API Gateway platforms can display long-term trends and performance changes. This predictive capability, as highlighted by APIPark's powerful data analysis features, helps businesses with preventive maintenance, identifying potential issues before they impact users.

Centralized monitoring at the gateway provides a holistic view of the API Waterfall, making it dramatically easier to diagnose performance regressions or intermittent failures that might otherwise be obscured across dozens of interdependent services.
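The correlation-ID idea can be sketched without any tracing library. The header name `X-Correlation-ID` is a common convention rather than something the text prescribes, and the service names and in-memory log are placeholders for real downstream calls and a real log sink.

```python
import uuid

CORRELATION_HEADER = "X-Correlation-ID"  # assumed header name

def with_correlation_id(headers: dict) -> dict:
    """Copy the headers, adding a fresh ID only if the caller did not send one."""
    out = dict(headers)
    out.setdefault(CORRELATION_HEADER, str(uuid.uuid4()))
    return out

def call_service(name: str, headers: dict, log: list) -> None:
    # A real service would forward the same header on its own outgoing calls.
    log.append((name, headers[CORRELATION_HEADER]))

def handle_request(incoming_headers: dict) -> list:
    headers = with_correlation_id(incoming_headers)
    log: list = []
    for service in ("auth", "profile", "posts"):
        call_service(service, headers, log)
    return log
```

Every log entry produced for one request carries the same ID, so filtering logs by that value reconstructs the path the request took through the waterfall.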

5. Resilience Patterns and Fault Tolerance

In an API Waterfall, a failure in one service can easily cascade and bring down the entire application. The API Gateway can implement various resilience patterns to prevent such catastrophic failures:

- Circuit Breakers: If a backend service starts consistently failing or timing out, the gateway can "open the circuit" to that service, preventing further requests from being sent to it for a defined period. This gives the failing service time to recover, prevents the gateway from wasting resources on doomed requests, and ensures that other parts of the API Waterfall (or other APIs entirely) can continue functioning.

- Retries: For transient errors, the gateway can automatically retry API calls a few times before deeming them failed. This can gracefully handle temporary network glitches or brief service availability issues without exposing them to the client.

- Timeouts: Enforcing strict timeouts for backend API calls ensures that no single slow service can indefinitely hold up the entire API Waterfall. If a service doesn't respond within the specified time, the gateway can fail fast, allowing for alternative strategies or returning an immediate error to the client.

- Fallbacks: In some scenarios, if a primary backend service fails, the gateway can be configured to call a fallback service or return a default, cached, or degraded response. This ensures a more graceful degradation of service rather than a complete outage.

These resilience patterns, when implemented at the API Gateway, significantly enhance the fault tolerance of the entire system. They protect the api waterfall from localized failures, preventing them from propagating upstream to clients or downstream to other dependent services. This strategic implementation ensures that the complex dance of api interactions remains robust and reliable, even in the face of partial system failures. The performance benchmarks of platforms like APIPark, rivaling Nginx with high TPS and supporting cluster deployment, further underscore the importance of choosing a robust gateway for handling large-scale traffic and ensuring high availability within these complex api ecosystems.
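A minimal circuit breaker, sketched under simplifying assumptions: it counts consecutive failures, opens after a threshold, and lets a single trial call through once a cool-down has passed. The class, thresholds, and exception name are hypothetical; production gateways typically add per-route state, metrics, and thread safety.

```python
import time

class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""

class CircuitBreaker:
    def __init__(self, max_failures: int = 3, reset_after: float = 30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; failing fast")
            # Cool-down elapsed: allow one trial call; a single failure reopens the circuit.
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # any success resets the failure count
        return result
```

While the circuit is open, callers get an immediate `CircuitOpenError` instead of waiting on a service that is known to be failing, which is where a fallback response could be returned.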

Performance Implications and Visualization of the API Waterfall

The existence of an API Waterfall inherently carries significant performance implications for any application. Every api call in the sequence introduces potential latency, and these individual delays can accumulate rapidly, leading to a sluggish user experience. Understanding these performance dynamics and having the right tools to visualize them is critical for optimization.

Cumulative Latency and Bottlenecks

Each step in an API Waterfall involves several micro-operations: network transmission to the service, service processing time, database queries, external calls from that service, and then network transmission back to the caller (which could be the API Gateway or the client). Even if each individual api call is optimized, the cumulative effect can be substantial. For example, if a user request triggers five sequential api calls, and each call takes an average of 100ms (including network round trip and processing), the total minimum latency for that waterfall would be 500ms – half a second before the client even begins to process the final response. This doesn't account for queuing delays, garbage collection pauses, or database contention.
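The arithmetic generalizes easily. The sketch below models a waterfall as ordered stages, where the calls inside a stage run in parallel. This is a deliberate simplification that ignores queuing and other overheads, and the function names are made up for the example.

```python
def sequential_latency_ms(call_latencies_ms):
    """Total latency when every call waits for the previous one."""
    return sum(call_latencies_ms)

def staged_latency_ms(stages):
    """Total latency when calls within a stage run in parallel and stages run in order.

    Each stage costs as much as its slowest call.
    """
    return sum(max(stage) for stage in stages)

# Five sequential 100 ms calls, as in the example above.
print(sequential_latency_ms([100] * 5))  # 500

# Same five calls, but the middle three do not depend on each other.
print(staged_latency_ms([[100], [100, 100, 100], [100]]))  # 300
```

The second figure shows why separating true dependencies from incidental ordering matters: only the calls on the critical path contribute to the total.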

Identifying bottlenecks in such a chain is paramount. A single slow api in the middle of a long waterfall can bring the entire process to a crawl, overshadowing the efficiency of all other services. Without proper monitoring and tracing, pinpointing this bottleneck can be like finding a needle in a haystack. The API Gateway, by centralizing api traffic and providing comprehensive logging and metrics, becomes the first line of defense in identifying these performance culprits.

The Analogy of a Network Waterfall Chart

While "API Waterfall" primarily refers to the logical chain of dependencies, the term "waterfall chart" is a well-established visualization in network performance analysis, particularly in browser developer tools. This type of waterfall chart visually represents the loading sequence and timing of all resources (HTML, CSS, JavaScript, images, and critically, api calls) that a web page fetches. Each bar in the chart represents a resource, showing:

- Queuing time: How long the request waited before being sent.

- DNS lookup: Time to resolve the domain name.

- Initial connection/SSL handshake: Time to establish a secure connection.

- Time to first byte (TTFB): Time until the first byte of the response is received.

- Content download: Time to download the entire response.

When you look at a network waterfall chart for a complex application, you often see multiple parallel requests, but also clear sequential dependencies for api calls. For instance, an api call for user data might appear first, and then several subsequent api calls for personalized content might begin after the user data api call has completed and provided the necessary user ID. This visual representation strikingly illustrates the "waterfall" of dependencies and their cumulative impact on perceived performance. Understanding how to interpret these charts is invaluable for developers looking to optimize their api interactions.
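For intuition, a text-only imitation of such a chart can be produced from nothing more than start times and durations. This is a toy renderer, not what browser tools do internally; the call names, timings, and scale are invented.

```python
def render_waterfall(calls, ms_per_char=50):
    """Render (name, start_ms, duration_ms) tuples as rows of offset bars."""
    lines = []
    for name, start_ms, duration_ms in calls:
        offset = " " * (start_ms // ms_per_char)
        bar = "#" * max(1, duration_ms // ms_per_char)  # always draw at least one mark
        lines.append(f"{name:<10}|{offset}{bar}")
    return "\n".join(lines)

print(render_waterfall([
    ("user", 0, 100),       # must finish first: it supplies the user ID
    ("content", 100, 150),  # starts only after "user" completes
    ("ads", 100, 100),      # also waits on "user", but runs alongside "content"
]))
```

Rows whose bars start where an earlier bar ends reveal a sequential dependency, while bars that overlap show calls running in parallel.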

Strategies for Performance Optimization within an API Waterfall

Optimizing an API Waterfall involves a multi-pronged approach, often leveraging the capabilities of an API Gateway:

- Parallelization of Independent Calls: Where possible, identify API calls in the waterfall that do not depend on the output of preceding calls. The API Gateway can execute these calls in parallel, significantly reducing the total execution time. For instance, fetching a user's profile and their friends list can often happen concurrently.

- Caching at the Gateway Level: As discussed, intelligent caching of responses for immutable or frequently accessed data at the API Gateway can bypass entire API waterfalls for subsequent requests, dramatically reducing latency and backend load.

- Reducing Chatty APIs (Aggregation): Design APIs and use the gateway's aggregation capabilities to combine multiple small requests into fewer, larger ones. Instead of fetching "name," then "address," then "phone number" via three separate API calls, have a single "get_user_details" API call that returns all necessary information.

- Optimizing Individual Service Performance: While the gateway helps manage the waterfall, the performance of each individual service within the chain remains critical. This involves optimizing database queries, improving code efficiency, reducing external service calls, and ensuring services are adequately provisioned.

- Efficient Data Transfer Formats: Using compact and efficient data serialization formats (e.g., Protobuf, MessagePack) over verbose ones (like XML) can reduce network payload sizes, leading to faster data transmission within the waterfall.

- Asynchronous Processing for Non-Critical Steps: For API calls that are not immediately critical to the client's response (e.g., sending an audit log to a separate service, updating a statistics counter), consider processing them asynchronously after the primary response has been sent. The API Gateway can facilitate this by immediately returning a success response to the client while queuing the non-critical backend API calls for later execution.

- Content Delivery Networks (CDNs): For static assets delivered via APIs (e.g., images, videos, large documents), leveraging CDNs can significantly reduce the load on backend services and improve delivery speed by serving content from edge locations closer to the user. While not directly an API Waterfall optimization, it reduces the overall perceived latency by offloading what could otherwise be API calls for static content.

By systematically applying these optimization strategies, often orchestrated and facilitated by a robust API Gateway, organizations can significantly improve the performance of their API Waterfalls, leading to more responsive applications and a superior user experience. This holistic approach ensures that not only are individual apis performant, but their collective impact, as they cascade through the system, is also optimized.
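Gateway-level caching, one of the strategies above, can be approximated with a small time-to-live cache. This sketch assumes responses may safely be reused for a fixed number of seconds; the class and method names are hypothetical, and it does not handle eviction or concurrent access.

```python
import time

class TTLCache:
    """Reuse a fetched response until it is older than `ttl_seconds`."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self.store = {}  # key -> (stored_at, value)

    def get_or_fetch(self, key, fetch):
        now = self.clock()
        entry = self.store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            return entry[1]  # cache hit: the backend waterfall is skipped entirely
        value = fetch()  # cache miss: run the real calls
        self.store[key] = (now, value)
        return value
```

Here `fetch` would be whatever runs the underlying waterfall, so a hit saves every call in the chain rather than just one.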

Building and Managing API Waterfalls Effectively

The successful management of API Waterfalls extends beyond merely deploying an API Gateway. It encompasses a holistic approach to api design, development, documentation, and continuous monitoring. Building effective API Waterfalls requires foresight, discipline, and the right tools to navigate the inherent complexities.

Design Principles for Resilient API Chains

Designing apis with the understanding that they will likely participate in a waterfall requires a shift in perspective. Each api should be designed not just for its standalone functionality but also for its role within a broader sequence of operations.

- Loose Coupling: Aim for services that are as independent as possible. While dependencies are inherent in an API Waterfall, minimize direct, tight coupling. Services should ideally communicate through well-defined API contracts rather than relying on internal implementation details of other services. This allows individual services to evolve and scale independently.

- Idempotency: Where an API call modifies state, design it to be idempotent. This means that making the same request multiple times has the same effect as making it once. This is crucial for resilience in an API Waterfall because network issues or transient failures might lead to retries, and you don't want duplicate operations (e.g., charging a customer twice).

- Atomic Operations (where appropriate): For critical business transactions, ensure that a sequence of operations is atomic, meaning either all operations succeed, or none do. This might involve implementing distributed transactions (though often complex) or relying on compensating transactions (undoing partial successes if a later step fails).

- Version Control: Clearly version your APIs. Changes to an API in the middle of a waterfall can break downstream consumers if not managed carefully. Versioning allows for backward compatibility and graceful deprecation, ensuring that existing API Waterfalls continue to function while new versions are introduced. The API Gateway plays a crucial role here, allowing different versions of an API to be exposed and routed appropriately.

- Clear Error Handling: Define clear, consistent error responses for each API. When a service in the waterfall fails, it should communicate a meaningful error code and message. This allows upstream services or the API Gateway to understand the failure and respond appropriately, perhaps with a retry, a fallback, or a specific error message to the client.
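One common way to make a state-changing call idempotent is to have the caller send a unique key with each logical operation. The sketch below is a hypothetical in-memory payment service illustrating the idea; a real service would persist the keys and guard against concurrent duplicates.

```python
class PaymentService:
    """Toy service that charges at most once per idempotency key."""

    def __init__(self):
        self.processed = {}  # idempotency key -> stored result
        self.charges = []    # every charge actually performed

    def charge(self, idempotency_key: str, customer: str, amount_cents: int) -> dict:
        # A retry with a known key returns the original result without charging again.
        if idempotency_key in self.processed:
            return self.processed[idempotency_key]
        self.charges.append((customer, amount_cents))
        result = {"status": "charged", "charge_number": len(self.charges)}
        self.processed[idempotency_key] = result
        return result
```

With this in place, a gateway retry after a lost response is harmless: the second request carries the same key and simply receives the stored result.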

Importance of Documentation and Versioning

In a system built on API Waterfalls, comprehensive and up-to-date documentation is not merely a good practice; it's a necessity. Without clear documentation:

- Understanding Dependencies: Developers struggle to understand which APIs depend on which, making it difficult to debug, modify, or extend the system.

- Onboarding New Developers: New team members face a steep learning curve trying to decipher undocumented API interactions.

- Maintaining Consistency: Without documented contracts, APIs can diverge, leading to breaking changes and integration headaches.

Documentation should detail api endpoints, request/response formats, authentication requirements, error codes, and crucially, any known dependencies or sequence requirements. Tools like OpenAPI (Swagger) can automate much of this, providing machine-readable api specifications that can be used to generate documentation, client SDKs, and even test cases.

Coupled with documentation is robust api versioning. As services evolve, their api contracts might need to change. Without proper versioning, changes to one api could silently break multiple API Waterfalls that rely on it. Strategies include URI versioning (/v1/users), header versioning (Accept: application/vnd.myapi.v2+json), or query parameter versioning. The API Gateway is instrumental in managing multiple api versions, routing requests to the correct backend service based on the requested version, allowing for smooth transitions and reducing the risk of breaking existing integrations.
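As a rough sketch of how a gateway might pick a version from the three strategies just described, the function below checks the URI, then the `Accept` header, then a query parameter. The precedence order, the `version` parameter name, the default of version 1, and the backend URLs are all assumptions made for this example, not the behavior of any particular gateway product.

```python
import re
from urllib.parse import parse_qs, urlsplit

DEFAULT_VERSION = "1"  # assumption: unversioned requests are routed to v1

# Hypothetical backend addresses, keyed by api version.
BACKENDS = {
    "1": "http://users-v1.internal",
    "2": "http://users-v2.internal",
}


def resolve_version(url: str, headers: dict) -> str:
    """Return the requested api version as a string of digits."""
    parts = urlsplit(url)
    # 1. URI versioning, e.g. /v2/users
    match = re.match(r"^/v(\d+)(/|$)", parts.path)
    if match:
        return match.group(1)
    # 2. Header versioning, e.g. Accept: application/vnd.myapi.v2+json
    match = re.search(r"application/vnd\.myapi\.v(\d+)\+json", headers.get("Accept", ""))
    if match:
        return match.group(1)
    # 3. Query parameter versioning, e.g. /users?version=2
    values = parse_qs(parts.query).get("version")
    if values and values[0].isdigit():
        return values[0]
    return DEFAULT_VERSION


def route(url: str, headers: dict) -> str:
    """Map a request to the backend that serves its version."""
    version = resolve_version(url, headers)
    if version not in BACKENDS:
        raise LookupError(f"unsupported api version: {version}")
    return BACKENDS[version]
```

Keeping the resolution logic in one place means older clients keep reaching the v1 backend while newer clients opt in to v2 through whichever mechanism they prefer.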

Testing Strategies for Complex API Integrations

Testing API Waterfalls goes beyond unit testing individual api endpoints. It requires a multi-layered approach:

- Unit Tests: Ensure each individual api endpoint works correctly in isolation.

- Integration Tests: Verify the communication and data flow between two or more directly connected apis. For an API Waterfall, this means testing the entire chain of dependency. This often involves mocking external services or setting up specific test environments.

- End-to-End Tests: Simulate real user scenarios by interacting with the API Gateway and verifying that the entire API Waterfall completes successfully and produces the expected outcome. These tests often involve a full deployment of the application and its dependencies.

- Performance Tests: Subject API Waterfalls to realistic load to identify bottlenecks, measure latency, and evaluate the system's scalability under stress. This helps confirm that optimizations are effective and that the API Gateway and backend services can handle anticipated traffic.

- Chaos Engineering: Deliberately inject failures into parts of the API Waterfall (e.g., latency, service unavailability) to test the system's resilience patterns (circuit breakers, retries) and ensure graceful degradation rather than catastrophic failure.
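The integration-test and fault-injection ideas above can be sketched with Python's standard `unittest.mock`. The `place_order` function and the `reserve`, `release`, and `charge` methods are hypothetical stand-ins for a two-step waterfall; the second test injects a timeout into the payment step and checks that the earlier reservation is undone.

```python
from unittest.mock import Mock

ORDER = {"sku": "sku-7", "qty": 1, "customer_id": "cust-1", "amount_cents": 1999}


def place_order(inventory, payment, order: dict) -> dict:
    """Hypothetical two-step waterfall: reserve stock, then charge."""
    reservation = inventory.reserve(order["sku"], order["qty"])
    try:
        receipt = payment.charge(order["customer_id"], order["amount_cents"])
    except TimeoutError:
        # Compensate the partial success so the chain fails cleanly.
        inventory.release(reservation["id"])
        return {"status": "failed", "reason": "payment_timeout"}
    return {
        "status": "confirmed",
        "reservation": reservation["id"],
        "receipt": receipt["id"],
    }


def test_happy_path():
    inventory, payment = Mock(), Mock()
    inventory.reserve.return_value = {"id": "res-1"}
    payment.charge.return_value = {"id": "rcpt-1"}

    result = place_order(inventory, payment, ORDER)

    assert result == {"status": "confirmed", "reservation": "res-1", "receipt": "rcpt-1"}
    inventory.release.assert_not_called()


def test_payment_timeout_releases_stock():
    inventory, payment = Mock(), Mock()
    inventory.reserve.return_value = {"id": "res-1"}
    payment.charge.side_effect = TimeoutError("injected fault")

    result = place_order(inventory, payment, ORDER)

    assert result == {"status": "failed", "reason": "payment_timeout"}
    inventory.release.assert_called_once_with("res-1")
```

Both functions follow the `test_` naming convention, so a runner such as pytest would collect them; they can also be called directly.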

The Role of API Management Platforms in the Entire Lifecycle

Managing API Waterfalls effectively, from initial design to ongoing operations, is a complex undertaking that benefits immensely from dedicated API Management Platforms. These platforms, which often include an API Gateway as a core component, provide a comprehensive suite of tools and functionalities to govern the entire api lifecycle.

Features provided by platforms like APIPark are instrumental here. For instance, APIPark assists with managing the entire lifecycle of apis, including design, publication, invocation, and decommission. It helps regulate api management processes, manage traffic forwarding, load balancing, and versioning of published apis. This end-to-end perspective ensures that API Waterfalls are not just built but are also maintained, secured, and optimized throughout their operational lifespan. The ability to centralize the display of all api services for sharing within teams, or to provide independent apis and access permissions for each tenant, further streamlines collaboration and security in an environment increasingly reliant on complex api interactions. These platforms provide the necessary governance, visibility, and control to transform chaotic API Waterfalls into predictable, high-performing assets for any organization.

The Evolution and Future of API Waterfalls

The concept of chaining api calls, or the API Waterfall, is not static; it continues to evolve with advancements in software architecture and changing technological landscapes. New paradigms and tools are emerging that offer different approaches to managing and optimizing these complex inter-service communications, especially as AI services become more integrated.

Serverless Functions and Event-Driven Architectures

One significant evolution comes with the rise of serverless computing and event-driven architectures. Instead of a rigid, synchronous API Waterfall where one api call directly triggers the next, event-driven systems often rely on asynchronous event streams. An initial api call might trigger an event (e.g., an "Order Placed" event), which is then published to a message queue or event bus. Multiple serverless functions (e.g., a "Process Payment" function, a "Generate Shipping Label" function, a "Send Confirmation Email" function) can then subscribe to this event and react independently.

While this doesn't eliminate dependencies entirely, it shifts from direct synchronous api calls to a more decoupled, asynchronous flow. Each step (function) is still effectively an api call (or a microservice reacting to an event), but the orchestration becomes less "waterfall-like" in its direct sequential HTTP request-response nature. The benefits include greater resilience (if one function fails, others can continue), improved scalability (functions can scale independently), and reduced latency for the initial response to the client. The API Gateway still plays a role here, often being the first point of contact that triggers the initial event.
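The decoupling described above can be shown with a small in-process sketch. The `EventBus` class and the three handler functions are hypothetical, and delivery here is a synchronous loop for simplicity; a real deployment would use a message broker or a managed event service with asynchronous delivery.

```python
from collections import defaultdict


class EventBus:
    """Toy publish/subscribe bus (illustrative, in-process, synchronous)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._subscribers[event_type].append(handler)

    def publish(self, event_type, payload):
        """Deliver to every subscriber; one failing handler does not block the rest."""
        failures = []
        for handler in self._subscribers[event_type]:
            try:
                handler(payload)
            except Exception as exc:
                failures.append((handler.__name__, exc))
        return failures


log = []


def process_payment(order):
    log.append(f"payment:{order['id']}")


def generate_shipping_label(order):
    # Simulated outage in one subscriber.
    raise RuntimeError("label service unavailable")


def send_confirmation_email(order):
    log.append(f"email:{order['id']}")


bus = EventBus()
for handler in (process_payment, generate_shipping_label, send_confirmation_email):
    bus.subscribe("order_placed", handler)

failures = bus.publish("order_placed", {"id": 42})
```

The shipping-label failure is collected in `failures` while the payment and email handlers still run, which is the resilience property the event-driven approach is meant to provide.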

GraphQL as an Alternative to Deep Waterfalls

For client-facing API Waterfalls primarily focused on data fetching, GraphQL has emerged as a powerful alternative. Traditional REST apis often lead to "over-fetching" (receiving more data than needed) or "under-fetching" (requiring multiple api calls to get all necessary data, thus creating a client-side waterfall). GraphQL allows clients to precisely specify the data they need from a single endpoint.

A GraphQL gateway or server can then intelligently resolve this single client query by making multiple internal api calls to various backend microservices and databases (effectively, an internal API Waterfall that is entirely abstracted from the client). This significantly reduces the client-side complexity and the number of api calls the client has to manage, making the client's interaction with the "waterfall" vastly simpler. While the internal gateway still executes an API Waterfall, it does so more efficiently and transparently for the consumer.
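The idea of resolving only what the client asks for can be sketched without a GraphQL library. This is not GraphQL itself: there is no query parsing or schema, and the `fetch_*` functions, their return values, and the `RESOLVERS` table are hypothetical stand-ins for internal backend calls. It only illustrates that one client request fans out to just the backends its requested fields need.

```python
calls = []  # records which backends were contacted, for illustration


def fetch_user(user_id):
    calls.append("user")
    return {"id": user_id, "name": "Ada"}


def fetch_orders(user_id):
    calls.append("orders")
    return [{"id": "o-1", "total_cents": 1999}]


def fetch_recommendations(user_id):
    calls.append("recommendations")
    return ["sku-7", "sku-9"]


# One resolver per top-level field the client may request.
RESOLVERS = {
    "user": fetch_user,
    "orders": fetch_orders,
    "recommendations": fetch_recommendations,
}


def resolve(user_id, requested_fields):
    """Run the internal waterfall for the requested fields only."""
    return {name: RESOLVERS[name](user_id) for name in requested_fields}


response = resolve("u-1", ["user", "orders"])
```

Because `recommendations` was not requested, that backend is never called, and the client still made a single request.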

The Increasing Complexity with AI Services

The integration of Artificial Intelligence capabilities into applications introduces a new layer of complexity to API Waterfalls. AI models, whether for sentiment analysis, image recognition, natural language processing, or recommendation engines, are often exposed as apis themselves. An API Waterfall might now include:

- An initial api call to a data processing service.

- Followed by an api call to an AI model for inference.

- Then, another api call to a business logic service that interprets the AI's output.

- Finally, an api call to store results or notify another system.
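The four steps listed above can be sketched as a simple chain that also records how long each step takes. Every function here is a local stub with a hypothetical name; in particular, `infer_sentiment` stands in for a remote AI model call and uses a trivial keyword check rather than a real model.

```python
import time


def preprocess(text):
    """Step 1: data processing."""
    return text.strip().lower()


def infer_sentiment(text):
    """Step 2: stand-in for a remote AI inference call."""
    return {"label": "positive" if "great" in text else "negative"}


def apply_business_rules(prediction):
    """Step 3: interpret the model output."""
    return {**prediction, "escalate": prediction["label"] == "negative"}


def run_waterfall(raw_text, sink):
    """Run the steps in order, timing each one; step 4 stores the result in sink."""

    def store(record):
        sink.append(record)
        return record

    steps = [
        ("preprocess", preprocess),
        ("inference", infer_sentiment),
        ("rules", apply_business_rules),
        ("store", store),
    ]
    timings = {}
    value = raw_text
    for name, step in steps:
        start = time.perf_counter()
        value = step(value)
        timings[name] = time.perf_counter() - start
    return value, timings


sink = []
result, timings = run_waterfall("  This product is GREAT  ", sink)
```

Per-step timings like these make it easier to see when the inference call dominates the total latency of the chain.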

Managing the unique characteristics of AI apis – their potentially high latency, large data payloads, and specific input/output formats – within an existing API Waterfall poses new challenges. This is where specialized API Gateways become indispensable. An AI gateway is specifically designed to handle the nuances of AI model integration.

Platforms like APIPark are at the forefront of this evolution. As an open-source AI gateway and API management platform, APIPark directly addresses the complexities introduced by AI services. Its features like "Quick Integration of 100+ AI Models" and "Unified API Format for AI Invocation" are crucial. Instead of each step in the waterfall needing to understand the unique api of every AI model, APIPark standardizes the request data format. This ensures that changes in AI models or prompts do not affect the application or microservices, thereby simplifying AI usage and maintenance costs within an API Waterfall. Furthermore, "Prompt Encapsulation into REST API" allows users to quickly combine AI models with custom prompts to create new apis (e.g., a sentiment analysis api or a translation api). This simplifies the exposure and consumption of AI capabilities, making them seamless participants in existing or new API Waterfalls.
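The general idea of a unified request format can be sketched as a set of adapters. This is not APIPark's actual API or configuration: the unified shape, the provider names `provider_a` and `provider_b`, and both payload formats are invented for illustration. The point is only that callers build one request shape and the gateway layer translates it per model.

```python
def to_provider_a(request):
    """Hypothetical provider that expects a chat-style message list."""
    return {
        "model": request["model"],
        "messages": [{"role": "user", "content": request["prompt"]}],
    }


def to_provider_b(request):
    """Hypothetical provider that expects a flat text input."""
    return {"model_id": request["model"], "input_text": request["prompt"]}


ADAPTERS = {"provider_a": to_provider_a, "provider_b": to_provider_b}


def translate(provider, unified_request):
    """Convert the unified request into the chosen provider's payload."""
    if provider not in ADAPTERS:
        raise LookupError(f"no adapter registered for {provider}")
    return ADAPTERS[provider](unified_request)


unified = {"model": "sentiment-small", "prompt": "I love this product"}
payload_a = translate("provider_a", unified)
payload_b = translate("provider_b", unified)
```

Swapping the model behind a waterfall step then means registering a new adapter rather than changing every caller.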

The future of API Waterfalls will likely see a continued emphasis on smart orchestration, abstraction, and specialized gateway capabilities. Whether through asynchronous events, client-driven data fetching, or AI-specific optimizations, the goal remains the same: to manage the inherent complexities of interdependent api calls to deliver robust, high-performing, and easily maintainable applications. The API Gateway will remain a central, evolving component in this landscape, adapting to new challenges and continuing to provide the critical control plane for the intricate flow of data across modern digital systems.

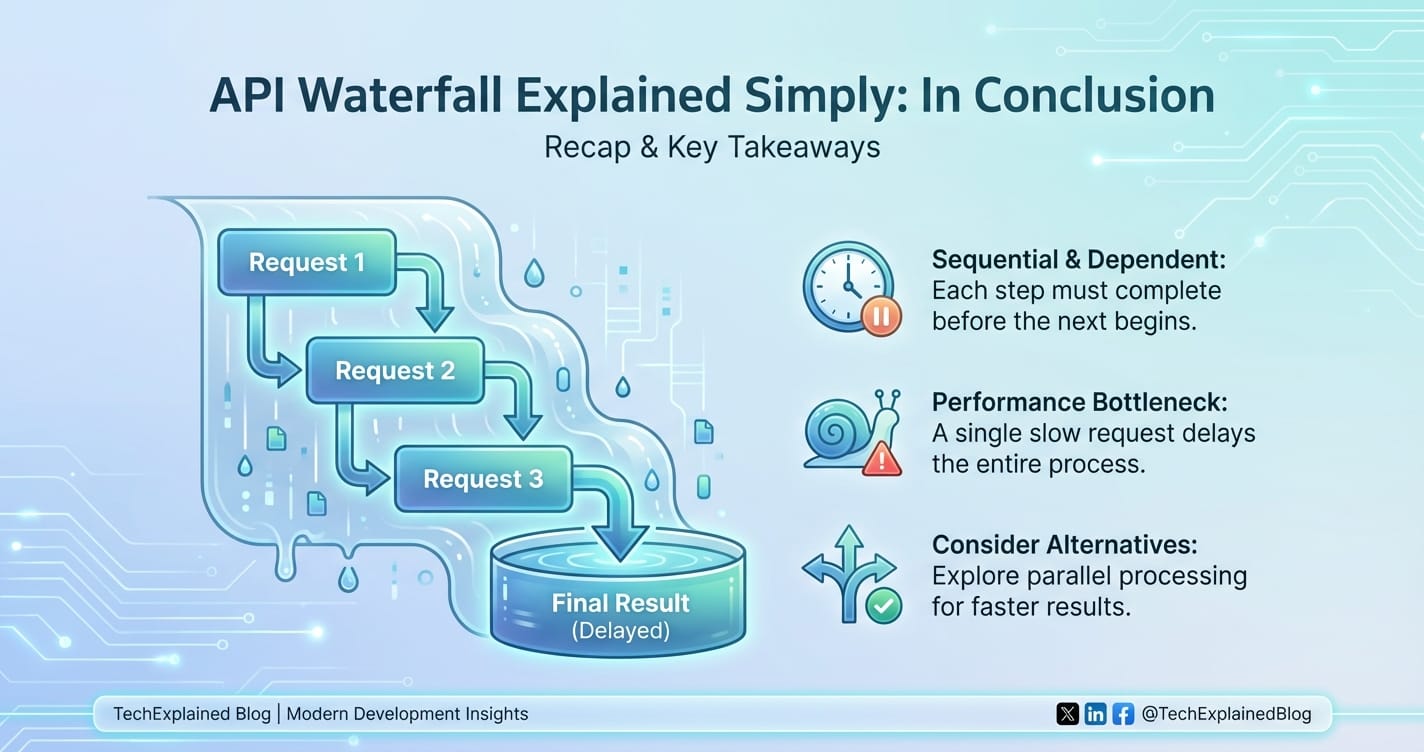

Conclusion

The "API Waterfall," while not a formal specification, is a vivid and highly relevant metaphor for the intricate, interdependent chain of api calls that forms the backbone of almost every modern digital application. From a simple e-commerce transaction to the complex orchestration of AI-driven services, data and requests rarely travel through a single api in isolation. Instead, they cascade from one service to the next, each step building upon the output of its predecessor, creating a powerful yet challenging pattern of interaction. This inherent complexity introduces significant hurdles related to performance, error handling, security, and overall manageability, demanding sophisticated architectural solutions.

At the heart of effectively taming the API Waterfall lies the API Gateway. This architectural pattern serves as the indispensable control point, acting as a single, centralized entry point for all client requests. By abstracting the complexity of numerous backend apis and orchestrating the internal waterfall of calls, an API Gateway simplifies client interactions, enhances security, optimizes performance through caching and aggregation, and provides crucial observability into the entire system. It transforms a potentially chaotic network of interdependent services into a streamlined, resilient, and manageable ecosystem.

From basic routing and authentication to advanced features like request aggregation, protocol translation, robust resilience patterns (such as circuit breakers and retries), and comprehensive monitoring, the API Gateway is instrumental in ensuring that API Waterfalls flow smoothly and reliably. As the landscape continues to evolve with serverless architectures, GraphQL, and the ever-growing integration of AI services, specialized gateway solutions become even more critical. Platforms like APIPark, an open-source AI gateway and API management platform, exemplify this evolution by providing tailored features for integrating and managing complex API Waterfalls that include diverse AI models, streamlining their invocation and lifecycle management.

Ultimately, understanding the API Waterfall is paramount for architects, developers, and operations teams aiming to build robust, scalable, and high-performing applications. By strategically employing an API Gateway and adhering to sound design principles for apis, organizations can not only mitigate the challenges posed by cascading dependencies but also unlock the full potential of their distributed systems, fostering innovation and delivering exceptional user experiences in an increasingly interconnected world. The journey through the API Waterfall is complex, but with the right tools and strategies, it is a journey that leads to powerful and resilient digital solutions.

APIPark is a high-performance AI gateway that allows you to securely access the most comprehensive LLM APIs globally on the APIPark platform, including OpenAI, Anthropic, Mistral, Llama2, Google Gemini, and more.

Frequently Asked Questions (FAQ)

- What exactly does "API Waterfall" mean, and is it a formal technical term? The "API Waterfall" is a metaphorical term, not a formal technical specification. It describes a common pattern in software architecture where a single user request or application process triggers a sequence of interdependent API calls. The output of one API call often serves as the input for the next, creating a cascading flow of data and operations. It illustrates the logical chain of dependencies rather than a specific technology.

- Why do API Waterfalls create challenges for application development? API Waterfalls introduce several challenges, including increased latency due to multiple network hops and processing times, higher complexity for client applications that might need to orchestrate these calls, and difficulties in error handling as a failure in one API can cascade and affect the entire chain. Additionally, monitoring and troubleshooting performance issues or bugs within a deep waterfall can be significantly more challenging.

- How does an API Gateway help manage API Waterfalls? An API Gateway acts as a centralized entry point for all client requests, sitting in front of multiple backend services. It simplifies API Waterfalls by allowing clients to make a single call to the gateway, which then internally orchestrates the necessary backend API calls. The gateway handles crucial tasks like request routing, load balancing, authentication, rate limiting, data transformation, caching, and implementing resilience patterns, effectively abstracting the waterfall's complexity from the client and enhancing overall system reliability and performance.

- Can API Waterfalls be avoided, or are they a necessary part of modern architectures? While it's difficult to completely avoid chained API calls in complex, distributed systems (especially with microservices architectures), their direct impact on client applications can be mitigated. Strategies like using an API Gateway for request aggregation, adopting event-driven architectures, or utilizing technologies like GraphQL can abstract the internal waterfall from the client, making interactions simpler and more efficient. However, the underlying logical dependencies (the waterfall itself) are often an inherent part of achieving complex business logic through modular services.

- What role do AI-specific API Gateways play in the context of API Waterfalls? As AI services become integral to applications, API Waterfalls can include calls to various AI models (e.g., for sentiment analysis, recommendations). AI-specific API Gateways, like APIPark, are designed to streamline the integration and management of these AI models within the waterfall. They offer features like quick integration of diverse AI models, standardizing API formats for AI invocation, and encapsulating prompts into REST APIs. This simplifies the development and maintenance of AI-driven applications, making AI capabilities easier to consume and manage within complex, cascading API structures.

🚀You can securely and efficiently call the OpenAI API on APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in 5 minutes.

APIPark is developed based on Golang, offering strong product performance and low development and maintenance costs. You can deploy APIPark with a single command line.

curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh

In my experience, you can see the successful deployment interface within 5 to 10 minutes. Then, you can log in to APIPark using your account.

Step 2: Call the OpenAI API.