What is an AI Gateway? Definition, Benefits & How It Works

The modern enterprise stands at the precipice of a technological revolution, a transformation driven by the relentless march of Artificial Intelligence. From automating mundane tasks to unearthing profound insights from vast datasets, AI is no longer a futuristic concept but an indispensable tool shaping the very fabric of business operations. At the vanguard of this revolution are Large Language Models (LLMs), a sophisticated class of AI that can understand, generate, and process human language with unprecedented fluency and depth. These powerful models, along with a myriad of other specialized AI services, promise to redefine everything from customer service and content creation to data analysis and strategic decision-making.

However, the path to integrating these potent AI capabilities into existing enterprise architectures is fraught with challenges. Organizations often grapple with a fragmented landscape of diverse AI models, each with its unique API, authentication mechanism, and operational requirements. Ensuring security, managing costs, maintaining performance, and facilitating seamless integration across complex systems can quickly become an overwhelming endeavor. This burgeoning complexity threatens to stifle the very innovation AI promises to deliver. It is precisely in this intricate environment that the AI Gateway emerges not merely as a convenience, but as an absolute necessity.

An AI Gateway acts as a sophisticated intermediary, a control plane specifically engineered to abstract away the complexities of interacting with disparate AI models and services. It provides a unified, secure, and manageable interface, enabling businesses to harness the full power of AI without getting entangled in the underlying operational intricacies. This article explores the AI Gateway in depth: its fundamental definition, the benefits it brings to organizations, and the mechanisms through which it operates. By the end, readers should understand why the AI Gateway is not just another piece of infrastructure, but a pivotal enabler for successful, scalable, and secure AI adoption in the enterprise.

The Evolving Landscape of AI and LLMs: A Catalyst for Specialized Management

The journey of Artificial Intelligence within the enterprise has been one of continuous evolution, with long stretches of incremental progress punctuated by periods of explosive growth. For decades, AI was largely the domain of specialized researchers and data scientists, its applications often limited to specific, narrow tasks such as predictive analytics, recommendation engines, or robotic process automation (RPA). Early adopters wrestled with bespoke models, complex data pipelines, and a significant demand for highly specialized talent to develop, deploy, and maintain these systems. The integration of even a single AI model into a production environment was often a monumental undertaking, fraught with challenges related to data formatting, model versioning, and endpoint management.

The past few years, however, have ushered in a new epoch, primarily driven by breakthroughs in deep learning and the advent of Large Language Models (LLMs). These foundational models, trained on colossal datasets of text and code, possess astonishing capabilities across a vast spectrum of tasks, including natural language understanding, generation, summarization, translation, and even code synthesis. Models like OpenAI's GPT series, Google's Gemini (formerly Bard), Anthropic's Claude, and a plethora of open-source alternatives have democratized access to advanced AI, transforming it from an exclusive tool for experts into a versatile resource for developers and businesses across all sectors.

The sheer power and accessibility of LLMs have ignited an unprecedented wave of enterprise AI adoption. Companies are now eager to infuse AI into every conceivable touchpoint, from enhancing customer support chatbots with human-like conversational abilities to automating the generation of marketing content, personalizing user experiences, and accelerating research and development. This rapid proliferation, while immensely promising, has introduced a new layer of operational complexity that traditional IT infrastructure was simply not designed to handle.

Enterprises today frequently find themselves navigating a multi-vendor AI environment. They might use one LLM for creative content generation, another for code assistance, a specialized model for medical diagnostics, and a proprietary internal model for customer sentiment analysis. Each of these models resides on a different platform, offers a distinct API structure, requires specific authentication credentials, and comes with its own set of pricing models and usage policies. Furthermore, the pace of innovation in AI is relentless; new models are released, existing ones are updated, and performance benchmarks shift constantly.

This fragmented and dynamic landscape presents several critical challenges:

- Complexity of Integration: Integrating multiple diverse AI models into existing applications demands significant development effort. Each integration point requires custom coding to handle different API schemas, data formats, and authentication protocols.

- Security Vulnerabilities: Direct access to AI model APIs can expose sensitive data, lead to unauthorized usage, or create entry points for malicious attacks. Managing security for dozens of individual endpoints is a daunting task.

- Cost Management: AI models, especially LLMs, can be expensive to run. Without centralized visibility and control over usage, costs can spiral rapidly and unpredictably, making budget forecasting a nightmare.

- Performance & Reliability: Ensuring consistent performance across different AI providers and models, handling outages, and implementing efficient caching strategies become critical for production-grade applications.

- Prompt Management & Governance: For LLMs, the quality and consistency of prompts are paramount. Managing different versions of prompts, ensuring adherence to brand voice, and preventing prompt injection attacks require dedicated solutions.

- Vendor Lock-in: Directly integrating with a single AI provider's API creates a strong dependency, making it difficult and costly to switch providers or experiment with alternative models if performance or cost considerations change.

- Observability & Troubleshooting: When an AI-powered application malfunctions, diagnosing whether the issue lies with the application, the AI model, or the network can be incredibly challenging without centralized logging and monitoring.

Traditional API management solutions, while robust for RESTful services, often fall short when confronted with these AI-specific complexities. They might handle basic authentication and routing, but they typically lack the specialized features required for prompt engineering, AI-specific cost tracking, intelligent model routing based on real-time performance, or unified management of diverse AI model APIs. This is where the concept of a specialized AI Gateway becomes not just advantageous, but absolutely essential for any organization serious about scaling and securing its AI initiatives.

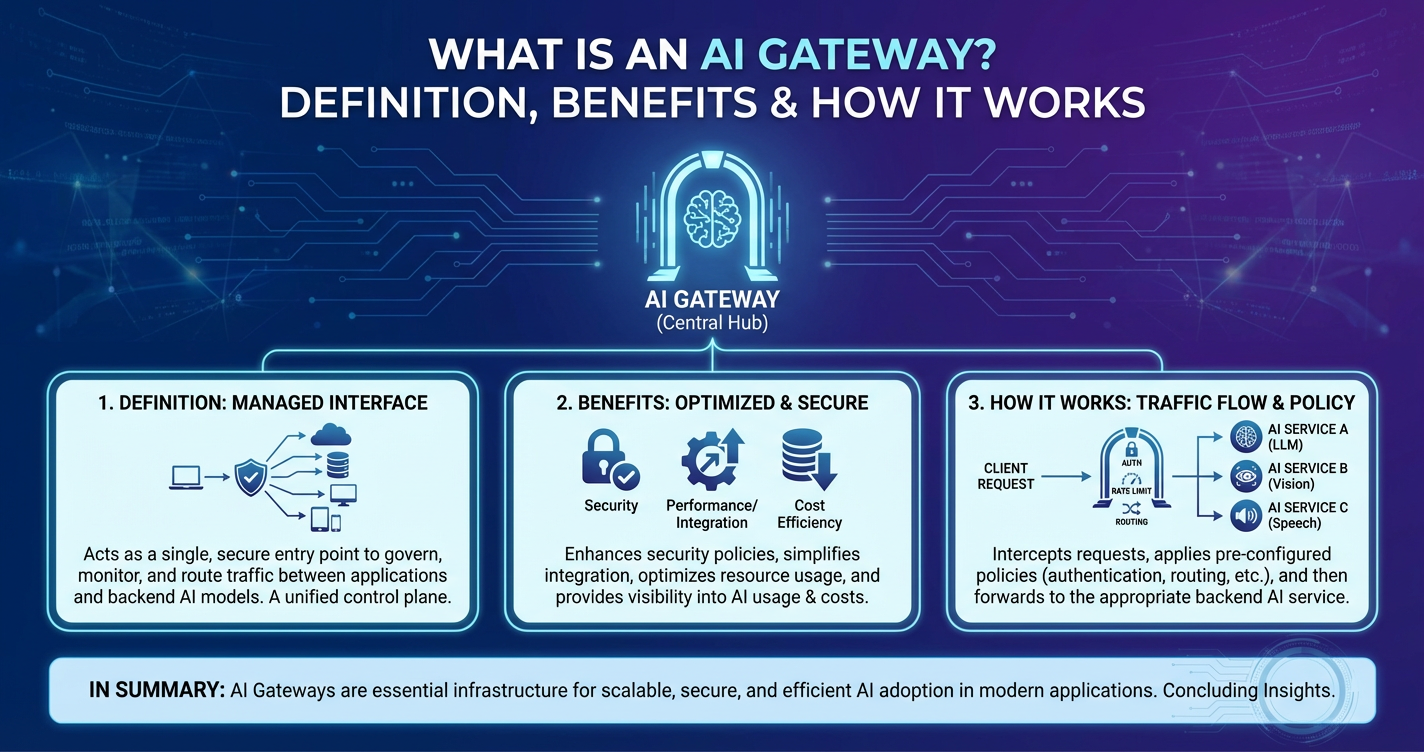

What is an AI Gateway? A Comprehensive Definition

At its core, an AI Gateway is a specialized type of API Gateway meticulously engineered to serve as the central control point for interacting with Artificial Intelligence and Machine Learning models, particularly Large Language Models (LLMs). While it shares foundational principles with a traditional API gateway, its design and feature set are profoundly tailored to address the unique complexities and demands of AI workloads. An AI Gateway acts as an intelligent intermediary, proxying requests from client applications to various AI service providers or self-hosted models, and then routing the responses back to the original callers.

Imagine an orchestra conductor, not just directing musicians, but also ensuring they all play the same sheet music (despite varying instrument types), managing their individual performance characteristics, and ensuring the entire ensemble is harmonious and efficient. This analogy captures the essence of an AI Gateway. It doesn't just pass messages; it intelligently manages, secures, optimizes, and orchestrates interactions with a diverse and often distributed ecosystem of AI models.

The primary function of an AI Gateway is to abstract away the inherent complexities of integrating with multiple, disparate AI services. Instead of applications needing to understand the unique API specifications, authentication methods, and rate limits of each individual AI model (e.g., one LLM from OpenAI, another from Google, a computer vision model from AWS, and an internal fraud detection model), they interact solely with the AI Gateway. This gateway then handles all the underlying complexities on behalf of the client application.

More specifically, an AI Gateway performs several critical roles:

- Unified Access Layer: It provides a single, consistent entry point (a unified API endpoint) for all AI interactions, regardless of the underlying model or provider. This simplifies development, as applications only need to be aware of the gateway's interface, not the specifics of each AI model.

- Intelligent Routing and Orchestration: Beyond simple request forwarding, an AI Gateway can intelligently route incoming requests to the most appropriate AI model based on predefined rules. These rules might consider factors such as the model's cost, latency, current load, capabilities, or specific features required by the request. For instance, a complex query might be routed to a powerful, expensive LLM, while a simple translation request goes to a more cost-effective, specialized model.

- Security and Access Control: It enforces robust authentication and authorization policies, ensuring that only authorized users or applications can access specific AI models or features. This includes API key management, token validation, and granular access controls.

- Performance Optimization: Through caching, request aggregation, and intelligent load balancing, an AI Gateway can significantly improve the response times and overall efficiency of AI-powered applications. Caching repetitive requests can drastically reduce latency and operational costs.

- Cost Management and Observability: It provides centralized logging, monitoring, and auditing capabilities for all AI interactions. This enables organizations to track usage patterns, identify potential cost overruns, diagnose issues, and gain deep insights into how their AI resources are being consumed.

- Request and Response Transformation: The gateway can modify incoming requests (e.g., adding headers, transforming data formats) before forwarding them to an AI model, and similarly transform responses before sending them back to the client, ensuring data consistency across the application landscape.

When we talk about an AI Gateway in the context of the current generative AI boom, the term LLM Gateway often comes into play. An LLM Gateway is a specific manifestation of an AI Gateway, hyper-focused on the unique challenges presented by Large Language Models. While it encompasses all the general features of an AI Gateway, an LLM Gateway places particular emphasis on:

- Prompt Management and Versioning: Allowing for the creation, storage, versioning, and dynamic injection of prompts, ensuring consistency and enabling A/B testing of different prompt strategies.

- Cost-Aware Routing for LLMs: Intelligent routing decisions based on the specific pricing tiers of different LLMs (e.g., routing to a cheaper model for simple tasks, a more expensive one for complex, critical queries).

- Context Window Management: Handling the limitations of context windows in LLMs, potentially by summarizing previous turns in a conversation or intelligently chunking data.

- Guardrails and Content Moderation: Implementing an additional layer of safety to filter out inappropriate content in both inputs and outputs from LLMs, ensuring responsible AI deployment.

- Fallback Strategies for LLMs: Automatically switching to an alternative LLM provider or a local model if the primary choice is unavailable or experiencing performance degradation.
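As a rough illustration of context window management, the Python sketch below trims a conversation to fit a token budget by keeping the system message plus the most recent turns. It is a minimal sketch under stated assumptions: `fit_to_context` and `estimate_tokens` are hypothetical helpers, the four-characters-per-token estimate is only a heuristic, and it shows plain truncation of older turns rather than the summarization approach also mentioned above.

```python
from typing import Dict, List

Message = Dict[str, str]  # {"role": ..., "content": ...}


def estimate_tokens(text: str) -> int:
    # Heuristic only (roughly 4 characters per token for English text);
    # a real gateway would use the target model's own tokenizer.
    return max(1, len(text) // 4)


def fit_to_context(messages: List[Message], budget: int) -> List[Message]:
    """Keep system messages plus the newest turns that fit within `budget` tokens."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]
    used = sum(estimate_tokens(m["content"]) for m in system)
    kept: List[Message] = []
    for m in reversed(turns):  # walk from newest to oldest
        cost = estimate_tokens(m["content"])
        if used + cost > budget:
            break  # everything older than this turn is dropped
        kept.append(m)
        used += cost
    return system + list(reversed(kept))  # restore chronological order
```

Dropping the oldest turns first preserves the most recent context, which is usually what the next reply depends on; a summarizing variant would replace the dropped turns with a short model-generated recap instead of discarding them.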

In essence, an AI Gateway (and its specialized variant, the LLM Gateway) transitions AI integration from a bespoke, complex, and high-risk endeavor into a standardized, manageable, and secure operational capability. It elevates the discussion from individual model interactions to a strategic enterprise-wide AI management paradigm, enabling organizations to leverage AI at scale without being bogged down by its inherent technical intricacies.

Key Features and Components of an AI Gateway

The true power of an AI Gateway lies in its comprehensive suite of features, each designed to address a specific challenge in the lifecycle of AI models within an enterprise. These features transform a simple proxy into an intelligent orchestration layer, crucial for robust, scalable, and secure AI adoption. Understanding these components is key to appreciating the profound impact an AI Gateway has on an organization's AI strategy.

1. Unified API Endpoint and Integration Layer

One of the most immediate benefits of an AI Gateway is its ability to present a single, standardized API endpoint to client applications. Instead of integrating with a dozen different APIs from various AI providers (e.g., OpenAI, Google Cloud AI, Hugging Face, internal custom models), developers interact with just one. The gateway normalizes the diverse request and response formats of underlying models into a consistent schema, which drastically reduces development time, simplifies codebases, and minimizes integration headaches. For instance, a developer can send a text generation request to the gateway, and the gateway routes it to the best available LLM, handling any necessary format transformations behind the scenes. Products like APIPark are specifically designed to provide a unified API format for AI invocation, ensuring that changes in AI models or prompts do not affect the application or microservices, thereby simplifying AI usage and reducing maintenance costs. APIPark also offers the capability to integrate a variety of AI models with a unified management system for authentication and cost tracking, streamlining the adoption of diverse AI technologies.
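To make the normalization idea concrete, here is a minimal Python sketch of a provider-neutral request and two adapters that translate it into upstream payloads. Everything here is hypothetical: `ChatRequest`, `ROUTES`, the aliases, and the model names are illustrative, and the two payload shapes are simplified approximations of how chat APIs commonly differ (for example, where the system prompt goes), not the exact schema of any vendor.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class ChatRequest:
    """Hypothetical provider-neutral request accepted by the gateway."""
    model_alias: str                  # logical name chosen by the client
    user_message: str
    system_prompt: Optional[str] = None
    max_tokens: int = 256


def to_provider_a(req: ChatRequest, model: str) -> dict:
    # Hypothetical provider A: the system prompt travels as the first message.
    messages: List[Dict[str, str]] = []
    if req.system_prompt:
        messages.append({"role": "system", "content": req.system_prompt})
    messages.append({"role": "user", "content": req.user_message})
    return {"model": model, "messages": messages, "max_tokens": req.max_tokens}


def to_provider_b(req: ChatRequest, model: str) -> dict:
    # Hypothetical provider B: the system prompt is a separate top-level field.
    payload = {
        "model": model,
        "max_tokens": req.max_tokens,
        "messages": [{"role": "user", "content": req.user_message}],
    }
    if req.system_prompt:
        payload["system"] = req.system_prompt
    return payload


Adapter = Callable[[ChatRequest, str], dict]

# Logical alias -> (adapter, concrete upstream model). All names are placeholders.
ROUTES: Dict[str, Tuple[Adapter, str]] = {
    "general-chat": (to_provider_a, "model-a-large"),
    "long-context": (to_provider_b, "model-b-long"),
}


def build_upstream_payload(req: ChatRequest) -> dict:
    """Translate the neutral request into the selected provider's format."""
    adapter, model = ROUTES[req.model_alias]
    return adapter(req, model)
```

Because clients only ever send a `ChatRequest`, repointing `"general-chat"` at a different provider becomes a one-line change to `ROUTES` rather than a change in every consuming application.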

2. Intelligent Routing and Load Balancing

Beyond simple forwarding, an AI Gateway implements sophisticated routing logic. It can dynamically direct incoming requests to the most appropriate AI model or provider based on a multitude of factors:

- Cost-Effectiveness: Route simple queries to cheaper, smaller models, and complex queries to more powerful, albeit expensive, ones.

- Latency/Performance: Direct traffic to the model or provider with the lowest current latency or highest availability.

- Capability Matching: Send a sentiment analysis request to a specialized sentiment model, and a code generation request to an LLM optimized for coding.

- Geographical Proximity: Route requests to AI services hosted in regions closer to the end-user for reduced latency.

- Load Distribution: Distribute requests across multiple instances of the same model or across different providers to prevent bottlenecks and ensure high availability.

- Fallback Mechanisms: Automatically switch to a backup model or provider if the primary choice is unavailable or performing poorly, ensuring application resilience.

This intelligent routing capability is critical for optimizing both performance and operational costs.
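The routing rules above can be sketched in a few lines of Python. This is a minimal sketch, not any product's routing engine: the `Backend` fields, the prices, and the integer `complexity` score (how a gateway would estimate it is left open) are all hypothetical. It orders healthy, capable backends cheapest first and falls back to the next candidate when a call fails, combining cost-aware routing with a fallback mechanism.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Backend:
    name: str
    cost_per_1k_tokens: float   # illustrative price, not a real quote
    max_complexity: int         # highest task complexity this model handles well
    healthy: bool = True        # would be fed by health checks in practice


def candidate_order(backends: List[Backend], complexity: int) -> List[Backend]:
    """Healthy backends able to handle the task, cheapest first."""
    capable = [b for b in backends if b.healthy and b.max_complexity >= complexity]
    return sorted(capable, key=lambda b: b.cost_per_1k_tokens)


def route_with_fallback(backends: List[Backend], complexity: int,
                        call: Callable[[Backend], str]) -> str:
    """Try the cheapest capable backend; on failure, fall back to the next one."""
    errors = []
    for backend in candidate_order(backends, complexity):
        try:
            return call(backend)
        except Exception as exc:  # a real gateway would catch narrower error types
            errors.append(f"{backend.name}: {exc}")
    raise RuntimeError("all backends failed: " + "; ".join(errors))
```

Sorting by price is only one policy; swapping the sort key for observed latency would give latency-based routing with the same fallback loop.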

3. Robust Authentication and Authorization

Securing access to valuable AI resources is paramount. An AI Gateway acts as a powerful security enforcement point, implementing comprehensive authentication and authorization mechanisms:

- API Key Management: Centralized generation, revocation, and rotation of API keys for client applications.

- OAuth/JWT Integration: Support for industry-standard token-based authentication protocols, seamlessly integrating with existing identity providers.

- Role-Based Access Control (RBAC): Granular control over which users or applications can access specific AI models, features, or data. For example, a marketing team might have access to content generation LLMs, while a compliance team has access to sensitive data classification models.

- Subscription Approval: APIPark, for example, allows for the activation of subscription approval features, ensuring that callers must subscribe to an API and await administrator approval before they can invoke it, preventing unauthorized API calls and potential data breaches.

These features protect against unauthorized access, data breaches, and misuse of expensive AI resources.
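A minimal sketch of API key validation combined with role-based access control might look like this in Python. The in-memory dictionaries, role names, and model names are hypothetical (they echo the marketing and compliance example above); a production gateway would keep this state in a database or secret store and integrate with an identity provider for OAuth/JWT tokens, which is not shown.

```python
import hashlib
from typing import Dict, Set

# Hypothetical in-memory state; only SHA-256 hashes of API keys are stored.
API_KEY_HASHES: Dict[str, str] = {}        # key hash -> client id
CLIENT_ROLES: Dict[str, Set[str]] = {}     # client id -> roles
ROLE_MODELS: Dict[str, Set[str]] = {       # role -> models that role may call
    "marketing": {"content-llm"},
    "compliance": {"data-classifier"},
}


def _hash(api_key: str) -> str:
    return hashlib.sha256(api_key.encode()).hexdigest()


def register_client(client_id: str, api_key: str, roles: Set[str]) -> None:
    API_KEY_HASHES[_hash(api_key)] = client_id
    CLIENT_ROLES[client_id] = set(roles)


def revoke_key(api_key: str) -> None:
    API_KEY_HASHES.pop(_hash(api_key), None)


def authorize(api_key: str, model: str) -> str:
    """Return the client id if the key is valid and one of its roles allows `model`."""
    client_id = API_KEY_HASHES.get(_hash(api_key))
    if client_id is None:
        raise PermissionError("invalid or revoked API key")          # e.g. HTTP 401
    roles = CLIENT_ROLES.get(client_id, set())
    if not any(model in ROLE_MODELS.get(role, set()) for role in roles):
        raise PermissionError(f"{client_id} may not call {model}")   # e.g. HTTP 403
    return client_id
```

Storing only hashes means a leaked key table does not expose usable keys, and revoking a key is a single delete.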

4. Rate Limiting and Throttling

To prevent abuse, ensure fair usage, and manage operational costs, an AI Gateway offers sophisticated rate limiting and throttling capabilities. It allows administrators to define limits on the number of requests a client can make within a specific timeframe (e.g., 100 requests per minute per user). This prevents individual applications from monopolizing AI resources, limits the impact of Denial-of-Service (DoS) attacks, and helps control spending on usage-based AI services. Different tiers of service can be established, offering higher limits to premium users or critical applications.
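The text does not prescribe a particular algorithm; a token bucket is one common choice, and the Python sketch below uses it to express the "100 requests per minute per user" example. `TokenBucket` and `check_rate_limit` are hypothetical names, and the state lives in process memory, whereas a gateway running several instances would typically keep it in a shared store.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Allows bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock          # injectable so behavior can be tested
        self.tokens = capacity
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False                # caller would answer HTTP 429 Too Many Requests


# One bucket per client: 100 requests per minute means 100/60 tokens per second.
buckets: Dict[str, TokenBucket] = {}


def check_rate_limit(client_id: str) -> bool:
    if client_id not in buckets:
        buckets[client_id] = TokenBucket(capacity=100, rate=100 / 60)
    return buckets[client_id].allow()
```

The `cost` parameter also allows limits measured in LLM tokens rather than request counts, by charging each call its token usage instead of 1; service tiers would simply get buckets with different capacities and rates.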

5. Comprehensive Observability (Logging, Monitoring, Tracing)

Visibility into AI operations is essential for troubleshooting, performance tuning, and cost management. An AI Gateway provides a centralized hub for all AI-related telemetry:

- Detailed Logging: Capturing every API call, including request payloads, response data, timestamps, client IDs, latency, and chosen AI model. APIPark provides comprehensive logging capabilities, recording every detail of each API call, which allows businesses to quickly trace and troubleshoot issues.

- Real-time Monitoring: Dashboards and alerts to track key metrics such as request rates, error rates, latency, and resource consumption across all AI models.

- Distributed Tracing: The ability to trace a single request through the entire system, from the client, through the gateway, to the specific AI model, and back, which is invaluable for debugging complex issues in microservices architectures.

- Powerful Data Analysis: APIPark analyzes historical call data to display long-term trends and performance changes, helping businesses with preventive maintenance before issues occur.

This robust observability suite empowers operations teams to maintain system stability and optimize AI performance.
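As a small illustration of per-call logging, the Python sketch below wraps an upstream call and emits one structured JSON record with a request ID, client, model, status, and latency. `logged_call` is a hypothetical helper built on the standard `logging` module; it deliberately omits request and response payloads, which a real deployment might log only after redaction. The generated `request_id` is the kind of value that could be propagated as a trace identifier.

```python
import json
import logging
import time
import uuid
from typing import Callable

# Handlers and levels are configured elsewhere, e.g. logging.basicConfig(level=logging.INFO).
logger = logging.getLogger("ai_gateway")


def logged_call(client_id: str, model: str, call: Callable[[], str]) -> str:
    """Invoke `call` and emit one structured log record describing the call."""
    record = {"request_id": str(uuid.uuid4()), "client_id": client_id, "model": model}
    start = time.perf_counter()
    try:
        result = call()
        record["status"] = "ok"
        return result
    except Exception as exc:
        record["status"] = "error"
        record["error"] = type(exc).__name__
        raise                       # the failure still propagates to the caller
    finally:
        # Runs on success and failure alike, so every call is logged exactly once.
        record["latency_ms"] = round((time.perf_counter() - start) * 1000, 2)
        logger.info(json.dumps(record))
```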

6. Caching Mechanisms

For frequently repeated AI requests, especially those that generate static or near-static responses (e.g., retrieving common facts from an LLM, generating an image description for a known image), caching can drastically improve performance and reduce costs. An AI Gateway can store responses to specific AI queries and serve them directly from its cache if an identical request is received within a defined time window. This bypasses the need to call the underlying AI model, saving processing time and reducing API call charges. Intelligent caching strategies can be implemented, considering factors like data staleness and request uniqueness.
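A minimal exact-match cache with a time-to-live could look like the Python sketch below. `ResponseCache` is a hypothetical name; it keys entries on a SHA-256 hash of the canonicalized request so that logically identical payloads share an entry. It does not attempt semantic caching (matching similar but non-identical prompts), and note that for sampled LLM output a cached answer is simply one earlier sample being reused.

```python
import hashlib
import json
import time
from typing import Any, Callable, Dict, Tuple


class ResponseCache:
    """Identical requests within `ttl_seconds` reuse the stored response."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: Dict[str, Tuple[float, Any]] = {}   # key -> (stored_at, response)

    @staticmethod
    def key(payload: dict) -> str:
        # sort_keys makes payloads that differ only in key order hash identically.
        canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode()).hexdigest()

    def get_or_call(self, payload: dict, call: Callable[[dict], Any]) -> Any:
        k = self.key(payload)
        now = self.clock()
        hit = self._store.get(k)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]              # cache hit: no upstream call, no charge
        response = call(payload)       # miss or expired entry: call the model
        self._store[k] = (now, response)
        return response
```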

7. Request and Response Transformation

AI models often have specific input requirements and output formats that may not align perfectly with the needs of client applications or internal data standards. An AI Gateway can perform on-the-fly transformations:

- Request Transformation: Modifying client requests before forwarding them to an AI model. This could involve adding specific headers, restructuring JSON payloads, mapping input fields, or injecting contextual information from other sources.

- Response Transformation: Normalizing or enhancing the AI model's output before sending it back to the client. This might involve parsing complex JSON, extracting specific data points, summarizing verbose LLM outputs, or converting data types.

This feature ensures seamless data flow and reduces the burden on client applications to adapt to diverse AI model interfaces.
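Response transformation can be sketched as a set of per-provider normalizers that map each upstream response onto one client-facing shape. In this Python sketch the provider names are hypothetical and the raw response shapes are simplified approximations loosely modeled on common chat APIs; treat the field names as assumptions rather than any vendor's exact schema.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class NormalizedResponse:
    text: str
    input_tokens: int
    output_tokens: int


def normalize_provider_a(raw: dict) -> NormalizedResponse:
    # Assumed shape: {"choices": [{"message": {"content": ...}}], "usage": {...}}
    usage = raw.get("usage", {})
    return NormalizedResponse(
        text=raw["choices"][0]["message"]["content"],
        input_tokens=usage.get("prompt_tokens", 0),
        output_tokens=usage.get("completion_tokens", 0),
    )


def normalize_provider_b(raw: dict) -> NormalizedResponse:
    # Assumed shape: {"content": [{"type": "text", "text": ...}, ...], "usage": {...}}
    usage = raw.get("usage", {})
    text = "".join(b["text"] for b in raw["content"] if b.get("type") == "text")
    return NormalizedResponse(
        text=text,
        input_tokens=usage.get("input_tokens", 0),
        output_tokens=usage.get("output_tokens", 0),
    )


NORMALIZERS: Dict[str, Callable[[dict], NormalizedResponse]] = {
    "provider-a": normalize_provider_a,
    "provider-b": normalize_provider_b,
}


def to_client(provider: str, raw: dict) -> dict:
    """Return the single response format every client application receives."""
    n = NORMALIZERS[provider](raw)
    return {"text": n.text, "usage": {"input": n.input_tokens, "output": n.output_tokens}}
```

Normalizing token usage at this point also gives the cost-tracking features described later a consistent input, whichever provider served the request.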

8. Prompt Management and Engineering (Specific for LLM Gateways)

This is a cornerstone feature for any effective LLM Gateway. It centralizes the creation, storage, versioning, and management of prompts that are sent to Large Language Models. Key aspects include:

- Prompt Templates: Define reusable templates with placeholders for dynamic content.

- Version Control: Track changes to prompts over time, allowing rollback to previous versions and A/B testing of different prompt strategies.

- Dynamic Prompt Injection: The gateway can inject pre-defined or dynamically generated prompts into client requests based on the application context or user profile.

- Prompt Encapsulation: APIPark, for instance, allows users to quickly combine AI models with custom prompts to create new APIs, such as sentiment analysis, translation, or data analysis APIs. This encapsulates the prompt logic within the API, simplifying AI usage for developers.

- Guardrails and Moderation: Implementing an additional layer of safety that screens prompts for inappropriate content and known prompt injection patterns (reducing, though not eliminating, the risk of prompt injection attacks) and moderates outputs from LLMs before they reach the end-user.

Effective prompt management is crucial for ensuring consistent AI behavior, optimizing model performance, and maintaining brand voice.
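Prompt templates, versioning, and rollback can be sketched with the standard library's `string.Template`. `PromptRegistry` below is a hypothetical in-memory store, not APIPark's or any other product's API; a gateway endpoint such as a sentiment-analysis API could render a named prompt from it and forward the result to a model, which is the essence of prompt encapsulation.

```python
from string import Template
from typing import Dict, List, Optional


class PromptRegistry:
    """Hypothetical store of versioned prompt templates, referenced by name."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[str]] = {}   # name -> templates, oldest first
        self._active: Dict[str, int] = {}           # name -> active 1-based version

    def _check(self, prompt_name: str, version: int) -> None:
        if not 1 <= version <= len(self._versions.get(prompt_name, [])):
            raise ValueError(f"unknown version {version} for {prompt_name}")

    def publish(self, prompt_name: str, template: str) -> int:
        """Add a new version and make it active; returns its version number."""
        versions = self._versions.setdefault(prompt_name, [])
        versions.append(template)
        self._active[prompt_name] = len(versions)
        return len(versions)

    def rollback(self, prompt_name: str, version: int) -> None:
        self._check(prompt_name, version)
        self._active[prompt_name] = version

    def render(self, prompt_name: str, version: Optional[int] = None, **values: str) -> str:
        v = version if version is not None else self._active[prompt_name]
        self._check(prompt_name, v)
        # Template.substitute raises KeyError for a missing placeholder, so an
        # incomplete prompt fails loudly instead of being sent to the model.
        return Template(self._versions[prompt_name][v - 1]).substitute(**values)
```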

9. Cost Management and Optimization

AI services, especially LLMs, can incur significant usage-based costs. An AI Gateway provides granular visibility and control over these expenditures:

- Detailed Usage Tracking: Monitor costs per user, per application, per AI model, or per department.

- Budget Alerts: Set up alerts to notify administrators when usage approaches predefined budget thresholds.

- Cost-Aware Routing: As mentioned, routing decisions can be optimized to leverage cheaper models for suitable tasks, directly impacting the bottom line.

- Billing Integration: Potentially integrate with internal billing systems for chargeback to different business units.

This empowers organizations to predict, control, and optimize their AI spending.
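Usage tracking and budget alerts reduce to multiplying token counts by per-model prices and comparing running totals against thresholds. In the Python sketch below, `CostTracker`, the model names, and the prices are hypothetical placeholders rather than real vendor pricing, and spend is tracked per department only for brevity; the same pattern extends to users, applications, or models.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Illustrative prices in USD per 1,000 tokens (input, output); not real vendor pricing.
PRICES: Dict[str, Tuple[float, float]] = {
    "small-model": (0.0005, 0.0015),
    "large-model": (0.01, 0.03),
}


class CostTracker:
    def __init__(self, budgets: Dict[str, float], alert_ratio: float = 0.8):
        self.budgets = budgets              # department -> budget in USD
        self.alert_ratio = alert_ratio      # warn at 80% of budget by default
        self.spend: Dict[str, float] = defaultdict(float)
        self.alerts: List[str] = []

    def record(self, department: str, model: str,
               input_tokens: int, output_tokens: int) -> float:
        """Add one call's cost to the department's total and return that cost."""
        in_price, out_price = PRICES[model]
        cost = input_tokens / 1000 * in_price + output_tokens / 1000 * out_price
        before = self.spend[department]
        self.spend[department] = before + cost
        limit = self.budgets.get(department)
        if limit is not None:
            threshold = limit * self.alert_ratio
            # Alert once, on the call that crosses the threshold.
            if before < threshold <= self.spend[department]:
                self.alerts.append(
                    f"{department} spent {self.spend[department]:.2f} of {limit:.2f} USD")
        return cost
```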

10. Security Policies and Data Governance

Beyond authentication and authorization, an AI Gateway can enforce broader security and data governance policies:

- Data Masking/Redaction: Automatically mask or redact sensitive information (e.g., PII, financial data) in requests before they reach the AI model or in responses before they leave the gateway, ensuring compliance with privacy regulations like GDPR or HIPAA.

- Encryption in Transit and at Rest: Ensuring all data flowing through the gateway and any cached data is securely encrypted.

- Audit Trails: Comprehensive logging, retained in append-only or write-once storage, provides a tamper-resistant audit trail for compliance purposes.

- Threat Detection: Integrating with security systems to detect and block malicious AI usage patterns.

These features are vital for maintaining regulatory compliance and protecting sensitive corporate data.
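Data masking can be illustrated with a few regular expressions applied before text leaves the gateway. This Python sketch is deliberately narrow: the three patterns (email addresses, US Social Security numbers, and 13 to 16 digit card numbers) are illustrative assumptions, and real PII detection needs much broader coverage such as names, addresses, and locale-specific identifiers, often via a dedicated detection service.

```python
import re
from typing import List, Pattern, Tuple

# Illustrative patterns only; they will miss many forms of PII.
REDACTION_RULES: List[Tuple[str, Pattern[str]]] = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("US_SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    ("CARD", re.compile(r"\b\d(?:[ -]?\d){12,15}\b")),
]


def redact(text: str) -> str:
    """Replace each match with a typed placeholder such as [EMAIL]."""
    for label, pattern in REDACTION_RULES:
        text = pattern.sub(f"[{label}]", text)
    return text
```

Typed placeholders keep the redacted prompt intelligible to the model (it still knows an email address was present) without exposing the value itself.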

11. Version Control for AI Models and Prompts

As AI models evolve and prompts are refined, managing different versions becomes critical. An AI Gateway can facilitate:

- Model Version Management: Abstracting away specific model versions, allowing developers to target a logical "best" model while the gateway handles routing to the currently deployed optimal version.

- A/B Testing: Easily test different versions of an AI model or different prompt strategies in parallel, routing a percentage of traffic to each variant to compare performance metrics and inform deployment decisions.

- Rollbacks: Seamlessly revert to previous stable versions of models or prompts in case of issues.

This enables continuous improvement and experimentation without disrupting production applications.
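A/B traffic splitting is often done by hashing a stable identifier into weighted buckets. In this Python sketch, `assign_variant`, the experiment name, and the variant names are hypothetical; the selected variant would pick a model or prompt version, and logging it with each call lets metrics be compared per variant.

```python
import hashlib
from typing import Dict


def assign_variant(user_id: str, experiment: str, weights: Dict[str, int]) -> str:
    """Map a user to a variant with probability proportional to its integer weight.

    Hashing (experiment, user_id) is deterministic, so a given user stays on the
    same variant across requests, which keeps the comparison between variants clean.
    """
    total = sum(weights.values())
    if total <= 0 or any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-negative and sum to more than zero")
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % total
    for variant, weight in sorted(weights.items()):   # sorted for a stable order
        if bucket < weight:
            return variant
        bucket -= weight
    raise AssertionError("unreachable: bucket is always below the total weight")
```

Rolling back then amounts to setting the new variant's weight to zero, and promoting it amounts to giving it all of the weight.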

12. End-to-End API Lifecycle Management

An AI Gateway, especially one integrated into a broader API management platform, can also assist with managing the entire lifecycle of APIs, including design, publication, invocation, and decommissioning. It helps regulate API management processes, manage traffic forwarding, load balancing, and versioning of published APIs. This holistic approach ensures that AI services are treated as first-class citizens within an organization's overall API strategy. APIPark, for example, is not just an AI gateway but an all-in-one AI gateway and API developer portal that helps manage the entire lifecycle of APIs.

The synergy of these features elevates the AI Gateway from a mere technical component to a strategic asset, empowering organizations to deploy, manage, and scale AI with confidence, efficiency, and security.

The Core Benefits of Implementing an AI Gateway

The strategic adoption of an AI Gateway yields a multitude of advantages that profoundly impact an organization's ability to effectively leverage AI. These benefits extend beyond technical convenience, touching upon cost efficiency, security posture, operational resilience, and the pace of innovation.

1. Simplified Integration & Management

Perhaps the most immediate and tangible benefit is the dramatic simplification of integrating AI capabilities into applications. Without an AI Gateway, developers face the arduous task of understanding and coding against the unique APIs, authentication mechanisms, and data formats of each individual AI model from every provider. This leads to:

- Reduced Development Overhead: Developers interact with a single, consistent interface provided by the AI Gateway, which abstracts away the underlying complexities. This minimizes the learning curve and the amount of custom code needed for integration. For example, instead of writing logic for OpenAI's API, then Google's, then a custom internal model, they write to one unified interface. This is precisely where solutions like APIPark shine, providing a unified API format for AI invocation that simplifies AI usage and reduces maintenance costs by standardizing interactions across diverse AI models.

- Faster Time-to-Market: By streamlining integration, development teams can more rapidly build, test, and deploy AI-powered features and applications, accelerating the pace of innovation.

- Reduced Cognitive Load: Developers can focus on building core application logic rather than wrestling with the idiosyncrasies of various AI model APIs.

This simplification directly translates into increased developer productivity and faster project delivery cycles.

2. Enhanced Security Posture

AI models, especially those handling sensitive data or performing critical functions, are high-value targets. An AI Gateway acts as a formidable security enforcement point, significantly bolstering an organization's overall security posture:

- Centralized Access Control: Instead of managing security policies for dozens of individual AI endpoints, all access is funneled and controlled through the gateway. This provides a single point of enforcement for authentication (e.g., API keys, OAuth tokens) and authorization (e.g., role-based access control).

- Reduced Attack Surface: Client applications only communicate with the gateway, never directly with the backend AI models. This shields the actual AI services from direct exposure to the public internet, making them less vulnerable to attacks.

- Data Masking and Redaction: The gateway can be configured to automatically mask or redact sensitive information (e.g., personally identifiable information, financial data) from requests before they are sent to AI models and from responses before they are returned to clients, ensuring data privacy and compliance.

- Threat Detection and Prevention: Advanced gateways can integrate with security information and event management (SIEM) systems to detect and block malicious usage patterns, such as prompt injection attempts or API misuse. Access workflows add a further safeguard: APIPark's subscription approval feature, for example, requires callers to subscribe to an API and be approved before invoking it, preventing unauthorized API calls and potential data breaches.

By acting as a fortified perimeter, the AI Gateway provides a critical layer of defense for valuable AI assets and the data they process.

3. Optimized Performance & Reliability

Consistent performance and high availability are non-negotiable for production AI applications. An AI Gateway employs several mechanisms to ensure optimal operation:

- Intelligent Load Balancing: Distributing requests across multiple instances of AI models or different providers prevents bottlenecks and ensures that no single service becomes overwhelmed, leading to improved response times.

- Caching: For repetitive or common AI queries, the gateway can serve responses directly from its cache, drastically reducing latency and the load on backend AI services. This also reduces dependency on external services for frequently accessed information.

- Fallback Mechanisms: In the event of an outage or degraded performance from a primary AI model or provider, the gateway can automatically reroute requests to a designated backup, ensuring continuous service availability and application resilience.

- Request Optimization: The gateway can optimize network traffic by aggregating requests or performing data compression, further reducing latency.

These optimizations contribute directly to a smoother, more responsive user experience for AI-powered applications.

4. Significant Cost Reduction and Control

AI services, particularly usage-based LLMs, can quickly become a significant operational expense if not carefully managed. An AI Gateway offers powerful tools for cost control and optimization:

- Granular Usage Tracking: Providing detailed analytics on AI model consumption across applications, users, and departments. This transparency is crucial for understanding spending patterns.

- Cost-Aware Routing: Intelligently routing requests to the most cost-effective AI model for a given task (e.g., using a cheaper, smaller model for simple classification and a more expensive, powerful LLM for complex generation tasks).

- Rate Limiting and Quotas: Preventing runaway costs by enforcing predefined limits on API calls per client, user, or application.

- Caching Benefits: By serving responses from cache, the number of expensive calls to external AI providers is reduced, leading to direct cost savings.

- Budget Alerts: Notifying administrators when AI usage or spending approaches predefined thresholds, allowing for proactive intervention.

This level of control ensures that AI investments are utilized efficiently and within budgetary constraints.

5. Improved Scalability

As an organization's AI adoption grows, so does the demand on its AI infrastructure. An AI Gateway is designed to handle this increased load with grace:

- Centralized Scalability: The gateway itself can be scaled horizontally to accommodate increasing traffic, distributing requests efficiently across its own instances and then to the underlying AI models.

- Abstracted Backend Scaling: Because client applications only interact with the gateway, scaling the backend AI models (e.g., adding more instances, switching to a more powerful provider) can be done transparently, without requiring changes to the consuming applications.

- Cluster Deployment: High-performance gateways like APIPark support cluster deployment, enabling them to handle large-scale traffic (e.g., over 20,000 TPS with modest hardware), ensuring that even rapidly growing AI usage is well-supported.

An AI Gateway ensures that AI infrastructure can grow seamlessly with the demands of the business, without becoming a bottleneck.

6. Better Governance & Compliance

Navigating the complex landscape of data privacy regulations (GDPR, HIPAA, CCPA) and ethical AI guidelines is a significant challenge. An AI Gateway helps enforce governance policies:

- Auditable Trails: Comprehensive logging of all AI interactions provides an immutable record, essential for compliance audits and demonstrating adherence to policies. APIPark's detailed API call logging is a prime example of this, allowing businesses to quickly trace and troubleshoot issues, ensuring data security and regulatory compliance.

- Policy Enforcement: Ensuring that AI models are used in accordance with internal policies and external regulations (e.g., filtering out prohibited content, ensuring data residency).

- Data Lineage: Tracing how data flows through AI models and ensuring that data handling practices meet governance requirements.

This centralized control simplifies the complex task of maintaining regulatory compliance for AI initiatives.
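An audit trail is only as trustworthy as its resistance to tampering. One common technique for making a log tamper-evident is hash chaining, where each entry commits to the hash of the previous one. The sketch below keeps entries in memory and uses hypothetical event fields; a production system would also write to append-only storage and anchor the latest hash somewhere the log writer cannot modify.

```python
import hashlib
import json


class AuditLog:
    """Append-only, hash-chained audit trail: each entry commits to the previous one."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self.entries: list[dict] = []

    @staticmethod
    def _digest(prev_hash: str, event: dict) -> str:
        # Canonical JSON (sorted keys) so the same event always hashes the same way.
        payload = json.dumps(event, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode()).hexdigest()

    def append(self, event: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {"event": event, "prev_hash": prev_hash, "hash": self._digest(prev_hash, event)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; editing any stored event makes verification fail."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True
```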

7. Accelerated Innovation & Experimentation

An AI Gateway fosters a culture of innovation by making it easier for developers to experiment with and deploy new AI models and features:

- A/B Testing of Models/Prompts: Easily route a percentage of traffic to new models or prompt variations, allowing for rapid experimentation and performance comparison without impacting all users.

- Reduced Vendor Lock-in: By abstracting the specific AI provider, the gateway makes it easier to switch between different models or providers. If a new, better, or cheaper model emerges, it can be integrated into the gateway with minimal disruption to consuming applications.

- Rapid API Creation: The ability to combine AI models with custom prompts to create new APIs (as with APIPark's prompt encapsulation) significantly accelerates the development of specialized AI services like sentiment analysis or data extraction.

This flexibility empowers organizations to stay agile and continuously leverage the latest advancements in AI technology.
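Prompt encapsulation, binding a model and a prompt template to a named API, can be sketched in a few lines of Python. The names below (`PromptAPI`, `invoke`, the `sentiment-analysis` route, and the `general-llm` model identifier) are hypothetical and are not APIPark's actual interface; `call_model` stands in for whatever sends the request to the provider.

```python
from dataclasses import dataclass
from string import Template
from typing import Callable


@dataclass(frozen=True)
class PromptAPI:
    """A named API that binds one model to one prompt template."""
    name: str
    model: str
    template: Template

    def build_request(self, **inputs: str) -> dict:
        # substitute() raises KeyError if a required placeholder is missing.
        prompt = self.template.substitute(**inputs)
        return {"model": self.model, "messages": [{"role": "user", "content": prompt}]}


REGISTRY: dict[str, PromptAPI] = {}


def register(api: PromptAPI) -> None:
    REGISTRY[api.name] = api


def invoke(name: str, call_model: Callable[[dict], str], **inputs: str) -> str:
    """Clients call invoke("sentiment-analysis", ..., text=...) and never see the prompt."""
    return call_model(REGISTRY[name].build_request(**inputs))


register(PromptAPI(
    name="sentiment-analysis",
    model="general-llm",  # hypothetical model identifier
    template=Template(
        "Classify the sentiment of the following text as positive, negative, "
        "or neutral. Reply with one word.\n\nText: $text"
    ),
))
```

Because the template lives in the gateway, switching the underlying model or refining the prompt changes one registry entry rather than every consuming application.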

8. Centralized Observability and Data Analysis

Having a single pane of glass for all AI operations is invaluable. The detailed logging, monitoring, and tracing capabilities of an AI Gateway provide unparalleled insights:

- Unified Monitoring: Get a holistic view of the performance and health of all AI services, regardless of their underlying provider.

- Troubleshooting: Quickly identify the root cause of issues, whether it's an application error, a gateway configuration problem, or an issue with a backend AI model.

- Performance Analytics: Identify trends, bottlenecks, and areas for optimization. APIPark’s powerful data analysis feature leverages historical call data to display long-term trends and performance changes, enabling businesses to perform preventive maintenance.

- Usage Insights: Understand how different teams or applications are consuming AI resources, informing future resource allocation and strategic decisions.

This centralized visibility is crucial for maintaining the operational excellence of AI systems.
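As a rough illustration of unified monitoring, the following sketch aggregates per-model latency and error rate regardless of which provider served the call. The `ModelMetrics` class, the nearest-rank 95th percentile, and the choice to count only 5xx statuses as errors are assumptions for illustration; production gateways usually export such metrics to a time-series system instead of keeping raw samples in memory.

```python
import math
from collections import defaultdict


class ModelMetrics:
    """Aggregates per-model latency and error rate across all providers."""

    def __init__(self):
        self.latencies_ms: dict[str, list[float]] = defaultdict(list)
        self.errors: dict[str, int] = defaultdict(int)

    def observe(self, model: str, latency_ms: float, status: int) -> None:
        self.latencies_ms[model].append(latency_ms)
        if status >= 500:  # count server-side failures as errors
            self.errors[model] += 1

    def summary(self, model: str) -> dict:
        samples = sorted(self.latencies_ms.get(model, []))
        if not samples:
            return {"calls": 0}
        # Nearest-rank 95th percentile, computed with integer arithmetic in the numerator.
        rank = math.ceil(95 * len(samples) / 100)
        return {
            "calls": len(samples),
            "p95_ms": samples[rank - 1],
            "error_rate": self.errors[model] / len(samples),
        }
```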

In conclusion, an AI Gateway transcends its technical function to become a strategic enabler. It transforms the daunting task of enterprise AI integration into a streamlined, secure, cost-effective, and scalable process, allowing organizations to fully realize the transformative potential of Artificial Intelligence.


How an AI Gateway Works: A Deep Dive into Its Mechanics

To truly grasp the significance of an AI Gateway, it is crucial to understand the intricate operational flow and the various functional layers through which it processes requests. Far more than a simple passthrough proxy, an AI Gateway is a sophisticated piece of infrastructure that intelligently intermediates every interaction with an AI model.

The typical journey of an AI request through an AI Gateway can be broken down into several distinct phases:

1. Request Interception and Entry Point

The first step in any AI interaction orchestrated by an AI Gateway is the client application sending a request to the gateway's unified endpoint. This endpoint is the single point of contact for all AI services. For instance, instead of calling api.openai.com/v1/chat/completions or generativelanguage.googleapis.com/v1beta2/models/text-bison-001:generateText, the application calls ai.mycompany.com/generate-text or ai.mycompany.com/analyze-sentiment. The AI Gateway listens for these incoming requests, acting as the primary entry point to the entire AI ecosystem.
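The unified entry point amounts to a route table that maps the gateway's own paths to backend targets. A minimal sketch, assuming hypothetical paths, provider labels, and model names:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    provider: str
    model: str


# Hypothetical route table: these paths are the only ones client applications ever see.
ROUTES: dict[str, Target] = {
    "/generate-text": Target(provider="openai", model="chat-model-a"),
    "/analyze-sentiment": Target(provider="internal", model="sentiment-v2"),
}


class RouteNotFound(Exception):
    pass


def resolve(path: str) -> Target:
    """Map an incoming gateway path to the backend that will serve it."""
    # Ignore a trailing slash so /generate-text and /generate-text/ match.
    normalized = path.rstrip("/") or "/"
    try:
        return ROUTES[normalized]
    except KeyError:
        raise RouteNotFound(f"no AI service is exposed at {path!r}") from None
```

Changing the provider behind `/generate-text` means editing one table entry; client applications keep calling the same path.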

2. Authentication and Authorization Layer

Upon receiving a request, the AI Gateway's immediate priority is to verify the identity and permissions of the caller. This phase involves:

- Authentication: Validating the client's credentials. This could involve checking an API key provided in the request header, validating a JSON Web Token (JWT), or integrating with an OAuth provider. If the credentials are invalid, the gateway rejects the request immediately.

- Authorization: Once authenticated, the gateway determines if the client has the necessary permissions to access the requested AI service. This is typically managed through Role-Based Access Control (RBAC), where specific roles are granted access to particular AI models or capabilities. For example, a customer service application might be authorized to use a text summarization LLM, but not a code generation LLM. APIPark, for example, offers subscription approval features, adding another layer of authorization where even authenticated users must be explicitly approved to use an API.

This layer is critical for protecting valuable AI resources and preventing unauthorized access or misuse.
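The authentication, RBAC, and subscription-approval checks described above can be sketched as three sequential gates. The in-memory key store, role names, and service names are hypothetical; a real gateway would store hashed keys or delegate to an identity provider for JWT or OAuth validation.

```python
import hmac
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Client:
    name: str
    roles: set[str]
    approved_services: set[str] = field(default_factory=set)  # subscription approvals


# Hypothetical key store and role policy, kept in memory for illustration.
API_KEYS = {"key-cs-123": Client("support-bot", {"customer-service"}, {"summarize"})}
ROLE_PERMISSIONS = {
    "customer-service": {"summarize"},
    "engineering": {"summarize", "code-gen"},
}


def find_client(api_key: str) -> Optional[Client]:
    for stored_key, client in API_KEYS.items():
        # compare_digest compares in constant time, avoiding timing side channels.
        if hmac.compare_digest(stored_key, api_key):
            return client
    return None


def authorize(api_key: str, service: str) -> tuple[int, str]:
    """Return an HTTP-style status code and a reason or client name."""
    client = find_client(api_key)
    if client is None:
        return 401, "invalid API key"  # authentication failed
    allowed = set().union(*(ROLE_PERMISSIONS.get(r, set()) for r in client.roles))
    if service not in allowed:
        return 403, f"role does not permit {service}"  # RBAC check failed
    if service not in client.approved_services:
        return 403, f"subscription to {service} not yet approved"
    return 200, client.name
```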

3. Policy Enforcement

After successful authentication and authorization, the AI Gateway applies any relevant policies configured for the client or the requested service:

- Rate Limiting: Checking if the client has exceeded their allotted request limit within a given timeframe. If so, the gateway throttles or rejects the request, preventing resource exhaustion and controlling costs.

- Quota Management: Similar to rate limiting, but often related to cumulative usage over a longer period (e.g., total tokens consumed in a month).

- Security Rules: Applying specific security policies such as IP whitelisting/blacklisting, detecting known malicious patterns, or rejecting requests with unusually large payloads that might indicate an attack.

These policies ensure fair usage, prevent abuse, and maintain system stability.
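Rate limiting is commonly implemented with a token bucket, which allows short bursts while enforcing an average rate. This is a minimal single-process sketch; the capacity and refill rate are hypothetical defaults, and a clustered gateway would keep bucket state in a shared store. The `cost` parameter hints at how the same structure can meter tokens rather than requests for quota-style limits.

```python
import time


class TokenBucket:
    """Allows bursts of up to `capacity` units, refilled at `rate` units per second."""

    def __init__(self, capacity: float, rate: float, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity
        self.updated = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False  # the gateway would answer HTTP 429 Too Many Requests


buckets: dict[str, TokenBucket] = {}


def check_rate_limit(client_id: str) -> bool:
    # One bucket per client; hypothetical limit of 5-request bursts, 1 request/second.
    if client_id not in buckets:
        buckets[client_id] = TokenBucket(capacity=5, rate=1.0)
    return buckets[client_id].allow()
```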

4. Request Transformation and Enrichment

Before forwarding the request to a backend AI model, the AI Gateway often performs various transformations and enrichments:

- Format Normalization: Converting the incoming request payload into the specific format expected by the target AI model (e.g., mapping generic fields to model-specific parameters, adjusting JSON structures).

- Header Modification: Adding, removing, or modifying HTTP headers for the backend AI service (e.g., injecting an internal API key for the AI provider).

- Contextual Data Injection: Enriching the request with additional information not explicitly provided by the client, such as user IDs, session data, or enterprise-specific metadata, which can be useful for logging or for the AI model itself.

- Data Masking/Redaction: Automatically identifying and removing or obfuscating sensitive data within the request payload to ensure privacy compliance before it reaches the AI model.

- Prompt Templating/Management (for LLMs): For LLM Gateways, this is a crucial step. The gateway can dynamically insert the user's input into a predefined prompt template or apply prompt engineering techniques to optimize the request for the target LLM. (This is distinct from prompt injection, the attack in which crafted input overrides a model's instructions.) Centralizing templates gives control over prompt versions and keeps AI behavior consistent. APIPark specifically highlights its prompt encapsulation feature, where users can quickly combine AI models with custom prompts to create new APIs, abstracting this complexity.

This step ensures that the request sent to the AI model is perfectly tailored to its requirements and adheres to organizational policies.
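Format normalization and header modification can be sketched as per-provider adapters. The two adapters below follow the publicly documented request shapes of OpenAI's Chat Completions API and Anthropic's Messages API, but cover only a handful of fields. The generic client schema (`system`, `prompt`, `max_tokens`), the model names, and the `credentials` mapping are hypothetical.

```python
# Hypothetical generic request accepted from clients:
# {"system": str or None, "prompt": str, "max_tokens": int}


def to_openai(req: dict, model: str, api_key: str) -> tuple[dict, dict]:
    messages = []
    if req.get("system"):
        messages.append({"role": "system", "content": req["system"]})
    messages.append({"role": "user", "content": req["prompt"]})
    headers = {"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"}
    body = {"model": model, "messages": messages, "max_tokens": req["max_tokens"]}
    return headers, body


def to_anthropic(req: dict, model: str, api_key: str) -> tuple[dict, dict]:
    # The Messages API takes the system prompt as a top-level field, not a message.
    headers = {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
    }
    body = {
        "model": model,
        "max_tokens": req["max_tokens"],
        "messages": [{"role": "user", "content": req["prompt"]}],
    }
    if req.get("system"):
        body["system"] = req["system"]
    return headers, body


ADAPTERS = {"openai": to_openai, "anthropic": to_anthropic}


def transform(req: dict, provider: str, model: str, credentials: dict[str, str]) -> tuple[dict, dict]:
    """Build provider-specific headers and body; the provider key never leaves the gateway."""
    return ADAPTERS[provider](req, model, credentials[provider])
```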

5. Intelligent Routing Decisions

With the request transformed and policies applied, the AI Gateway makes an intelligent decision about where to send the request. This is where the "AI" in AI Gateway truly shines beyond a simple API gateway:

- Model Selection Logic: Based on the type of AI task requested (e.g., text generation, image recognition, sentiment analysis), the gateway identifies suitable AI models from its configured pool.

- Cost Optimization: If multiple models can fulfill the request, the gateway might route to the cheapest available option, especially for high-volume or non-critical tasks.

- Performance Metrics: Real-time data on model latency, uptime, and current load can influence the routing decision, directing traffic to the best-performing or least-congested option.

- Capability Matching: Routing to a specialized model if the request requires a specific capability (e.g., a highly accurate medical LLM vs. a general-purpose one).

- A/B Testing: A percentage of traffic might be routed to a new model version or a different provider for comparative testing.

- Fallback Logic: If the primary chosen model is unresponsive or returns an error, the gateway can automatically reroute the request to a pre-configured fallback model or provider, ensuring high availability. APIPark's capability to integrate 100+ AI models under a unified management system makes this intelligent routing and fallback extremely powerful.

This intelligent routing is dynamic and can adapt to changing conditions in the AI ecosystem.
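A simplified version of this routing logic filters candidates by capability and health, orders them by cost or latency, and falls back down the ranked list on failure. All model names, prices, and latencies here are hypothetical, and `send` is a placeholder for the actual provider call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ModelInfo:
    name: str
    capabilities: set[str]
    cost_per_1k: float      # hypothetical USD per 1,000 tokens
    p95_latency_ms: float   # refreshed from live metrics in a real gateway
    healthy: bool = True


def rank_candidates(models: list[ModelInfo], capability: str, prefer: str = "cost") -> list[ModelInfo]:
    """Filter by capability and health, then order by the chosen objective."""
    eligible = [m for m in models if capability in m.capabilities and m.healthy]
    if prefer == "cost":
        return sorted(eligible, key=lambda m: m.cost_per_1k)
    return sorted(eligible, key=lambda m: m.p95_latency_ms)


def route_with_fallback(models: list[ModelInfo], capability: str,
                        send: Callable[[str], str], prefer: str = "cost") -> tuple[str, str]:
    """Try candidates in ranked order, falling back to the next one on failure."""
    errors = []
    for model in rank_candidates(models, capability, prefer):
        try:
            return model.name, send(model.name)
        except Exception as exc:  # a real gateway would catch narrower error types
            errors.append(f"{model.name}: {exc!r}")
    raise RuntimeError("all candidate models failed: " + "; ".join(errors))
```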

6. Interaction with AI/LLM Providers

Once the target AI model or service is identified, the AI Gateway forwards the transformed request to the actual AI provider (e.g., OpenAI, Google, AWS, an internal Kubernetes cluster hosting a custom model). It handles the low-level details of making the API call, managing network connections, and waiting for the response.
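Part of handling these low-level details is retrying transient provider failures, such as rate-limit or temporary-unavailability responses, before giving up. Below is a minimal sketch of retries with exponential backoff and full jitter; `TransientError` and the default attempt count and delay are hypothetical, and `send` stands in for the actual HTTP call.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Raised by the transport layer for retryable failures (e.g. HTTP 429 or 503)."""


def call_with_retries(
    send: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry transient provider errors with exponential backoff and full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 1
    while True:
        try:
            return send()
        except TransientError:
            if attempt >= max_attempts:
                raise  # give up; routing-level fallback can take over from here
            # Wait a random time in [0, base_delay * 2**(attempt - 1)] so that many
            # clients retrying at once do not hit the provider in lockstep.
            sleep(random.uniform(0, base_delay * 2 ** (attempt - 1)))
            attempt += 1
```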

7. Response Handling & Transformation

When the AI model processes the request and returns a response, the AI Gateway intercepts it before sending it back to the client application. Similar to request transformation, the gateway can perform transformations on the response:

- Format Normalization: Standardizing the output format from potentially diverse AI models into a consistent schema expected by the client application.

- Data Enhancement: Adding metadata to the response, such as the AI model used, the cost incurred, or performance metrics.

- Content Moderation/Filtering: Applying post-processing to AI-generated content to ensure it meets safety guidelines, brand voice, or regulatory requirements, filtering out potentially offensive or inappropriate outputs.

- Data Masking/Redaction: Removing or obfuscating sensitive information that might have been generated by the AI model but should not be exposed to the client.

This ensures that the client application receives a clean, consistent, and policy-compliant response, irrespective of the backend AI model that generated it.
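Response normalization can be sketched the same way as request normalization. The field paths below follow the publicly documented response shapes of OpenAI's Chat Completions API (`choices[0].message.content`, `usage.prompt_tokens`) and Anthropic's Messages API (a list of `content` blocks, `usage.input_tokens`); the client-facing schema and its `meta` block are hypothetical.

```python
def normalize_response(provider: str, raw: dict, model: str, latency_ms: float) -> dict:
    """Map provider-specific responses onto one client-facing schema."""
    usage = raw.get("usage", {})
    if provider == "openai":
        text = raw["choices"][0]["message"]["content"]
        tokens_in = usage.get("prompt_tokens", 0)
        tokens_out = usage.get("completion_tokens", 0)
    elif provider == "anthropic":
        # Content is a list of typed blocks; join the text blocks in order.
        text = "".join(b["text"] for b in raw["content"] if b.get("type") == "text")
        tokens_in = usage.get("input_tokens", 0)
        tokens_out = usage.get("output_tokens", 0)
    else:
        raise ValueError(f"unsupported provider: {provider}")
    return {
        "text": text,
        # Metadata added by the gateway (the "data enhancement" step).
        "meta": {
            "model": model,
            "input_tokens": tokens_in,
            "output_tokens": tokens_out,
            "latency_ms": latency_ms,
        },
    }
```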

8. Logging, Monitoring & Analytics

Throughout this entire process, from request interception to response delivery, the AI Gateway meticulously logs every detail of the interaction.

- Detailed Call Logs: Recording client IDs, request timestamps, AI model used, input prompts, output responses, latency, status codes, and any errors. APIPark excels here with its detailed API call logging, allowing for quick tracing and troubleshooting.

- Metrics Collection: Aggregating data on request rates, error rates, average latency, and resource consumption.

- Data Analysis: These logs and metrics feed into the gateway's analytics engine, which can visualize trends, identify performance bottlenecks, and provide insights into AI usage patterns and costs. APIPark's powerful data analysis features leverage this historical data for preventive maintenance and trend identification.

- Auditing: Providing a comprehensive audit trail for compliance and security purposes.

This continuous stream of data is invaluable for operational insights, troubleshooting, cost management, and future optimization.
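A detailed call log is easiest to search and aggregate when each call produces one structured record. The following is a minimal sketch with hypothetical field names; it logs prompt and response lengths unconditionally and includes the full text only when a logging policy permits it, since prompts can contain sensitive data.

```python
import json
import time
import uuid


def log_call(client_id: str, model: str, status: int, latency_ms: float,
             prompt: str, response: str, log_prompts: bool = False) -> str:
    """Emit one JSON log line per AI call, ready for a log pipeline to index."""
    record = {
        "ts": time.time(),
        "request_id": str(uuid.uuid4()),
        "client_id": client_id,
        "model": model,
        "status": status,
        "latency_ms": round(latency_ms, 1),
        # Lengths are logged unconditionally; full text only when policy allows it.
        "prompt_chars": len(prompt),
        "response_chars": len(response),
    }
    if log_prompts:
        record["prompt"] = prompt
        record["response"] = response
    return json.dumps(record)
```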

9. Caching Mechanism (Optional, but highly beneficial)

Although listed last, the cache check takes place before routing. If an identical request (or, with semantic caching, a sufficiently similar one) has been processed recently and its response is deemed reusable under the cache policy, the AI Gateway can serve the response directly from its cache. This bypasses steps 5 through 7, dramatically reducing latency and the cost of calling the actual AI model. Cache validation rules, such as a time-to-live (TTL), determine how long a response remains valid and when it must be refreshed from the backend.
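A minimal exact-match cache with a TTL might look like the sketch below, keyed on a SHA-256 hash of the model name plus the canonicalized request. The `ResponseCache` class and the 60-second TTL in the test are hypothetical. Semantic caching of merely similar prompts would additionally need embeddings and a similarity threshold, which this sketch does not attempt, and caching is most appropriate for deterministic settings such as temperature 0.

```python
import hashlib
import json
import time
from typing import Optional


class ResponseCache:
    """Exact-match cache for AI responses, keyed on a hash of the normalized request."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: dict[str, tuple[float, dict]] = {}

    @staticmethod
    def key(model: str, request: dict) -> str:
        # Sorted keys make logically identical requests hash identically.
        canonical = json.dumps({"model": model, "request": request}, sort_keys=True)
        return hashlib.sha256(canonical.encode()).hexdigest()

    def get(self, model: str, request: dict) -> Optional[dict]:
        k = self.key(model, request)
        hit = self._store.get(k)
        if hit is None:
            return None
        stored_at, response = hit
        if self.clock() - stored_at > self.ttl:
            del self._store[k]  # expired: the next call goes to the backend
            return None
        return response

    def put(self, model: str, request: dict, response: dict) -> None:
        self._store[self.key(model, request)] = (self.clock(), response)
```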

Table 1: Comparison of Traditional API Gateway vs. AI Gateway

| Feature / Aspect | Traditional API Gateway | AI Gateway (including LLM Gateway) |
|---|---|---|
| Primary Focus | Managing RESTful APIs, microservices | Managing AI/ML models, especially LLMs, in addition to REST APIs |
| Routing Logic | URL-based, header-based, simple load balancing | Intelligent, context-aware routing based on model capability, cost, latency, prompt, load |
| API Format | Standardizes REST API interactions | Normalizes diverse AI model APIs (e.g., OpenAI, Google, custom) into a unified format |
| Authentication/Authorization | API Keys, OAuth, JWT, RBAC | API Keys, OAuth, JWT, RBAC, plus subscription approval, AI-specific access tiers |
| Request/Response Transform | Basic header/body manipulation | Extensive, AI-specific transformation (e.g., prompt injection, PII masking, response summarization) |
| Caching | Standard HTTP caching | Context-aware caching for AI model responses (e.g., based on prompt hash) |
| Observability | General API metrics, access logs | Detailed AI model usage, cost tracking, prompt logs, performance per model |
| Security | General API security, rate limiting | AI-specific threat detection (e.g., prompt injection prevention), data governance for AI data |
| Cost Management | Primarily infrastructure cost, some API usage | Granular tracking of AI model usage costs, cost-aware routing for optimization |
| Unique AI Features | Limited or None | Prompt Management, Model Versioning, AI Guardrails, Fallback AI Models, Context Window Management |
| Vendor Lock-in | Can be mitigated by abstracting backend services | Explicitly designed to mitigate AI model vendor lock-in by providing a neutral abstraction layer |
| Example Products | Nginx, Kong Gateway, AWS API Gateway, Azure API Management | APIPark, Azure AI Hub, Vercel AI SDK (with some gateway features) |

This detailed operational breakdown illustrates how an AI Gateway meticulously controls, optimizes, and secures every facet of AI interaction, providing a robust foundation for scaling AI within any enterprise.

Use Cases and Practical Applications of an AI Gateway

The versatility of an AI Gateway makes it an invaluable asset across a wide spectrum of enterprise applications and strategic initiatives. By solving the complex challenges of integrating, managing, and securing AI models, it unlocks new possibilities and streamlines existing processes. Here are some compelling use cases and practical applications:

1. Enterprise-Wide AI Integration and Democratization

Many large organizations struggle with fragmented AI adoption. Different departments might use different AI models (e.g., marketing using one LLM for content, engineering using another for code completion, customer service using a third for chatbots). An AI Gateway provides a unified platform to onboard, manage, and expose all these diverse AI capabilities.

How it helps:

- Single Source of Truth: Developers across the enterprise can access a catalog of approved AI services through the gateway, each with standardized API documentation.

- Self-Service AI: Teams can discover and integrate AI models into their applications more easily, accelerating the internal democratization of AI.

- Consistent Policies: All AI usage across the organization adheres to centralized security, cost, and governance policies enforced by the gateway.

- Example: A global conglomerate uses an AI Gateway to expose various specialized AI models (e.g., translation, sentiment analysis, image recognition) as internal APIs. Developers in any business unit can then consume these services through a single gateway interface, without needing to know the specific providers or underlying complexities.

2. Multi-Model AI Applications and Intelligent Orchestration

Complex AI applications often require leveraging multiple AI models, sometimes from different providers, in a coordinated fashion. An AI Gateway excels at orchestrating these multi-model workflows.

How it helps:

- Intelligent Routing: The gateway can dynamically route parts of a complex request to different AI models based on the specific sub-task. For instance, a user query might first go to a summarization LLM, then its output to a sentiment analysis model, and finally its result to a knowledge retrieval system.

- Load Balancing and Fallback: If one model becomes overloaded or fails, the gateway can seamlessly switch to an alternative, ensuring the composite application remains responsive.

- Cost Optimization: The gateway can select the most cost-effective model for each sub-task in the workflow, optimizing overall expenditure.

- Example: A financial services platform uses an LLM Gateway to process customer queries. Simple FAQ questions are routed to a low-cost, fine-tuned LLM. More complex requests involving sensitive financial data are routed to a highly secure, private LLM instance. If the primary LLM is unavailable, the request fails over to a robust, enterprise-grade backup LLM. This multi-model approach ensures both cost-efficiency and resilience.

3. Cost-Controlled and Budget-Aware AI Deployments

Managing the operational costs of AI, particularly for usage-based LLMs, is a major concern for enterprises. An AI Gateway provides the tools to gain granular control over spending.

How it helps:

- Usage Tracking and Reporting: Detailed logs and dashboards provide clear visibility into which models are being used, by whom, and at what cost. APIPark’s powerful data analysis features are perfect for this, analyzing historical call data to display long-term trends and performance changes related to cost.

- Rate Limits and Quotas: Prevent accidental or intentional overspending by enforcing limits on API calls or token consumption per application or user.

- Cost-Aware Routing: Automatically select the cheapest AI model capable of fulfilling a request, minimizing expenditure for non-critical tasks.

- Budget Alerts: Proactively notify administrators when spending approaches predefined thresholds.

- Example: A marketing agency uses an AI Gateway to manage its content generation services. Different client projects are assigned specific budgets for LLM usage. The gateway enforces these budgets through quotas and automatically routes general content generation requests to more affordable LLMs, reserving premium, higher-cost models for critical, high-value content.

4. Secure AI Microservices and Data Governance

AI models often handle sensitive data, making security and compliance paramount. An AI Gateway acts as a critical enforcement point for data governance and security policies.

How it helps:

- Centralized Security Policy Enforcement: All AI interactions pass through the gateway, where authentication, authorization, and data encryption policies are uniformly applied.

- Data Masking and Anonymization: Automatically redact or mask sensitive information (e.g., PII, financial details) in requests before they reach the AI model and in responses before they are returned to client applications, ensuring compliance with privacy regulations like GDPR, HIPAA, or CCPA.

- Prompt Injection Prevention: Implement robust checks to filter out malicious or harmful prompts that could manipulate an LLM into generating undesirable content or revealing sensitive information.

- Audit Trails: Detailed logs provide an immutable record of all AI interactions, essential for compliance audits and forensic analysis. APIPark’s comprehensive logging capabilities are crucial here, recording every detail of each API call to ensure system stability and data security.

- Example: A healthcare provider implements an AI Gateway to manage access to its clinical decision support LLMs. The gateway ensures that all patient data is tokenized and anonymized before being sent to the LLM and that only authorized medical professionals can access specific models relevant to their roles, all while maintaining an auditable trail for HIPAA compliance.
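The masking step in this example can be sketched as reversible tokenization: sensitive values are replaced with placeholders before the request leaves the gateway, and the mapping stays inside the gateway so values can be restored for authorized clients. The regular expressions are hypothetical and deliberately simple (US-style SSN and phone formats only); real PII detection needs much broader coverage and often a named-entity recognition model.

```python
import re

# Hypothetical, deliberately simple patterns; production PII detection needs far
# broader coverage (names, addresses, locale-specific identifiers).
PII_PATTERNS = [
    ("EMAIL", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("PHONE", re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b")),
]


def mask_pii(text: str) -> tuple[str, dict[str, str]]:
    """Replace PII with numbered placeholders; return the mapping kept by the gateway."""
    mapping: dict[str, str] = {}
    for label, pattern in PII_PATTERNS:
        def substitute(match: re.Match) -> str:
            placeholder = f"[{label}_{len(mapping) + 1}]"
            mapping[placeholder] = match.group(0)
            return placeholder
        text = pattern.sub(substitute, text)
    return text, mapping


def unmask(text: str, mapping: dict[str, str]) -> str:
    """Restore original values in a response before it reaches an authorized client."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text
```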

5. AI Developer Portal for Internal and External Teams

An AI Gateway can form the backbone of a developer portal, simplifying the discovery and consumption of AI services for both internal teams and external partners.

How it helps:

- Centralized Catalog: Display all available AI services, their capabilities, documentation, and pricing in a single, accessible portal. APIPark specifically functions as an all-in-one AI gateway and API developer portal, allowing for the centralized display of all API services, making it easy for different departments and teams to find and use required services.

- Self-Service API Key Management: Developers can generate and manage their own API keys, subscribe to services, and monitor their usage.

- Unified API Experience: Regardless of the underlying AI provider, developers interact with a consistent API format and security model provided by the gateway.

- Controlled Access: Ensure that external partners only access approved AI models and adhere to specific usage policies.

- Example: A large software vendor offers an LLM Gateway through its developer portal, allowing its partner ecosystem to integrate AI-powered features into their applications. Partners get access to various LLMs for tasks like content generation, summarization, and translation, all managed and secured through the gateway, with transparent usage tracking and billing.

6. A/B Testing and Model Experimentation

The rapidly evolving nature of AI, especially LLMs, necessitates continuous experimentation with new models, versions, and prompt strategies. An AI Gateway facilitates this with ease.

How it helps:

- Traffic Splitting: Route a percentage of incoming requests to a new model or prompt variation while the majority goes to the stable production version.

- Performance Comparison: Collect metrics (latency, accuracy, cost) for each variant to objectively compare their performance and inform deployment decisions.

- Seamless Rollbacks: If an experimental model performs poorly, traffic can be instantly routed back to the stable version without downtime.

- Example: An e-commerce company uses an AI Gateway to A/B test different product description generation LLMs. 10% of traffic for new product descriptions is routed to a new, experimental LLM, while 90% goes to the current production model. The gateway collects metrics on generation quality, speed, and cost, allowing the product team to make data-driven decisions on which model to deploy next.
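A 10/90 traffic split like the one in this example is often done by hashing a stable identifier, so the same user or product always lands on the same variant and results stay comparable. A minimal sketch, assuming a hypothetical experiment configuration and model names:

```python
import hashlib

# Hypothetical experiment: 10% of traffic goes to the candidate model.
EXPERIMENT = {
    "name": "product-desc-v2",
    "candidate": "desc-model-new",
    "control": "desc-model-prod",
    "candidate_percent": 10,
}


def assign_variant(user_id: str, experiment: dict = EXPERIMENT) -> str:
    """Deterministically bucket an identifier so it sees the same model on every request."""
    # Including the experiment name reshuffles buckets for each new experiment.
    digest = hashlib.sha256(f"{experiment['name']}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # 0..99, roughly uniform
    if bucket < experiment["candidate_percent"]:
        return experiment["candidate"]
    return experiment["control"]
```

Rolling back is then a configuration change: setting `candidate_percent` to 0 sends all traffic back to the production model without redeploying anything.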

By supporting these diverse use cases, an AI Gateway transforms AI from a complex, niche technology into a core, manageable, and highly impactful component of enterprise operations, truly empowering businesses to innovate at scale.

Choosing the Right AI Gateway: Key Considerations

Selecting the appropriate AI Gateway is a critical decision that can profoundly impact an organization's AI strategy, operational efficiency, and long-term scalability. Given the evolving nature of AI and the diversity of solutions available, a thorough evaluation is essential. Here are the key considerations to guide the selection process:

1. Scalability and Performance

The gateway must be able to handle your current and projected AI traffic volumes without becoming a bottleneck.

- High Throughput: Can it process thousands or tens of thousands of requests per second (TPS) with low latency? APIPark, for example, reports performance rivaling Nginx, with over 20,000 TPS on modest hardware, which indicates capacity for large-scale traffic.

- Elastic Scalability: Does it support horizontal scaling, allowing you to easily add more instances as demand grows?

- Low Latency: How much overhead does the gateway introduce to each AI request? For real-time applications, every millisecond counts.

- Resilience: Does it offer features like automatic failover, circuit breaking, and retry mechanisms to maintain availability even when backend AI models or services experience issues?

2. Robust Security Features

Given that AI models often handle sensitive data, security is paramount. The gateway must be a strong enforcement point.

- Comprehensive Authentication & Authorization: Look for support for industry standards like OAuth2, OpenID Connect, JWTs, and granular Role-Based Access Control (RBAC). The ability to manage API keys effectively and implement subscription approval workflows (like APIPark offers) is also critical.

- Data Governance & Masking: Can it automatically detect and redact/mask sensitive data (PII, financial info) in requests and responses to ensure compliance with regulations (GDPR, HIPAA)?

- Threat Protection: Does it offer features to detect and mitigate common attacks, such as prompt injection (for LLMs), DoS attacks, or API misuse?

- Audit Trails: Detailed, immutable logging for compliance and forensic analysis is essential.

3. Compatibility and Integrations

The chosen gateway must seamlessly fit into your existing and future AI ecosystem.

- Support for Diverse AI Models: Can it integrate with all the AI models you currently use (e.g., OpenAI, Google, Anthropic, Hugging Face, custom internal models)? Does it support various model types (LLMs, computer vision, speech-to-text)? APIPark, for example, emphasizes its quick integration of 100+ AI models.

- Unified API Format: Does it provide a standardized way to interact with different AI models, abstracting their unique APIs? This is a core strength of dedicated AI gateways, including APIPark.

- Ecosystem Integration: Can it integrate with your existing monitoring tools (Prometheus, Grafana), logging systems (ELK stack), identity providers, and CI/CD pipelines?

- Protocol Support: Beyond HTTP/REST, does it support streaming protocols (e.g., Server-Sent Events for real-time LLM outputs)?

4. Ease of Deployment and Management

The operational overhead of the gateway itself should be minimal.

- Deployment Flexibility: Can it be deployed in your preferred environment (cloud, on-premise, Kubernetes)? APIPark highlights its quick deployment with a single command line, making it easy to get started.

- Intuitive UI/UX: Is the management console user-friendly for configuring routes, policies, and monitoring?

- Developer Experience: How easy is it for developers to discover, consume, and integrate with AI services exposed via the gateway? A good developer portal (like APIPark's) is a huge plus.

- API Lifecycle Management: Does it assist with the entire lifecycle of APIs, from design to decommissioning, regulating management processes and versioning, as APIPark does?

5. Cost Management Capabilities

Given the often-usage-based pricing of AI models, robust cost control is vital.

- Granular Cost Tracking: Can it track AI costs per user, application, model, or department?

- Cost-Aware Routing: Does it support intelligent routing rules that prioritize cost-effectiveness (e.g., routing to cheaper models for specific tasks)?

- Budget Alerts & Quotas: Can you set up alerts for budget overruns and enforce usage quotas?

- Caching Effectiveness: How effectively does its caching mechanism reduce calls to expensive backend AI models?

6. Observability and Analytics

Deep insights into AI usage and performance are crucial for optimization and troubleshooting.

- Detailed Logging: Does it capture comprehensive logs for every AI call, including inputs, outputs, latency, and chosen model? APIPark’s detailed API call logging is a strong feature here.

- Real-time Monitoring: Provide dashboards and alerts for key metrics like request rates, error rates, and latency across all AI services.

- Powerful Data Analysis: Can it analyze historical data to identify trends, performance changes, and potential issues before they arise, as APIPark’s data analysis feature does?

- Distributed Tracing: Support for tracing requests across the entire microservices architecture.

7. Prompt Engineering & LLM-Specific Features

For organizations heavily reliant on Large Language Models, these features are non-negotiable.

- Centralized Prompt Management: Ability to create, store, version, and dynamically inject prompts.

- Prompt Encapsulation: The capability to combine AI models with custom prompts to create new, specialized APIs (a key feature of APIPark).

- Guardrails: Features for content moderation and preventing prompt injection attacks.

- A/B Testing for Prompts/Models: Easy mechanisms to test different prompt strategies or AI model versions.

8. Open Source vs. Commercial Solutions

This decision often boils down to budget, internal expertise, and specific feature requirements.

- Open Source (e.g., APIPark): Offers flexibility, transparency, community support, and no license fees. Ideal for organizations with strong internal technical teams willing to contribute or customize. APIPark is an open-source AI gateway under Apache 2.0, providing excellent core features and community engagement.

- Commercial (e.g., APIPark's Commercial Version, Cloud Provider Offerings): Often provides more out-of-the-box features, professional support, enterprise-grade SLAs, and reduced operational burden. APIPark offers a commercial version with advanced features and professional technical support for leading enterprises.

9. Vendor Support and Community

Reliable support is crucial for production systems.

- Documentation: Comprehensive and up-to-date documentation.

- Community: An active community can provide valuable peer support and contribute to feature development (especially for open-source solutions).

- Professional Support: For commercial products, evaluate the quality of technical support, SLAs, and responsiveness.

By meticulously evaluating potential AI Gateways against these critical considerations, organizations can make an informed decision that aligns with their strategic objectives, technical capabilities, and budgetary constraints, ensuring a robust and future-proof AI infrastructure.

The Future of AI Gateways: Evolving with Intelligence

The rapid evolution of Artificial Intelligence ensures that the AI Gateway, as a critical enabler, will also continue to evolve, becoming increasingly sophisticated and integral to the enterprise AI landscape. As AI models become more powerful, diverse, and embedded in every facet of business, the demands on the gateway will escalate, driving innovations that push its capabilities beyond mere traffic management.

One significant trend points towards hyper-intelligent routing and orchestration. Current AI Gateways offer smart routing based on cost, latency, or capability. The next generation will likely incorporate even more advanced decision-making, potentially leveraging AI itself to optimize routes. This could involve real-time learning from usage patterns, predictive analytics to anticipate model load or failures, and dynamic adjustment of prompts based on initial model responses. Imagine a gateway that not only chooses the best LLM but also automatically rewrites a prompt in a more effective way if the first attempt yields a suboptimal response, without any intervention from the client application.

The concept of autonomous AI agents operating through the gateway will also become more prevalent. As enterprises deploy multi-agent systems where different AI agents collaborate to achieve complex goals, the AI Gateway will serve as the central nervous system, orchestrating communication, managing access to specific models for each agent, and ensuring adherence to enterprise policies. This will require the gateway to understand more about the "intent" of the requests and the "persona" of the calling agent, moving beyond simple API calls to more nuanced, context-aware interactions.
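At its simplest, per-agent access control is a policy lookup keyed on the calling agent. The sketch below is purely illustrative: the agent IDs, personas, model names, and intent labels are hypothetical, and a real gateway would load them from configuration and verify the agent's identity (for example via its API key) before consulting the table.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class AgentPolicy:
    persona: str                     # descriptive label for the calling agent
    allowed_models: FrozenSet[str]   # models this agent may invoke
    allowed_intents: FrozenSet[str]  # declared purposes the agent may act on


# Hypothetical policy table; a real gateway would load this from configuration.
POLICIES: Dict[str, AgentPolicy] = {
    "support-agent": AgentPolicy("customer support", frozenset({"small-model"}), frozenset({"answer_ticket"})),
    "research-agent": AgentPolicy(
        "analyst", frozenset({"small-model", "large-model"}), frozenset({"summarize", "analyze"})
    ),
}


def authorize(agent_id: str, model: str, intent: str) -> bool:
    """Allow a call only if the agent is known and both the model and the declared intent are permitted."""
    policy = POLICIES.get(agent_id)
    if policy is None:
        return False
    return model in policy.allowed_models and intent in policy.allowed_intents
```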

Enhanced security and governance for AI-generated content will be another critical area of development. With the proliferation of generative AI, the risks of bias, misinformation, and the generation of inappropriate or harmful content are growing. Future AI Gateways will integrate advanced content moderation, bias detection, and ethical AI guardrails directly into their processing pipelines. They will not only monitor prompts for injection attacks but also actively analyze and potentially filter or rewrite AI outputs to ensure they align with brand values, regulatory requirements, and ethical guidelines, potentially using secondary AI models for this validation.
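A crude version of such an output guardrail can be sketched with pattern rules plus a pluggable classifier hook. In this sketch the regular-expression rules (including the fictional competitor name `AcmeCorp`), the fallback message, and the `classifier` callable, which stands in for the secondary validation model the paragraph anticipates, are all assumptions; keyword rules alone are far too coarse for real bias or harm detection.

```python
import re
from typing import Callable, List, Optional, Tuple

# Hypothetical brand and compliance rules; "AcmeCorp" is a fictional competitor name.
BLOCKED_PATTERNS = {
    "competitor_mention": re.compile(r"\bAcmeCorp\b", re.IGNORECASE),
    "unverified_guarantee": re.compile(r"\bguaranteed (?:returns|results)\b", re.IGNORECASE),
}
FALLBACK_MESSAGE = "The generated response was withheld by content policy."


def moderate_output(
    text: str,
    classifier: Optional[Callable[[str], bool]] = None,  # stand-in for a secondary moderation model
) -> Tuple[str, List[str]]:
    """Return the text to send to the client and the names of any rules it violated."""
    violations = [name for name, pattern in BLOCKED_PATTERNS.items() if pattern.search(text)]
    if classifier is not None and classifier(text):
        violations.append("classifier_flagged")
    return (FALLBACK_MESSAGE if violations else text), violations
```

This version withholds the whole response when any rule fires; rewriting only the offending span, as the paragraph envisions, is the harder variant.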

Furthermore, we will see a deeper integration of AI Gateways with broader enterprise data fabrics and knowledge graphs. For AI models to be truly effective, they need access to up-to-date, relevant, and secure enterprise data. The gateway will become more adept at fetching and contextualizing data from various internal systems before forwarding it to AI models, and similarly, enriching AI outputs with enterprise data before returning them to applications. This will transform the gateway into a "data-aware" entity, providing a richer, more powerful context for AI interactions.
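The data-aware pattern described here, enriching the prompt on the way in and the response on the way out, might look like the following minimal sketch. The `lookup` function (standing in for a CRM or data-fabric query), the `call_model` callable, and the record fields are hypothetical; a production gateway would also need to control which fields a given caller is allowed to expose to the model.

```python
from typing import Callable, Dict


def build_contextual_prompt(prompt: str, record: Dict[str, object]) -> str:
    """Prepend enterprise facts so the model answers from current data."""
    facts = "\n".join(f"- {key}: {value}" for key, value in sorted(record.items()))
    return f"Known customer facts:\n{facts}\n\nUser question:\n{prompt}"


def handle_with_context(
    prompt: str,
    customer_id: str,
    lookup: Callable[[str], Dict[str, object]],  # hypothetical CRM / data-fabric query
    call_model: Callable[[str], str],             # hypothetical upstream model call
) -> Dict[str, object]:
    record = lookup(customer_id)
    answer = call_model(build_contextual_prompt(prompt, record))
    # Enrich the model output with enterprise metadata before returning it to the application.
    return {"answer": answer, "customer_id": customer_id, "account_tier": record.get("tier", "unknown")}
```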

The drive towards standardization and interoperability will also accelerate. As the AI ecosystem matures, there will be a greater push for common API standards and protocols specifically designed for AI and LLM interaction. AI Gateways will play a pivotal role in facilitating this by providing adapters and translators that bridge the gap between proprietary AI interfaces and emerging open standards, further reducing vendor lock-in and promoting a more fluid AI marketplace.
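Adapters of this kind are typically small translation functions from one internal request shape to each provider's wire format. The sketch below assumes a hypothetical gateway-wide `UnifiedRequest` and maps it to simplified request bodies modeled on OpenAI's Chat Completions API (system prompt sent as the first message) and Anthropic's Messages API (top-level `system` field, required `max_tokens`). Only a few fields are covered, so the providers' current API references remain the authority on exact shapes.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class UnifiedRequest:
    """Hypothetical provider-neutral request used inside the gateway."""
    model: str
    system: str
    messages: List[Dict[str, str]] = field(default_factory=list)  # [{"role": "user" | "assistant", "content": ...}]
    max_tokens: int = 512


def to_openai_chat(req: UnifiedRequest) -> dict:
    # Chat Completions style: the system prompt is sent as the first message.
    # The output-length cap is optional there and omitted here for brevity.
    return {"model": req.model, "messages": [{"role": "system", "content": req.system}, *req.messages]}


def to_anthropic_messages(req: UnifiedRequest) -> dict:
    # Messages API style: "system" is a top-level field and "max_tokens" is required.
    return {"model": req.model, "system": req.system, "max_tokens": req.max_tokens, "messages": list(req.messages)}


ADAPTERS: Dict[str, Callable[[UnifiedRequest], dict]] = {
    "openai": to_openai_chat,
    "anthropic": to_anthropic_messages,
}


def translate(req: UnifiedRequest, provider: str) -> dict:
    """Convert the unified request into the body expected by the chosen provider."""
    adapter = ADAPTERS.get(provider)
    if adapter is None:
        raise ValueError(f"no adapter registered for provider {provider!r}")
    return adapter(req)
```

Supporting a new provider or an emerging open standard then means registering one more adapter, while client applications keep sending the same unified request.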

Finally, the "AI Gateway as a Service" model will likely gain even more traction. While self-hosted and open-source solutions like APIPark will continue to serve a vital role, especially for organizations seeking maximum control and customization, cloud providers and specialized vendors will offer fully managed AI Gateway services. These will abstract away the infrastructure management, allowing enterprises to focus purely on leveraging AI without worrying about the underlying operational complexities, further democratizing access to sophisticated AI management capabilities.

In essence, the AI Gateway is poised to evolve from a sophisticated traffic controller into an intelligent, proactive orchestrator of enterprise AI. It will become an indispensable brain for managing AI interactions, ensuring that organizations can confidently navigate the complexities of AI adoption, harness its full potential securely, efficiently, and ethically, and accelerate their journey towards becoming truly AI-driven enterprises.

Conclusion

The ascent of Artificial Intelligence, particularly the transformative power of Large Language Models, has inaugurated a new era of innovation and operational efficiency for enterprises worldwide. Yet, this promise of unparalleled capability is invariably accompanied by significant challenges: the daunting complexity of integrating diverse AI models, the imperative for robust security, the critical need for cost management, and the constant demand for scalability and resilience. It is within this intricate landscape that the AI Gateway emerges not merely as a technical convenience, but as an indispensable architectural cornerstone for any organization serious about harnessing AI effectively and responsibly.

As we have thoroughly explored, an AI Gateway is far more than a conventional API Gateway. It is a specialized, intelligent intermediary meticulously designed to abstract away the inherent complexities of interacting with disparate AI services. By offering a unified API endpoint, it dramatically simplifies integration, allowing developers to focus on core application logic rather than wrestling with myriad model-specific interfaces. Its sophisticated features, ranging from intelligent routing and comprehensive cost management to robust authentication, dynamic prompt management, and extensive observability, collectively address the unique operational challenges presented by AI workloads. Solutions like APIPark exemplify this holistic approach, offering powerful capabilities for integrating over 100 AI models, standardizing API formats, encapsulating prompts, and providing end-to-end API lifecycle management, thereby accelerating AI adoption and reducing operational overhead.

The benefits derived from implementing an AI Gateway are profound and far-reaching. Organizations can expect significantly simplified integration, bolstered security, optimized performance, and substantial cost reductions. Furthermore, an AI Gateway fosters improved scalability, ensures better governance and compliance, and accelerates the pace of innovation and experimentation, crucially mitigating vendor lock-in in a rapidly evolving AI ecosystem. Through its core mechanics, from intelligent request interception, rigorous policy enforcement, and dynamic transformations to smart routing, comprehensive logging, and strategic caching, the AI Gateway orchestrates every AI interaction with precision and foresight.

Looking ahead, the AI Gateway will continue its evolution, becoming an even more intelligent, autonomous, and integrated component within enterprise architectures. It will move beyond simple management to become a proactive orchestrator, capable of self-optimization, advanced threat detection, and deeper integration with enterprise data. For businesses navigating the complexities of the AI revolution, embracing an AI Gateway is not just a technological choice; it is a strategic imperative. It provides the essential infrastructure to unlock the full potential of AI, ensuring that innovation is not just possible, but also secure, scalable, and economically viable. By leveraging the power of an AI Gateway, enterprises can confidently build the intelligent, adaptive systems that will define success in the decades to come.

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between an AI Gateway and a traditional API Gateway?

While both serve as intermediaries for API traffic, an AI Gateway is specifically designed with AI/ML workloads in mind, especially for Large Language Models (LLMs). A traditional API Gateway primarily focuses on general RESTful service management, including routing, authentication, and rate limiting. An AI Gateway extends these capabilities with AI-specific features like intelligent routing based on model cost or capability, prompt management and versioning, AI-specific data masking, robust cost tracking for usage-based AI models, and specialized guardrails for generative AI. It aims to abstract the complexities of diverse AI models into a unified, consistent interface.

2. Why do I need an AI Gateway if I only use one LLM provider like OpenAI?

Even with a single LLM provider, an AI Gateway offers substantial benefits. It provides a centralized point for managing your API keys, enforcing rate limits, and monitoring usage, helping to prevent unauthorized access and control costs. Crucially, it allows for prompt management and versioning, enabling you to maintain and iterate on your prompts independently of your application code. Furthermore, it prepares your architecture for future flexibility; if you later decide to integrate another LLM provider or an open-source model, the AI Gateway provides the abstraction layer to do so with minimal disruption to your existing applications, reducing the risk of vendor lock-in.
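Prompt versioning can be as simple as a registry keyed by name and version. This is a minimal in-memory sketch using Python's standard `string.Template`; the `PromptRegistry` class and its methods are hypothetical and are not APIPark's or any other product's API. The application sends a prompt name (and optionally a pinned version) plus variables, so the prompt text can change without redeploying application code.

```python
from string import Template
from typing import Dict, Optional


class PromptRegistry:
    """Stores prompt templates by name and version, so applications reference prompts instead of raw text."""

    def __init__(self) -> None:
        self._versions: Dict[str, Dict[int, Template]] = {}

    def publish(self, name: str, text: str) -> int:
        """Register a new version of a prompt and return its version number (1, 2, ...)."""
        versions = self._versions.setdefault(name, {})
        version = len(versions) + 1
        versions[version] = Template(text)
        return version

    def render(self, name: str, variables: Dict[str, str], version: Optional[int] = None) -> str:
        """Fill a prompt template; defaults to the latest version unless one is pinned."""
        versions = self._versions[name]
        chosen = max(versions) if version is None else version
        return versions[chosen].substitute(variables)
```

Pinning `version=1` lets one application keep the old behavior while others pick up the latest template.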

3. How does an AI Gateway help with cost management for LLMs?

AI Gateways offer several powerful features for cost control. They provide granular usage tracking, allowing you to monitor consumption by user, application, or specific model, giving clear insights into spending. They enable cost-aware routing, where requests can be intelligently directed to the most cost-effective AI model capable of performing a given task (e.g., using a cheaper model for simple queries). By implementing rate limits and quotas, they prevent accidental or malicious overspending. Additionally, caching frequently requested AI responses reduces the number of calls to expensive backend models, further contributing to cost savings.
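Several of these controls can live in one component. The following minimal sketch, with hypothetical model names, prices, and budgets and a hypothetical `call_model` callable that reports the tokens it used, meters spend per consumer and per model, rejects requests once a consumer's budget is exhausted, and serves exact-repeat requests from a cache without a new upstream call. Cost-aware routing is left out (a routing step would pick the cheapest suitable model before calling `complete`), as are persistent storage, cache expiry, and sampling parameters that can make identical prompts produce different answers.

```python
import hashlib
from collections import defaultdict
from typing import Callable, Dict, Tuple


class CostController:
    """Tracks token spend per consumer and model, enforces budgets, and caches identical requests."""

    def __init__(self, price_per_1k_tokens: Dict[str, float], budget_usd: Dict[str, float]) -> None:
        self.prices = price_per_1k_tokens   # assumed USD price per 1,000 tokens, per model
        self.budgets = budget_usd           # assumed spending limit per consumer
        self.spend: Dict[str, float] = defaultdict(float)
        self.tokens: Dict[Tuple[str, str], int] = defaultdict(int)  # (consumer, model) -> tokens used
        self.cache: Dict[str, str] = {}
        self.upstream_calls = 0

    @staticmethod
    def _cache_key(model: str, prompt: str) -> str:
        return hashlib.sha256(f"{model}\n{prompt}".encode("utf-8")).hexdigest()

    def complete(
        self,
        consumer: str,
        model: str,
        prompt: str,
        call_model: Callable[[str, str], Tuple[str, int]],  # hypothetical: returns (text, tokens used)
    ) -> str:
        key = self._cache_key(model, prompt)
        if key in self.cache:
            return self.cache[key]  # cache hit: no upstream call and no new spend
        # Unknown consumers get a zero budget. The check happens before the call,
        # so a single request can push spend slightly past the limit.
        if self.spend[consumer] >= self.budgets.get(consumer, 0.0):
            raise PermissionError(f"budget exhausted for {consumer!r}")
        text, used = call_model(model, prompt)
        self.upstream_calls += 1
        self.tokens[(consumer, model)] += used
        self.spend[consumer] += used / 1000 * self.prices[model]
        self.cache[key] = text
        return text
```

Because the cache is shared across consumers, a cached answer is not charged to whoever requests it second; whether that is desirable depends on how costs are allocated internally.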

4. Can an AI Gateway help with AI security and data privacy compliance?

Absolutely. Security is one of the most important functions of an AI Gateway. It acts as a central enforcement point for authentication and authorization, ensuring only legitimate users and applications can access AI models. It can implement data masking or redaction to automatically remove or obfuscate sensitive information (like PII) from requests before they reach the AI model and from responses before they return to the client, which is vital for compliance with regulations like GDPR or HIPAA. Furthermore, advanced AI Gateways offer prompt injection prevention and content moderation capabilities to safeguard against malicious inputs and ensure AI-generated outputs are safe and appropriate, while detailed logging provides an audit trail of which user or application called which model, when, and with what outcome.
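The masking step can be illustrated with a few regular expressions applied in both directions: to the prompt before it leaves for the model, and to the response before it reaches the client. This is a deliberately simplified sketch; the patterns and placeholder labels are assumptions, and regex matching alone will miss names, addresses, and many other identifiers, so it is no substitute for a dedicated PII-detection service or a compliance review.

```python
import re
from typing import Dict, Tuple

# Deliberately simplified patterns for illustration; real PII detection needs far broader coverage.
PII_PATTERNS = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),       # US Social Security number format
    ("CARD", re.compile(r"\b\d(?:[ -]?\d){12,15}\b")),   # 13-16 digit card-like numbers
]


def redact(text: str) -> Tuple[str, Dict[str, int]]:
    """Replace each match with a [LABEL] placeholder and count what was masked."""
    counts: Dict[str, int] = {}
    for label, pattern in PII_PATTERNS:
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts
```

The counts can be written to the gateway's audit log without recording the sensitive values themselves.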

5. Is an AI Gateway suitable for both cloud-based and on-premise AI models?

Yes, a well-designed AI Gateway is agnostic to where your AI models are hosted. Its primary role is to provide a unified abstraction layer. Whether your AI models are deployed on public cloud services (like AWS, Azure, Google Cloud), private cloud infrastructure, or on-premise servers, the AI Gateway can integrate with them. It essentially acts as a smart proxy, forwarding requests to the appropriate backend endpoint regardless of its physical location, as long as it has network connectivity and the necessary credentials. This flexibility makes it an ideal solution for hybrid or multi-cloud AI strategies.
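From the gateway's point of view, hosting location reduces to a routing-table entry. In the minimal sketch below, the model aliases, the private address `10.0.0.12`, and the convention of reading credentials from environment variables are assumptions for illustration; only the OpenAI base URL is a real public endpoint. Client applications use the same alias whether the backend runs in a public cloud or in a local data center.

```python
import os
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Backend:
    base_url: str
    location: str
    api_key_env: Optional[str] = None  # env var holding the credential; None for trusted internal endpoints


# Hypothetical routing table: one alias per model, wherever it is hosted.
BACKENDS: Dict[str, Backend] = {
    "gpt-cloud": Backend("https://api.openai.com/v1", "public cloud", "OPENAI_API_KEY"),
    "llama-onprem": Backend("http://10.0.0.12:8000/v1", "on-premise"),
}


def resolve(alias: str) -> Tuple[str, Dict[str, str]]:
    """Return the upstream base URL and auth headers for a model alias."""
    backend = BACKENDS[alias]
    headers: Dict[str, str] = {}
    if backend.api_key_env is not None:
        key = os.environ.get(backend.api_key_env)
        if key is None:
            raise RuntimeError(f"credential {backend.api_key_env} is not configured")
        headers["Authorization"] = f"Bearer {key}"
    return backend.base_url, headers
```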

🚀 You can securely and efficiently call the OpenAI API on APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in 5 minutes.

APIPark is built with Go (Golang), offering strong performance and low development and maintenance costs. You can deploy APIPark with a single command:

curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh

In my experience, the deployment-success screen appears within 5 to 10 minutes, after which you can log in to APIPark with your account.

Step 2: Call the OpenAI API.