Optimizing Product Lifecycle Management for LLM Software

The advent of Large Language Models (LLMs) has heralded a transformative era in software development, injecting unprecedented capabilities into applications ranging from customer service bots and sophisticated content generation tools to advanced code assistants and intricate data analysis platforms. These powerful neural networks, trained on colossal datasets, are not merely components within traditional software; they are often the core intelligence, driving a paradigm shift in how products are conceived, built, deployed, and maintained. However, this profound impact comes with an equally profound set of challenges, particularly when it comes to managing the entire product lifecycle. Unlike conventional software, LLM-powered applications contend with unique complexities stemming from their data-centric nature, emergent behaviors, continuous learning requirements, and significant operational overheads. The traditional Product Lifecycle Management (PLM) frameworks, while foundational, often fall short in addressing the idiosyncratic demands of LLM software, necessitating a bespoke approach that integrates AI-specific methodologies with established best practices.

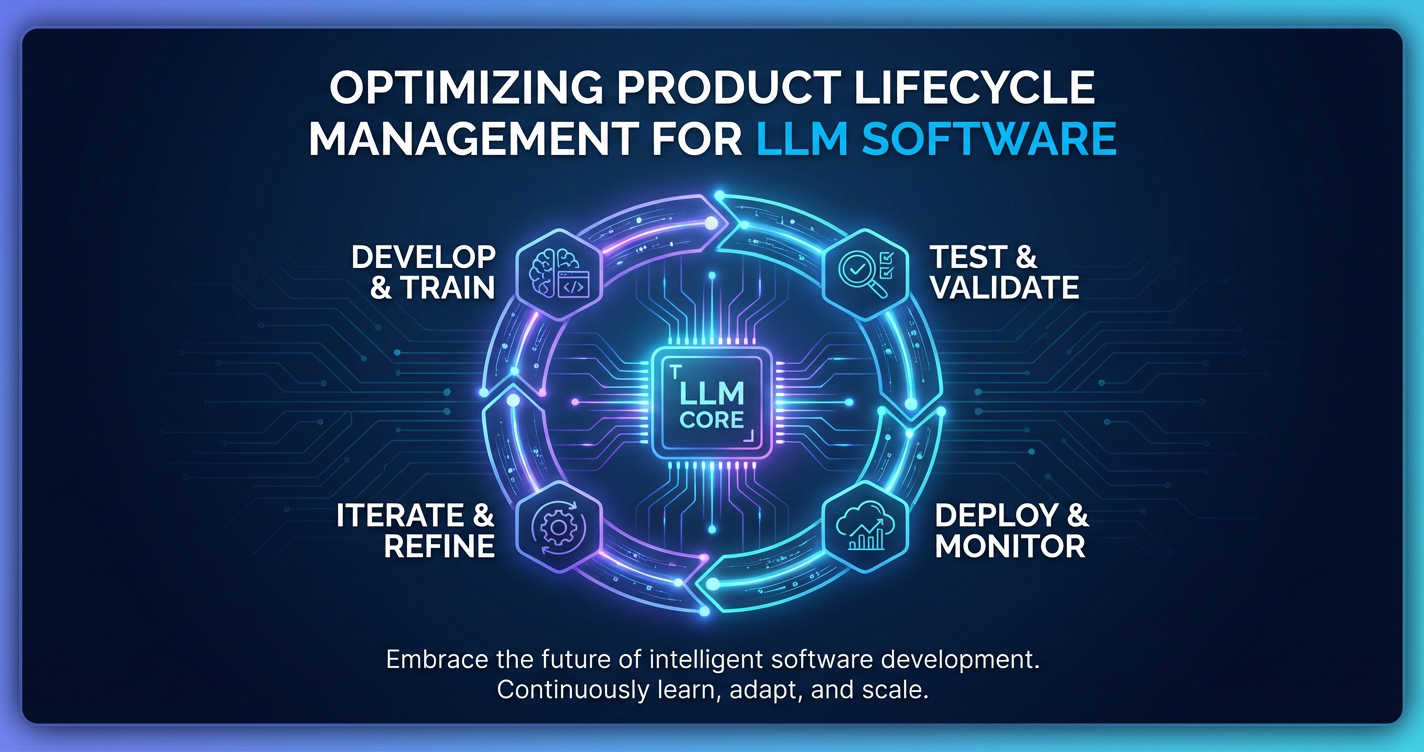

Optimizing Product Lifecycle Management for LLM software is no longer a luxury but a critical imperative for enterprises striving to harness the full potential of this technology. An inefficient PLM process can lead to spiraling costs, compromised model performance, security vulnerabilities, regulatory non-compliance, and ultimately, a failure to deliver tangible business value. From the initial spark of an idea, through the intricate stages of data curation, model training, and prompt engineering, to the complex realities of deployment, continuous monitoring, and iterative refinement, each phase demands meticulous attention and specialized tools. This comprehensive guide will delve deep into the multifaceted landscape of PLM for LLM software, dissecting the unique challenges and presenting strategic frameworks and actionable insights. We will explore how dedicated infrastructures, such as an LLM Gateway and an AI Gateway, become indispensable for robust deployment and management, and how stringent API Governance is paramount for securing, scaling, and evolving these sophisticated services. By adopting a holistic and adaptive PLM strategy, organizations can not only mitigate risks but also unlock innovation, accelerate time-to-market, and ensure the sustained success of their LLM-driven products in an increasingly competitive digital ecosystem.

1. The Unique Landscape of LLM Software Development

The development of Large Language Model (LLM) software diverges significantly from traditional software engineering paradigms, presenting a unique set of challenges and opportunities that necessitate a re-evaluation of conventional Product Lifecycle Management (PLM) strategies. Understanding these distinctions is fundamental to crafting an effective and optimized PLM approach.

At its core, traditional software development often follows a deterministic path, where inputs lead to predictable outputs based on meticulously coded logic. Errors are typically attributable to bugs in the code or flaws in the explicit design. LLM software, however, operates within a probabilistic realm. While governed by underlying algorithmic principles, the specific outputs generated by an LLM are influenced by a vast number of parameters, the nuances of its training data, and the context of the input prompt. This inherent non-determinism introduces a layer of complexity that can make debugging, performance prediction, and quality assurance far more intricate. The 'product' in LLM software is multifaceted; it's not just the application wrapper, but fundamentally the model itself, its carefully curated training data, the prompt engineering strategies employed, and the infrastructure that enables its real-time invocation.

One of the most salient distinctions lies in the data-centric nature of LLM development. Unlike traditional software where data is often an input to a pre-defined logic, in LLMs, data is the logic. The quality, diversity, and ethical sourcing of training data directly dictate the model's capabilities, biases, and performance. This necessitates robust data governance throughout the entire lifecycle, from collection and labeling to storage and versioning. Changes in data can have profound, sometimes unforeseen, impacts on model behavior, requiring continuous monitoring and validation. Furthermore, LLMs exhibit emergent properties, meaning they can perform tasks or demonstrate capabilities that were not explicitly programmed or even anticipated during their training. While this can lead to groundbreaking applications, it also poses challenges for predictable behavior, safety, and thorough testing. Ensuring that these emergent behaviors align with product goals and ethical guidelines requires sophisticated evaluation frameworks that go beyond unit tests.

Moreover, the field of LLMs is characterized by rapid evolution. New models, architectures, and fine-tuning techniques emerge with breathtaking speed, often rendering existing solutions obsolete or significantly less efficient within months. This fast-paced innovation cycle demands an agile PLM that can readily incorporate new technologies, facilitate model upgrades, and adapt product strategies to leverage the latest advancements. The ethical dimension also weighs heavily; LLMs can perpetuate biases present in their training data, generate misleading or harmful content, and raise significant privacy concerns. Integrating ethical AI principles and robust responsible design practices into every stage of the PLM is not merely a compliance issue but a fundamental requirement for building trustworthy and sustainable LLM products. This includes considerations around fairness, transparency, accountability, and user safety, requiring cross-functional collaboration and specialized expertise from the outset.

The operational landscape for LLMs is also distinct. Deploying and managing these large, resource-intensive models in production environments introduces challenges related to scalability, latency, cost optimization, and real-time monitoring. Unlike a static software binary, LLMs often require dynamic inference infrastructure, sophisticated prompt routing, and mechanisms for continuous improvement through feedback loops. The very interaction with an LLM, often through an API, necessitates robust API Governance to ensure security, versioning, performance, and clear documentation. This complex interplay of model, data, infrastructure, and ethical considerations defines the unique landscape of LLM software development, compelling organizations to adopt a more adaptive, data-driven, and AI-centric approach to Product Lifecycle Management.

2. Phase 1 - Ideation and Design for LLM Products

The foundational phase of Ideation and Design for LLM products is where the strategic direction is set, and the groundwork is laid for a successful venture. This stage is critically important because decisions made here ripple throughout the entire product lifecycle, influencing everything from technical architecture to ethical implications and market adoption. It moves beyond simply recognizing the capabilities of LLMs to meticulously defining how these capabilities can address specific market needs and create distinct value.

2.1. Market Research & User Needs: Identifying Specific Problems LLMs Can Solve

The journey begins with comprehensive market research to identify genuine pain points and unmet needs that LLMs are uniquely positioned to address. This isn't about shoehorning an LLM into an existing problem; it's about discerning where their generative, analytical, and conversational powers can deliver transformative solutions. For instance, rather than simply stating "we'll use an LLM for customer support," detailed research might reveal that a significant bottleneck lies in summarizing lengthy customer interaction transcripts or generating personalized follow-up emails, tasks where LLMs can excel. This involves delving into user interviews, competitive analysis, trend spotting, and understanding regulatory landscapes. What are users struggling with? Where are existing solutions falling short? How can an LLM provide an intuitive, scalable, or more efficient alternative? The focus must be on tangible, measurable problems rather than abstract AI potential, ensuring that the eventual product solves real-world issues for a defined target audience.

2.2. Use Case Definition: From General LLM Capabilities to Specific Applications

Once market needs are understood, the next step is to translate general LLM capabilities (e.g., text generation, summarization, translation, code generation, sentiment analysis) into concrete, well-defined use cases. This involves articulating what the LLM-powered product will do and how it will do it to solve the identified problem. A general capability like "text generation" can become specific applications such as "automated marketing copy generation for e-commerce product descriptions," "real-time meeting minute summarization," or "personalized learning content creation for educational platforms." Each use case must have clear objectives, defined inputs, expected outputs, and a measurable impact. This stage requires rigorous brainstorming, prototyping with existing models (even smaller ones), and simulating user interactions to validate the feasibility and desirability of each potential application. It also involves considering the constraints, such as real-time performance requirements, potential for hallucinations, and the need for human oversight.

2.3. Model Selection & Architecture Design: Choosing Base Models, Fine-tuning Strategies, RAG Implementation

With specific use cases in mind, technical design commences. This involves critical decisions about the underlying LLM architecture. Should a proprietary model (e.g., GPT-4, Claude) be leveraged via an API, or is a fine-tuned open-source model (e.g., Llama 2, Mistral) a better fit for cost, privacy, or customization needs? The choice often hinges on factors like performance requirements, budget, data sensitivity, and the level of control desired over the model. If an open-source model is chosen, a fine-tuning strategy (e.g., full fine-tuning, LoRA, QLoRA) must be devised, considering the availability and quality of domain-specific data. Furthermore, the architecture must account for Retrieval Augmented Generation (RAG) if the LLM needs to access proprietary, up-to-date, or factual information not included in its original training data. This involves designing the retrieval system, including vector databases, indexing strategies, and document chunking, to ensure relevant context is efficiently provided to the LLM. The overall system architecture will also consider how the LLM will integrate with existing enterprise systems, databases, and user interfaces, often necessitating a robust API layer managed by an AI Gateway or LLM Gateway for seamless interaction and control.
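The retrieval side of a RAG design (chunking, indexing, and context assembly) can be sketched in a few lines. This is a minimal illustration only: the bag-of-words `embed` function stands in for a real embedding model and vector database, and every name and default size here is an assumption rather than a recommended setting.

```python
import math
from collections import Counter


def chunk_text(text: str, chunk_size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into overlapping word windows; the sizes are illustrative."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    words = text.split()
    chunks = []
    for start in range(0, len(words), chunk_size - overlap):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def embed(text: str) -> Counter:
    """Bag-of-words counts, standing in for a real embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Prepend the retrieved context to the user question."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"
```

In a production design, `embed` and the sort in `retrieve` would typically be replaced by an embedding model and an approximate nearest-neighbor index, while the chunking and prompt-assembly steps keep roughly this shape.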

2.4. Data Strategy: Data Collection, Labeling, Cleaning, Ethical Sourcing

The data strategy is paramount for LLM products, as the model's intelligence is inherently tied to the data it consumes. This phase defines how training data will be acquired, processed, and managed throughout the product's lifespan. For fine-tuning, this involves identifying relevant datasets, establishing robust data collection pipelines, and designing accurate labeling processes (often requiring human annotation). Data cleaning is an extensive undertaking, involving deduplication, noise reduction, bias detection, and normalization to ensure data quality and consistency. Crucially, the ethical sourcing of data must be a top priority. This includes obtaining proper consent, anonymizing sensitive information, adhering to privacy regulations (e.g., GDPR, CCPA), and actively mitigating biases inherent in the data that could lead to discriminatory or unfair model outputs. A well-defined data strategy also encompasses data storage solutions, version control for datasets, and mechanisms for continuous data refreshes to keep the model relevant and performant.

2.5. Evaluation Metrics & Benchmarking: Defining Success Criteria Early On

Before a single line of model code is written or fine-tuning begins, establishing clear evaluation metrics and benchmarking strategies is essential. How will the success of the LLM product be measured? This goes beyond traditional software metrics like uptime or latency. For LLMs, success metrics are often complex and application-specific. For a summarization tool, metrics might include ROUGE scores, which measure n-gram overlap with reference summaries, alongside human judgments of coherence and accuracy. For a chatbot, F1 scores for intent recognition, user satisfaction ratings, and reduction in human agent interaction time might be key. Benchmarking against existing solutions (human baselines or competitor products) provides a target for performance. This phase involves defining both quantitative metrics (e.g., accuracy, relevance, fluency, perplexity) and qualitative assessments (e.g., user experience, safety, absence of harmful content). Establishing these criteria early ensures that development efforts are aligned with desired outcomes and provides a clear framework for testing, iteration, and continuous improvement throughout the product lifecycle.
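To make one of these metrics concrete, the sketch below computes ROUGE-1, the unigram-overlap variant of ROUGE, between a candidate summary and a reference. It assumes plain whitespace tokenization and lowercasing; established ROUGE implementations add stemming and other normalization, so scores from this sketch will not match them exactly.

```python
from collections import Counter


def rouge1(candidate: str, reference: str) -> dict[str, float]:
    """Unigram-overlap precision, recall, and F1 against a reference summary."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    # Counter intersection keeps the minimum count per token (clipped overlap).
    overlap = sum((cand & ref).values())
    precision = overlap / sum(cand.values()) if cand else 0.0
    recall = overlap / sum(ref.values()) if ref else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}
```

Recall rewards covering the reference, precision penalizes padding, and the F1 combines both; none of the three captures factual accuracy, which is why human judgment remains part of the evaluation plan.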

2.6. Ethical AI & Responsible Design: Bias Mitigation, Transparency, Safety Protocols

Integrating ethical AI and responsible design principles from the very beginning is non-negotiable for LLM products. This involves proactively identifying and mitigating risks related to bias, fairness, privacy, security, transparency, and accountability. During the design phase, teams must consider:

- Bias Mitigation: How will potential biases in training data be identified and addressed? What mechanisms will be put in place to monitor for biased outputs in production?
- Transparency & Explainability: To what extent can the LLM's decision-making process be understood or explained to users? Are there clear disclaimers about the AI's role?
- Safety Protocols: How will the model be safeguarded against generating harmful, offensive, or inappropriate content (e.g., "guardrails," content filtering)? What are the emergency shutdown procedures if the model exhibits dangerous behavior?
- Data Privacy: How will user data provided to the LLM be handled and protected? Are there clear data retention and anonymization policies?
- Accountability: Who is responsible when an LLM makes an error or causes harm? What mechanisms are in place for redress?

This requires a multi-disciplinary approach, involving ethicists, legal experts, product managers, and engineers. Establishing clear guidelines, review processes, and incorporating tools for ethical AI assessment during design ensures that the product is not only effective but also trustworthy, responsible, and compliant with evolving ethical standards and regulations.

3. Phase 2 - Development and Training of LLM Software

The Development and Training phase is where the theoretical concepts from the design stage are brought to life, transforming raw data and architectural plans into functional LLM models. This phase is characterized by intense iterative work, technical complexities, and a constant need for meticulous management of data, models, and experimentation. It is far more dynamic than traditional software development, requiring specialized tooling and methodologies to navigate the unique challenges of AI model creation.

3.1. Data Engineering Pipelines: Automation, Quality Control

At the heart of LLM development are robust data engineering pipelines. These pipelines are responsible for automating the ingestion, transformation, and preparation of vast amounts of data required for model training and fine-tuning. This includes designing scalable infrastructure to handle petabytes of text, code, or multimodal data. Key components of these pipelines involve:

- Ingestion: Connecting to various data sources (databases, web scraping tools, internal systems) and efficiently pulling raw data.
- Cleaning and Preprocessing: Implementing algorithms for deduplication, noise removal, tokenization, stemming, lemmatization, and format standardization. This is a crucial step to eliminate inconsistencies and irrelevant information that could negatively impact model performance.
- Labeling (if applicable): For supervised fine-tuning or prompt engineering reinforcement, human-in-the-loop annotation systems are often integrated to generate high-quality labeled datasets. This requires clear guidelines and quality assurance checks for annotators.
- Validation and Quality Control: Implementing automated checks to ensure data integrity, identify outliers, and detect biases. Data quality issues can lead to "garbage in, garbage out" scenarios, so continuous monitoring of data health is paramount.
- Version Control for Data: Just as code is versioned, datasets used for training must be versioned to ensure reproducibility, track changes, and roll back if issues arise.

These pipelines must be resilient, observable, and scalable, capable of handling continuous data updates and refreshes to keep the LLM models relevant and performant over time.
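The cleaning, deduplication, and data-versioning steps described above can be sketched as follows. This is a simplified illustration: it performs exact-match deduplication on normalized text (production pipelines often add near-duplicate detection), and the function names and the `min_chars` threshold are assumptions chosen for the example.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Collapse runs of whitespace and trim the ends."""
    return re.sub(r"\s+", " ", text).strip()


def clean_and_dedupe(records: list[str], min_chars: int = 20) -> list[str]:
    """Drop very short records and case-insensitive exact duplicates."""
    seen: set[str] = set()
    cleaned: list[str] = []
    for raw in records:
        text = normalize(raw)
        if len(text) < min_chars:
            continue  # too short to be a useful training example
        digest = hashlib.sha256(text.lower().encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # already kept an identical record
        seen.add(digest)
        cleaned.append(text)
    return cleaned


def dataset_version(records: list[str]) -> str:
    """Content hash of the dataset, usable as a version identifier."""
    hasher = hashlib.sha256()
    for text in records:
        hasher.update(text.encode("utf-8"))
        hasher.update(b"\n")
    return hasher.hexdigest()[:12]
```

Because the version identifier is derived from the content itself, any change to the cleaned records yields a new identifier that a training run can log alongside its results.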

3.2. Model Training & Fine-tuning: Techniques, Infrastructure Considerations

Once the data pipelines are established, the core process of model training and fine-tuning begins. This involves leveraging powerful computational resources to adapt a base LLM to specific tasks or domains.

- Techniques:
  - Pre-training: For building foundational models from scratch, which is typically done by major AI labs due to extreme computational costs.
  - Fine-tuning: Adapting pre-trained models to specific downstream tasks or datasets. This can involve full fine-tuning (updating all model parameters) or more parameter-efficient methods like LoRA (Low-Rank Adaptation) or QLoRA (Quantized LoRA), which significantly reduce computational and memory requirements.
  - Reinforcement Learning from Human Feedback (RLHF): A critical technique for aligning LLMs with human preferences and safety guidelines, involving training reward models from human feedback and then using reinforcement learning to optimize the LLM.
- Infrastructure Considerations:
  - Compute: Access to powerful GPUs (e.g., NVIDIA A100s, H100s) is essential. This often involves cloud-based GPU clusters, managed services, or on-premise AI supercomputers.
  - Distributed Training: For very large models or datasets, distributed training frameworks (e.g., PyTorch Distributed, DeepSpeed) are used to split the workload across multiple GPUs and machines.
  - Experiment Tracking: Tools to log and compare different training runs, hyperparameters, model versions, and evaluation metrics (e.g., MLflow, Weights & Biases).
  - Model Versioning and Storage: Securely storing trained models, their checkpoints, and associated metadata (e.g., training configurations, evaluation results) in version-controlled repositories.

Effective management of this phase requires deep expertise in machine learning engineering, distributed systems, and MLOps practices to ensure efficient resource utilization, reproducible results, and robust model development.
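The saving from LoRA is easy to quantify. For a weight matrix of shape d_out x d_in, full fine-tuning updates d_out * d_in parameters, whereas LoRA freezes the matrix and trains two low-rank factors with rank * (d_in + d_out) parameters in total. The short calculation below illustrates this for a single matrix; the 4096 x 4096 shape and rank 8 are example values, not figures for any particular model.

```python
def full_finetune_params(d_out: int, d_in: int) -> int:
    """Parameters updated when the whole weight matrix is trained."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in the two low-rank factors: (d_out x rank) and (rank x d_in)."""
    return rank * (d_in + d_out)


if __name__ == "__main__":
    d, r = 4096, 8  # illustrative shape and rank
    full = full_finetune_params(d, d)
    lora = lora_params(d, d, r)
    print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.4%}")
```

For these example values the LoRA factors hold 65,536 trainable parameters against 16,777,216 in the full matrix, which is under half a percent.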

3.3. Prompt Engineering & Iteration: The Art of Crafting Effective Prompts

Prompt engineering has emerged as a critical discipline in LLM development. It involves the careful design, testing, and refinement of input prompts to elicit desired behaviors and outputs from an LLM. This is often an iterative process, much like traditional software debugging:

- Initial Prompt Design: Crafting clear, concise, and unambiguous prompts that guide the LLM towards the desired task. This can involve specifying roles, formats, constraints, and providing examples (few-shot learning).
- Iterative Refinement: Experimenting with different phrasings, structures, and contextual information to improve output quality. This often involves trial and error, A/B testing different prompts, and analyzing model responses.
- Prompt Chaining & Orchestration: For complex tasks, breaking down the problem into smaller steps and using multiple prompts or even multiple LLMs in sequence, orchestrating their interactions.
- Prompt Templating: Developing reusable prompt templates that can be dynamically populated with user-specific data, ensuring consistency and scalability.
- Version Control for Prompts: Just like code and data, prompts need to be versioned. Changes to prompts can drastically alter model behavior, and having a history allows for reproducibility and rollback. This is a growing area where dedicated prompt management tools are becoming essential.

Effective prompt engineering can significantly enhance the performance of an LLM without requiring further model training, making it a cost-effective and agile approach to product improvement. It requires a blend of linguistic skill, domain expertise, and an understanding of LLM capabilities and limitations.
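Prompt templating and prompt versioning can be combined in a small registry. The sketch below is a hypothetical design, not the interface of any particular prompt management tool: it uses the standard library's `string.Template` for placeholders and derives a version identifier from the template text.

```python
import hashlib
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptVersion:
    name: str
    template: str

    @property
    def version(self) -> str:
        """Short content hash, so any edit to the text yields a new version."""
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:8]

    def render(self, **values: object) -> str:
        """Fill $placeholders; raises KeyError if a value is missing."""
        return Template(self.template).substitute(**values)


class PromptRegistry:
    """Keeps the full history of each named prompt to allow rollback."""

    def __init__(self) -> None:
        self._history: dict[str, list[PromptVersion]] = {}

    def register(self, name: str, template: str) -> PromptVersion:
        prompt = PromptVersion(name, template)
        self._history.setdefault(name, []).append(prompt)
        return prompt

    def latest(self, name: str) -> PromptVersion:
        return self._history[name][-1]

    def rollback(self, name: str) -> PromptVersion:
        """Discard the newest version, keeping at least one."""
        versions = self._history[name]
        if len(versions) > 1:
            versions.pop()
        return versions[-1]
```

Logging the `version` value with each model response makes it possible to trace a change in output quality back to the prompt edit that caused it.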

3.4. Human-in-the-Loop (HITL) Processes: Reinforcement Learning from Human Feedback (RLHF)

Human-in-the-Loop (HITL) processes are indispensable for aligning LLMs with human values, preferences, and complex qualitative judgments that are difficult for algorithms alone to capture. One of the most prominent HITL techniques is Reinforcement Learning from Human Feedback (RLHF), which is central to developing safe, helpful, and honest LLMs.

- Data Labeling for Reward Models: Humans evaluate and rank LLM outputs based on criteria like helpfulness, harmlessness, truthfulness, and style. This human preference data is then used to train a "reward model."
- Reinforcement Learning: The reward model provides feedback to the LLM during training, guiding it to generate outputs that are likely to receive higher human scores. This iterative process refines the LLM's behavior over time.
- Continuous Feedback: Beyond RLHF, HITL can also involve continuous feedback loops where human reviewers monitor production outputs, identify errors or undesirable behaviors, and provide corrections that can be used for further fine-tuning or retraining.
- Content Moderation & Safety: Humans are often involved in reviewing potentially harmful or biased content generated by LLMs, helping to refine safety filters and guidelines.

Integrating HITL effectively requires careful design of annotation tasks, robust quality control for human judgments, and efficient platforms for managing the feedback loop, ensuring that human intelligence directly contributes to the model's refinement and alignment.

3.5. Version Control for Models and Data: MLOps Practices

The complexity of LLM development necessitates rigorous MLOps (Machine Learning Operations) practices, particularly concerning version control for both models and data. Unlike traditional software where Git primarily manages code, MLOps extends this concept to all AI artifacts.

- Model Versioning: Every trained model checkpoint, along with its architecture, hyperparameters, training data version, and evaluation metrics, must be meticulously versioned. This allows teams to track the evolution of models, reproduce specific results, and confidently roll back to previous versions if a new deployment causes issues. Model registries are crucial tools for managing this.
- Data Versioning: As discussed, training datasets are dynamic. A robust data versioning system tracks changes to datasets, ensuring that models can always be associated with the exact data they were trained on. This is vital for debugging and regulatory compliance.
- Code Versioning: Standard code version control (e.g., Git) remains essential for managing the training scripts, inference code, prompt engineering scripts, and deployment configurations.
- Experiment Tracking: Integrated platforms that link model versions, data versions, and code versions to specific experimental runs, allowing for comprehensive traceability and comparison of different iterations.

Implementing these MLOps practices ensures reproducibility, fosters collaboration among data scientists and engineers, and forms the backbone of a stable and auditable LLM development pipeline.
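The traceability described above comes down to recording, for every model, which data, code, and settings produced it. The sketch below shows one hypothetical shape for such a record and a minimal registry with rollback. It is not the schema of MLflow or any other registry product, and all field names and values are illustrative.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ModelRecord:
    model_name: str
    data_version: str      # e.g., a content hash of the training set
    code_commit: str       # e.g., a Git commit SHA
    hyperparameters: dict
    metrics: dict

    def fingerprint(self) -> str:
        """Stable identifier derived from every field of the record."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


class ModelRegistry:
    """Ordered list of promoted models; the last entry is the live one."""

    def __init__(self) -> None:
        self._deployed: list[ModelRecord] = []

    def promote(self, record: ModelRecord) -> None:
        self._deployed.append(record)

    def current(self) -> ModelRecord:
        return self._deployed[-1]

    def rollback(self) -> ModelRecord:
        """Remove the live model and return the previous one."""
        if len(self._deployed) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._deployed.pop()
        return self._deployed[-1]
```

Because the fingerprint covers the data version and code commit as well as the hyperparameters, two models trained with identical settings on different data remain distinguishable.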

3.6. Security Considerations during Development: Data Privacy, Model Poisoning Risks

Security during the development phase of LLM software is multifaceted and critical. Protecting sensitive data and guarding against malicious attacks are paramount.

- Data Privacy: Ensuring that all data used for training and fine-tuning, especially if it contains personally identifiable information (PII) or proprietary business data, is handled according to strict privacy protocols. This includes robust access controls, encryption at rest and in transit, data anonymization techniques, and compliance with data protection regulations (e.g., GDPR, CCPA).
- Model Poisoning: A significant threat where malicious actors inject corrupted data into the training set to manipulate the model's behavior, causing it to generate incorrect, biased, or harmful outputs. Defenses include rigorous data validation, anomaly detection in training data, and secure data provenance tracking.
- Intellectual Property Protection: Safeguarding the proprietary models, fine-tuning weights, and unique prompt engineering strategies from unauthorized access or theft. This involves secure development environments, strict access controls, and potentially model watermarking.
- Vulnerability Management: Regularly scanning development environments, libraries, and dependencies for known security vulnerabilities.
- Secure Development Practices: Adopting secure coding principles for all scripts and applications interacting with the LLM, including input validation and protection against common web vulnerabilities if the LLM is exposed via an API.

Proactive security measures from the earliest stages of development minimize risks, build trust, and prevent costly breaches or compromises down the line.
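As a small illustration of the anonymization step, the sketch below masks email addresses and one common phone number format before text enters a training set. The two regular expressions are deliberately simple assumptions and will miss many real-world PII formats; production systems generally rely on dedicated PII detection tooling and human review.

```python
import re

# Simplified patterns for illustration only; they do not cover all formats.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def mask_pii(text: str) -> str:
    """Replace matched emails and phone numbers with placeholder tags."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)
```

Running the masking step inside the data pipeline, before storage, keeps raw identifiers out of versioned datasets and model checkpoints alike.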

4. Phase 3 - Deployment and Integration: The Role of LLM Gateways and AI Gateways

The transition from development to deployment is a critical juncture in the product lifecycle of LLM software. While a model might perform exceptionally well in a controlled development environment, bringing it to production demands a sophisticated infrastructure that can handle real-world challenges such as scalability, latency, cost optimization, and secure integration with existing systems. This is precisely where specialized solutions like an LLM Gateway and a broader AI Gateway become indispensable, acting as a robust intermediary layer between applications and the underlying LLM services.

4.1. Challenges of LLM Deployment: Scalability, Latency, Cost Management, Multi-model Orchestration

Deploying LLMs effectively in production introduces a unique set of operational hurdles that far exceed those of typical stateless microservices.

- Scalability: LLMs are computationally intensive. Handling a surge in user requests requires dynamic scaling of GPU resources, which can be expensive and complex to manage. A single LLM instance might not suffice for high-traffic applications, necessitating load balancing across multiple instances or even multiple underlying models.
- Latency: Users expect real-time responses. The inference time for LLMs can vary significantly based on model size, input length, and computational load. Minimizing latency is crucial for a positive user experience, often requiring optimized inference engines, efficient caching strategies, and geographically distributed deployments.
- Cost Management: Running LLMs, especially proprietary ones, incurs significant costs based on token usage, compute resources, and API calls. Without careful management, these costs can quickly spiral out of control. Optimizing model choices, prompt lengths, and implementing intelligent caching are vital.
- Multi-model Orchestration: Many sophisticated LLM applications don't rely on a single model. They might orchestrate calls to different LLMs for different tasks (e.g., one for summarization, another for translation), or use a smaller, faster model for initial filtering before escalating to a larger, more powerful one. Managing these diverse models, their APIs, and their specific requirements adds substantial complexity to the deployment strategy.
- Version Control for Deployed Models: As models are continuously refined and updated, seamless deployment of new versions without downtime, and the ability to roll back to previous versions, are essential. This requires robust blue/green deployments or canary release strategies.
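Token-based pricing makes cost straightforward to estimate ahead of deployment. The sketch below computes per-request and monthly cost from token counts. The prices in the usage note are invented placeholders, not any provider's actual rates, and the function names are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Price per 1,000 tokens, in whatever currency unit the provider bills."""
    input_per_1k: float
    output_per_1k: float


def request_cost(pricing: Pricing, prompt_tokens: int, completion_tokens: int) -> float:
    """Cost of one call: input and output tokens are priced separately."""
    return (prompt_tokens / 1000) * pricing.input_per_1k + (
        completion_tokens / 1000
    ) * pricing.output_per_1k


def monthly_cost(
    pricing: Pricing,
    requests_per_day: int,
    prompt_tokens: int,
    completion_tokens: int,
    days: int = 30,
) -> float:
    """Projected spend assuming every request has the same token profile."""
    return request_cost(pricing, prompt_tokens, completion_tokens) * requests_per_day * days
```

With placeholder prices of `Pricing(0.5, 1.5)` for a large model and `Pricing(0.05, 0.15)` for a small one, a request with 1,200 prompt tokens and 300 completion tokens costs about 1.05 and 0.105 units respectively, which is the kind of gap that motivates routing simple requests to the smaller model.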

4.2. The Concept of an LLM Gateway: What it is, Why it's Essential

An LLM Gateway is a specialized API gateway designed specifically to manage the unique demands of interacting with Large Language Models. It acts as an abstraction layer between client applications (front-end, back-end microservices, etc.) and various LLM providers or internally deployed models. Its primary purpose is to simplify, secure, optimize, and centralize access to LLM capabilities, much like a traditional API Gateway manages access to microservices.

It is essential because direct integration with multiple LLM providers or models is brittle, complex, and prone to breaking changes. Each LLM provider might have a different API format, authentication mechanism, rate limiting policy, and pricing structure. An LLM Gateway abstracts away these complexities, providing a unified interface for developers and ensuring operational resilience and efficiency. It serves as a single point of entry and control for all LLM interactions, empowering organizations to build scalable and maintainable AI-powered applications.
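The abstraction can be pictured as one request type plus a translator per backend. In the sketch below, the two payload shapes are invented for illustration and do not reproduce any real provider's API; `Gateway`, `ChatRequest`, and the model names are likewise hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ChatRequest:
    """The single request shape that client applications use."""
    model: str
    prompt: str
    max_tokens: int = 256


def to_provider_a(req: ChatRequest) -> dict:
    """Hypothetical chat-style payload."""
    return {
        "model": req.model,
        "messages": [{"role": "user", "content": req.prompt}],
        "max_tokens": req.max_tokens,
    }


def to_provider_b(req: ChatRequest) -> dict:
    """Hypothetical completion-style payload with different field names."""
    return {
        "model_id": req.model,
        "input_text": req.prompt,
        "generation": {"max_new_tokens": req.max_tokens},
    }


class Gateway:
    """Maps each model name to a (translate, send) pair."""

    def __init__(self) -> None:
        self._routes: dict[str, tuple[Callable[[ChatRequest], dict], Callable[[dict], str]]] = {}

    def register(
        self,
        model: str,
        translate: Callable[[ChatRequest], dict],
        send: Callable[[dict], str],
    ) -> None:
        self._routes[model] = (translate, send)

    def invoke(self, req: ChatRequest) -> str:
        if req.model not in self._routes:
            raise KeyError(f"no backend registered for model {req.model!r}")
        translate, send = self._routes[req.model]
        return send(translate(req))
```

Clients only ever construct a `ChatRequest`, so swapping the backend behind a model name means registering a different translator and sender, with no change to calling code.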

4.3. Core Functions of an AI Gateway/LLM Gateway

A robust AI Gateway, which encompasses the functionalities of an LLM Gateway but often extends to other types of AI models, offers a comprehensive suite of features vital for production-grade LLM applications. An excellent example of such a platform is APIPark, an open-source AI gateway and API management platform that specifically addresses these needs, making it easier to manage, integrate, and deploy both AI and REST services.

- Unified API Interface (Abstraction Layer): An AI Gateway provides a standardized API endpoint for all LLM interactions, regardless of the underlying model or provider. This means client applications interact with one consistent interface, and the gateway handles the translation and routing to the appropriate backend LLM. APIPark excels here by offering a unified API format for AI invocation, ensuring that changes in AI models or prompts do not affect the application or microservices, thereby simplifying AI usage and maintenance costs.

- Load Balancing & Traffic Management: Distributes incoming requests across multiple LLM instances or providers to optimize performance, prevent overload, and ensure high availability. It can intelligently route traffic based on factors like latency, cost, and capacity.

- Authentication & Authorization: Secures access to LLMs by enforcing authentication mechanisms (e.g., API keys, OAuth tokens) and authorization policies, ensuring that only authorized applications or users can invoke specific models.

- Rate Limiting & Quotas: Prevents abuse, manages resource consumption, and enforces fair usage policies by setting limits on the number of requests an application or user can make within a given timeframe.

- Caching: Stores responses for frequently requested prompts or common LLM outputs. This significantly reduces latency, decreases computational costs, and lessens the load on backend LLMs by serving cached responses directly.

- Observability (Logging, Monitoring, Tracing): Collects comprehensive metrics on LLM calls, including request/response payloads, latency, error rates, token usage, and costs. This provides critical insights into model performance, usage patterns, and potential issues. APIPark, for instance, provides detailed API call logging, recording every detail of each API call, enabling businesses to quickly trace and troubleshoot issues. It also offers powerful data analysis capabilities to display long-term trends and performance changes, aiding preventive maintenance.

- Cost Optimization Across Different Providers: Allows organizations to dynamically switch between different LLM providers (e.g., OpenAI, Anthropic, Google) or internal models based on real-time cost, performance, or availability. This enables smart routing to achieve the best balance of cost and quality.

- Model Routing & Failover: Can route requests to specific model versions or different models entirely based on the context of the request, user profile, or A/B testing configurations. It also provides failover mechanisms, automatically rerouting requests if a primary LLM instance or provider becomes unavailable.

- Prompt Management & Versioning: Centralizes the management of prompts, allowing prompt templates to be versioned, tested, and updated independently of client code. This is crucial for rapid iteration and ensures consistency. APIPark enables prompt encapsulation into REST API, allowing users to quickly combine AI models with custom prompts to create new APIs, like sentiment analysis.

- Security Enhancements: Beyond basic authentication, an AI Gateway can implement additional security layers such as input/output sanitization to prevent prompt injection attacks, data masking for sensitive information, and threat detection.

- End-to-End API Lifecycle Management: Platforms like APIPark assist with managing the entire lifecycle of APIs, including design, publication, invocation, and decommissioning. This helps regulate API management processes, manage traffic forwarding, load balancing, and versioning of published APIs. It also allows for API service sharing within teams and independent API and access permissions for each tenant, enhancing collaboration and resource utilization.

- Performance: With just an 8-core CPU and 8GB of memory, APIPark can achieve over 20,000 TPS, supporting cluster deployment to handle large-scale traffic, rivaling the performance of high-demand systems like Nginx.

- Subscription Approval: APIPark allows for the activation of subscription approval features, ensuring that callers must subscribe to an API and await administrator approval before they can invoke it, preventing unauthorized API calls and potential data breaches.
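
Several of the capabilities above (a unified interface, caching, and failover) can be illustrated with a minimal Python sketch. It is not APIPark's implementation or API: `Gateway`, `Backend`, and `ProviderError` are hypothetical names, the backends are stub functions standing in for real provider clients, and the cache is a plain exact-match dictionary.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


class ProviderError(Exception):
    """Hypothetical error raised when a backend cannot serve a request."""


@dataclass
class Backend:
    name: str
    call: Callable[[str], str]  # takes a prompt, returns completion text


class Gateway:
    """One entry point for clients; backends are tried in preference order."""

    def __init__(self, backends: List[Backend]):
        self.backends = backends
        self.cache: Dict[str, str] = {}  # exact-match cache keyed by prompt

    def complete(self, prompt: str) -> str:
        if prompt in self.cache:
            return self.cache[prompt]  # cache hit: no backend call at all
        errors = []
        for backend in self.backends:
            try:
                result = backend.call(prompt)
            except ProviderError as exc:
                errors.append(f"{backend.name}: {exc}")
                continue  # failover: try the next backend
            self.cache[prompt] = result
            return result
        raise ProviderError("all backends failed: " + "; ".join(errors))


# Stub backends standing in for real provider clients (assumptions).
def flaky_primary(prompt: str) -> str:
    raise ProviderError("timeout")


def stable_secondary(prompt: str) -> str:
    return f"echo: {prompt}"


gateway = Gateway([Backend("primary", flaky_primary),
                   Backend("secondary", stable_secondary)])
print(gateway.complete("hello"))  # served by the secondary backend
print(gateway.complete("hello"))  # served from the cache
```

A production gateway would typically add cache expiry, request normalization, and per-backend health tracking; the sketch only shows how the client-facing call stays the same while the backend changes.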

4.4. Integration with Existing Systems: Microservices, Enterprise Applications

The deployment of LLM software is rarely in isolation. It needs to seamlessly integrate with a company's existing technological ecosystem, including microservices, legacy enterprise applications, databases, and front-end user interfaces. An AI Gateway plays a pivotal role in facilitating this integration. By providing a unified and consistent API, it allows various internal and external applications to consume LLM capabilities without needing to understand the underlying complexities of AI models.

For microservices, the gateway acts as a bridge, enabling them to make simple API calls to access LLM functionalities, much like they would call any other internal service. For traditional enterprise applications, the gateway provides a modernization layer, allowing them to leverage advanced AI capabilities without extensive re-engineering. This integration often involves defining clear API contracts, managing data formats, and ensuring that security and compliance standards are maintained across the entire stack. The use of a robust AI Gateway significantly reduces the integration overhead, accelerates time-to-market for AI-powered features, and ensures that LLM capabilities are readily accessible and consumable across the organization.

APIPark is a high-performance AI gateway that allows you to securely access the most comprehensive LLM APIs globally on the APIPark platform, including OpenAI, Anthropic, Mistral, Llama2, Google Gemini, and more.

5. Phase 4 - Operations, Monitoring, and Maintenance: Emphasizing API Governance

Once LLM software is deployed, the product lifecycle transitions into a continuous phase of operations, monitoring, and maintenance. This stage is critical for ensuring the sustained performance, reliability, security, and cost-effectiveness of LLM-powered applications in a dynamic production environment. Unlike traditional software, LLMs require ongoing vigilance due to their probabilistic nature, potential for data drift, and the need for continuous alignment with evolving user expectations. Central to effective management in this phase is robust API Governance, which provides the framework for managing the interfaces through which LLM services are accessed and controlled.

5.1. Continuous Monitoring of LLM Performance: Drift Detection, Hallucination Rates, Bias Monitoring

Effective LLM operations demand a comprehensive and continuous monitoring strategy that goes far beyond traditional system health checks.

- Model Performance Monitoring: This involves tracking core metrics relevant to the LLM's primary function. For a summarization model, this might include the average ROUGE score or human-rated quality. For a chatbot, it could be intent recognition accuracy or success rates in resolving user queries. Tools are needed to track these metrics over time, identifying any degradation in performance.

- Data Drift Detection: A critical concern for LLMs is "data drift," where the characteristics of the real-world input data change over time, diverging from the data the model was originally trained on. This can lead to decreased performance. Monitoring systems must detect shifts in input distributions (e.g., new jargon, changing user query patterns) and alert operators.

- Hallucination Rates: LLMs can sometimes generate factually incorrect or nonsensical information, known as hallucinations. Monitoring systems should track instances of hallucinations, often through a combination of automated checks (e.g., against factual databases for RAG systems) and human feedback loops, to quantify their frequency and impact.

- Bias Monitoring: Continuously evaluating model outputs for signs of bias (e.g., gender, racial, cultural bias) or unfairness. This requires specialized metrics and techniques, potentially involving external auditors or dedicated ethical AI monitoring tools, to ensure the model remains equitable and responsible.

- Output Quality Monitoring: Beyond specific errors, the overall coherence, relevance, and fluency of LLM outputs need to be monitored. This often involves sampling outputs for human review and integrating user feedback mechanisms directly into the application.

These monitoring efforts provide the early warning signals necessary to initiate retraining, model updates, or prompt adjustments before significant issues impact users.
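
As one possible way to detect shifts in input distributions, the sketch below computes the Population Stability Index (PSI) over a simple numeric feature such as prompt length. PSI is a technique chosen here for illustration, not one the article prescribes; the bucket edges, the sample data, and the 0.25 alert threshold (a commonly cited rule of thumb) are all assumptions.

```python
import math
from typing import List, Sequence


def bucket_shares(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values in each bucket defined by ascending edges."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) + 1)
    for v in values:
        index = sum(1 for e in edges if v >= e)  # number of edges at or below v
        counts[index] += 1
    return [c / len(values) for c in counts]


def psi(reference: Sequence[float], current: Sequence[float],
        edges: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index; eps avoids log(0) for empty buckets."""
    ref = bucket_shares(reference, edges)
    cur = bucket_shares(current, edges)
    return sum((c - r) * math.log((c + eps) / (r + eps))
               for r, c in zip(ref, cur))


# Hypothetical prompt lengths (in words) at training time and in production.
training_lengths = [10, 12, 15, 20, 22, 30, 35, 40]
production_lengths = [80, 90, 100, 120, 95, 110, 85, 130]
edges = [16, 32, 64]  # assumed bucket boundaries

score = psi(training_lengths, production_lengths, edges)
if score > 0.25:  # assumed alert threshold
    print(f"possible input drift, PSI={score:.2f}")
```

Prompt length is only a proxy; the same comparison could be applied to embedding clusters, detected language, or topic labels, depending on what the application logs.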

5.2. Real-time Observability: Latency, Error Rates, Token Usage

Beyond specific LLM performance, overall system health and operational efficiency require real-time observability across several key dimensions:

- Latency: Monitoring the end-to-end response time for LLM inferences, from the moment a request hits the AI Gateway to when the response is delivered back to the client. High latency can severely degrade user experience and might indicate bottlenecks in the infrastructure, model performance, or API calls.

- Error Rates: Tracking the frequency and types of errors occurring during LLM invocations, whether they are API errors from external providers, internal processing errors, or model-generated errors. Detailed error logging and alerting are crucial for rapid troubleshooting.

- Token Usage: For cost management, monitoring token consumption per request and over time is paramount. This helps identify inefficient prompts, potential abuse, or unexpected spikes in usage. An LLM Gateway can aggregate this data across multiple models and providers, providing a unified view for cost optimization.

- Resource Utilization: Tracking CPU, GPU, memory, and network utilization of the LLM inference infrastructure. This helps ensure optimal resource allocation, prevent over-provisioning (and thus unnecessary costs), or under-provisioning (leading to performance degradation).

- Dependency Health: Monitoring the health and availability of all upstream and downstream dependencies, including vector databases, data pipelines, and external LLM APIs, as their instability can directly impact the LLM application.

Robust dashboards, alerting systems, and tracing tools are essential to provide engineering and operations teams with real-time insights into the LLM system's behavior, enabling proactive problem-solving.
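
The latency, error-rate, and token-usage dimensions can be summarized from per-call records with very little code. The following is a minimal sketch; the `CallRecord` fields are a hypothetical log schema rather than any gateway's actual format, and percentiles use the simple nearest-rank method.

```python
import math
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CallRecord:
    """Hypothetical per-request log entry emitted by a gateway."""
    latency_ms: float
    ok: bool
    total_tokens: int


def percentile(sorted_values: List[float], p: float) -> float:
    """Nearest-rank percentile of an ascending list, for 0 < p <= 100."""
    rank = math.ceil(p * len(sorted_values) / 100)
    return sorted_values[max(rank, 1) - 1]


def summarize(records: List[CallRecord]) -> Dict[str, float]:
    """Aggregate latency percentiles, error rate, and token usage."""
    if not records:
        raise ValueError("no records to summarize")
    latencies = sorted(r.latency_ms for r in records)
    return {
        "p50_latency_ms": percentile(latencies, 50),
        "p95_latency_ms": percentile(latencies, 95),
        "error_rate": sum(1 for r in records if not r.ok) / len(records),
        "total_tokens": sum(r.total_tokens for r in records),
    }


records = [
    CallRecord(100, True, 50),
    CallRecord(120, True, 60),
    CallRecord(150, True, 70),
    CallRecord(200, True, 80),
    CallRecord(900, False, 90),
]
print(summarize(records))
```

Reporting a high percentile alongside the median matters because a single slow call, such as the 900 ms request here, is invisible in the median but dominates the tail that users notice.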

5.3. Feedback Loops & Retraining: Adapting Models Based on Production Data

The dynamic nature of LLM environments necessitates continuous adaptation. Establishing effective feedback loops and strategic retraining mechanisms is vital for long-term product success.

- User Feedback Integration: Directly incorporating user feedback, such as explicit ratings of LLM responses, thumbs up/down buttons, or qualitative comments, into the model improvement cycle. This "human-in-the-loop" data is invaluable for fine-tuning and alignment.

- Production Data Monitoring: Analyzing how the model performs on real-world production data. This includes identifying problematic use cases, emergent biases, or areas where the model frequently underperforms.

- Scheduled Retraining: Implementing a cadence for retraining models with fresh, recent data to counteract data drift and incorporate new knowledge. The frequency will depend on the domain's dynamism.

- Triggered Retraining: Developing automated triggers for retraining based on significant performance degradation, detection of new data patterns, or identification of critical safety issues.

- A/B Testing for Models: When deploying updated models or fine-tuned versions, A/B testing in production allows for side-by-side comparison of different model versions, ensuring that new iterations genuinely improve performance and user experience before a full rollout.

These feedback loops and retraining strategies ensure that LLM models remain relevant, accurate, and aligned with user expectations, driving continuous improvement throughout the product's lifespan.
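
A triggered-retraining rule driven by user feedback could look roughly like the sketch below. `FeedbackMonitor` is a hypothetical class, and the window size, minimum sample count, and approval threshold are illustrative assumptions that would need tuning per product.

```python
from collections import deque


class FeedbackMonitor:
    """Tracks thumbs up/down over a sliding window of recent responses."""

    def __init__(self, window: int = 200, min_samples: int = 50,
                 threshold: float = 0.7):
        self.votes = deque(maxlen=window)  # oldest votes fall out automatically
        self.min_samples = min_samples
        self.threshold = threshold

    def record(self, thumbs_up: bool) -> bool:
        """Store one vote; return True if a retraining review should start."""
        self.votes.append(thumbs_up)
        if len(self.votes) < self.min_samples:
            return False  # too little data to judge
        approval = sum(self.votes) / len(self.votes)
        return approval < self.threshold


monitor = FeedbackMonitor(window=5, min_samples=5, threshold=0.5)
for vote in [True, True, True, True, True, False, False, False]:
    if monitor.record(vote):
        print("approval dropped below threshold: open a retraining review")
```

In this toy run the trigger fires on the last vote, when approval in the five-vote window falls to 0.4. Routing the trigger to a human review step rather than directly to a training job is a deliberately conservative choice.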

5.4. The Importance of API Governance in LLM Ecosystems

As LLMs are increasingly exposed as services through APIs, comprehensive API Governance becomes an absolute necessity. It provides the policies, processes, and tools required to manage the entire lifecycle of APIs, ensuring they are secure, reliable, well-documented, and aligned with business objectives. In the context of LLMs, where the underlying "logic" (the model) can change rapidly and unpredictably, robust API Governance mitigates risks and enables scalable innovation.

- Defining Standards and Policies for API Design and Usage: Establishing clear guidelines for API design (e.g., RESTful principles, naming conventions, data formats) and usage (e.g., authentication methods, error handling). This ensures consistency across all LLM-powered services, making them easier for developers to consume and maintain.

- Version Management for APIs: As LLMs evolve, their capabilities, inputs, and outputs might change. API Governance dictates how these changes are managed through API versioning (e.g., v1, v2). This allows for backward compatibility, clear communication to consumers about deprecation schedules, and controlled transitions to new functionality without breaking existing applications.

- Security Policies (OAuth, API Keys): Enforcing stringent security protocols for API access, including robust authentication mechanisms (e.g., OAuth 2.0, JWT), authorization rules (role-based access control), and secure API key management. This prevents unauthorized access and data breaches, and it protects the intellectual property embedded in the LLM.

- Documentation and Developer Portals: Providing comprehensive, up-to-date documentation for all LLM APIs, including examples, use cases, error codes, and rate limits. A well-designed developer portal (like the one APIPark helps create) makes it easy for internal and external developers to discover, understand, and integrate with LLM services, accelerating adoption and reducing support overhead.

- Compliance and Regulatory Adherence: Ensuring that LLM APIs comply with relevant industry standards, data privacy regulations (e.g., GDPR, HIPAA), and ethical AI guidelines. This includes auditing API access logs, enforcing data residency requirements, and managing consent.

- Lifecycle Management of APIs (Publishing, Deprecation): Governing the entire lifespan of an API, from its initial publication to its eventual deprecation and retirement. This involves formal processes for API review, release, monitoring, and communication with consumers when an API version is phased out. An API Gateway, such as APIPark, is instrumental in providing end-to-end API lifecycle management, enabling robust governance.

Effective API Governance transforms LLM services from isolated technical components into well-managed, scalable, and secure product offerings that can be reliably integrated into a broader ecosystem, fostering trust and enabling widespread adoption.
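
To make version management and deprecation concrete, here is a minimal sketch of how a gateway might resolve a requested API version and signal an upcoming retirement. It assumes the standard `Sunset` HTTP response header (RFC 8594) is used to announce the retirement date; the `ApiVersion` type, the `resolve` function, the version names, and the dates are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from email.utils import format_datetime
from typing import Dict, Optional, Tuple


@dataclass
class ApiVersion:
    name: str
    sunset: Optional[datetime] = None  # None means fully supported


def resolve(versions: Dict[str, ApiVersion], requested: str,
            now: datetime) -> Tuple[int, Dict[str, str]]:
    """Return an HTTP status and extra response headers for a version."""
    version = versions.get(requested)
    if version is None:
        return 404, {}  # unknown version
    if version.sunset is None:
        return 200, {}  # current version, nothing to announce
    if now >= version.sunset:
        return 410, {}  # retired: Gone
    # Still served, but consumers are told when it will disappear.
    return 200, {"Sunset": format_datetime(version.sunset, usegmt=True)}


versions = {
    "v1": ApiVersion("v1", sunset=datetime(2025, 3, 1, tzinfo=timezone.utc)),
    "v2": ApiVersion("v2"),
}
status, headers = resolve(versions, "v1",
                          now=datetime(2025, 1, 1, tzinfo=timezone.utc))
print(status, headers)
```

Announcing the date in a machine-readable header lets client teams alert on it automatically, which complements the release notes and developer portal notices described above.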

5.5. Incident Response & Troubleshooting: Strategies for Addressing Model Failures or API Issues

Despite robust monitoring, incidents will inevitably occur. A well-defined incident response and troubleshooting strategy is crucial for minimizing downtime and maintaining trust in LLM services.

- Automated Alerting: Implementing comprehensive alerting mechanisms based on anomaly detection in performance metrics (latency, error rates), sudden spikes in token usage, or critical errors in logs. Alerts should be routed to the appropriate teams (e.g., MLOps, SRE, product).

- Runbooks and Playbooks: Developing clear, step-by-step runbooks for common incidents, outlining diagnostic procedures, potential remedies, and escalation paths. This ensures consistent and efficient responses.

- Diagnostic Tools: Providing engineering teams with access to robust logging, tracing, and debugging tools that can pinpoint the source of an issue, whether it's an underlying LLM, the AI Gateway, network infrastructure, or an application-level problem. APIPark's detailed API call logging can be invaluable here.

- Rollback Capabilities: Ensuring the ability to quickly roll back to a previous stable model version or API configuration in case a new deployment introduces critical bugs or performance regressions.

- Post-Mortem Analysis: Conducting thorough post-mortem analyses after significant incidents to identify root causes, document lessons learned, and implement preventative measures to avoid recurrence.

A proactive and well-rehearsed incident response strategy is fundamental for maintaining the reliability and resilience of LLM-powered products in production.
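
Anomaly-based alerting can start from something as simple as a z-score against recent history. The sketch below is one such baseline, not a recommendation of a specific detector; the threshold of three standard deviations and the team routing table are assumptions.

```python
from statistics import mean, stdev
from typing import Dict, Optional, Sequence

# Hypothetical routing of metrics to owning teams.
ROUTES: Dict[str, str] = {
    "latency_ms": "SRE",
    "error_rate": "SRE",
    "tokens_per_request": "MLOps",
}


def is_anomalous(history: Sequence[float], value: float,
                 z_threshold: float = 3.0) -> bool:
    """Flag a value far from the recent mean, measured in standard deviations."""
    if len(history) < 2:
        return False  # not enough data for a standard deviation
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > z_threshold


def alert_for(metric: str, history: Sequence[float],
              value: float) -> Optional[str]:
    """Return an alert message addressed to the owning team, or None."""
    if not is_anomalous(history, value):
        return None
    team = ROUTES.get(metric, "on-call")
    return f"[{team}] {metric} anomaly: observed {value}"


recent_latency = [200, 210, 190, 205, 195]
print(alert_for("latency_ms", recent_latency, 600))
print(alert_for("latency_ms", recent_latency, 208))
```

The first call produces an alert for the SRE team and the second returns `None`. A fixed z-score is sensitive to seasonality, so real deployments often compare against the same hour on previous days instead of a flat recent window.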

5.6. Cost Management in Production: Optimizing Token Usage, Model Choices

Managing the operational costs of LLMs in production is a continuous effort, given the consumption-based pricing models of many proprietary LLMs and the compute intensity of open-source deployments.

- Token Usage Optimization:
  - Prompt Engineering: Refining prompts to be concise and avoid unnecessary verbosity.
  - Response Truncation: Limiting LLM responses to the minimum necessary length, ideally by capping output tokens at generation time, since text truncated after generation has already been billed.
  - Input Filtering: Pre-processing user inputs to remove irrelevant or redundant information before sending to the LLM.
  - Context Management: Strategically managing context windows to only include information truly relevant to the current query, avoiding sending entire conversation histories if not needed.

- Intelligent Model Choices:
  - Tiered Model Strategy: Using smaller, faster, and cheaper models for simple queries or initial filtering, and only escalating to larger, more expensive models for complex or high-value tasks. An AI Gateway can implement this routing logic seamlessly.
  - Open-Source vs. Proprietary: Evaluating the cost-benefit trade-off of running fine-tuned open-source models internally versus relying on proprietary APIs. Open-source models might have higher upfront infrastructure costs but lower per-token costs.

- Caching Strategies: As discussed, aggressive caching of LLM responses for frequently occurring inputs dramatically reduces token usage and API calls.

- Resource Scaling Optimization: Dynamically scaling GPU instances based on demand, rather than maintaining always-on peak capacity, to minimize compute costs for internally hosted models.

- Billing Monitoring & Alerting: Implementing systems to track LLM-related expenditures in real-time and trigger alerts when usage exceeds predefined thresholds.

Proactive and continuous cost management strategies are vital for ensuring the economic viability and sustainability of LLM-powered products.
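
The tiered model strategy and per-request cost arithmetic can be sketched as follows. The model names and prices are invented for illustration and do not reflect any provider's pricing, and the word-count heuristic is only a stand-in for a real complexity classifier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_1k_input: float   # hypothetical price per 1,000 input tokens
    usd_per_1k_output: float  # hypothetical price per 1,000 output tokens


def estimate_cost(tier: ModelTier, input_tokens: int,
                  output_tokens: int) -> float:
    """Cost in USD for one request under per-token pricing."""
    return (input_tokens / 1000) * tier.usd_per_1k_input \
        + (output_tokens / 1000) * tier.usd_per_1k_output


def choose_tier(prompt: str, small: ModelTier, large: ModelTier,
                max_small_words: int = 50) -> ModelTier:
    """Naive router: short prompts go to the cheaper model."""
    return small if len(prompt.split()) <= max_small_words else large


SMALL = ModelTier("small-model", usd_per_1k_input=0.5, usd_per_1k_output=1.5)
LARGE = ModelTier("large-model", usd_per_1k_input=5.0, usd_per_1k_output=15.0)

tier = choose_tier("What are your opening hours?", SMALL, LARGE)
print(tier.name, estimate_cost(tier, input_tokens=2000, output_tokens=1000))
```

With these assumed prices, the same 2,000-token input and 1,000-token output costs 2.50 USD on the small tier and 25.00 USD on the large one, which is the gap a routing policy tries to exploit.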

6. Phase 5 - Evolution and Retirement: Continuous Improvement

The final stages of the Product Lifecycle Management for LLM software—Evolution and Retirement—are not terminal but rather cyclical, emphasizing continuous improvement and strategic adaptation. In the rapidly evolving AI landscape, LLM products must constantly iterate, integrate new capabilities, and gracefully sunset outdated versions to remain competitive and relevant. This phase ensures that the product doesn't stagnate but rather grows with technological advancements and changing user needs.

6.1. Iterative Development & Feature Enhancements: Adding New Capabilities, Improving Existing Ones

LLM products are inherently iterative. The initial launch is rarely the final form; it’s a starting point for continuous refinement and expansion.

- Roadmapping for AI Features: Establishing a clear product roadmap that outlines future capabilities and improvements, guided by user feedback, market trends, and technological advancements. For instance, an initial LLM-powered chatbot might only handle basic FAQs, but the roadmap could include features like personalized recommendations, proactive outreach, or multimodal interactions.

- A/B Testing New Features: Before full deployment, new LLM features or improved model versions should be rigorously A/B tested in production to objectively measure their impact on key metrics like user engagement, task completion rates, or conversion. This data-driven approach ensures that enhancements genuinely add value.

- Modular Development: Designing the LLM application architecture with modularity in mind allows for easier integration of new models, prompt engineering techniques, or external services without requiring a complete overhaul of the system. An AI Gateway facilitates this by providing a unified interface for new LLM capabilities.

- Experimentation Culture: Fostering a culture of continuous experimentation, where data scientists and engineers are encouraged to explore novel LLM techniques, fine-tuning approaches, or prompt strategies to discover incremental and breakthrough improvements.

This agile approach to development ensures the product remains at the cutting edge.
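
When an A/B test compares a rate such as task completion between two variants, a two-proportion z-test is one common way to judge whether the difference is more than noise. The sketch below uses only the standard library; the sample counts are invented, and the choice of this particular test is an assumption rather than something the article specifies.

```python
import math
from typing import Tuple


def two_proportion_z_test(success_a: int, n_a: int,
                          success_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in success rates."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # all successes or all failures in both groups
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: variant A is the current model, B the candidate.
z, p = two_proportion_z_test(success_a=400, n_a=1000,
                             success_b=460, n_b=1000)
print(f"z={z:.2f}, p={p:.4f}")
```

A small p-value suggests the candidate's higher completion rate is unlikely to be chance alone, but the decision to ship should also weigh cost, latency, and safety metrics measured during the same test.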

6.2. Model Updates & Upgrades: Transitioning to Newer, More Capable LLMs

The pace of innovation in LLMs means that newer, more capable, or more efficient models are regularly released. Strategic management of model updates is crucial.

- Evaluating New Models: Regularly evaluating emerging foundational models or updated versions of existing ones for potential benefits in terms of performance, cost, security, or new capabilities. This involves benchmarking against existing models on relevant tasks.

- Controlled Migration Strategies: Planning and executing controlled migrations to newer models. This often involves:
  - Canary Deployments: Gradually rolling out the new model to a small percentage of users and monitoring performance before a full rollout.
  - Blue/Green Deployments: Running the old and new models in parallel and switching traffic once the new model is validated.
  - Backward Compatibility: Ensuring that API changes associated with new models are managed through API Governance to maintain backward compatibility for existing consumers, or providing clear migration paths.

- Retraining and Fine-tuning: If transitioning to a new base model, existing fine-tuning datasets and prompt engineering strategies may need to be re-evaluated and adapted to the new model's characteristics. This could involve re-running fine-tuning pipelines or adjusting prompt templates.

- Performance and Cost Monitoring Post-Upgrade: Meticulously monitoring the performance, latency, error rates, and cost implications of the new model post-upgrade to ensure it delivers the expected benefits without introducing new issues. An LLM Gateway can provide the necessary insights by comparing metrics between different model versions.
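
A canary rollout needs a stable way to decide which users see the new model. One simple approach, sketched below, hashes the user identifier into a bucket from 0 to 99 so that each user consistently gets the same model while the canary percentage is raised. The model names and the function names are hypothetical.

```python
import hashlib


def bucket(user_id: str, buckets: int = 100) -> int:
    """Map a user id to a stable bucket in [0, buckets)."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % buckets


def pick_model(user_id: str, canary_percent: int,
               stable: str = "model-v1", canary: str = "model-v2") -> str:
    """Send roughly canary_percent of users to the canary model."""
    return canary if bucket(user_id) < canary_percent else stable


users = [f"user-{i}" for i in range(10_000)]
on_canary = sum(pick_model(u, canary_percent=10) == "model-v2" for u in users)
print(f"{on_canary / len(users):.1%} of users routed to the canary")
```

Because assignment depends only on the user id, raising `canary_percent` from 10 to 25 keeps the original canary users on the new model and adds more, which avoids users flipping back and forth between versions mid-rollout.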

6.3. Deprecation Strategies: Managing End-of-Life for Models or API Versions

Just as models evolve, older models or API versions eventually need to be retired. A thoughtful deprecation strategy minimizes disruption for consumers and prevents technical debt.

- Clear Communication: Announcing deprecation well in advance, providing clear timelines, reasons for deprecation, and guidance on migrating to newer versions. This communication should be disseminated through developer portals, release notes, and direct channels.

- Support Period: Maintaining a defined support period for deprecated versions, allowing consumers ample time to migrate their integrations. This often involves critical bug fixes but no new feature development.

- Phased Retirement: Gradually phasing out older models or API versions. This might involve reducing their capacity, increasing their cost, or eventually deactivating them. An AI Gateway can manage traffic routing to facilitate this phased retirement.

- Monitoring Usage of Deprecated Versions: Continuously monitoring the usage of deprecated versions to track migration progress and identify any consumers who are struggling to transition.

- Archiving: Securely archiving deprecated models, data, and API definitions for historical reference, auditability, or potential future use. This is especially important for compliance requirements.

A well-managed deprecation process is a hallmark of mature API Governance and ensures a smooth transition for all stakeholders.
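
Tracking who still calls a deprecated version can be done directly from gateway access logs. The sketch below assumes a hypothetical log format of `(consumer, api_version)` pairs and lists the consumers that have not yet migrated, busiest first.

```python
from collections import Counter
from typing import Iterable, List, Set, Tuple


def stragglers(call_log: Iterable[Tuple[str, str]],
               deprecated: Set[str]) -> List[Tuple[str, int]]:
    """Consumers still calling deprecated versions, ordered by call count."""
    counts = Counter(consumer for consumer, version in call_log
                     if version in deprecated)
    return counts.most_common()


# Hypothetical access-log entries: (consumer, api_version).
call_log = [
    ("billing", "v1"),
    ("search", "v2"),
    ("billing", "v1"),
    ("crm", "v1"),
]
print(stragglers(call_log, deprecated={"v1"}))
```

Here `billing` and `crm` would be contacted before `v1` is switched off, while `search` has already migrated.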

6.4. Knowledge Transfer & Documentation: Ensuring Continuity

As products evolve and personnel change, robust knowledge transfer and up-to-date documentation are paramount for long-term product health and operational continuity.

- Comprehensive Internal Documentation: Maintaining detailed documentation of the LLM product's architecture, design decisions, data pipelines, model training processes, prompt engineering strategies, evaluation metrics, and operational procedures. This includes runbooks, system diagrams, and troubleshooting guides.

- External Documentation & Developer Portal: Ensuring that all external-facing documentation, especially for APIs managed by an AI Gateway, is kept current. This includes API specifications, usage examples, authentication details, and release notes for all LLM service versions.

- Regular Knowledge Sharing Sessions: Conducting regular internal workshops, presentations, and training sessions to share insights, best practices, and new developments across data science, engineering, and product teams.

- Centralized Knowledge Repositories: Utilizing centralized platforms (e.g., wikis, Confluence, internal knowledge bases) to store and organize all product-related information, making it easily searchable and accessible to relevant teams.

Effective knowledge management minimizes dependencies on individual team members, accelerates onboarding of new staff, and provides a reliable reference for future development and maintenance efforts.

6.5. Leveraging Data for Future Products: Insights from Production Usage

The ongoing operation of an LLM product generates a wealth of valuable data from real-world user interactions. This data is a strategic asset that can inform the development of future products and features.

- User Interaction Analysis: Analyzing user queries, LLM responses, and feedback to identify unmet needs, common pain points, or emerging use cases that could form the basis of new LLM products or extensions.

- Performance Data for Benchmarking: Using aggregated performance data (e.g., latency, accuracy, cost) from current products to set realistic benchmarks and goals for future LLM initiatives.

- Identifying Gaps and Opportunities: Looking for instances where the current LLM struggles or fails to generate satisfactory responses. These "failure modes" often highlight opportunities for more specialized models, better fine-tuning, or entirely new AI solutions.

- Domain-Specific Data Acquisition: Production usage can highlight areas where additional domain-specific data would significantly improve model performance, guiding future data collection efforts.

By strategically leveraging insights derived from the operational phase, organizations can continuously innovate, stay ahead of the curve, and ensure that their LLM product portfolio remains dynamic, relevant, and impactful.

7. Key Methodologies and Best Practices for LLM PLM

Optimizing Product Lifecycle Management for LLM software requires a synthesis of established software engineering methodologies with specialized practices tailored for machine learning. Integrating MLOps, adapting DevOps principles, embracing Agile development, and fostering cross-functional collaboration are paramount to navigating the complexities and rapid evolution inherent in LLM projects.

7.1. MLOps for LLMs: Extending MLOps to Include Prompt Engineering, Evaluation, and Ethical Considerations

MLOps (Machine Learning Operations) provides the foundational practices for managing the end-to-end machine learning lifecycle, from data preparation to model deployment and monitoring. For LLMs, MLOps must be extended to account for their unique characteristics:

- Data-centric MLOps: Given the paramount importance of data quality in LLMs, MLOps for LLMs places an even greater emphasis on robust data pipelines. This includes automated data ingestion, cleaning, validation, versioning (DVC or similar), and continuous monitoring for data drift. Ensuring traceability from raw data to model predictions is critical for debugging and auditability.

- Model Versioning and Registry: Just like traditional code, LLM models, along with their associated artifacts (hyperparameters, training scripts, evaluation metrics), must be meticulously versioned and stored in a central model registry. This allows for reproducible experiments, controlled deployments, and easy rollbacks.

- Prompt Engineering Lifecycle: MLOps for LLMs must incorporate the lifecycle of prompt engineering. This means versioning prompts, testing them systematically, tracking their performance, and managing their deployment. An AI Gateway or specialized prompt management platform often facilitates this, allowing prompts to be treated as first-class citizens in the development process.

- Automated Evaluation and Benchmarking: Beyond standard ML metrics, LLM evaluation requires automated and human-in-the-loop assessments for quality, coherence, relevance, factual accuracy, and safety. MLOps should automate the execution of these evaluation suites against new model versions or prompt changes, providing continuous feedback.

- Ethical AI Integration: MLOps for LLMs must explicitly incorporate ethical considerations. This involves automated bias detection during training and inference, monitoring for harmful content generation, and integrating mechanisms for transparency and explainability into the operational workflows. Regular ethical audits of models in production should be part of the MLOps pipeline.

- Inference Management: Deploying and scaling LLMs in production requires specialized infrastructure and tools for inference. This includes optimized serving frameworks, efficient resource allocation (GPUs), dynamic load balancing (often managed by an LLM Gateway), and comprehensive observability for real-time performance, cost, and usage monitoring.
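
Treating prompts as versioned artifacts, as described in the prompt engineering lifecycle item above, can be prototyped with a small in-memory registry. This is a sketch under stated assumptions: `PromptRegistry` is a hypothetical class, versions are simple integers starting at 1, and a real system would persist templates and record who changed them.

```python
from string import Template
from typing import Dict, List, Optional


class PromptRegistry:
    """Stores every revision of each named prompt template."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[str]] = {}

    def register(self, name: str, template: str) -> int:
        """Add a template revision and return its 1-based version number."""
        versions = self._versions.setdefault(name, [])
        if versions and versions[-1] == template:
            return len(versions)  # unchanged: do not create a new version
        versions.append(template)
        return len(versions)

    def render(self, name: str, version: Optional[int] = None,
               **variables: str) -> str:
        """Fill in a template; defaults to the latest version."""
        versions = self._versions[name]
        template = versions[-1] if version is None else versions[version - 1]
        return Template(template).substitute(**variables)


registry = PromptRegistry()
registry.register("summarize", "Summarize: $text")
registry.register("summarize", "Summarize in one sentence: $text")

print(registry.render("summarize", text="LLM gateways route requests."))
print(registry.render("summarize", version=1, text="LLM gateways route requests."))
```

Pinning a version in `render` lets an evaluation suite compare old and new prompts on the same inputs, and it gives operations a one-line rollback if a new prompt misbehaves.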

7.2. DevOps Principles Applied to LLMs: CI/CD for Model Deployment, Infrastructure as Code

DevOps principles, which emphasize collaboration, automation, and continuous delivery, are equally vital for LLM software, albeit with AI-specific adaptations:

- Continuous Integration (CI) for Models: CI pipelines for LLMs extend beyond code compilation to include automated data validation, prompt validation, model training or fine-tuning, and preliminary evaluation. Every code commit that affects the model or its interaction should trigger these automated checks to ensure early detection of issues.

- Continuous Delivery/Deployment (CD) for Models: CD pipelines automate the release of new LLM versions to production. This involves packaging models, configuring inference services, and deploying them to infrastructure. Strategies like canary deployments or blue/green deployments are crucial for LLMs to minimize risk during updates. An AI Gateway can be configured as part of the CD pipeline to manage traffic routing between old and new model versions.

- Infrastructure as Code (IaC): Managing the underlying infrastructure for LLM training and inference (e.g., GPU clusters, vector databases, inference endpoints) using IaC tools (Terraform, CloudFormation). This ensures reproducible environments, consistent configurations, and efficient resource provisioning.

- Monitoring and Logging: Implementing comprehensive monitoring and logging across the entire LLM stack, from data pipelines to inference services. This provides critical observability for performance, errors, costs, and security, enabling rapid incident response.

- Automated Testing for LLMs: Developing sophisticated automated tests that go beyond unit tests, including integration tests for LLM Gateway interactions, end-to-end tests simulating user scenarios, and adversarial tests to probe for model vulnerabilities or biases.
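
A CI pipeline for LLM changes usually ends in a quality gate: a candidate model or prompt is scored on a fixed evaluation set and blocked if it regresses too far from the current baseline. The sketch below shows that idea with a stub model and a deliberately crude substring check; the evaluation cases, the scoring rule, and the two-point regression allowance are all assumptions.

```python
from typing import Callable, List, Tuple

EvalCase = Tuple[str, str]  # (prompt, text expected somewhere in the answer)


def evaluate(model: Callable[[str], str], cases: List[EvalCase]) -> float:
    """Fraction of cases whose answer contains the expected text."""
    if not cases:
        raise ValueError("evaluation set must not be empty")
    passed = sum(1 for prompt, expected in cases
                 if expected.lower() in model(prompt).lower())
    return passed / len(cases)


def gate(candidate_score: float, baseline_score: float,
         max_regression: float = 0.02) -> bool:
    """Allow deployment unless the candidate regresses beyond the allowance."""
    return candidate_score >= baseline_score - max_regression


# Stub standing in for a real model client (assumption).
def stub_model(prompt: str) -> str:
    if "France" in prompt:
        return "Paris is the capital of France."
    return "I do not know."


cases = [("Capital of France?", "Paris"), ("Capital of Peru?", "Lima")]
score = evaluate(stub_model, cases)
print(score, gate(score, baseline_score=0.9))
```

With the stub, the score is 0.5 and the gate returns `False`, so the pipeline would stop before deployment. Real evaluation suites typically combine checks like this with model-graded or human-reviewed samples, since substring matching misses paraphrased correct answers.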

7.3. Agile Development for LLM Projects: Iterative, Adaptive Approaches

Agile methodologies, known for their iterative and adaptive nature, are particularly well-suited for LLM projects due to the inherent uncertainty and rapid evolution of the technology.

- Short Sprints and Iterations: Breaking down LLM product development into short, focused sprints (e.g., 2-week cycles). Each sprint can aim to deliver a working increment, such as an improved prompt, a fine-tuned model iteration, or a new evaluation metric.

- Continuous Feedback and Adaptation: Agile emphasizes continuous feedback loops, both from internal stakeholders and end-users. This allows teams to quickly adapt to new data insights, unexpected model behaviors, or changing market requirements, rather than rigidly adhering to a long-term plan.

- Cross-Functional Teams: Agile promotes cross-functional teams comprising data scientists, machine learning engineers, software engineers, product managers, and ethicists. This collaborative structure ensures that all perspectives are considered throughout the development process, from ideation to deployment.

- Minimum Viable Product (MVP) and Iterative Refinement: Launching an MVP with core LLM functionality and then iteratively adding features and refining the model based on real-world usage and feedback. This minimizes initial investment and allows for early market validation.

- Embracing Change: Agile frameworks encourage embracing change as a natural part of the development process, which is critical in the fast-moving LLM landscape where new models and techniques emerge constantly.

7.4. Cross-functional Teams: Data Scientists, Engineers, Product Managers, Ethicists

The complexity and multidisciplinary nature of LLM software necessitate truly cross-functional teams. Siloed departments often lead to miscommunication, inefficient workflows, and suboptimal products.

- Data Scientists: Focus on model development, training, fine-tuning, prompt engineering, and advanced evaluation techniques. They bring expertise in statistical modeling and machine learning algorithms.

- Machine Learning Engineers (MLEs): Bridge the gap between data science and software engineering. They build and maintain MLOps pipelines, optimize models for production, manage inference infrastructure, and implement LLM Gateway configurations.

- Software Engineers: Develop the surrounding application logic, front-end interfaces, backend services, and integrate LLM capabilities via APIs managed by an AI Gateway. They ensure the entire application is robust, scalable, and user-friendly.

- Product Managers: Define the product vision, strategy, and roadmap. They translate market needs into LLM-specific features, prioritize development efforts, and ensure the product delivers value to users. They also play a crucial role in managing the API Governance aspects from a business perspective.

- Ethicists/Responsible AI Specialists: Identify, assess, and mitigate ethical risks (bias, fairness, privacy, safety) throughout the product lifecycle. They provide guidance on responsible design, data sourcing, model evaluation, and compliance.

- Domain Experts: Individuals with deep knowledge of the specific industry or problem domain. They provide invaluable context for data labeling, prompt engineering, and validating the relevance and accuracy of LLM outputs.

By fostering collaboration and clear communication across these diverse roles, organizations can build more innovative, reliable, and responsible LLM products. Tools like APIPark, with its ability to share API services within teams and provide independent access permissions, significantly enhance this cross-functional collaboration, streamlining the entire LLM product lifecycle management process.

Conclusion

Optimizing Product Lifecycle Management for LLM software is an intricate yet indispensable endeavor for any organization seeking to harness the transformative power of generative AI. The journey from nascent idea to a fully operational, continuously evolving LLM product is fraught with unique challenges that traditional PLM frameworks often fail to address adequately. We have traversed this landscape, highlighting the distinct characteristics of LLM development and the critical considerations at each stage, from data-centric design and iterative prompt engineering to scalable deployment and vigilant operational oversight.

The introduction of LLM technology mandates a shift towards more adaptive, data-driven, and ethically conscious product management. Strategic planning from the ideation phase, meticulously defining use cases, data strategies, and ethical boundaries, sets the stage for success. During development, robust MLOps practices, encompassing version control for models, data, and even prompts, coupled with human-in-the-loop processes, ensure the creation of high-quality, aligned models.

However, the true test of an LLM product's viability often lies in its deployment and sustained operation. Here, the LLM Gateway and the broader AI Gateway emerge as non-negotiable components. These gateways act as intelligent intermediaries, simplifying complex integrations, ensuring scalability, optimizing costs, and fortifying security for LLM services. Platforms such as APIPark exemplify this role, offering a unified interface, comprehensive API lifecycle management, and powerful observability features that are vital for real-world deployments.

Furthermore, diligent API Governance underpins the long-term success of LLM software by establishing clear standards for API design, managing versions, enforcing security policies, and providing comprehensive documentation. This structured approach not only enhances developer experience but also safeguards the integrity and reliability of LLM-powered applications across an enterprise. Finally, continuous improvement through iterative development, strategic model updates, and graceful deprecation, supported by cross-functional collaboration, ensures that LLM products remain cutting-edge, relevant, and capable of delivering sustained value.

The future of LLM software is one of relentless innovation and increasing complexity. Organizations that invest in a holistic, AI-centric PLM strategy, leveraging advanced tooling and methodologies, will be best positioned to navigate this dynamic landscape. By thoughtfully managing every aspect of the product lifecycle—from data to deployment, and from governance to evolution—enterprises can unlock unprecedented capabilities, drive meaningful impact, and establish themselves as leaders in the age of intelligent applications.

Comparison of Traditional Software PLM vs. LLM Software PLM

| Aspect | Traditional Software PLM | LLM Software PLM |
|---|---|---|
| Product Core | Code logic, algorithms | Model parameters, training data, prompt engineering |
| Primary Artifacts | Executables, source code, libraries | Trained models, datasets, prompts, evaluation metrics |
| Development Paradigm | Deterministic, rule-based | Probabilistic, data-driven, emergent behaviors |
| Quality Assurance Focus | Code bugs, functional correctness | Model performance (accuracy, relevance), bias, hallucinations, safety, ethical alignment |
| Key Resource | Developer time, computational resources | High-quality data, GPU compute, specialized AI talent |
| Version Control | Source code (Git) | Source code, model weights, data versions, prompt versions |
| Deployment Complexity | Server/container configuration, microservices | Optimized inference engines, GPU management, multi-model orchestration, LLM Gateway |
| Operational Monitoring | Uptime, latency, error rates, resource usage | Uptime, latency, error rates, token usage, data/model drift, bias detection, hallucination rates |
| Evolution/Maintenance | Feature updates, bug fixes, refactoring | Model retraining, fine-tuning, prompt optimization, data refresh, new model architecture integration |
| Key Governance Need | Software Architecture Governance, Security Governance | API Governance, Data Governance, Ethical AI Governance, MLOps Governance |
| Security Concerns | Vulnerabilities in code, access control | Data privacy, model poisoning, prompt injection, intellectual property theft |
| Primary Cost Driver | Infrastructure, developer salaries | GPU compute, data acquisition, proprietary LLM API usage, AI talent salaries |
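
The Version Control row is easier to picture with a small example. The sketch below is one possible way to pin code, model, data, and prompt versions together in a single release record. The class name, field names, and sample values are all hypothetical and are not taken from any specific MLOps tool.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class ReleaseManifest:
    """Pins every artifact that defines one LLM product release (hypothetical schema)."""
    code_commit: str      # Git commit of the application code
    model_version: str    # identifier of the model weights or hosted model
    dataset_version: str  # version tag of the training/evaluation data
    prompt_version: str   # version tag of the prompt template
    prompt_template: str  # the prompt text itself, so any edit changes the fingerprint

    def fingerprint(self) -> str:
        """Return a short, deterministic hash over all pinned artifacts."""
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


# Illustrative values only.
baseline = ReleaseManifest(
    code_commit="a1b2c3d",
    model_version="support-bot-ft-003",
    dataset_version="tickets-2024-05",
    prompt_version="v7",
    prompt_template="Answer the customer's question politely:\n{question}",
)

# A prompt-only change: code, model, and data stay pinned to the same versions.
candidate = replace(
    baseline,
    prompt_version="v8",
    prompt_template="Answer the customer's question politely and concisely:\n{question}",
)

print(baseline.fingerprint(), candidate.fingerprint())
```

Because the fingerprint covers all five fields, a change to the prompt alone produces a new release identifier, which makes it possible to trace a behavior change in production back to the exact combination of artifacts that caused it.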

Frequently Asked Questions (FAQs)

1. What is an LLM Gateway, and why is it crucial for LLM software deployment?

An LLM Gateway is a specialized API gateway designed to manage interactions with Large Language Models. It acts as an abstraction layer between client applications and various LLM providers or internally deployed models, offering a unified interface. It's crucial because it simplifies deployment, handles complexities like load balancing, authentication, rate limiting, and cost optimization across multiple LLMs, ensuring scalability, security, and consistent performance in production environments. Without it, managing diverse LLM APIs directly becomes brittle and inefficient.

2. How does API Governance specifically apply to LLM software?

API Governance for LLM software extends traditional API management to address the unique characteristics of AI services. It involves defining standards for LLM API design, managing versioning for evolving models and prompts, enforcing stringent security policies (e.g., preventing prompt injection), and providing comprehensive documentation via developer portals. It ensures LLM capabilities are exposed reliably, securely, and consistently across an organization, facilitating integration while mitigating risks associated with AI's probabilistic nature and rapid changes.

3. What are the biggest challenges in the "Development and Training" phase for LLM software compared to traditional software?

The biggest challenges include the data-centric nature (requiring robust data engineering pipelines, ethical sourcing, and continuous quality control), the iterative and experimental nature of model training and prompt engineering, and the need for Human-in-the-Loop (HITL) processes like RLHF to align models with human values. Furthermore, version control extends beyond code to encompass models, datasets, and prompts, demanding sophisticated MLOps practices, unlike the more deterministic code-focused approach of traditional software development.

4. How can organizations effectively manage the operational costs associated with LLM software in production?

Effective cost management involves a multi-pronged strategy. This includes optimizing token usage through concise prompt engineering and response truncation, employing intelligent model choices (e.g., using smaller models for simpler tasks and larger ones only when necessary, often facilitated by an AI Gateway for routing), implementing aggressive caching for common queries, and optimizing resource scaling for internally hosted models. Continuous monitoring of token consumption and cloud expenditures is also vital to identify and address cost inefficiencies in real-time.

5. Why is a cross-functional team approach particularly important for LLM Product Lifecycle Management?

LLM PLM is inherently multidisciplinary, blending data science, machine learning engineering, traditional software development, product management, and ethical considerations. A cross-functional team ensures all critical perspectives are integrated from ideation to retirement. Data scientists develop models, MLEs operationalize them, software engineers build the surrounding application, product managers define strategy and user needs, and ethicists guide responsible AI practices. This collaboration minimizes silos, accelerates innovation, improves problem-solving, and ensures the development of more robust, ethical, and market-aligned LLM products.
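
The routing and caching ideas from questions 1 and 4 can be sketched in a few lines. The example below is a minimal illustration, not how any particular gateway product works. The model names, the word-count threshold, and the `CachingRouter` class are hypothetical, and prompt length is only a crude stand-in for task complexity.

```python
from typing import Callable, Dict

# Hypothetical model names and threshold; a real gateway would load these from config.
SMALL_MODEL = "small-model"
LARGE_MODEL = "large-model"
MAX_SMALL_MODEL_WORDS = 50


def choose_model(prompt: str) -> str:
    """Route short prompts to the cheaper model and longer ones to the larger model."""
    return SMALL_MODEL if len(prompt.split()) <= MAX_SMALL_MODEL_WORDS else LARGE_MODEL


class CachingRouter:
    """Caches responses by normalized prompt and counts upstream calls per model."""

    def __init__(self, backends: Dict[str, Callable[[str], str]]) -> None:
        self.backends = backends
        self.cache: Dict[str, str] = {}
        self.calls: Dict[str, int] = {name: 0 for name in backends}

    def complete(self, prompt: str) -> str:
        key = " ".join(prompt.lower().split())  # normalize case and whitespace
        if key in self.cache:
            return self.cache[key]  # cache hit: no upstream call, no token cost
        model = choose_model(prompt)
        self.calls[model] += 1
        response = self.backends[model](prompt)
        self.cache[key] = response
        return response


# Stub backends stand in for real provider clients.
router = CachingRouter({
    SMALL_MODEL: lambda p: f"[small] {p[:20]}",
    LARGE_MODEL: lambda p: f"[large] {p[:20]}",
})

print(router.complete("What is PLM?"))
print(router.complete("what is   plm?"))  # served from cache
print(router.calls)
```

Exact-match caching on a normalized prompt only helps with repeated queries; whether it is safe to reuse a response also depends on the use case, since cached answers ignore per-user context. The per-model call counter is the kind of signal that the continuous cost monitoring described in question 4 would build on.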
