Mastering API Gateway Main Concepts: Your Essential Guide

In the intricate tapestry of modern software architecture, where applications are no longer monolithic giants but rather constellations of interconnected services, the role of Application Programming Interfaces (APIs) has become unequivocally central. APIs act as the universal language, the standardized protocols that allow disparate systems to communicate, share data, and collaborate seamlessly. From the smallest mobile application fetching data from a backend server to sprawling enterprise systems integrating with a myriad of third-party services, APIs are the invisible threads that hold the digital world together. However, this burgeoning reliance on APIs brings with it a complex array of challenges: how do you manage hundreds, or even thousands, of distinct API endpoints? How do you ensure their security, optimize their performance, maintain their reliability, and provide a consistent experience for developers? The answer, increasingly, lies in the strategic implementation and mastery of an API Gateway.

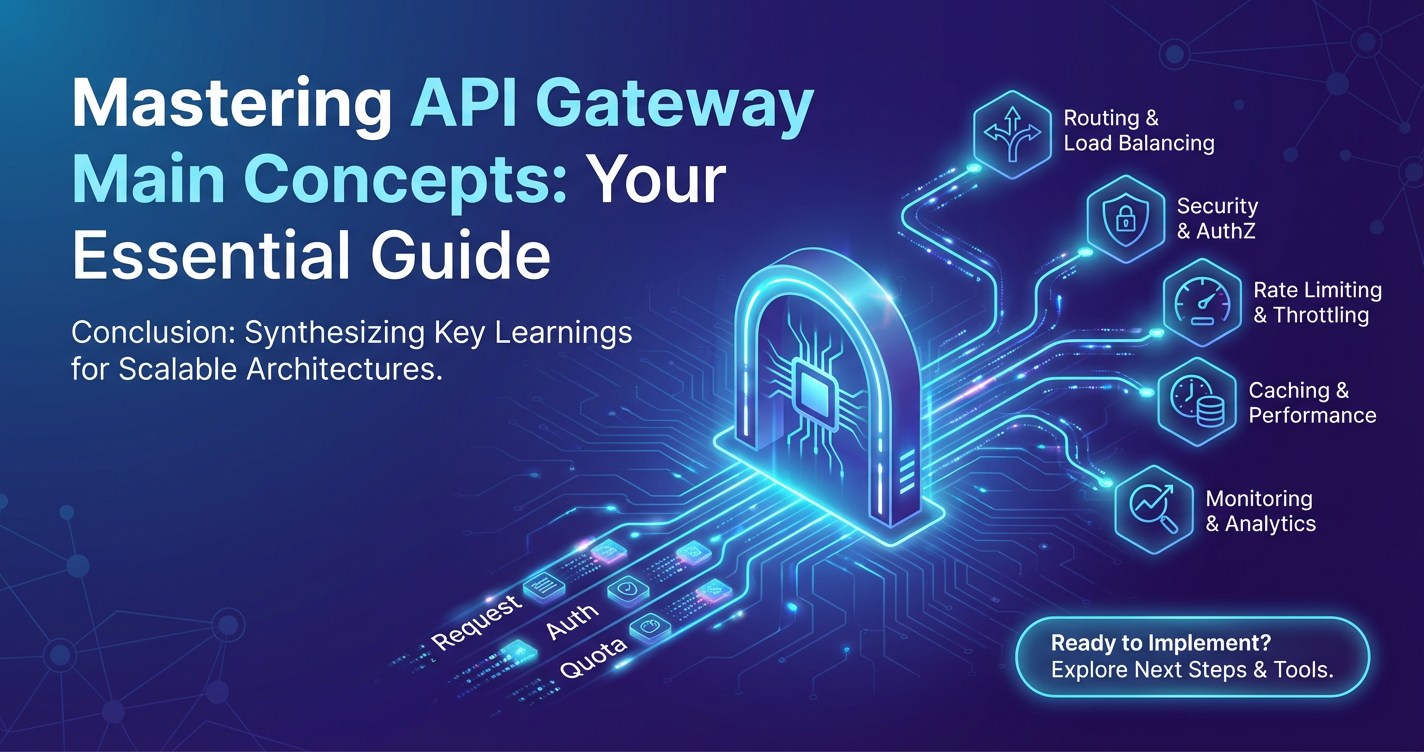

An API Gateway stands as the vigilant sentinel at the perimeter of your API ecosystem, acting as a single, unified entry point for all incoming requests. It's more than just a simple proxy; it's an intelligent traffic controller, a robust security enforcer, and a sophisticated policy engine, all rolled into one indispensable component. Without a well-designed and properly configured API Gateway, organizations risk succumbing to the chaos of scattered security policies, fragmented monitoring efforts, and a tangled web of direct service invocations that are prone to errors, security vulnerabilities, and scaling nightmares. This guide aims to demystify the core concepts behind API Gateway technology, providing a deep dive into its essential functions, architectural implications, and best practices. By thoroughly understanding these principles, you will be equipped to harness the full power of an API Gateway to build more secure, scalable, and manageable API-driven solutions, ultimately propelling your digital transformation journey forward.

What is an API Gateway? A Fundamental Understanding

At its heart, an API Gateway is a server that acts as an API management tool, sitting between a client and a collection of backend services. It’s the single point of entry for all incoming client requests, routing them to the appropriate backend service, and returning the aggregated responses to the client. Imagine it as the command center for your entire API landscape, a sophisticated control tower orchestrating the flow of communication between consumers and providers. This central role is not merely about forwarding requests; it's about adding a layer of intelligence, control, and consistency that significantly enhances the overall API experience and operational efficiency.

The concept of an API Gateway emerged as a direct response to the increasing complexity of modern software architectures, particularly with the widespread adoption of microservices. In a traditional monolithic application, a single application handles all functionalities. However, in a microservices architecture, an application is broken down into small, independent services, each responsible for a specific business capability. While this approach offers immense benefits in terms of scalability, resilience, and independent deployment, it also introduces a significant challenge: how do clients interact with potentially dozens or hundreds of distinct services? Directly exposing each microservice to external clients would create a client-side complexity nightmare, requiring clients to understand the network location, authentication requirements, and specific endpoints of every service they needed to interact with. Moreover, applying cross-cutting concerns like security, rate limiting, and monitoring consistently across all these services would become a monumental, if not impossible, task.

This is where the API Gateway steps in, acting as a crucial abstraction layer. It lets a client replace several calls to individual backend services with a single request to the gateway. For instance, a mobile application might need to fetch user profile information, order history, and current shopping cart contents. Without an API Gateway, it would have to make three separate calls to three different microservices. With one, the client makes a single request, which the gateway dispatches to the respective services, aggregates their responses, and returns as a unified payload. This simplifies client development, reduces network chattiness between client and backend, and can improve perceived performance, particularly on high-latency mobile networks.
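The fan-out and aggregation step described above can be sketched as follows. This is a minimal illustration, not a production gateway: the three `fetch_*` coroutines are hypothetical stand-ins for real HTTP or gRPC calls, and their return values are made up.

```python
import asyncio


# Hypothetical stand-ins for three backend microservices. A real gateway
# would issue HTTP or gRPC calls here instead of sleeping.
async def fetch_profile(user_id: str) -> dict:
    await asyncio.sleep(0.01)
    return {"id": user_id, "name": "Ada"}


async def fetch_orders(user_id: str) -> list:
    await asyncio.sleep(0.01)
    return [{"order_id": 1, "total": 42.0}]


async def fetch_cart(user_id: str) -> dict:
    await asyncio.sleep(0.01)
    return {"items": ["book"]}


async def get_dashboard(user_id: str) -> dict:
    """Serve one client request by calling three services concurrently."""
    profile, orders, cart = await asyncio.gather(
        fetch_profile(user_id), fetch_orders(user_id), fetch_cart(user_id)
    )
    # Merge the three responses into the single payload the client receives.
    return {"profile": profile, "orders": orders, "cart": cart}


dashboard = asyncio.run(get_dashboard("u-123"))
```

Because the backend calls run concurrently, the client waits roughly as long as the slowest service rather than the sum of all three.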

Furthermore, an API Gateway differs significantly from a traditional reverse proxy or load balancer. While a reverse proxy forwards requests to a server on behalf of a client and a load balancer distributes network traffic across multiple servers, an API Gateway operates at a higher application level. It understands the semantics of the API calls, allowing it to apply more sophisticated routing logic, perform data transformations, enforce complex security policies based on the request content, and provide detailed API-specific metrics. It’s not just about directing traffic; it’s about actively managing, enhancing, and securing that traffic in a way that is deeply aware of the API context. This evolution from simple network proxies to intelligent API orchestrators has solidified the API Gateway's position as an indispensable component in any robust, scalable, and secure API ecosystem.

Core Concepts and Functions of an API Gateway

The power of an API Gateway lies in its comprehensive suite of functions, each designed to address specific challenges in API management. These core concepts collectively transform the gateway from a mere traffic forwarder into an intelligent, feature-rich platform that forms the backbone of modern API strategies.

A. Request Routing and Load Balancing

At its foundational level, an API Gateway is a sophisticated router. When a client sends a request to the gateway, the first and most critical task is to determine which backend service or set of services should receive that request. This isn't a simple one-to-one mapping; modern API Gateways employ highly configurable routing rules based on a variety of criteria. These criteria can include the request path (e.g., /users vs. /products), HTTP methods (GET, POST, PUT, DELETE), request headers (e.g., Accept or custom headers), query parameters, and even the source IP address of the client. This granular control allows organizations to direct specific types of requests to specialized services, enabling fine-tuned service decomposition and optimization. For instance, requests from mobile clients might be routed to a mobile-optimized version of a service, while web client requests go to another.
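A rule-based router of this kind can be sketched in a few lines. The `Route` class, the service names, and the `X-Client-Type` header are all assumptions made for illustration; real gateways usually express the same idea in configuration rather than code, and treat header names case-insensitively.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Route:
    path_prefix: str                       # e.g. "/users"
    upstream: str                          # hypothetical backend service name
    methods: frozenset = frozenset({"GET", "POST", "PUT", "DELETE"})
    headers: dict = field(default_factory=dict)  # header name -> required value

    def matches(self, method: str, path: str, headers: dict) -> bool:
        # Match whole path segments so "/users" does not match "/usersettings".
        path_ok = path == self.path_prefix or path.startswith(self.path_prefix + "/")
        if method not in self.methods or not path_ok:
            return False
        return all(headers.get(name) == value for name, value in self.headers.items())


def select_upstream(routes, method: str, path: str, headers: dict) -> Optional[str]:
    """Return the upstream of the most specific matching route, or None."""
    candidates = [r for r in routes if r.matches(method, path, headers)]
    if not candidates:
        return None
    # Prefer longer prefixes, then routes with more header constraints.
    best = max(candidates, key=lambda r: (len(r.path_prefix), len(r.headers)))
    return best.upstream


ROUTES = [
    Route("/users", "user-service"),
    Route("/users", "user-service-mobile", headers={"X-Client-Type": "mobile"}),
    Route("/products", "product-service", methods=frozenset({"GET"})),
]
```

With these rules, a `GET /users/42` carrying `X-Client-Type: mobile` goes to the mobile-optimized upstream, while the same request without the header falls back to the general one.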

Beyond merely routing, the API Gateway also takes on the crucial responsibility of load balancing. In a distributed system, multiple instances of the same service often run concurrently to handle increased traffic and ensure high availability. When a request needs to be sent to a particular service, the gateway distributes that request among the available instances of that service. Various load balancing algorithms can be employed, such as:

* Round Robin: Requests are distributed sequentially to each server in the pool.
* Least Connections: The request is sent to the server with the fewest active connections, so that less busy servers receive more of the new traffic.
* Weighted Round Robin/Least Connections: Servers are assigned a weight based on their capacity, and traffic is distributed proportionally.
* IP Hash: The client's IP address is hashed to determine which server receives the request, which gives session stickiness as long as the server pool does not change.
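Three of these strategies are small enough to sketch directly. The class and function names below are illustrative only, the weighted variants are omitted for brevity, and a real gateway would also need thread safety and health awareness.

```python
import hashlib
import itertools


class RoundRobin:
    """Hand out servers in a fixed rotating order."""

    def __init__(self, servers):
        self._cycle = itertools.cycle(servers)

    def pick(self) -> str:
        return next(self._cycle)


class LeastConnections:
    """Pick the server that currently has the fewest in-flight requests."""

    def __init__(self, servers):
        self.active = {server: 0 for server in servers}

    def acquire(self) -> str:
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server: str) -> None:
        # Call this when the proxied request has finished.
        self.active[server] -= 1


def ip_hash(servers, client_ip: str) -> str:
    """Map a client IP to a server deterministically (sticky while the pool is stable)."""
    digest = hashlib.sha256(client_ip.encode()).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]
```

Note that simple modulo hashing remaps most clients whenever a server is added or removed; consistent hashing is the usual remedy when that matters.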

The importance of dynamic service discovery cannot be overstated in this context. In highly dynamic microservices environments, service instances can frequently scale up, scale down, or fail. The API Gateway needs to be aware of the current operational status and network locations of all backend services. This is typically achieved by integrating the gateway with a service registry (e.g., Consul, Eureka, ZooKeeper) or with the service discovery built into an orchestrator such as Kubernetes, sometimes complemented by a service mesh. This integration allows the gateway to automatically discover available service instances and remove unhealthy ones from the routing pool, so that requests are sent only to instances believed to be healthy. This automated resilience and scalability are fundamental to maintaining a high-performance and reliable API ecosystem, reducing outages and keeping user experiences consistent even under fluctuating loads.
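To make the idea concrete, here is a toy in-memory registry based on heartbeats with a time-to-live. It is only a sketch of the concept: `ServiceRegistry` and its methods are hypothetical and do not mirror the API of Consul, Eureka, ZooKeeper, or Kubernetes.

```python
import time


class ServiceRegistry:
    """Track service instances and treat those with stale heartbeats as unhealthy."""

    def __init__(self, ttl_seconds: float = 10.0, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock            # injectable so the example is testable
        self._last_seen = {}          # (service, address) -> last heartbeat time

    def heartbeat(self, service: str, address: str) -> None:
        self._last_seen[(service, address)] = self.clock()

    def healthy_instances(self, service: str) -> list:
        now = self.clock()
        return sorted(
            address
            for (name, address), seen in self._last_seen.items()
            if name == service and now - seen <= self.ttl
        )
```

A gateway would consult `healthy_instances` before load balancing, so an instance that stops sending heartbeats silently drops out of the routing pool once its TTL expires.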

B. Authentication and Authorization

One of the most vital functions of an API Gateway is to act as the primary enforcement point for security, specifically handling authentication and authorization. Placing these concerns at the gateway layer centralizes security logic, offloads individual backend services from repetitive security tasks, and ensures consistent policy application across all APIs.

Authentication is the process of verifying the identity of the client making the API request. The API Gateway supports a wide array of authentication mechanisms to cater to diverse client types and security requirements:

* API Keys: A simple, often self-service method where clients are issued unique keys. The gateway validates these keys against a stored list or a dedicated authentication service. While easy to implement, API keys are typically best suited for lower-security contexts or for tracking usage.
* OAuth 2.0: A widely adopted standard for delegated authorization, allowing third-party applications to access a user's resources without needing their credentials. The API Gateway can act as a resource server, validating access tokens issued by an OAuth authorization server.
* JSON Web Tokens (JWT): Self-contained tokens that securely transmit information between parties. The gateway can validate the signature and claims within a JWT (e.g., expiration, audience) to authenticate the client and even extract user information without needing to query an identity provider for every request.
* OpenID Connect (OIDC): An identity layer built on top of OAuth 2.0, providing single sign-on (SSO) and identity verification. The gateway can integrate with OIDC providers to authenticate users.
* Mutual TLS (mTLS): A more robust method where both the client and the server authenticate each other using X.509 certificates, ensuring that only trusted clients can connect to the gateway.
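As an illustration of the JWT case, the sketch below verifies an HS256-signed token using only the Python standard library, checking the signature, expiration, and audience. It is a simplified teaching example under stated assumptions: a shared secret, a single string-valued `aud` claim, and no support for other algorithms or key rotation. In practice a maintained JWT library is the safer choice. The `sign_hs256` helper exists only so the example can produce a token to verify.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


def _b64url_decode(segment: str) -> bytes:
    # JWT segments omit base64 padding, so restore it before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def sign_hs256(claims: dict, secret: bytes) -> str:
    """Helper for the example only: issue a token the way an identity provider might."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    signature = hmac.new(secret, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url_encode(signature)}"


def verify_hs256(token: str, secret: bytes, audience: str,
                 now: Optional[float] = None) -> Optional[dict]:
    """Return the claims if the token is valid, otherwise None."""
    now = time.time() if now is None else now
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        claims = json.loads(_b64url_decode(payload_b64))
        signature = _b64url_decode(signature_b64)
    except ValueError:  # wrong segment count, bad base64, or bad JSON
        return None
    if not isinstance(header, dict) or not isinstance(claims, dict):
        return None
    if header.get("alg") != "HS256":  # never trust the token to choose the algorithm
        return None
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):  # constant-time comparison
        return None
    exp = claims.get("exp")
    if not isinstance(exp, (int, float)) or exp <= now:
        return None
    if claims.get("aud") != audience:
        return None
    return claims
```

A gateway would typically translate a `None` result into an HTTP 401 response and forward the verified claims to the backend on success.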

Once a client is authenticated, authorization comes into play. This is the process of determining what actions an authenticated client is permitted to perform. The API Gateway enforces authorization policies based on various factors:

* Role-Based Access Control (RBAC): Clients are assigned roles (e.g., "admin," "user," "guest"), and each role has specific permissions. The gateway checks if the authenticated user's role has the necessary permissions to access a particular API endpoint or perform a specific action.
* Attribute-Based Access Control (ABAC): A more flexible and granular approach where access decisions are based on a combination of attributes associated with the user (e.g., department, location), the resource (e.g., sensitivity, ownership), and the environment (e.g., time of day).
* Scope-Based Authorization (OAuth scopes): In an OAuth context, access tokens are issued with specific "scopes" (e.g., read:profile, write:orders) that define the extent of access granted. The gateway validates that the token presented has the required scopes for the requested operation.
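The RBAC and scope checks reduce to simple set operations, as the sketch below shows. The role names follow the examples in the text; the permission strings and the `ROLE_PERMISSIONS` table are invented for illustration. The scope check relies on the OAuth 2.0 convention that a scope value is a space-delimited string.

```python
# Hypothetical role-to-permission table; a real deployment would load this
# from configuration or a policy service.
ROLE_PERMISSIONS = {
    "admin": {"orders:read", "orders:write", "users:read", "users:write"},
    "user": {"orders:read", "orders:write"},
    "guest": {"orders:read"},
}


def rbac_allows(roles, permission: str) -> bool:
    """True if any of the caller's roles grants the requested permission."""
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)


def scopes_allow(token_scope: str, required: set) -> bool:
    """True if the token's space-delimited scope string covers every required scope."""
    granted = set(token_scope.split())
    return required <= granted
```

For example, a token carrying `read:profile write:orders` satisfies an endpoint that requires `{"write:orders"}` but not one that requires `{"write:profile"}`.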

By centralizing these security mechanisms, the API Gateway becomes a hardened front door that significantly reduces the attack surface for backend services. It abstracts away complex security protocols from individual service developers, allowing them to focus on business logic while ensuring that every request entering the system adheres to the defined security posture. Token validation, credential handling, and policy enforcement are consistently applied, mitigating the risk of security gaps that can arise from inconsistent implementations across multiple services. The gateway is not a substitute for defense in depth, however: backend services should still validate what they receive, since traffic can sometimes reach them by other paths.

C. Rate Limiting and Throttling

To ensure the stability, fairness, and availability of backend services, API Gateways implement sophisticated mechanisms for rate limiting and throttling. These functions are critical for preventing various issues, including resource exhaustion, denial-of-service (DoS) attacks, and unfair resource consumption by specific clients.

Rate limiting restricts the number of API requests a client can make within a specified time window. For example, a client might be limited to 100 requests per minute. Once this limit is reached, subsequent requests from that client within the time window are rejected, typically with an HTTP 429 "Too Many Requests" status code. This protects backend services from being overwhelmed by a single client, whether maliciously or accidentally (e.g., due to a bug in a client application creating an infinite loop of requests).

Throttling, while often used interchangeably with rate limiting, can refer to a more nuanced control mechanism. It might involve delaying requests, prioritizing certain clients over others, or dynamically adjusting limits based on system load. For instance, a gateway might allow a burst of requests but then slow down the processing for subsequent requests if the backend services are under stress.

The API Gateway employs various strategies to enforce these limits:

* Fixed Window: A straightforward approach where a fixed time window (e.g., 60 seconds) is defined, and requests are counted within that window. At the end of the window, the counter resets. The challenge here is the "burst problem" at the window edges, where a client might make a large number of requests just before and just after the window resets, effectively doubling their allowed rate.
* Sliding Window Log: This method tracks the timestamps of all requests within the last N seconds. When a new request comes in, the gateway checks how many requests occurred in the current window. This provides a more accurate rate count but can be resource-intensive due to storing all timestamps.
* Sliding Window Counter: A more efficient variation that combines the benefits of fixed and sliding windows. It tracks the count for the current window and the previous window and estimates the count for the overlapping portion of the sliding window.
* Token Bucket: This algorithm imagines a bucket with a fixed capacity for "tokens." Tokens are added to the bucket at a constant rate. Each API request consumes one token. If the bucket is empty, the request is rejected or queued. This method allows for bursts of requests up to the bucket's capacity while enforcing a sustainable average rate.
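Two of these strategies, the token bucket and the sliding window counter, are sketched below for a single client. The class names and the injectable `clock` parameter are assumptions made so the example can be tested deterministically; a real gateway would keep one limiter per client key, usually in shared storage so that all gateway nodes see the same counts.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity` while refilling at a steady rate."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Add the tokens earned since the last call, capped at the capacity.
        earned = (now - self.updated) * self.refill_per_second
        self.tokens = min(self.capacity, self.tokens + earned)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # a gateway would answer with HTTP 429 here


class SlidingWindowCounter:
    """Estimate the rolling count from the current and previous fixed windows."""

    def __init__(self, limit: int, window_seconds: float, clock=time.monotonic):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        self.current_index = None
        self.current_count = 0
        self.previous_count = 0

    def allow(self) -> bool:
        now = self.clock()
        index = int(now // self.window)
        if index != self.current_index:
            # Entering a new window: keep the old count only if it is adjacent.
            adjacent = self.current_index is not None and index - 1 == self.current_index
            self.previous_count = self.current_count if adjacent else 0
            self.current_count = 0
            self.current_index = index
        elapsed_fraction = (now % self.window) / self.window
        # Weight the previous window by how much of it still overlaps the sliding window.
        estimated = self.previous_count * (1 - elapsed_fraction) + self.current_count
        if estimated < self.limit:
            self.current_count += 1
            return True
        return False
```

The sliding window counter addresses the fixed-window edge burst: a client that exhausts its quota at the end of one window is still counted against at the start of the next.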

Beyond protecting backend services, rate limiting is also a crucial component of API monetization strategies. Different tiers of service (e.g., free, basic, premium) can be defined with varying rate limits and quotas. A free tier might have a very restrictive limit, while premium subscribers enjoy much higher or even unlimited access. The API Gateway centrally enforces these quotas, managing access for different types of users and applications. This precise control over API consumption ensures fair resource distribution, prevents abuse, and provides a clear mechanism for commercializing API usage.

D. Traffic Management and Policy Enforcement

An API Gateway is a versatile traffic manager, capable of applying a wide array of policies to incoming requests and outgoing responses. These policies extend beyond routing and security, encompassing data transformation, fault tolerance, and versioning, all designed to enhance the flexibility and robustness of the API ecosystem.

Request/Response Transformation is a powerful feature that allows the gateway to modify the content of requests before they reach backend services and modify responses before they are returned to clients. This can involve:

* Header Manipulation: Adding, removing, or modifying HTTP headers. For example, injecting an authenticated user ID into a custom header for the backend service, or removing sensitive headers from the response.
* Payload Modification: Transforming the request or response body. This is particularly useful when backend services expect a different data format (e.g., XML) than what the client sends (e.g., JSON), or when a backend service returns a verbose response that needs to be trimmed down for a mobile client. Schema validation can also occur here, ensuring that incoming data conforms to expected structures.
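Both kinds of transformation can be illustrated with plain dictionaries. In this sketch the `X-User-Id` header name, the list of headers to strip, and the field names are assumptions chosen for the example.

```python
# Response headers this hypothetical gateway hides from clients.
STRIPPED_RESPONSE_HEADERS = {"server", "x-powered-by"}


def transform_request_headers(headers: dict, authenticated_user_id: str) -> dict:
    """Inject the verified user ID, discarding any value the client tried to supply."""
    cleaned = {k: v for k, v in headers.items() if k.lower() != "x-user-id"}
    cleaned["X-User-Id"] = authenticated_user_id
    return cleaned


def transform_response(headers: dict, body: dict, allowed_fields: set):
    """Drop sensitive headers and trim the body to the fields the client needs."""
    safe_headers = {
        k: v for k, v in headers.items() if k.lower() not in STRIPPED_RESPONSE_HEADERS
    }
    trimmed_body = {k: v for k, v in body.items() if k in allowed_fields}
    return safe_headers, trimmed_body
```

Removing a client-supplied `X-User-Id` before injecting the verified one matters: otherwise a caller could impersonate another user simply by sending the header.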

Protocol Translation is another advanced capability. An API Gateway can translate between different communication protocols. For instance, a client might send a standard RESTful HTTP request, but the backend service might communicate using gRPC or a message queue. The gateway can act as an intermediary, bridging these protocol differences seamlessly, allowing clients to interact with services using their preferred protocols without backend changes.

Circuit Breakers and Retries are essential for building resilient distributed systems. When a backend service starts exhibiting failures (e.g., high error rates or slow responses), a circuit breaker pattern implemented in the gateway can temporarily stop sending requests to that service. Instead of continually hammering a failing service, the gateway "breaks the circuit," allowing the service time to recover. After a configurable period, the gateway will cautiously try sending a few requests (half-open state) to see if the service has recovered. Similarly, retries allow the gateway to automatically re-attempt a failed request a few times, often with an exponential backoff strategy, so that transient network or service-side errors are less likely to surface to the client. Retries are safest for idempotent operations, since repeating a non-idempotent request (such as a POST that creates an order) can duplicate its side effects.
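The closed, open, and half-open states can be captured in a small state machine. The sketch below is deliberately simplified: the thresholds are arbitrary, it counts consecutive failures rather than an error rate, and it admits every request while half-open rather than limiting the number of probes. The `backoff_delays` helper shows the exponential schedule a retry loop might sleep through.

```python
import time


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.state = "closed"
        self.failures = 0
        self.opened_at = 0.0

    def allow_request(self) -> bool:
        if self.state == "open":
            # After the cool-down, let trial traffic through (half-open).
            if self.clock() - self.opened_at >= self.reset_timeout:
                self.state = "half_open"
                return True
            return False
        return True

    def record_success(self) -> None:
        self.failures = 0
        self.state = "closed"

    def record_failure(self) -> None:
        self.failures += 1
        # A failed probe, or too many consecutive failures, opens the circuit.
        if self.state == "half_open" or self.failures >= self.failure_threshold:
            self.state = "open"
            self.opened_at = self.clock()


def backoff_delays(base_seconds: float, attempts: int) -> list:
    """Exponential backoff schedule: base, 2x base, 4x base, ..."""
    return [base_seconds * (2 ** attempt) for attempt in range(attempts)]
```

In a gateway, `allow_request` would be checked before proxying, and the outcome of each proxied call would be reported through `record_success` or `record_failure`.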

Caching is a critical optimization technique. The API Gateway can cache responses from backend services for a specified duration. If a subsequent request for the same resource arrives within the cache's validity period, the gateway can serve the response directly from its cache, bypassing the backend service entirely. This significantly reduces latency for clients, decreases the load on backend services, and improves overall system performance, especially for frequently accessed, immutable data.
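A time-to-live cache captures the essence of this behavior. The sketch below is an in-memory illustration with hypothetical names; it ignores eviction, cache-control headers, and invalidation, all of which a real gateway cache has to handle.

```python
import time


class ResponseCache:
    """Serve a stored response until its time-to-live expires."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries = {}  # cache key -> (expires_at, response)

    def get_or_fetch(self, key, fetch):
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]      # cache hit: the backend is not called
        response = fetch()       # cache miss or expired entry: call the backend
        self._entries[key] = (now + self.ttl, response)
        return response
```

A typical cache key combines the HTTP method and path, for example `("GET", "/products/42")`, and only safe, repeatable requests such as GET should normally be cached.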

CORS (Cross-Origin Resource Sharing) Policy Enforcement is vital for web-based clients. Web browsers enforce the same-origin policy, which by default prevents JavaScript on a page from reading responses to requests made to a different origin (scheme, host, and port) than the one that served the page. The API Gateway can be configured to answer preflight OPTIONS requests and add the necessary CORS headers to responses, allowing legitimate cross-origin requests while maintaining security.
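A gateway-side CORS policy largely comes down to deciding which response headers to emit for a given origin. In the sketch below the allowed origin, methods, and headers are example values, and treating every OPTIONS request as a preflight is a simplification.

```python
# Example allow-list; a real policy would come from gateway configuration.
ALLOWED_ORIGINS = {"https://app.example.com"}


def cors_headers(origin: str, method: str) -> dict:
    """Return the CORS headers to add to a response, or {} for disallowed origins."""
    if origin not in ALLOWED_ORIGINS:
        return {}
    headers = {
        "Access-Control-Allow-Origin": origin,
        "Vary": "Origin",  # tell caches that the response depends on the Origin header
    }
    if method == "OPTIONS":
        # Preflight: advertise what the actual request is allowed to use.
        headers["Access-Control-Allow-Methods"] = "GET, POST, PUT, DELETE"
        headers["Access-Control-Allow-Headers"] = "Authorization, Content-Type"
        headers["Access-Control-Max-Age"] = "600"
    return headers
```

Echoing back only allow-listed origins is generally preferable to a blanket `*`, which browsers also refuse to combine with credentialed requests.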

Finally, Versioning Management is crucial for evolving APIs without breaking existing client applications. The API Gateway can route requests to specific versions of a backend service based on various indicators, such as a version number in the URL path (e.g., /v1/users), a custom header (e.g., X-API-Version: 2), or a query parameter. This allows multiple versions of an API to coexist, enabling graceful deprecation strategies and giving developers time to migrate to newer API versions without immediate disruption. These sophisticated traffic management capabilities empower organizations to create highly flexible, robust, and performant APIs that can adapt to changing demands and evolving architectural patterns.
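The three version indicators mentioned above can be resolved in a fixed order of precedence, as in this sketch. The precedence (path, then header, then query parameter), the `version` query parameter name, and the upstream names are all assumptions for illustration.

```python
import re

# Hypothetical mapping from API version to backend deployment.
VERSIONED_UPSTREAMS = {"1": "users-service-v1", "2": "users-service-v2"}


def resolve_version(path: str, headers: dict, query: dict, default: str = "1"):
    """Return (version, path_without_version_prefix)."""
    match = re.match(r"^/v(\d+)(/.*)?$", path)
    if match:
        return match.group(1), match.group(2) or "/"
    version = headers.get("X-API-Version") or query.get("version") or default
    return version, path


def upstream_for(path: str, headers: dict, query: dict):
    version, stripped_path = resolve_version(path, headers, query)
    # None signals an unknown version; a gateway might answer 404 or 400.
    return VERSIONED_UPSTREAMS.get(version), stripped_path
```

Falling back to a default version keeps older clients that send no indicator working while newer clients opt in explicitly.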

E. Monitoring, Logging, and Analytics

Visibility into the health, performance, and usage of your APIs is paramount for operational excellence. An API Gateway provides a centralized vantage point for comprehensive monitoring, detailed logging, and powerful analytics, offering invaluable insights into the entire API ecosystem.

Monitoring capabilities within an API Gateway are designed to provide real-time snapshots of API traffic. This includes critical metrics such as:

* Request Volume: The total number of requests processed over a specific period.
* Latency: The time taken for the gateway to process a request and receive a response from the backend service. This can be broken down into gateway processing time, backend service response time, and network latency.
* Error Rates: The percentage of requests resulting in error codes (e.g., 4xx client errors, 5xx server errors).
* Resource Utilization: CPU, memory, and network bandwidth consumption of the gateway itself.

These metrics are typically visualized in real-time dashboards, allowing operations teams to quickly identify anomalies, detect performance degradation, and respond proactively to potential issues before they impact end-users. Integration with external monitoring systems like Prometheus, Grafana, or cloud-native monitoring services allows for centralized observability across the entire infrastructure.
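To show how such metrics are derived, the sketch below summarizes a batch of request records into volume, error rates, and latency percentiles. The record shape, a `(status_code, latency_ms)` tuple, is an assumption, and the percentile uses the simple nearest-rank method; monitoring systems often use histograms or other estimators instead.

```python
import math


def percentile(values, pct: float) -> float:
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(records) -> dict:
    """Summarize (status_code, latency_ms) tuples into headline metrics."""
    total = len(records)
    client_errors = sum(1 for status, _ in records if 400 <= status < 500)
    server_errors = sum(1 for status, _ in records if status >= 500)
    latencies = [latency for _, latency in records]
    return {
        "request_volume": total,
        "client_error_rate": client_errors / total,
        "server_error_rate": server_errors / total,
        "latency_p50_ms": percentile(latencies, 50),
        "latency_p95_ms": percentile(latencies, 95),
    }
```

Tracking 4xx and 5xx rates separately is useful because they point to different owners: the former usually indicates client mistakes, the latter backend or gateway faults.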

Logging is another critical function, providing a granular record of every API call that passes through the gateway. These logs typically capture a wealth of information, including:

* Timestamp of the request and response.
* Client IP address and user agent.
* Requested URL path and HTTP method.
* HTTP status code of the response.
* Request and response headers.
* Latency details.
* Authentication and authorization outcomes.

Detailed API call logs are indispensable for auditing, forensic analysis, security investigations, and most importantly, for troubleshooting. When a client reports an issue, these logs provide the necessary breadcrumbs to trace the request's journey, identify the point of failure (whether at the gateway or a backend service), and rapidly diagnose the root cause. This level of detail is crucial for maintaining system stability and ensuring data security.
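A structured log entry carrying the fields listed above might be built as follows. The field names are illustrative, and the redaction of credential-bearing headers is an added assumption rather than something every gateway does by default, though logging raw credentials is generally best avoided.

```python
import json

# Headers whose values should not be written to logs verbatim (assumed list).
SENSITIVE_HEADERS = {"authorization", "cookie", "x-api-key"}


def build_log_entry(timestamp: str, client_ip: str, method: str, path: str,
                    status: int, latency_ms: float, headers: dict,
                    auth_outcome: str) -> str:
    """Return one JSON log line describing a single API call."""
    safe_headers = {
        name: "[REDACTED]" if name.lower() in SENSITIVE_HEADERS else value
        for name, value in headers.items()
    }
    entry = {
        "timestamp": timestamp,
        "client_ip": client_ip,
        "method": method,
        "path": path,
        "status": status,
        "latency_ms": latency_ms,
        "request_headers": safe_headers,
        "auth_outcome": auth_outcome,
    }
    return json.dumps(entry, sort_keys=True)
```

Emitting one JSON object per line keeps the logs easy to ship to a search or analytics backend and to filter by field during an investigation.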

For platforms like APIPark, these logging capabilities are a cornerstone feature. APIPark offers "Detailed API Call Logging," recording every nuance of each API invocation. This means businesses can quickly trace and troubleshoot issues, ensuring system stability and safeguarding data. This feature provides a robust audit trail, critical for compliance and security forensics.

Analytics extends beyond raw monitoring and logging to derive actionable intelligence from the aggregated data. By analyzing historical call data, the API Gateway can reveal long-term trends and performance changes, which are invaluable for:

* Capacity Planning: Understanding peak usage patterns helps in provisioning adequate resources for backend services and the gateway itself.
* Performance Optimization: Identifying slow APIs or bottlenecks, enabling targeted optimization efforts.
* Usage Pattern Analysis: Discovering which APIs are most popular, who the primary consumers are, and how they are being used. This data can inform API design decisions, marketing strategies, and product development.
* Business Intelligence: For commercial APIs, analytics can track revenue generation, subscription tier usage, and customer engagement.

Again, products like APIPark exemplify the power of integrated analytics. With its "Powerful Data Analysis" features, APIPark analyzes historical call data to display long-term trends and performance changes. This predictive insight helps businesses perform preventive maintenance and address potential issues before they escalate, ensuring continuous service availability and optimal performance. By centralizing monitoring, logging, and analytics, the API Gateway provides an unparalleled level of transparency and control over the entire API landscape, transforming raw data into strategic insights that drive better decision-making and operational efficiency.

F. Security and Threat Protection

The API Gateway acts as the primary defense line for your backend services, offering robust security features that go beyond simple authentication and authorization. It serves as a crucial component in protecting your APIs from a myriad of cyber threats and vulnerabilities. By centralizing security at the gateway layer, organizations can ensure consistent application of protection policies, reducing the likelihood of exploits that often target individual, inconsistently secured services.

A well-configured API Gateway helps mitigate many of the risks catalogued by OWASP in its widely recognized Top 10 lists for web application and API security. Specifically, it can help protect against:

* Injection Flaws: While backend services are primarily responsible for preventing SQL injection or command injection by properly sanitizing inputs, the API Gateway can perform preliminary validation and sanitization of request payloads. It can detect and block requests containing known malicious patterns or excessive length that often indicate injection attempts.
* Cross-Site Scripting (XSS): Similar to injection flaws, the gateway can strip potentially malicious scripts from input fields or ensure that responses only contain safe content types, preventing clients from inadvertently executing harmful code.
* Broken Authentication and Session Management: As discussed earlier, the gateway is the central point for authentication, securely handling API keys, JWTs, and OAuth tokens, so that requests with missing, weak, or compromised credentials are rejected before they reach backend services.
* Security Misconfiguration: By enforcing security policies from a central point, the gateway reduces the surface area for misconfigurations that can expose vulnerabilities in individual backend services.
* Denial of Service (DoS) and Distributed DoS (DDoS) Attacks: Beyond rate limiting, the API Gateway can implement more sophisticated DoS/DDoS protection mechanisms. This includes identifying and blocking traffic from suspicious IP addresses, enforcing connection limits, and prioritizing legitimate traffic.

Specific threat protection features commonly found in API Gateways include:

* IP Whitelisting/Blacklisting: Allowing access only from a predefined list of trusted IP addresses (whitelisting) or explicitly blocking requests from known malicious IP addresses (blacklisting).
* Bot Detection and Mitigation: Identifying and blocking automated bot traffic, which can range from harmless web crawlers to malicious scrapers and credential stuffing bots. This often involves analyzing user agent strings, request patterns, and behavioral analytics.
* Threat Intelligence Integration: Some gateways can integrate with external threat intelligence feeds to automatically identify and block traffic from known malicious sources or IP ranges associated with recent attacks.
* Schema Validation: Enforcing strict schema validation for request and response payloads. Any request body that does not conform to the expected JSON or XML schema can be rejected outright, preventing malformed data from reaching backend services and potentially exploiting parsing vulnerabilities.
* Content Filtering: Inspecting the content of requests and responses for sensitive data (e.g., credit card numbers, PII) or malicious patterns, and either redacting it or blocking the transmission.
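Two of these features, IP allow/deny lists and schema validation, are sketched below using only the standard library. The network ranges and the order schema are example values, and the type-based validator is a deliberately tiny stand-in for a full JSON Schema implementation.

```python
import ipaddress

# Example ranges only; a real gateway would load these from configuration.
ALLOWED_NETWORKS = [ipaddress.ip_network("10.0.0.0/8")]
BLOCKED_NETWORKS = [ipaddress.ip_network("10.66.0.0/16")]


def ip_permitted(client_ip: str) -> bool:
    """Deny-list entries win; otherwise the address must be on the allow-list."""
    address = ipaddress.ip_address(client_ip)
    if any(address in network for network in BLOCKED_NETWORKS):
        return False
    return any(address in network for network in ALLOWED_NETWORKS)


def validate_payload(payload: dict, schema: dict) -> list:
    """Check a payload against {field: expected_type}; return a list of problems."""
    errors = [f"unexpected field: {name}" for name in payload if name not in schema]
    for name, expected_type in schema.items():
        if name not in payload:
            errors.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            errors.append(f"wrong type for field: {name}")
    return errors


ORDER_SCHEMA = {"sku": str, "tags": list}
```

A request is forwarded only when `ip_permitted` returns `True` and `validate_payload` returns an empty list; otherwise the gateway can reject it with a 403 or 400 before any backend sees it.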

In essence, an API Gateway often functions as a specialized Web Application Firewall (WAF) for API traffic. It provides a crucial layer of defense, shielding backend services from direct exposure to the public internet and acting as a filter for all incoming requests. This centralized, proactive security posture not only enhances the overall security of your API ecosystem but also simplifies compliance with various regulatory standards by providing a consistent enforcement point and detailed audit trails. By offloading these complex security responsibilities from individual service developers, the gateway enables them to focus on core business logic, confident that a robust security perimeter is in place.

G. Developer Experience and Portal

While the previous sections focused on the operational and security benefits of an API Gateway for the organization, another crucial aspect is its profound impact on the developer experience. A well-implemented API Gateway, especially when coupled with an API developer portal, significantly enhances the usability, discoverability, and adoption of your APIs, both internally and externally.

A key function here is the provision of a centralized, user-friendly platform where developers can explore, learn about, and interact with available APIs. This typically involves:

* API Documentation Hosting: The gateway or its associated portal serves as the single source of truth for API documentation. This usually includes interactive documentation generated from OpenAPI/Swagger specifications, allowing developers to understand endpoints, request/response structures, and authentication requirements. Clear and comprehensive documentation is fundamental for reducing the learning curve and accelerating integration time for developers.
* Sandbox Environments: To facilitate frictionless development, an API Gateway can route requests to dedicated sandbox or testing environments. These isolated environments allow developers to experiment with APIs, test their integrations, and debug issues without affecting production systems or incurring real-world costs. This separation is crucial for fostering rapid iteration and confident deployment.
* Self-Service Registration: A developer portal, often integrated with the API Gateway, empowers developers to register their applications, obtain API keys or OAuth client credentials, and subscribe to desired APIs without manual intervention from an administrator. This self-service model streamlines the onboarding process and makes APIs more accessible, encouraging wider adoption.
* Subscription Management: For organizations offering different tiers of API access (e.g., free, basic, premium), the portal allows developers to manage their subscriptions, monitor their usage against rate limits, and upgrade or downgrade their plans. This transparency and control enhance the developer relationship.

Furthermore, platforms like APIPark extend these capabilities by prioritizing team collaboration and controlled access. APIPark is an all-in-one AI gateway and API developer portal that facilitates "API Service Sharing within Teams." This means it offers a centralized display of all API services, making it easy for different departments and teams within an organization to discover and use the API services they need. This reduces duplication of effort, promotes reuse, and fosters a more collaborative development environment.

Moreover, in scenarios where controlled access is paramount, APIPark supports "API Resource Access Requires Approval." This feature allows for the activation of subscription approval, ensuring that callers must explicitly subscribe to an API and await administrator approval before they can invoke it. This prevents unauthorized API calls, enhances security, and provides an additional layer of governance, which is particularly crucial for sensitive or mission-critical APIs where data breaches could have severe consequences.

By providing comprehensive documentation, easy-to-use testing environments, and streamlined access mechanisms, the API Gateway and its associated developer portal transform the experience for API consumers. It shifts the paradigm from a tedious, request-driven integration process to a self-service, intuitive journey, ultimately accelerating innovation and maximizing the value derived from your API assets.

API Gateway in Modern Architectures

The rise of the API Gateway is inextricably linked to the evolution of modern software architectures, particularly the move towards distributed systems and microservices. Its capabilities are perfectly aligned with the demands and complexities introduced by these architectural patterns.

Microservices Architecture

The most prominent relationship is with microservices. In a microservices architecture, a large application is broken down into a suite of small, independently deployable services, each running in its own process and communicating with others using lightweight mechanisms, typically HTTP APIs. While this offers immense benefits in terms of scalability, resilience, and organizational agility, it introduces several challenges that the API Gateway is uniquely positioned to solve:

* Client-Side Complexity: Without an API Gateway, clients would need to know the endpoints of numerous microservices, manage their individual authentication tokens, and often aggregate data from multiple services. The gateway aggregates requests, simplifies client interaction, and provides a single, consistent API surface.
* Cross-Cutting Concerns: Security, rate limiting, logging, and monitoring are concerns that affect all services. Implementing these repeatedly in each microservice is inefficient, error-prone, and leads to inconsistencies. The API Gateway centralizes these cross-cutting concerns, offloading them from the microservices and ensuring uniform application of policies.
* Service Discovery and Routing: Microservices are dynamic; instances come and go. The API Gateway, integrated with a service registry, dynamically discovers service instances and routes requests appropriately, abstracting away the underlying infrastructure details from clients.
* Protocol Translation: Microservices might use different communication protocols (e.g., REST, gRPC, messaging queues). The API Gateway can bridge these differences, presenting a unified protocol to external clients.

Essentially, the API Gateway becomes the "edge" of the microservices ecosystem, shielding the internal complexity of the dozens or hundreds of services from external consumers. It enables a clean separation of concerns, allowing microservice developers to focus purely on business logic, while the gateway handles the intricacies of external communication, security, and management.

Event-Driven Architectures

While primarily associated with request-response patterns, the API Gateway also has a role in event-driven architectures. It can act as a bridge, transforming incoming synchronous API calls into asynchronous events that are then published to a message broker (e.g., Kafka, RabbitMQ). This allows clients to interact with event-driven systems through a familiar API interface, while the backend benefits from the decoupled, scalable nature of event processing. For example, a client could make a POST /order request to the gateway, which then publishes an "OrderPlaced" event, allowing multiple downstream services to react independently. The gateway can then either return an immediate acknowledgment to the client (for example, HTTP 202 Accepted) or wait and poll for the status of the asynchronous operation before responding.
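The POST /order example can be sketched as follows. This is an illustration under stated assumptions, not how any particular gateway implements it: an in-process `queue.Queue` stands in for the message broker, and the event fields and the `status_url` path are hypothetical.

```python
import json
import queue
import uuid
from typing import Any, Dict, Tuple

# Stand-in for a broker topic; a real gateway would publish to Kafka,
# RabbitMQ, or a similar system instead of an in-process queue.
order_events: "queue.Queue[str]" = queue.Queue()


def handle_post_order(body: Dict[str, Any]) -> Tuple[int, Dict[str, str]]:
    """Turn a synchronous POST /order call into an 'OrderPlaced' event."""
    order_id = str(uuid.uuid4())
    event = {"type": "OrderPlaced", "order_id": order_id, "payload": body}
    order_events.put(json.dumps(event))
    # 202 Accepted: the request was received, but processing is not complete.
    # The status URL is a hypothetical endpoint the client could poll later.
    return 202, {"order_id": order_id, "status_url": f"/order/{order_id}/status"}
```

Returning as soon as the event is published keeps the client's latency independent of how many downstream services consume the event.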

Serverless Functions

The rise of serverless computing (e.g., AWS Lambda, Azure Functions, Google Cloud Functions) also makes the API Gateway an indispensable component. Serverless functions are typically small, stateless pieces of code triggered by events, including HTTP requests. An API Gateway is commonly used as the front-end for these functions, providing:

* Unified Endpoint: A single public endpoint for multiple functions, abstracting individual function URLs.
* Request Mapping: Translating incoming HTTP requests into the specific event payloads expected by the serverless functions.
* Authentication and Authorization: Securing access to functions, which might not have built-in comprehensive security mechanisms.
* Rate Limiting: Protecting functions from excessive invocation, which can incur high costs.
* Response Transformation: Formatting the function's output into a standard HTTP response.
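Request mapping and response transformation can be pictured as a pair of small functions around the serverless handler. The sketch below uses a hypothetical event shape (the field names `httpMethod`, `queryParameters`, and so on are assumptions for illustration; each platform defines its own payload format).

```python
import json
from typing import Any, Dict, Optional


def to_function_event(method: str, path: str, query: Dict[str, str],
                      headers: Dict[str, str],
                      body: Optional[str]) -> Dict[str, Any]:
    """Map an incoming HTTP request to a hypothetical function event payload."""
    return {
        "httpMethod": method.upper(),
        "path": path,
        "queryParameters": dict(query),
        # Header names are case-insensitive, so normalize them once here.
        "headers": {name.lower(): value for name, value in headers.items()},
        "body": body,
    }


def to_http_response(result: Any) -> Dict[str, Any]:
    """Wrap a function's return value in a standard JSON HTTP response."""
    return {
        "status": 200,
        "headers": {"content-type": "application/json"},
        "body": json.dumps(result),
    }
```

With this split, the function itself never needs to parse raw HTTP, and its output format can change without affecting clients as long as the response mapping is updated.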

Hybrid and Multi-Cloud Environments

As organizations increasingly adopt hybrid cloud strategies (combining on-premises infrastructure with public cloud resources) and multi-cloud strategies (using multiple public cloud providers), managing APIs across these disparate environments becomes a significant challenge. An API Gateway offers a centralized control plane that can span these boundaries:

* Consistent Policy Enforcement: Ensures that security, rate limiting, and other policies are applied uniformly, regardless of where the backend service resides.
* Seamless Integration: Allows clients to interact with services deployed across different clouds or on-premises data centers through a single, logical API entry point, abstracting the underlying network topology.
* Traffic Management: Intelligently routes traffic to the most appropriate service instance, considering location, latency, and cost across hybrid and multi-cloud deployments.

In essence, the API Gateway has evolved from a useful tool to an architectural imperative in the context of modern distributed systems. It acts as the intelligent facade that simplifies client interactions, enhances security, optimizes performance, and provides a coherent management layer over the intricate landscape of microservices, serverless functions, and diverse deployment environments. Its role is to tame complexity, allowing organizations to leverage the full benefits of these advanced architectures without succumbing to their inherent challenges.

Choosing an API Gateway: Key Considerations

Selecting the right API Gateway is a critical decision that can profoundly impact the success of your API strategy. The market offers a diverse range of options, from open-source projects to enterprise-grade commercial products and fully managed cloud services. Making an informed choice requires a careful evaluation of several key factors:

Deployment Models

The first consideration is how the gateway will be deployed and managed:

* Self-Hosted/On-Premises: You deploy and manage the API Gateway software on your own infrastructure (servers, VMs, containers). This offers maximum control and customization but requires significant operational overhead for installation, configuration, scaling, and maintenance. Examples include open-source options like Kong Gateway, Apache APISIX, or commercial solutions deployed on-premises.
* Managed Service (Cloud Provider): The API Gateway is offered as a fully managed service by a cloud provider (e.g., AWS API Gateway, Azure API Management, Google Cloud Apigee). The cloud provider handles all infrastructure, scaling, and maintenance. This reduces operational burden but might come with vendor lock-in and potentially higher costs for high usage. It also offers less control over the underlying infrastructure.
* Hybrid: A combination where the API Gateway control plane is managed in the cloud, but the runtime gateways are deployed on-premises or in a different cloud environment. This offers a balance of control and ease of management, suitable for hybrid cloud strategies.

Scalability and Performance

The chosen API Gateway must be able to handle the expected traffic volume and maintain low latency, especially for high-throughput APIs.

* Transactions Per Second (TPS): Evaluate the gateway's benchmarked TPS under various load conditions. Can it handle your peak traffic demands?
* Latency: How much overhead does the gateway introduce to each API call? Minimizing latency is crucial for responsive applications.
* Cluster Deployment: For high availability and fault tolerance, the gateway should support cluster deployment across multiple nodes, data centers, or availability zones. This ensures that even if one instance fails, others can seamlessly take over.

APIPark, for instance, highlights its performance, stating it can achieve over 20,000 TPS with just an 8-core CPU and 8 GB of memory. It also explicitly supports cluster deployment, demonstrating its capability to handle large-scale traffic and provide resilience. This kind of performance metric is crucial for enterprises with demanding API landscapes.

Feature Set Alignment with Business Needs

Carefully map the gateway's capabilities to your specific requirements:

* Core Features: Does it support all the essential functions discussed (routing, auth, rate limiting, logging, etc.)?
* Advanced Features: Do you need advanced capabilities like content transformation, protocol translation, caching, or custom plugin development?
* Developer Portal: Is an integrated developer portal important for fostering API adoption and self-service?
* AI Integration: For modern applications, especially those leveraging AI models, an AI-aware gateway is beneficial. APIPark is a prime example here, as it's designed as an AI gateway that offers quick integration of 100+ AI models, unified API formats for AI invocation, and prompt encapsulation into REST APIs. If your organization is heavily invested in AI, this kind of specialized feature set can be a significant differentiator.

Extensibility and Customization

No two API strategies are identical. The ability to extend or customize the gateway's behavior is often vital:

* Plugin Architecture: Does it support custom plugins or policies that can be developed to meet unique business logic or integration requirements?
* Scripting Capabilities: Can you inject custom code (e.g., Lua, JavaScript) at various points in the request/response lifecycle?
* Integration Ecosystem: How well does it integrate with existing tools like identity providers, monitoring systems, service meshes, and CI/CD pipelines?

Community and Commercial Support

Consider the ecosystem and support options available:

* Open Source: Open-source gateways (like APIPark under Apache 2.0) offer transparency, community-driven development, and no licensing costs for the core product. However, internal expertise is needed for support, or you might need to purchase commercial support.
* Commercial Vendor: Commercial products come with professional support, SLAs, and often more advanced features. This provides peace of mind but at a recurring cost.

APIPark offers a balanced approach: it's an open-source AI gateway under the Apache 2.0 license, meeting basic needs for startups. However, it also provides a commercial version with advanced features and professional technical support tailored for leading enterprises, allowing organizations to scale their support as their needs evolve.

Cost

Evaluate the total cost of ownership (TCO) across different models:

* Licensing Fees: For commercial products.
* Infrastructure Costs: For self-hosted options (servers, network, storage).
* Operational Costs: Staffing for management, maintenance, and support.
* Traffic-based Pricing: Cloud-managed services often charge based on API calls, data transfer, or processed messages, which can be predictable for low usage but scale significantly with high traffic.

By systematically evaluating these factors against your organization's specific needs, existing infrastructure, budget, and future growth plans, you can select an API Gateway that not only meets your current requirements but also serves as a robust and scalable foundation for your evolving API ecosystem.

Best Practices for API Gateway Implementation

Implementing an API Gateway effectively goes beyond merely installing software; it involves strategic design, meticulous configuration, and continuous operational vigilance. Adhering to best practices ensures that your gateway becomes a robust asset rather than a new point of failure or complexity.

Design for Resilience and High Availability

Your API Gateway is a single point of entry, making it a potential single point of failure if not designed for resilience.

* Redundancy: Deploy multiple gateway instances across different availability zones or data centers. Use load balancers (external to the gateway) to distribute traffic across these instances.
* Clustering: Configure gateway instances in a cluster to share state, policies, and configuration, ensuring seamless failover if one node goes down.
* Statelessness (where possible): Design gateway policies to be as stateless as possible to simplify horizontal scaling and recovery. Where state is required (e.g., rate limiting counters), ensure it's distributed and highly available.
* Graceful Degradation: Implement circuit breakers and fallback mechanisms. If a backend service is unhealthy, the gateway should return a cached response, return a default error, or reroute to a degraded service, rather than propagating the backend failure to the client.
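The graceful degradation idea is easier to see in code. Below is a minimal circuit breaker sketch, assuming a simple consecutive-failure threshold and a fixed reset timeout; the class name, parameters, and defaults are illustrative choices, not taken from any specific gateway product.

```python
import time
from typing import Any, Callable, Optional


class CircuitBreaker:
    """Fail fast to a fallback after repeated backend failures."""

    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock  # injectable so the timeout can be tested
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, backend: Callable[[], Any],
             fallback: Callable[[], Any]) -> Any:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                # Open: skip the backend entirely and serve the fallback.
                return fallback()
            # Half-open: the timeout elapsed, so let one trial call through.
        try:
            result = backend()
        except Exception:
            self.failures += 1
            # Open (or re-open after a failed trial) and restart the timer.
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            return fallback()
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

The fallback could return a cached response or a default error body; the important property is that an unhealthy backend stops receiving traffic until the timeout gives it a chance to recover.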

Security First Approach

Given its position at the edge of your API ecosystem, the API Gateway must be secured with the highest priority.

* Least Privilege: Grant the gateway only the minimum necessary permissions to perform its functions.
* TLS Everywhere: Enforce TLS for all communication, both client-to-gateway and gateway-to-backend services, to encrypt data in transit. Implement strong cipher suites and renew certificates before they expire.
* Regular Audits and Penetration Testing: Periodically audit gateway configurations and access logs, and perform penetration tests to identify and remediate vulnerabilities.
* Strong Authentication and Authorization: Meticulously configure and test all authentication and authorization policies. Leverage strong methods like OAuth 2.0, JWT, and mTLS where appropriate.
* Input Validation: While backend services perform definitive validation, the gateway can do preliminary schema validation and content filtering to block obviously malicious requests early.

Comprehensive Monitoring and Alerting

Visibility is key to operational stability.

* Capture All Relevant Metrics: Monitor request volume, latency, error rates, resource utilization (CPU, memory, network I/O), and cache hit ratios for the gateway and its backend services.
* Centralized Logging: Aggregate gateway access logs and error logs into a centralized logging system (e.g., ELK stack, Splunk) for easy searching, analysis, and compliance. APIPark provides "Detailed API Call Logging" and "Powerful Data Analysis" precisely for this purpose, enabling businesses to quickly trace and troubleshoot issues and anticipate future problems.
* Proactive Alerting: Set up alerts for critical thresholds (e.g., high error rates, elevated latency, service instance failures) to ensure that operational teams are notified immediately of potential problems.
* Correlation IDs: Implement correlation IDs that are passed through the gateway and all downstream services to facilitate end-to-end tracing of requests for debugging and monitoring.
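As a rough illustration of correlation IDs, the sketch below attaches an ID at the gateway if the client did not send one and includes it in every log line. The header name `X-Correlation-ID` is a common convention rather than a standard, and the log format is an assumption made for this example.

```python
import uuid
from typing import Dict

# Common convention, not a formal standard; some systems use other names.
CORRELATION_HEADER = "X-Correlation-ID"


def ensure_correlation_id(headers: Dict[str, str]) -> Dict[str, str]:
    """Return a copy of `headers` that is guaranteed to carry a correlation ID."""
    out = dict(headers)
    # Header names are case-insensitive, so accept any casing from the client.
    for name, value in headers.items():
        if name.lower() == CORRELATION_HEADER.lower() and value:
            return out
    out[CORRELATION_HEADER] = str(uuid.uuid4())
    return out


def log_line(headers: Dict[str, str], message: str) -> str:
    """Format a log line tagged with the request's correlation ID ('-' if absent)."""
    cid = next(
        (value for name, value in headers.items()
         if name.lower() == CORRELATION_HEADER.lower()),
        "-",
    )
    return f"correlation_id={cid} {message}"
```

The gateway would forward the returned headers to the backend, and each downstream service would log with the same ID, so a single search in the central logging system reconstructs the whole request path.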

Version Control for Configurations

Treat API Gateway configurations as code.

* Configuration as Code: Manage all gateway policies, routes, authentication rules, and other configurations in a version control system (e.g., Git).
* Automated Deployment: Automate the deployment and update of gateway configurations through CI/CD pipelines. This ensures consistency, repeatability, and reduces manual errors.
* Rollback Capability: Design your deployment process to allow for quick and easy rollbacks to previous stable configurations in case of issues.
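One practical consequence of keeping configuration in version control is that a CI/CD pipeline can validate it before deployment. The following sketch checks a hypothetical route format (the `path`, `upstream`, and `rate_limit_per_minute` keys are invented for illustration; real gateways each define their own schema).

```python
from typing import Any, Dict, List


def validate_routes(routes: List[Dict[str, Any]]) -> List[str]:
    """Return human-readable errors; an empty list means the config passed."""
    errors: List[str] = []
    seen_paths = set()
    for i, route in enumerate(routes):
        path = route.get("path")
        if not isinstance(path, str) or not path.startswith("/"):
            errors.append(f"route {i}: 'path' must be a string starting with '/'")
        elif path in seen_paths:
            errors.append(f"route {i}: duplicate path {path!r}")
        else:
            seen_paths.add(path)

        upstream = route.get("upstream")
        if not isinstance(upstream, str) or not upstream:
            errors.append(f"route {i}: 'upstream' must be a non-empty string")

        # The rate limit is optional, but must be a positive integer if set.
        limit = route.get("rate_limit_per_minute")
        if limit is not None and (not isinstance(limit, int) or limit <= 0):
            errors.append(f"route {i}: 'rate_limit_per_minute' must be a positive integer")
    return errors
```

A pipeline step could load the route file from the repository, run a check like this, and refuse to deploy when the returned list is non-empty, which keeps the last known-good configuration in place.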

Clear Documentation for Developers

A powerful API Gateway is only as good as its discoverability and usability.

* Developer Portal: Provide a comprehensive developer portal (like APIPark) that includes interactive API documentation, clear onboarding instructions, SDKs, code samples, and a self-service registration process.
* Consistent Naming and Standards: Enforce consistent API design principles, naming conventions, and data formats across all APIs exposed through the gateway.
* Change Management: Clearly communicate API deprecation policies and provide ample notice and migration guides for API version changes.

Start Small, Iterate, and Measure

Don't attempt to implement every API Gateway feature simultaneously.

* Phased Rollout: Begin with a few critical APIs and gradually onboard more services and features as you gain experience and confidence.
* Measure Impact: Continuously monitor the impact of gateway implementation on performance, security, and developer experience. Use this feedback to iterate and optimize your gateway strategy.

By meticulously following these best practices, organizations can transform their API Gateway from a simple traffic handler into a sophisticated, resilient, and developer-friendly control plane that underpins their entire digital strategy, driving innovation while maintaining operational stability and robust security.

Case Studies / Real-world Applications

The theoretical benefits of an API Gateway truly come to life when observed in real-world applications across various industries. Its adoption is widespread because it addresses tangible business and technical challenges, enabling companies to scale, secure, and monetize their digital offerings more effectively.

1. E-commerce Platforms Handling Peak Loads: Consider a major online retailer during a Black Friday sale. The sheer volume of traffic (millions of concurrent users browsing products, adding items to carts, and checking out) can overwhelm backend systems. An API Gateway is critical here. It centralizes rate limiting, preventing individual bots or abusive clients from flooding the product catalog or checkout services with requests. It also intelligently routes traffic, perhaps sending static content requests to caching services and dynamically load balancing authentication and payment requests across hundreds of microservice instances. Caching frequently accessed product details at the gateway significantly reduces the load on database and inventory services, ensuring a smooth shopping experience even under extreme pressure. Furthermore, the gateway might implement circuit breakers for payment APIs; if a specific payment processor is experiencing issues, the gateway can temporarily route requests to an alternative or gracefully inform the user of a temporary delay, preventing cascading failures.

2. Financial Services Securing Transactions: In the highly regulated financial industry, security and compliance are paramount. A digital banking application processes sensitive customer data and financial transactions through numerous APIs. An API Gateway acts as a hardened enforcement layer in front of these APIs. It enforces multi-factor authentication for sensitive operations, validates every incoming request against strict schemas to help prevent injection attacks, and applies granular authorization policies based on the user's role and account permissions. Every single API call is logged with meticulous detail for audit trails, a feature akin to APIPark's "Detailed API Call Logging," which is essential for regulatory compliance and forensic investigations. Furthermore, the gateway can mask or encrypt sensitive data in transit, ensuring that PII (Personally Identifiable Information) and financial details are protected even if intercepted. This centralized security management offloads compliance burdens from individual banking microservices.

3. IoT Platforms Managing Device Connections: An Internet of Things (IoT) platform might connect millions of diverse devices, from smart home sensors to industrial machinery, all generating continuous streams of data. Managing these connections and the resulting data ingest is a monumental task. The API Gateway scales to handle millions of concurrent connections from diverse devices, routing device telemetry data to appropriate data ingestion services (e.g., Kafka queues or data lakes). It authenticates each device using device-specific certificates or tokens and applies rate limits to prevent any single malfunctioning device from flooding the system. The gateway can also perform protocol translation, allowing devices to communicate using lightweight protocols like MQTT or CoAP, while internal services consume data via HTTP/REST, simplifying the integration for various device types.

4. Healthcare Interoperability Solutions: Healthcare providers often need to integrate disparate systems – electronic health records (EHR), lab results, pharmacy systems – to achieve a holistic patient view. An API Gateway can standardize the interaction with these legacy and modern systems. It exposes a unified, secure API layer, often conforming to standards like FHIR (Fast Healthcare Interoperability Resources), even if the backend systems use older or proprietary protocols. The gateway enforces strict access controls to comply with regulations like HIPAA, ensuring that only authorized applications and users can access patient data. It can also perform data transformations to normalize data formats from different sources into a consistent view for consuming applications, greatly enhancing data interoperability and reducing the complexity of integration for new healthcare applications.
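The per-client rate limiting that recurs in these scenarios (abusive bots in the e-commerce case, malfunctioning devices in the IoT case) is often implemented with a token bucket. The sketch below is one possible in-memory version; the class names and parameters are illustrative, and a clustered gateway would need to keep the counters in a shared store instead.

```python
import time
from typing import Callable, Dict


class TokenBucket:
    """Allow bursts up to `capacity`, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity  # start full so initial bursts are allowed
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity,
                          self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class RateLimiter:
    """Keep one bucket per client so one noisy client cannot starve others."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.buckets: Dict[str, TokenBucket] = {}

    def allow(self, client_id: str) -> bool:
        bucket = self.buckets.get(client_id)
        if bucket is None:
            bucket = TokenBucket(self.rate, self.capacity, self.clock)
            self.buckets[client_id] = bucket
        return bucket.allow()
```

A gateway would typically respond with HTTP 429 Too Many Requests when `allow` returns False, keyed on an API key, device ID, or client IP.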

In each of these scenarios, the API Gateway proves to be far more than just a proxy. It is an intelligent orchestrator that directly contributes to the organization's ability to innovate, scale securely, meet regulatory requirements, and deliver a superior experience to both end-users and developers. Its versatility makes it an indispensable component in almost any complex, API-driven digital strategy.

Conclusion

The journey through the core concepts of an API Gateway underscores its undeniable prominence as a cornerstone of modern software architecture. In an era where digital transformation is inextricably linked to API-first strategies, the API Gateway transcends its initial definition as a simple traffic director, evolving into an indispensable, multi-faceted control plane for the entire API ecosystem.

We have explored how the API Gateway acts as the vigilant sentinel, centralizing critical functions that are otherwise fragmented and complex. From intelligently routing requests and load balancing traffic across dynamic microservices, to rigorously enforcing authentication and authorization policies that safeguard sensitive data, its role is pivotal. The gateway protects backend services from overload through sophisticated rate limiting and throttling, while simultaneously enhancing performance through caching and ensuring resilience with circuit breakers. Furthermore, its robust monitoring, detailed logging, and powerful analytics capabilities, as exemplified by platforms like APIPark, provide unprecedented visibility and actionable insights into API usage and health. Crucially, the API Gateway also acts as a powerful enabler of developer experience, offering streamlined access, comprehensive documentation, and a self-service portal that fosters rapid adoption and innovation.

The strategic deployment of an API Gateway is not merely a technical choice; it is a strategic imperative that empowers organizations to unlock the full potential of their APIs. It simplifies the complexity of distributed systems, strengthens security postures, enhances operational efficiency, and accelerates the pace of innovation. As APIs continue to proliferate and become even more embedded in every facet of business, mastering the concepts and best practices associated with the API Gateway will remain essential for building scalable, secure, and future-proof digital products and services. Embrace the API Gateway not just as a tool, but as the intelligent orchestrator that will drive your organization's success in the interconnected digital future.

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between an API Gateway and a traditional Load Balancer or Reverse Proxy?

While a load balancer distributes network traffic and a reverse proxy forwards requests, an API Gateway operates at a higher, application-specific level. It understands the semantics of API calls, allowing it to apply intelligent routing based on API paths, headers, and content, perform request/response transformations, enforce granular security policies (authentication, authorization), and implement API-specific rate limiting and caching. It's an intelligent layer 7 proxy specifically designed for API management, whereas traditional load balancers and reverse proxies are more generic network traffic handlers.

2. Is an API Gateway necessary for all types of applications, especially smaller ones?

For very small, monolithic applications with only a few APIs and limited security or performance requirements, an API Gateway might introduce unnecessary overhead. However, as applications grow in complexity, adopt microservices, need to integrate with multiple client types (web, mobile, IoT), or require robust security, monitoring, and scaling capabilities, an API Gateway quickly becomes indispensable. It centralizes cross-cutting concerns, simplifies client interactions, and dramatically improves the manageability and resilience of the API ecosystem.

3. How does an API Gateway improve API security?

An API Gateway significantly enhances security by acting as the primary enforcement point for authentication and authorization. It can validate API keys, JWTs, and OAuth tokens, and apply fine-grained access policies before requests reach backend services. It also helps mitigate common threats such as denial-of-service attacks (via rate limiting) and SQL injection (via input validation), and it provides a central point for logging all API access for audit and forensics. Essentially, it acts as a specialized firewall and security broker for your APIs.

4. Can an API Gateway help with API versioning?

Yes, API Gateways are excellent for managing API versions. They can route incoming requests to specific backend service versions based on various criteria, such as version numbers embedded in the URL path (e.g., /v1/users vs. /v2/users), custom HTTP headers, or query parameters. This allows multiple API versions to coexist simultaneously, enabling developers to introduce new features without breaking existing client applications and facilitating a smooth transition process.
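A minimal sketch of version-based routing, covering the three criteria mentioned above, might look like this. The backend names, the `X-API-Version` header, the `version` query parameter, and the precedence order (path, then header, then query, then default) are all assumptions made for illustration.

```python
import re
from typing import Dict, Optional

# Hypothetical mapping of API version -> backend service.
VERSION_BACKENDS: Dict[str, str] = {
    "v1": "users-service-v1",
    "v2": "users-service-v2",
}
DEFAULT_VERSION = "v1"
VERSION_HEADER = "x-api-version"  # hypothetical header name, lowercased

# Matches a leading version segment such as /v1 or /v2/users.
_PATH_VERSION = re.compile(r"^/(v\d+)(?:/|$)")


def select_backend(path: str, headers: Dict[str, str],
                   query: Dict[str, str]) -> Optional[str]:
    """Pick a backend by URL path version, then header, then query parameter."""
    match = _PATH_VERSION.match(path)
    if match:
        version = match.group(1)
    else:
        lowered = {name.lower(): value for name, value in headers.items()}
        version = (lowered.get(VERSION_HEADER)
                   or query.get("version")
                   or DEFAULT_VERSION)
    # Unknown versions yield None so the gateway can answer with an error.
    return VERSION_BACKENDS.get(version)
```

Because the mapping lives in the gateway, retiring an old version becomes a configuration change rather than a change to every client.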

5. What are the main considerations when choosing between an open-source and a commercial API Gateway solution?

Choosing between open-source and commercial API Gateways depends on your specific needs, budget, and internal capabilities. Open-source solutions (like APIPark) offer flexibility, transparency, no direct licensing costs for the core product, and a strong community, but require internal expertise for deployment, maintenance, and support. Commercial solutions typically provide enterprise-grade features, professional technical support, SLAs, and often more user-friendly interfaces, but come with licensing costs and potential vendor lock-in. A hybrid approach, such as using an open-source core with optional commercial support/features, can offer a good balance for many organizations.
