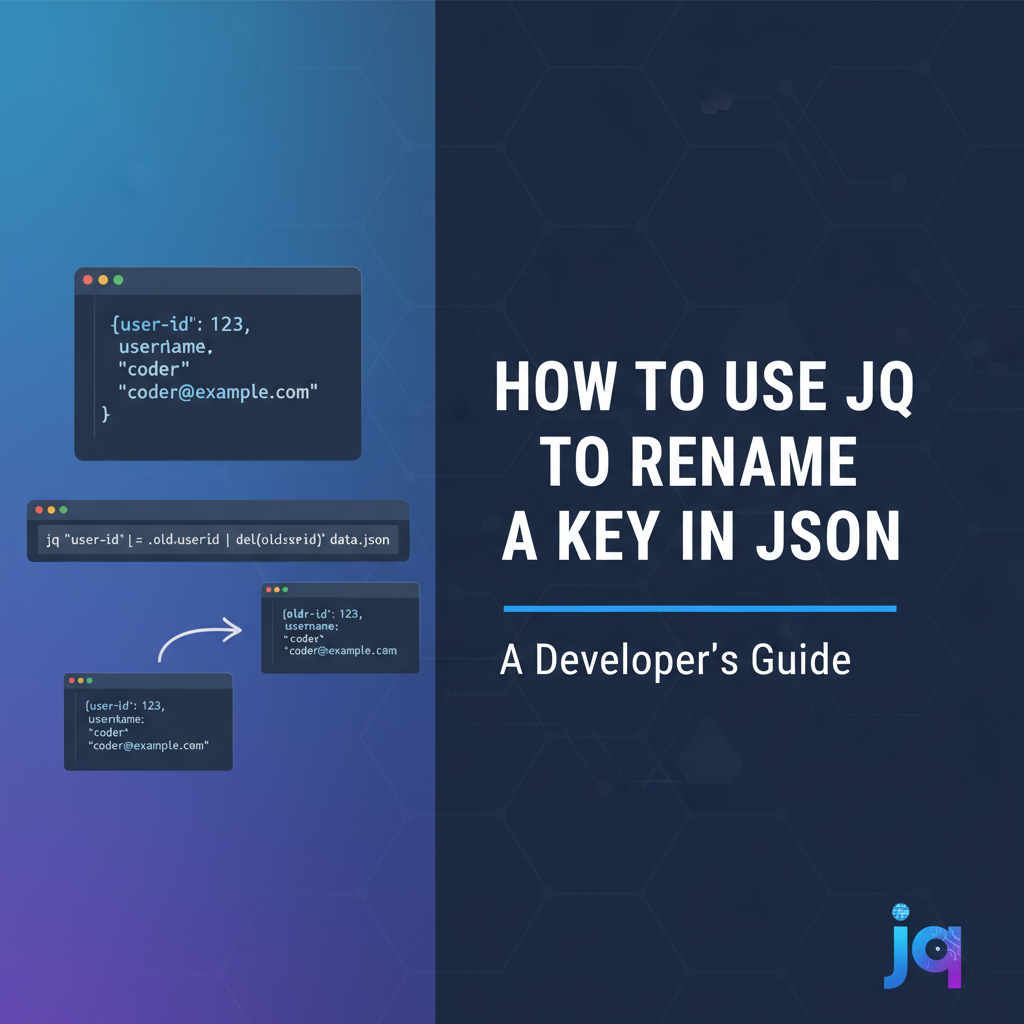

How to Use jq to Rename a Key in JSON

In the intricate world of modern software development, where data flows ceaselessly between systems, the ability to manipulate and transform data efficiently is paramount. JSON (JavaScript Object Notation) has emerged as the lingua franca for data interchange, thanks to its human-readable and machine-parseable structure. From configuring applications to serving responses for web APIs, JSON is ubiquitous. However, the constant evolution of software systems, varying data schema requirements, and the need for seamless integration often necessitate modifications to this data. One such common modification is renaming keys within JSON objects. This seemingly simple task can become a complex challenge, especially when dealing with deeply nested structures, arrays of objects, or conditional renaming requirements.

Enter jq, the command-line JSON processor. jq is often described as sed for JSON data, but its capabilities extend far beyond simple text replacement. It's a lightweight and flexible tool designed for slicing, filtering, mapping, and transforming structured data with a concise and powerful syntax. Whether you're a developer debugging API responses, a system administrator processing log files, or a data engineer preparing data for analysis, jq offers an invaluable toolkit for interacting with JSON data directly from your terminal. Its declarative approach to data manipulation allows users to express complex transformations with surprising brevity, making it an indispensable utility in today's data-driven landscape.

This comprehensive guide will delve deep into the art and science of renaming keys in JSON using jq. We will explore various techniques, from the most straightforward single-key renaming to intricate conditional and recursive transformations. Beyond mere syntax, we will unpack the underlying jq concepts that empower these operations, providing you with a foundational understanding that extends to many other JSON manipulation tasks. Furthermore, we will contextualize these techniques within real-world API development and management scenarios, highlighting how such transformations are critical for schema evolution, API gateway functionality, and ensuring compliance with OpenAPI specifications. By the end of this journey, you will not only be proficient in renaming JSON keys with jq but also possess a deeper appreciation for the tool's elegance and power in streamlining your data processing workflows.

Understanding JSON and the Need for Transformation

Before we dive into the specifics of jq, it's crucial to solidify our understanding of JSON itself and the various scenarios that necessitate key renaming. JSON represents data as collections of key-value pairs (objects) and ordered lists of values (arrays). Keys are always strings, and values can be strings, numbers, booleans, null, objects, or arrays. This hierarchical structure is both its strength and, at times, its complexity.

The Anatomy of JSON

Consider a simple JSON object representing a user profile:

{

"user_id": "u12345",

"firstName": "John",

"lastName": "Doe",

"emailAddress": "john.doe@example.com",

"isActive": true,

"roles": ["admin", "editor"]

}

In this example, user_id, firstName, lastName, etc., are keys, and "u12345", "John", etc., are their corresponding values. The ability to access, modify, or rename these keys is fundamental to data processing.

Why Renaming Keys Becomes Necessary

The reasons for renaming keys in JSON are manifold and often stem from practical challenges in system integration, data standardization, and evolving business requirements:

- Schema Evolution and Versioning: As software systems mature, their data models often change. A key named user_id in an older version might become id in a newer API version to align with modern best practices or to reduce verbosity. Maintaining backward compatibility while introducing new schemas frequently requires data transformation, including key renaming, especially when supporting different API versions. For instance, an API gateway might translate requests or responses between API versions to ensure seamless communication for clients using older contracts.
- Integration with External Systems: When integrating data from various sources, you often encounter differing naming conventions. One system might use customerName while another expects client_name. To aggregate or exchange data effectively, harmonizing these key names is essential. This is particularly true in microservices architectures where different services might be developed independently but need to share a common data understanding.
- Data Standardization and Consistency: Within a single organization, different teams might adopt slightly different naming conventions for similar data points. Enforcing a standardized naming scheme across all APIs and databases improves maintainability, reduces confusion, and simplifies data analysis. Renaming keys is a crucial step in achieving this uniformity.
- OpenAPI Specification Alignment: Tools and frameworks that generate code from OpenAPI (formerly Swagger) specifications often have strict naming requirements or preferences. If your backend data model deviates from these, transforming the JSON data to match the OpenAPI contract is necessary to ensure correct code generation and API documentation. For instance, if an OpenAPI definition specifies productId but your database returns product_id, a key rename is needed.
- User Interface or Report Generation: Sometimes, keys need to be renamed to be more user-friendly or to match specific display requirements for a frontend application or a data report. For example, total_amount_due might be more presentable as Amount Due in a user interface.
- Data Cleansing and Preprocessing: Before data can be fed into analytical tools, machine learning models, or data warehouses, it often requires extensive cleansing and preprocessing. This can include standardizing key names, removing redundant keys, or correcting inconsistencies, which falls squarely within jq's capabilities.

Understanding these motivations clarifies why jq's ability to rename keys is not just a niche feature but a fundamental operation in the modern data landscape.

Introducing jq: The Command-Line JSON Processor

jq is an incredibly powerful, yet surprisingly small and fast, command-line JSON processor. It's written in C and has no runtime dependencies, making it highly portable. Its design philosophy centers around a functional programming paradigm, where data flows through a series of filters that transform it.

Why jq?

While one could theoretically use sed, awk, grep, or even write custom scripts in Python or Node.js to manipulate JSON, jq offers several distinct advantages:

- JSON-Awareness: Unlike generic text processing tools, jq understands the JSON structure. It can parse, validate, and navigate JSON documents without breaking their integrity. This prevents common errors that arise when treating JSON as plain text.
- Conciseness and Expressiveness: jq's filter language is designed to be highly expressive, allowing complex transformations to be specified in a compact syntax. This reduces the amount of code needed compared to scripting languages.
- Speed: Being a compiled C application, jq is remarkably fast, even when processing large JSON files or streams.
- Streaming Capability: jq reads its input as a sequence of JSON documents and processes them one at a time, so a long stream of records never has to be held in memory all at once. For a single very large document, the --stream option parses it incrementally instead of loading it whole.
- Functional Design: Its functional nature makes jq scripts predictable and easy to reason about. Each filter takes an input and produces an output without side effects.

Installation

jq is available on most platforms.

- macOS: brew install jq
- Linux (Debian/Ubuntu): sudo apt-get install jq
- Linux (CentOS/RHEL): sudo yum install jq
- Windows: jq can be installed via Chocolatey (choco install jq) or downloaded directly from the official jq website.

To verify your installation, run:

jq --version

You should see the jq version number printed to your console.

Basic jq Syntax

The general syntax for jq is:

jq 'filter' [input_file(s)]

If no input file is provided, jq reads from standard input.

Common jq Filters:

- . : The identity filter; prints the entire input JSON.
- .key_name : Accesses the value associated with key_name.
- .[] : Iterates over elements of an array or values of an object.
- .[index] : Accesses an element at a specific index in an array.
- | : The pipe operator; takes the output of the filter on the left and feeds it as input to the filter on the right.

Let's use our sample JSON for demonstration:

{

"user_id": "u12345",

"firstName": "John",

"lastName": "Doe",

"emailAddress": "john.doe@example.com",

"isActive": true,

"roles": ["admin", "editor"]

}

Save this to user.json.

- Print entire JSON: jq '.' user.json
  Output: The entire JSON object.
- Access a specific key: jq '.firstName' user.json
  Output: "John"
- Access a nested key (if it were nested): Suppose the JSON was {"user": {"firstName": "John"}}, saved as nested_user.json. Then: jq '.user.firstName' nested_user.json
  Output: "John"

This brief introduction sets the stage for more complex transformations, including the core topic of this article: renaming keys.

Fundamental Techniques for Renaming Keys

Renaming keys in jq often involves a combination of deleting the old key and adding a new key with the desired name and the original value. jq provides several expressive ways to achieve this, from direct assignments to more functional approaches that iterate over object entries.

Method 1: Direct Assignment and Deletion (Most Straightforward for Top-Level Keys)

For renaming a single, top-level key, the most intuitive method is to create a new key with the desired name and the value of the old key, then delete the old key. This approach is highly readable for simple cases.

Example: Renaming firstName to givenName

Given user.json:

{

"user_id": "u12345",

"firstName": "John",

"lastName": "Doe",

"emailAddress": "john.doe@example.com",

"isActive": true,

"roles": ["admin", "editor"]

}

The jq command:

jq '.givenName = .firstName | del(.firstName)' user.json

Explanation:

- .givenName = .firstName: This part creates a new key named givenName and assigns it the value currently held by the firstName key. At this point, the object contains both firstName and givenName.
- |: The pipe operator passes the modified object to the next filter.
- del(.firstName): This filter then deletes the original firstName key from the object.

Output:

{

"user_id": "u12345",

"lastName": "Doe",

"emailAddress": "john.doe@example.com",

"isActive": true,

"roles": [

"admin",

"editor"

],

"givenName": "John"

}

Notice that givenName now appears at the end of the object: jq keeps existing keys in their original order and appends newly assigned keys after them. JSON objects are unordered by specification, so for most API consumption and data processing the position of a key is irrelevant. If position does matter, the with_entries approach in Method 2 renames the key in place.

This method is highly effective for top-level keys or keys at a known, specific path.
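One caveat with the assign-then-delete pattern is worth a quick sketch: when the old key is absent, `.givenName = .firstName` still creates `givenName` with a `null` value. A small guard with `has` avoids that. The helper name `rename_key` below is made up for illustration and is not a jq builtin.

```bash
# rename_key is a hypothetical helper name, not part of jq itself.
filter='
  def rename_key($old; $new):
    if has($old) then .[$new] = .[$old] | del(.[$old]) else . end;
  rename_key("firstName"; "givenName")
'

# Key present: it is renamed.
with_key=$(printf '%s\n' '{"firstName":"John","lastName":"Doe"}' | jq -c "$filter")

# Key absent: the object passes through untouched (no "givenName": null).
without_key=$(printf '%s\n' '{"lastName":"Doe"}' | jq -c "$filter")

echo "$with_key"
echo "$without_key"
```

Wrapping the logic in a `def` keeps the call site short when several keys need the same treatment.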

Method 2: Using with_entries for Functional Renaming

For more generic or dynamic renaming, especially when iterating over objects or applying transformations based on key names, with_entries is a powerful construct. with_entries transforms an object into an array of key-value objects (e.g., {"key": "firstName", "value": "John"}), allows you to manipulate this array, and then transforms it back into an object.

Example: Renaming firstName to givenName and lastName to familyName

This approach is particularly elegant for multiple renames or conditional renames.

Given user.json:

{

"user_id": "u12345",

"firstName": "John",

"lastName": "Doe",

"emailAddress": "john.doe@example.com",

"isActive": true,

"roles": ["admin", "editor"]

}

The jq command:

    jq '
      with_entries(
        if .key == "firstName" then .key = "givenName"
        elif .key == "lastName" then .key = "familyName"
        else .
        end
      )
    ' user.json

Explanation:

- with_entries(...): This filter takes an object and converts it into an array of {key: K, value: V} objects. It then applies the enclosed filter to each of these elements and converts the resulting array back into an object.
- Inside with_entries, . refers to each {"key": K, "value": V} object.
- if .key == "firstName" then .key = "givenName": If the key field of the current entry object is "firstName", it renames it to "givenName".
- elif .key == "lastName" then .key = "familyName": Similarly, if it's "lastName", it renames it to "familyName".
- else .: For any other key, the entry remains unchanged (. is the identity filter).

Output:

{

"user_id": "u12345",

"givenName": "John",

"familyName": "Doe",

"emailAddress": "john.doe@example.com",

"isActive": true,

"roles": [

"admin",

"editor"

]

}

This method is highly flexible because it allows you to define a set of rules for key transformation, making it ideal for batch renaming or when the mapping from old to new names is dynamic. It's especially useful when you need to rename keys based on patterns or conditions that are difficult to express with simple direct assignments.
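To make the mechanics concrete, the sketch below spells out what with_entries does behind the scenes: `with_entries(f)` is shorthand for `to_entries | map(f) | from_entries`. The input is a trimmed-down version of the user object, and the shell variable names are only for illustration.

```bash
input='{"firstName":"John","lastName":"Doe"}'

# Step 1: the intermediate list of {key, value} entries.
entries=$(printf '%s\n' "$input" | jq -c 'to_entries')

# Step 2: the long form, transforming each entry and rebuilding the object.
long_form=$(printf '%s\n' "$input" | jq -c '
  to_entries
  | map(if .key == "firstName" then .key = "givenName" else . end)
  | from_entries
')

# Step 3: the with_entries shorthand gives the same object.
short_form=$(printf '%s\n' "$input" | jq -c '
  with_entries(if .key == "firstName" then .key = "givenName" else . end)
')

echo "$entries"
echo "$long_form"
echo "$short_form"
```

Because the entries are rewritten in place, the renamed key keeps its original position in the object.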

Method 3: Using a Mapping Object with reduce or with_entries

For situations where you have a predefined mapping of old keys to new keys, you can integrate this mapping directly into your jq script. This makes the renaming logic very clear and maintainable, especially when the number of keys to rename grows.

Let's define a mapping for our renaming: {"firstName": "givenName", "lastName": "familyName"}.

Using reduce (more verbose, but powerful for complex state management): reduce can fold the mapping over the object one pair at a time, which is worth knowing for arbitrary transformations. For simple key remapping, however, with_entries is generally more idiomatic, so the main example below sticks with it.
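For completeness, here is a minimal sketch of what the reduce variant might look like. It folds over the (old, new) pairs of the mapping and applies the assign-then-delete rename for each pair that is present. The variable name `$pair` and the trimmed input are assumptions for illustration.

```bash
input='{"user_id":"u12345","firstName":"John","lastName":"Doe"}'

reduced=$(printf '%s\n' "$input" | jq -c '
  {"firstName": "givenName", "lastName": "familyName"} as $mapping
  # Fold over the mapping pairs, starting from the input object.
  | reduce ($mapping | to_entries[]) as $pair (.;
      if has($pair.key)
      then .[$pair.value] = .[$pair.key] | del(.[$pair.key])
      else .
      end
    )
')

echo "$reduced"
```

Unlike with_entries, this variant moves renamed keys to the end of the object, since each one is added and then its predecessor deleted.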

Revised Example with with_entries and a mapping:

Let's assume our mapping is hardcoded into the jq script for simplicity, or could be passed as an argument using --argjson.

    jq '
      . as $input |
      {
        "firstName": "givenName",
        "lastName": "familyName",
        "emailAddress": "email"
      } as $mapping |
      $input |
      with_entries(
        if $mapping[.key] then
          .key = $mapping[.key]
        else
          .
        end
      )
    ' user.json

Explanation:

- . as $input: Stores the original input object in a variable $input.
- {...} as $mapping: Defines an object $mapping where keys are old names and values are new names.
- $input | with_entries(...): Applies with_entries to the input object.
- if $mapping[.key] then .key = $mapping[.key] else . end: Inside with_entries, it checks if the current key (.key) exists as a key in our $mapping object.
  - If it does ($mapping[.key] evaluates to a non-null, non-false value), it renames the current entry's key (.key) to the corresponding value from $mapping.
  - Otherwise, the entry remains unchanged.

Output:

{

"user_id": "u12345",

"givenName": "John",

"familyName": "Doe",

"email": "john.doe@example.com",

"isActive": true,

"roles": [

"admin",

"editor"

]

}

This mapping-based approach is highly versatile. You could even load the $mapping object from a separate JSON file, making your jq script very dynamic and configurable.
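As a rough sketch of the external-file idea, the mapping can live in its own JSON file and be loaded with jq's `--slurpfile` option. The file name `mapping.json` and the temporary directory are assumptions made for this example.

```bash
# Hypothetical setup: write the mapping to a scratch file.
workdir=$(mktemp -d)
cat > "$workdir/mapping.json" <<'JSON'
{"firstName": "givenName", "lastName": "familyName", "emailAddress": "email"}
JSON

input='{"user_id":"u12345","firstName":"John","emailAddress":"john.doe@example.com"}'

# --slurpfile binds $mapping to an array of the documents in the file,
# so the mapping object itself is $mapping[0].
mapped=$(printf '%s\n' "$input" | jq -c --slurpfile mapping "$workdir/mapping.json" '
  $mapping[0] as $m
  | with_entries(.key |= ($m[.] // .))
')

echo "$mapped"
rm -r "$workdir"
```

The expression `$m[.] // .` looks the key up in the mapping and falls back to the original name when there is no entry, which replaces the explicit if/else.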

These fundamental techniques form the bedrock of renaming keys in jq. The choice of method depends on the complexity of your renaming task: direct assignment for simple cases, and with_entries for more generalized or conditional transformations.

Renaming Nested Keys

The real power of jq comes into play when dealing with nested JSON structures. Renaming keys within nested objects or arrays of objects requires careful navigation and often a recursive approach.

Renaming a Key at a Specific Nested Path

When the key you want to rename is not at the top level but deeply embedded within the JSON structure, you need to first navigate to its parent object.

Example: Renaming city to locationCity within an address object

Consider the following JSON:

{

"orderId": "O12345",

"customer": {

"customerId": "C9876",

"billingAddress": {

"street": "123 Main St",

"city": "Anytown",

"zipCode": "12345"

},

"shippingAddress": {

"street": "456 Oak Ave",

"city": "Otherville",

"zipCode": "67890"

}

},

"items": []

}

Save this as order.json. We want to rename customer.billingAddress.city to customer.billingAddress.locationCity.

Using the direct assignment and deletion method, but targeting the specific path:

    jq '
      .customer.billingAddress.locationCity = .customer.billingAddress.city |
      del(.customer.billingAddress.city)
    ' order.json

Explanation:

- .customer.billingAddress.locationCity = .customer.billingAddress.city: We access the billingAddress object within customer, create a new locationCity key, and assign it the value of the existing city key within the same billingAddress object.
- del(.customer.billingAddress.city): We then delete the original city key from the billingAddress object.

Output (partial for brevity, focusing on relevant part):

{

"orderId": "O12345",

"customer": {

"customerId": "C9876",

"billingAddress": {

"street": "123 Main St",

"zipCode": "12345",

"locationCity": "Anytown"

},

"shippingAddress": {

"street": "456 Oak Ave",

"city": "Otherville",

"zipCode": "67890"

}

},

"items": []

}

Notice that only the city in billingAddress was renamed. The city in shippingAddress remains unchanged, demonstrating the precision of this path-specific approach.
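If the rename should apply to several known paths at once, one option is to list them on the left-hand side of the update operator. The sketch below renames city in both address objects; the compact input is a shortened stand-in for order.json.

```bash
order='{"customer":{"billingAddress":{"street":"123 Main St","city":"Anytown"},"shippingAddress":{"street":"456 Oak Ave","city":"Otherville"}}}'

# The comma lists two paths; |= applies the same rename to each of them.
both_renamed=$(printf '%s\n' "$order" | jq -c '
  (.customer.billingAddress, .customer.shippingAddress) |= (
    .locationCity = .city | del(.city)
  )
')

echo "$both_renamed"
```

Inside the parentheses, `.` refers to the address object being updated, so the long path does not need to be repeated.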

Renaming Keys Within All Objects in an Array

A very common scenario is when you have an array of objects, and you need to rename a key within each of those objects. jq's array iteration (.[]) combined with object manipulation is perfect for this.

Example: Renaming productId to itemId for all items in an items array

Using order.json, let's expand the items array:

{

"orderId": "O12345",

"customer": { ... },

"items": [

{

"productId": "P001",

"name": "Widget A",

"quantity": 2,

"price": 10.50

},

{

"productId": "P002",

"name": "Gadget B",

"quantity": 1,

"price": 25.00

}

]

}

Save this updated JSON as order_with_items.json.

We want to transform each productId into itemId within the items array.

The jq command:

    jq '
      .items[] |= (
        .itemId = .productId |
        del(.productId)
      )
    ' order_with_items.json

Explanation:

- .items[]: This part selects all elements within the items array.
- |=: This is the "update assignment" operator. It takes the current element as input, applies the filter on the right, and then updates the current element with the filter's output. In this case, each item object in the array is updated.
- (.itemId = .productId | del(.productId)): This is the same rename logic as before, applied to each individual item object. Within this context, . refers to the current item object being processed from the items array.

Output (partial, focusing on items array):

{

"orderId": "O12345",

"customer": { ... },

"items": [

{

"name": "Widget A",

"quantity": 2,

"price": 10.50,

"itemId": "P001"

},

{

"name": "Gadget B",

"quantity": 1,

"price": 25.00,

"itemId": "P002"

}

]

}

This is a very common and powerful pattern for batch transformations within arrays of objects.

Renaming Keys Anywhere in the Document (Recursive Descent)

What if you don't know the exact path to a key, or if a key (e.g., "id") appears at multiple, arbitrary levels of nesting and you want to rename all occurrences? jq's walk function, which visits every value in the document recursively, combined with with_entries can achieve this.

Example: Renaming all instances of id to identifier wherever they appear.

Consider a more complex JSON:

{

"userId": "U001",

"order": {

"id": "ORD001",

"items": [

{"id": "ITEM001", "name": "Pen"},

{"id": "ITEM002", "name": "Notebook", "category": {"id": "CAT01", "name": "Stationery"}}

]

},

"metadata": {

"auditId": "A999"

}

}

Save this as deep_data.json. We want to rename all id keys to identifier.

The jq command:

    jq '
      walk(
        if type == "object"
        then
          with_entries(
            if .key == "id" then .key = "identifier" else . end
          )
        else
          .
        end
      )
    ' deep_data.json

Explanation:

- walk(f): This is an extremely powerful jq function. It recursively traverses the entire JSON structure and applies filter f to every value (object, array, string, number, etc.) encountered during the traversal. The f filter is applied after the children have been processed (post-order traversal).
- if type == "object" then ... else . end: Inside walk, we first check if the current value (.) is an object. If it is, we proceed with renaming. If not (e.g., it's a string, number, or array), we leave it unchanged (.).
- with_entries(if .key == "id" then .key = "identifier" else . end): This is the familiar with_entries pattern applied to the current object. It iterates over the object's key-value pairs and renames id to identifier if found.

Output:

{

"userId": "U001",

"order": {

"identifier": "ORD001",

"items": [

{

"identifier": "ITEM001",

"name": "Pen"

},

{

"identifier": "ITEM002",

"name": "Notebook",

"category": {

"identifier": "CAT01",

"name": "Stationery"

}

}

]

},

"metadata": {

"auditId": "A999"

}

}

This walk function is incredibly versatile for applying transformations recursively, not just for renaming keys but for any form of data normalization or modification at arbitrary depths. It's an indispensable tool for API developers dealing with complex, potentially inconsistent, JSON payloads from various services.
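When the same recursive rename is needed in several places, the walk pattern can be wrapped in a small function. The sketch below assumes a jq build that ships walk; the helper name `rename_keys` is hypothetical, and its argument is a filter that maps an old key name to a new one.

```bash
data='{"auditId":"A999","id":"ORD001","items":[{"category":{"id":"CAT01"},"id":"ITEM001"}]}'

walked=$(printf '%s\n' "$data" | jq -c '
  # rename_keys is a hypothetical helper; f maps an old key name to a new one.
  def rename_keys(f):
    walk(if type == "object" then with_entries(.key |= f) else . end);
  rename_keys(if . == "id" then "identifier" else . end)
')

echo "$walked"
```

Only exact matches on "id" are renamed, so auditId is left alone.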

Conditional and Advanced Renaming Techniques

Beyond simple one-to-one renaming, jq excels at conditional transformations, where keys are renamed only if certain criteria are met, or when the new key name needs to be dynamically generated.

Conditional Renaming Based on Key Presence or Value

Sometimes, you might only want to rename a key if it exists, or if its associated value meets a specific condition.

Example 1: Rename code to productCode only if code exists

Assume some products might have a code and some might not.

[

{"name": "Laptop", "code": "LAP-001", "price": 1200},

{"name": "Mouse", "price": 25},

{"name": "Keyboard", "code": "KB-005", "price": 75}

]

Save as products.json.

    jq '
      map(
        if has("code") then
          .productCode = .code | del(.code)
        else
          .
        end
      )
    ' products.json

Explanation:

- map(...): Applies the enclosed filter to each element of the input array.
- if has("code") then ... else . end: For each object, has("code") checks if the code key exists.
  - If it does, the key is renamed from code to productCode.
  - If not, the object remains unchanged (.).

Output:

[

{

"name": "Laptop",

"price": 1200,

"productCode": "LAP-001"

},

{

"name": "Mouse",

"price": 25

},

{

"name": "Keyboard",

"price": 75,

"productCode": "KB-005"

}

]

Example 2: Rename status to orderStatus only if its value is "PENDING"

{

"orderId": "ORD100",

"status": "PENDING",

"customer": "Alice"

}

and

{

"orderId": "ORD101",

"status": "SHIPPED",

"customer": "Bob"

}

Assume these are individual objects.

    jq '
      if .status == "PENDING" then
        .orderStatus = .status | del(.status)
      else
        .
      end
    '

Run this on pending_order.json (the first example) and then shipped_order.json (the second example).

For pending_order.json:

{

"orderId": "ORD100",

"customer": "Alice",

"orderStatus": "PENDING"

}

For shipped_order.json:

{

"orderId": "ORD101",

"status": "SHIPPED",

"customer": "Bob"

}

Only the order with status: "PENDING" was transformed. This level of conditional control is invaluable for data validation and transformation pipelines, especially in API gateways where specific conditions trigger different processing logic.

Dynamic Key Naming

Sometimes, the new key name isn't fixed but needs to be constructed from existing data within the JSON.

Example: Prepending a prefix to a key name based on a value

Suppose you have data where a key like value needs to be renamed to something like product_value or service_value depending on a type field.

{

"type": "product",

"id": "P1",

"value": 123.45

}

and

{

"type": "service",

"id": "S2",

"value": "monthly"

}

Save as dynamic_data.json.

    jq '
      . as $obj |
      .value as $val |
      ($obj.type + "_value") as $newKey |
      del(.value) |
      .[$newKey] = $val
    ' dynamic_data.json

Explanation:

- . as $obj: Stores the entire input object in $obj.
- .value as $val: Stores the value of value in $val.
- ($obj.type + "_value") as $newKey: Constructs the new key name by concatenating the type value with _value and stores it in $newKey.
- del(.value): Deletes the original value key.
- .[$newKey] = $val: Uses bracket notation .[expression] to create a new key whose name is the result of $newKey and assigns it the stored $val.

Output for type "product":

{

"type": "product",

"id": "P1",

"product_value": 123.45

}

Output for type "service":

{

"type": "service",

"id": "S2",

"service_value": "monthly"

}

This dynamic key naming ability is extremely powerful for data normalization, especially when dealing with schemas that have variable components or require metadata to influence key structure.
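The same dynamic rename can also be sketched with with_entries, which keeps the renamed key where the old one was instead of moving it to the end. The input below deliberately places value in the middle of the object to make that visible; the variable name `$type` is just for illustration.

```bash
dynamic=$(printf '%s\n' '{"type":"service","value":"monthly","id":"S2"}' | jq -c '
  .type as $type
  | with_entries(if .key == "value" then .key = "\($type)_value" else . end)
')

echo "$dynamic"
```

Capturing `.type` before entering with_entries matters, because inside it `.` refers to a single entry rather than the whole object.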

Using select and map with with_entries for Targeted Renaming

Combining a per-element condition (an if expression in the example here, though select works as well) with map and with_entries allows for very precise transformations on subsets of data.

Example: Rename id to uniqueId only for objects that also have a type key set to "customer"

[

{"id": "C1", "type": "customer", "name": "Alice"},

{"id": "P1", "type": "product", "name": "Widget"},

{"id": "C2", "type": "customer", "name": "Bob"}

]

Save as mixed_data.json.

    jq '
      map(
        if .type == "customer" then
          with_entries(
            if .key == "id" then .key = "uniqueId" else . end
          )
        else
          .
        end
      )
    ' mixed_data.json

Explanation:

- map(...): Processes each item in the array.
- if .type == "customer" then ... else . end: Checks if the current object's type key is "customer".
  - If it is, the with_entries block is executed on that specific object.
  - If not, the object is passed through unchanged.
- with_entries(...): Inside the conditional block, this renames id to uniqueId for the customer objects.

Output:

[

{

"uniqueId": "C1",

"type": "customer",

"name": "Alice"

},

{

"id": "P1",

"type": "product",

"name": "Widget"

},

{

"uniqueId": "C2",

"type": "customer",

"name": "Bob"

}

]

This demonstrates fine-grained control over which objects and keys are subjected to transformation, crucial for maintaining data integrity in complex data pipelines.
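A select-based variant of the same idea might look like the sketch below. Instead of an if/else inside map, select picks the matching elements on the left-hand side of the update operator, and everything that does not match is left alone. The input is a shortened version of mixed_data.json.

```bash
mixed='[{"id":"C1","type":"customer"},{"id":"P1","type":"product"}]'

# Only the array elements that pass select(...) are updated.
selected=$(printf '%s\n' "$mixed" | jq -c '
  (.[] | select(.type == "customer")) |= with_entries(
    if .key == "id" then .key = "uniqueId" else . end
  )
')

echo "$selected"
```

Which form to prefer is mostly a matter of taste; the select form reads well when the condition is the main point and the else branch would only be an identity.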


jq in the API Ecosystem: API Gateways and OpenAPI

The ability to manipulate JSON data with jq extends far beyond ad-hoc command-line usage. It plays a critical role in the broader API ecosystem, particularly concerning API gateways and OpenAPI specifications. These tools and concepts are central to modern distributed systems, and jq's capabilities for data transformation are indispensable for their effective operation.

API Data Transformation: Bridging the Gaps

In a world of diverse services and evolving schemas, APIs often need to interact with data formats that are not perfectly aligned. A backend service might expose data in one format, but a frontend application, a third-party integration, or a downstream service might require a slightly different structure or naming convention. This is where jq shines as a data transformation workhorse.

Consider an API that fetches product details. The raw response from the inventory service might look like this:

{

"prod_id": "P101",

"prod_name": "Premium Keyboard",

"current_stock": 50,

"last_updated_ts": 1678886400

}

However, the consumer of this API (e.g., a mobile app) might expect:

{

"itemId": "P101",

"itemName": "Premium Keyboard",

"availableQuantity": 50,

"lastUpdated": "2023-03-15T13:20:00Z"

}

Here, multiple transformations are needed:

1. Rename prod_id to itemId.
2. Rename prod_name to itemName.
3. Rename current_stock to availableQuantity.
4. Rename last_updated_ts to lastUpdated and convert the Unix timestamp to an ISO 8601 string.
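The four steps above can be sketched as a single jq filter. Because every key changes, building a fresh object is simpler here than renaming in place. jq's builtin `todate` renders Unix seconds as an ISO 8601 UTC string; the shell variable names are only for illustration.

```bash
raw='{"prod_id":"P101","prod_name":"Premium Keyboard","current_stock":50,"last_updated_ts":1678886400}'

# Construct the consumer-facing object field by field;
# todate converts the Unix timestamp to an ISO 8601 string.
reshaped=$(printf '%s\n' "$raw" | jq -c '
  {
    itemId: .prod_id,
    itemName: .prod_name,
    availableQuantity: .current_stock,
    lastUpdated: (.last_updated_ts | todate)
  }
')

echo "$reshaped"
```

Note that object construction drops any key not listed, which is usually what a response contract wants but differs from the rename-in-place techniques shown earlier.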

While jq can handle all these transformations, writing and maintaining complex jq scripts can become challenging for an entire API catalog. This is where specialized API management platforms and API gateways come into play.

The Role of API Gateways in Data Transformation

An API gateway acts as a single entry point for all API requests, sitting between clients and backend services. Beyond basic routing, load balancing, and authentication, a key function of an API gateway is data transformation. API gateways can normalize requests before they reach backend services and transform responses before they are sent back to clients. This allows:

- Decoupling: Clients can interact with a consistent API interface, even if backend services evolve or use different data models.
- Version Management: API gateways can manage multiple API versions by translating requests/responses between them, allowing clients to use older versions while backend services run newer ones.
- Standardization: Enforcing common data formats and naming conventions across all APIs, improving consistency and reducing integration friction.
- Security: Masking sensitive data or restructuring payloads to meet security requirements.

Many API gateways offer built-in transformation capabilities, often using their own templating languages or integration with scripting engines. In some cases, jq-like syntax or actual jq execution might be integrated for complex JSON transformations.

For instance, an advanced API gateway like ApiPark offers robust API management and transformation features. As an open-source AI gateway and API management platform, ApiPark is designed to handle intricate API lifecycle management, including powerful data transformation capabilities that are crucial for integrating diverse services and AI models. While jq provides granular control for on-the-fly transformations at the command line, platforms like ApiPark centralize these operations, making it easier to maintain consistent data formats across various APIs and services, especially when dealing with AI-driven APIs or complex OpenAPI specifications. ApiPark simplifies the process of standardizing API formats, encapsulating prompts into REST APIs, and managing the entire API lifecycle from design to deployment. This is vital for modern enterprises where APIs are not just data endpoints but also critical business assets.

OpenAPI and Schema Alignment

OpenAPI (formerly Swagger) is a language-agnostic, human-readable specification for describing RESTful APIs. It defines the operations, parameters, responses, and data models of an API. When developing APIs based on OpenAPI specifications, ensuring that the actual API payloads conform to the defined schemas is critical for:

- Documentation: Accurate documentation generated from OpenAPI specs.
- Code Generation: Generating client SDKs or server stubs that correctly understand the data structures.
- Validation: Ensuring API requests and responses adhere to expected formats.

Key renaming, as performed by jq, is frequently necessary to align real-world JSON data with the ideal schemas defined in an OpenAPI document. If your OpenAPI specification dictates a key named camelCaseIdentifier but your internal service returns snake_case_identifier, a transformation step (perhaps at the API gateway or within a middleware layer) is required. jq can be used to prototype these transformations or even integrate them into automated scripts that preprocess data before it's validated against an OpenAPI schema. This ensures that the data model presented by your API matches its public contract, preventing unexpected errors and improving the developer experience for API consumers.
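As a rough prototype of such a snake_case to camelCase step, the sketch below combines walk with a regex substitution on every key. It assumes a jq build with walk and regex support (gsub); the capture name `c` and the sample payload are made up for illustration.

```bash
snake='{"line_items":[{"product_id":"P001","unit_price":10}],"order_id":"O1"}'

# For every object in the document, rewrite each key:
# an underscore followed by a lowercase letter becomes that letter uppercased.
camel=$(printf '%s\n' "$snake" | jq -c '
  walk(
    if type == "object"
    then with_entries(.key |= gsub("_(?<c>[a-z])"; .c | ascii_upcase))
    else .
    end
  )
')

echo "$camel"
```

Keys with unusual shapes (leading underscores, digits after an underscore, existing capitals) would need a more careful pattern, so treat this as a starting point rather than a complete converter.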

Best Practices and Performance Considerations

While jq is incredibly powerful, like any tool, it's most effective when used thoughtfully. Understanding best practices and performance considerations can significantly improve the maintainability, readability, and efficiency of your jq scripts.

Readability and Maintainability

Complex jq filters can quickly become difficult to read and understand, especially when dealing with nested logic or multiple transformations.

- Use . for Identity and Context: Remember that . represents the current input. Using it deliberately clarifies what data each step operates on.

- Break Down Complex Filters: For intricate transformations, use the pipe operator | to split the filter into smaller, manageable steps. Each step should perform a single logical operation.

- Use Variables (as $var): Store intermediate results or frequently used values in variables to avoid repetition and improve clarity. This is particularly useful when you need to refer back to the original input after performing transformations. A variable holds a value, not a location in the document, so assigning through it (for example $billing.city = ...) is not a valid path expression. Apply updates to the path itself, for example with |=:

  jq '.customer.billingAddress |= (.locationCity = .city | del(.city))' order.json

- Format Your jq Code: For multi-line scripts, use proper indentation. jq ignores whitespace between filters, allowing for clear formatting. Enclosing the jq filter in single quotes '...' is standard, but for filters that contain single quotes you might need bash's $'...' quoting, a heredoc (<<EOF ... EOF), or a separate filter file loaded with jq -f.

- Add Comments: jq treats everything from # to the end of the line as a comment, so multi-line filters can be annotated inline. You can also add comments in your shell script or Makefile above the jq command to explain its purpose.
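These points can be combined in one multi-line filter. The following is a sketch, assuming a hypothetical order document with a customer.billingAddress.city field: the filter is split into piped steps, indented, annotated with # comments, and uses a variable to carry the old value.

```shell
#!/bin/sh
# Hypothetical order document (field names are illustrative).
order='{"orderId": 1, "customer": {"billingAddress": {"city": "Berlin", "zip": "10115"}}}'

renamed=$(printf '%s\n' "$order" | jq -c '
  # Keep the original city in a variable for later reference
  .customer.billingAddress.city as $city
  # Step 1: drop the old key from the billing address
  | del(.customer.billingAddress.city)
  # Step 2: add the value back under the new key
  | .customer.billingAddress.locationCity = $city
')

printf '%s\n' "$renamed"
```

Comments inside a single-quoted filter should avoid apostrophes, since one would end the shell string early.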

Performance Considerations

jq is generally very fast, but for extremely large JSON files or high-throughput API environments, optimization might be necessary.

- Avoid Unnecessary Operations: Every filter and operation adds overhead. If you only need to process a small part of a large JSON document, try to select that part early in your filter chain.

- Streaming vs. In-Memory Processing: jq processes a sequence of concatenated JSON values one at a time, and with the --stream option it can parse a single very large document incrementally. However, operations that require the entire input to be in memory (e.g., sort_by, group_by, or building large mapping objects) will consume more memory. Be mindful of these operations for extremely large datasets.

- Path Specificity: When possible, use specific paths (.a.b.c) rather than recursive descent (..) if you only need to target keys at known locations. Recursive descent traverses the entire document, which can be slower.

- Regex Performance: If using test() or match() with regular expressions, ensure your regex patterns are efficient. Poorly constructed regexes can be computationally expensive.

- Measure and Profile: For critical API data transformations, especially within an API gateway, measure the performance impact of your jq scripts. Tools like time can give basic metrics. In a production API context, the API gateway itself would provide performance monitoring.
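A rough sketch of the streaming point: with --stream, jq emits path and value events instead of loading the whole document, and the fromstream(1 | truncate_stream(inputs)) idiom reassembles the elements of a top-level array one at a time. The small inline array stands in for a very large file, and the firstName and givenName keys are assumed for illustration.

```shell
#!/bin/sh
# Stand-in for a very large top-level array (illustrative data).
users='[{"firstName": "Ada", "id": 1}, {"firstName": "Alan", "id": 2}]'

# -n together with inputs lets the filter pull the stream events itself;
# each reassembled element is renamed and printed on its own line.
streamed=$(printf '%s\n' "$users" | jq -cn --stream '
  fromstream(1 | truncate_stream(inputs))
  | .givenName = .firstName
  | del(.firstName)
')

printf '%s\n' "$streamed"
```

Only one array element is held in memory at a time, at the cost of a slower parse than the default mode.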

Error Handling

While jq is resilient, malformed JSON input or filters that attempt to access non-existent keys in a way that produces an error can lead to unexpected outputs or script failures.

- Graceful Handling of Missing Keys: Use has("key") to test whether a key is present before operating on it. On an object, .key already yields null when the key is missing. The ? suffix (.key?) additionally suppresses the error raised when the input is not an object at all, producing no output instead of failing. Note that if .key then ... else ... end tests truthiness, so it also takes the else branch when the key is present but null or false.

- Default Values: Provide default values using // (the alternative operator) for keys that might be missing or null. For example, (.key // "default_value") will use default_value if .key is null, false, or missing.

- Input Validation: Ensure your jq script is robust against variations in input JSON schema. Testing with diverse inputs is crucial.
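The three techniques above can be sketched on small inline inputs. The key names (firstName, nickname) and sample values are assumptions chosen for illustration.

```shell
#!/bin/sh
# Guarded rename: only touch objects that actually have the key.
guard='if has("firstName") then .givenName = .firstName | del(.firstName) else . end'

with_key=$(printf '%s\n' '{"firstName": "Ada"}' | jq -c "$guard")
without_key=$(printf '%s\n' '{"id": 7}' | jq -c "$guard")

# // falls back when the left side is null, false, or missing.
nickname=$(printf '%s\n' '{"nickname": null}' | jq -r '.nickname // "anonymous"')

# .key? suppresses the error raised for non-object input, yielding no output,
# so collecting the results in an array gives an empty array.
non_object=$(printf '%s\n' '"just a string"' | jq -c '[.key?]')

printf '%s\n' "$with_key" "$without_key" "$nickname" "$non_object"
```

Without the has guard, the object lacking firstName would gain a givenName key set to null.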

By adhering to these best practices, you can write jq scripts that are not only functional but also maintainable, performant, and resilient, which is vital for reliable API data processing and API gateway operations.

Comparison with Other JSON Manipulation Tools

While jq is a stellar choice for command-line JSON manipulation, it's worth briefly noting other tools that can achieve similar results, and understanding why jq often stands out for its specific niche.

Python with json Module

Python is a general-purpose programming language with an excellent built-in json module.

Pros:

- Full Programming Power: Python offers the full power of a programming language, allowing for arbitrarily complex logic, external dependencies, and integration with other systems.

- Readability (for complex tasks): For very intricate transformations that involve more than just data mapping, a well-structured Python script can often be more readable than a dense jq filter.

- Ecosystem: Access to a vast ecosystem of libraries for data processing, networking, and more.

Cons:

- Setup Overhead: Requires a Python interpreter to be installed.

- Verbosity: Even for simple tasks, Python can be more verbose than jq. For example, loading a JSON file, parsing it, modifying it, and then dumping it back out takes more lines of code.

- Ad-hoc Use: Less convenient for quick, interactive command-line transformations compared to jq.

Example (Renaming firstName to givenName in Python):

import json
import sys

# Read JSON from stdin
data = json.load(sys.stdin)

# Rename the key only if it is present
if "firstName" in data:
    data["givenName"] = data["firstName"]
    del data["firstName"]

json.dump(data, sys.stdout, indent=2)

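This is clearly more code than jq '.givenName = .firstName | del(.firstName)', although the one-liner, unlike the Python script, adds givenName with a null value when firstName is absent.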

Node.js with JSON.parse and JSON.stringify

Similar to Python, Node.js provides a robust environment for JSON processing.

Pros:

- JavaScript Ecosystem: Leverages the vast npm ecosystem and JavaScript's native JSON support.

- Asynchronous Operations: Ideal for API and web-related tasks where asynchronous I/O is common.

Cons:

- Runtime Overhead: Requires the Node.js runtime.

- Verbosity: Similar to Python, more verbose for simple tasks.

sed / awk

These are powerful text processing utilities, but they are not JSON-aware.

Pros:

- Ubiquitous: Often pre-installed on Unix-like systems.

- Fast: Highly optimized for text processing.

Cons:

- Not JSON-Aware: They operate on text strings, not the JSON data model. Renaming keys with sed or awk reliably is extremely difficult and error-prone due to the lack of context about JSON structure (e.g., distinguishing a key from a string value, handling nested objects, escaping characters).

- Fragile: Changes in JSON formatting (whitespace, key order, nesting depth) can easily break sed/awk scripts.

Example (Don't do this, but for illustration - highly unreliable):

# This is a very fragile example and should NOT be used for real JSON parsing
sed 's/"firstName":/"givenName":/' user.json

This misses keys written with whitespace before the colon ("firstName" :), replaces only the first match on each line, and rewrites the key at every nesting level whether or not that was intended.
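To make the fragility concrete, the sketch below runs a text substitution and a jq filter over the same valid JSON, which happens to have a space before the colon. The input document is invented for illustration.

```shell
#!/bin/sh
# Valid JSON with a space before the colon (illustrative input).
input='{"firstName" : "Ada"}'

# The text pattern expects the colon directly after the closing quote,
# so the substitution matches nothing and the input passes through unchanged.
sed_out=$(printf '%s\n' "$input" | sed 's/"firstName":/"givenName":/')

# jq parses the document, so formatting differences do not matter.
jq_out=$(printf '%s\n' "$input" |
  jq -c 'with_entries(if .key == "firstName" then .key = "givenName" else . end)')

printf 'sed: %s\njq:  %s\n' "$sed_out" "$jq_out"
```

The sed command exits successfully even though it changed nothing, which is what makes this kind of failure easy to miss in a pipeline.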

Conclusion on Tools

jq occupies a sweet spot: it's JSON-aware, concise, fast, and ideal for command-line or shell scripting use cases. For simple to moderately complex JSON transformations, it is often quicker to write and more concise than a scripting language, while being far more robust than generic text processing tools like sed or awk. When the task becomes excessively complex, requires external data sources beyond simple lookups, or demands full programming logic, a general-purpose language like Python or Node.js becomes the more appropriate choice. But for most JSON transformation tasks in an API developer's or system administrator's toolkit, jq is an excellent default.

A Practical Use Case: Standardizing API Responses for OpenAPI Compliance

Let's tie together several concepts with a more elaborate practical example relevant to API development. Imagine you are building an API gateway layer for an existing microservice. This microservice returns product information, but its response schema (legacy_product.json) doesn't quite match the desired OpenAPI specification for your new unified API.

Legacy Microservice Response (legacy_product.json):

{
  "product_id": "P001",
  "name_en": "Super Widget",
  "description_en": "A really super widget for all your needs.",
  "sku_code": "SW-001-A",
  "pricing": {
    "base_price": 99.99,
    "currency_symbol": "$",
    "discount_percent": 10
  },
  "available_stock": 150,
  "category_tags": ["electronics", "home"],
  "supplier_info": {
    "supplier_id": "SUP001",
    "supplier_name": "Global Supplies Co."
  },
  "created_at": 1678886400,
  "last_modified": 1678972800,
  "active": true
}

Desired OpenAPI Compliant Schema (target_product.json):

{
  "id": "P001",
  "productName": "Super Widget",
  "description": "A really super widget for all your needs.",
  "sku": "SW-001-A",
  "priceDetails": {
    "amount": 89.99,
    "currency": "USD"
  },
  "stockQuantity": 150,
  "categories": ["electronics", "home"],
  "vendorDetails": {
    "vendorId": "SUP001",
    "vendorName": "Global Supplies Co."
  },
  "createdAt": "2023-03-15T13:20:00Z",
  "updatedAt": "2023-03-16T13:20:00Z",
  "isActive": true
}

Four values are derived rather than copied: amount is base_price - (base_price * discount_percent / 100) rounded to two decimal places, currency maps currency_symbol to its ISO code, and createdAt and updatedAt are the Unix timestamps 1678886400 and 1678972800 rendered as ISO 8601 strings in UTC.

We need to perform multiple key renames and some value transformations.

The jq Transformation Script:

jq '
  # Store the current input object for easy access
  . as $product |

  # Define currency symbol to ISO code mapping
  { "$": "USD", "€": "EUR", "£": "GBP" } as $currencyMap |

  {
    # Renaming top-level keys
    id: $product.product_id,
    productName: $product.name_en,
    description: $product.description_en,
    sku: $product.sku_code,
    stockQuantity: $product.available_stock,
    categories: $product.category_tags,
    isActive: $product.active,

    # Nested object transformation for pricing
    priceDetails: {
      # Discounted price, rounded to two decimal places
      amount: ($product.pricing.base_price * (100 - $product.pricing.discount_percent) | round / 100),
      # Map currency symbol to ISO code, with fallback
      currency: ($currencyMap[$product.pricing.currency_symbol] // "UNKNOWN")
    },

    # Nested object transformation for supplier info
    vendorDetails: {
      vendorId: $product.supplier_info.supplier_id,
      vendorName: $product.supplier_info.supplier_name
    },

    # Timestamp conversions: todate renders Unix epoch seconds as an ISO 8601 UTC string
    createdAt: ($product.created_at | todate),
    updatedAt: ($product.last_modified | todate)
  }
' legacy_product.json

Explanation of the jq script:

- . as $product: We store the entire input JSON object in a variable $product for clarity and ease of access to original values.

- { "$": "USD", ... } as $currencyMap: We define a mapping object for currency symbols to ISO codes. This demonstrates how a lookup table can be incorporated into a filter.

- The main output is constructed as a new object {}, where each key-value pair is explicitly defined. This gives precise control over the output structure and allows for calculations and transformations on values.

- id: $product.product_id: Renames product_id to id. The other top-level keys are renamed the same way.

- priceDetails: { ... }: A new nested object priceDetails is created.

  - amount: (... | round / 100): Multiplies the base price by (100 - discount_percent), which gives the discounted price in hundredths, rounds that to a whole number, and divides by 100, yielding 89.99. A bare round on the discounted price would round to the nearest integer (90). This is a value transformation, not just a key rename.

  - currency: ($currencyMap[$product.pricing.currency_symbol] // "UNKNOWN"): Uses $currencyMap to convert the symbol to an ISO code. The // "UNKNOWN" provides a fallback if the symbol isn't found in the map, demonstrating default value assignment.

- vendorDetails: { ... }: Similar nested object creation and key renaming for supplier information.

- createdAt: ($product.created_at | todate): Converts the Unix timestamp created_at into an ISO 8601 formatted string using jq's built-in todate function; 1678886400 becomes "2023-03-15T13:20:00Z". This kind of data type transformation is often required for OpenAPI schema validation.

- The final output is a completely restructured JSON object that matches the desired OpenAPI specification, effectively acting as an API gateway transformation filter. The keys appear in the order the filter lists them, which differs from the target listing but is not significant in JSON.

This example showcases how jq can be used to perform comprehensive transformations, not just simple key renames, making it a versatile tool for API developers and API management professionals. It bridges the gap between disparate data formats, supporting API consistency and OpenAPI compliance, which platforms like APIPark also manage.
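The price and timestamp conversions are easy to check in isolation. The sketch below feeds the sample price, discount, and timestamp from legacy_product.json into standalone expressions built on jq's round and todate built-ins; the shell variable names are only for illustration.

```shell
#!/bin/sh
# Discount: 99.99 reduced by 10 percent, rounded to two decimal places.
# price * (100 - percent) is the discounted price in hundredths.
amount=$(jq -n '99.99 * (100 - 10) | round / 100')

# Unix epoch seconds rendered as an ISO 8601 UTC timestamp.
created_at=$(jq -n -r '1678886400 | todate')

printf 'amount=%s createdAt=%s\n' "$amount" "$created_at"
```

Rounding before the final division keeps the result at two decimal places; applying round to the discounted price directly would round to a whole number.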

Conclusion

The journey through jq's capabilities for renaming keys in JSON reveals a tool of remarkable power and flexibility. From simple, top-level key transformations to complex, conditional, and recursive operations across deeply nested structures, jq provides an elegant and efficient syntax for manipulating JSON data at the command line. We've explored foundational techniques like direct assignment and deletion, the versatile with_entries filter, and advanced patterns using walk for arbitrary depth transformations.

The practical relevance of these techniques extends into the API ecosystem. In an environment where APIs are the backbone of digital services, the ability to rapidly adapt and standardize data formats is not merely convenient but essential. Whether it's aligning responses with evolving OpenAPI specifications, harmonizing data for integration across microservices, or preprocessing data for an API gateway, jq is a valuable utility. It helps developers and operators manage data schema discrepancies, keeping APIs consistent and reducing the friction inherent in distributed systems.

Furthermore, we've seen how dedicated API management platforms like APIPark build on these capabilities by centralizing and operationalizing API transformations, alongside API lifecycle management, AI model integration, and OpenAPI support. While jq provides granular control at the command line, platforms like APIPark provide the scalable infrastructure needed for enterprise-grade API governance, including complex data mapping for AI APIs and deployment.

Mastering jq not only equips you with a tool for immediate data transformation tasks but also fosters a deeper understanding of functional data processing. As you continue your work with JSON data, jq's concise syntax and filters can streamline your workflows and help preserve the integrity and usability of your JSON data across your APIs and applications.

Frequently Asked Questions (FAQs)

- What is jq and why should I use it for JSON manipulation? jq is a lightweight and flexible command-line JSON processor. You should use it because it is JSON-aware (unlike sed or awk), concise, very fast, and specifically designed for slicing, filtering, mapping, and transforming structured JSON data without breaking its integrity. It's ideal for scripting, API debugging, and data preprocessing.

- Can jq rename keys at any depth in a JSON document? Yes, jq can rename keys at any depth. For known, specific paths, you can chain accessors like .parent.child.key. For arbitrary depths, jq's walk() function combined with with_entries() allows for recursive traversal and conditional renaming of keys wherever they appear in the document.

- How can I rename multiple keys in a single jq command? You can rename multiple keys using a few methods:

  - Chained = and del(): For top-level or specific-path keys, jq '.newKey1 = .oldKey1 | del(.oldKey1) | .newKey2 = .oldKey2 | del(.oldKey2)'.

  - with_entries() with if/elif/else: This is more functional and scalable for multiple conditional renames: jq 'with_entries(if .key == "old1" then .key = "new1" elif .key == "old2" then .key = "new2" else . end)'.

  - Mapping Object: For many renames, define a mapping object and use it with with_entries() to dynamically rename keys.

- Is it possible to dynamically generate new key names using jq? Absolutely. jq allows you to construct key names from existing values within the JSON object. You can use string concatenation (.value_a + "_" + .value_b) to create a new key name as a string, and then use bracket notation (.[$dynamicKey] = $value) to assign a value to this dynamically generated key.

- How does key renaming with jq relate to API gateways and OpenAPI specifications? Key renaming is crucial for API gateways and OpenAPI specs because it helps standardize API data. An API gateway can use transformations (which can be prototyped or inspired by jq logic) to align backend service responses with a consistent public API schema, fulfilling OpenAPI definitions. This ensures compatibility for consumers, simplifies API versioning, and provides a unified experience across different services. Platforms like APIPark offer built-in capabilities to manage these transformations as part of their API management features.
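The mapping-object approach and the recursive rename from the answers above can be sketched as follows. The mapping and the sample documents are hypothetical, and the second command assumes jq 1.6 or later, where walk is available as a built-in.

```shell
#!/bin/sh
# Hypothetical old-name -> new-name mapping, passed to jq as a JSON argument.
map='{"old1": "new1", "old2": "new2"}'

# Top level only: look each key up in the mapping, keep it if not listed.
flat=$(printf '%s\n' '{"old1": 1, "old2": 2, "keep": 3}' |
  jq -c --argjson map "$map" 'with_entries(.key |= ($map[.] // .))')

# Any depth: apply the same rename to every object that walk visits.
deep=$(printf '%s\n' '{"old1": {"old2": [{"old1": true}]}}' |
  jq -c --argjson map "$map" \
    'walk(if type == "object" then with_entries(.key |= ($map[.] // .)) else . end)')

printf '%s\n' "$flat" "$deep"
```

Keeping the mapping outside the filter means new renames only require editing the JSON argument, not the jq program.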
