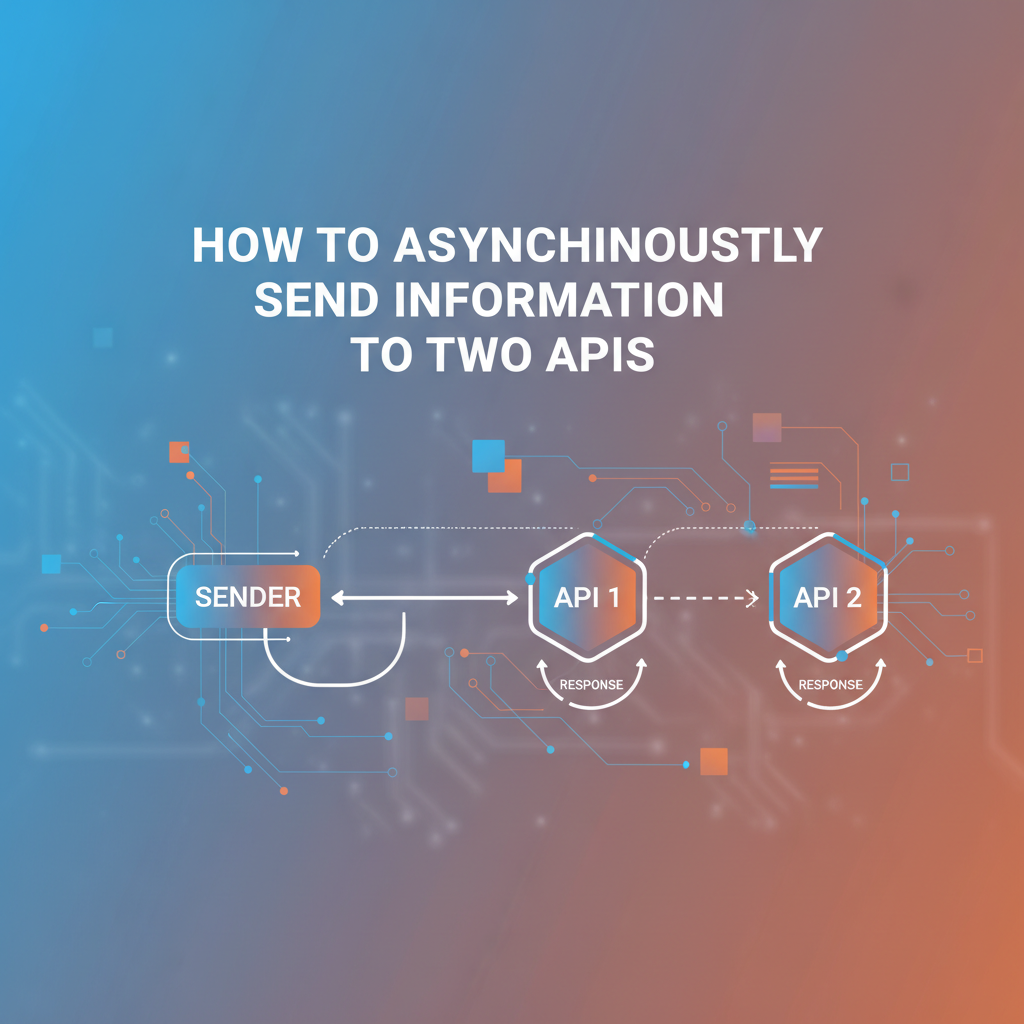

How to Asynchronously Send Information to Two APIs

In the vast and interconnected digital landscape of today, applications rarely exist in isolation. They are part of a complex ecosystem, constantly interacting with other services, platforms, and data sources. The backbone of this intricate communication network is the Application Programming Interface (API). From fetching user data to processing transactions, APIs facilitate the seamless exchange of information that powers our modern world. However, as systems grow in complexity and user expectations for responsiveness skyrocket, the traditional synchronous approach to API interaction often falls short, especially when dealing with multiple external services. This is where the power of asynchronous communication truly shines, offering a pathway to building more resilient, scalable, and responsive applications.

Imagine a scenario where a single user action, such as signing up for a service, needs to trigger updates across several disparate systems – perhaps creating a user record in a primary database, registering them with an email marketing platform, and simultaneously notifying an internal analytics service. If each of these operations were performed synchronously, the user would have to wait for all three API calls to complete before their sign-up process could finalize. This cascading dependency can lead to unacceptable delays, poor user experience, and a brittle system architecture prone to failure. If just one of these external APIs experiences a momentary slowdown or outage, the entire user registration process grinds to a halt.

This comprehensive guide will explore the intricacies of asynchronously sending information to two (or more) APIs. We will delve into the fundamental differences between synchronous and asynchronous communication, articulate the compelling reasons for adopting asynchronous patterns, and examine various architectural approaches and practical considerations. Our journey will cover everything from message queues and serverless functions to the role of an API gateway in orchestrating these interactions. By the end, you will understand how to design and implement robust, performant systems that use asynchronous API communication to remain responsive and resilient even in the face of distributed system challenges.

The Fundamental Divide: Synchronous vs. Asynchronous API Communication

To truly appreciate the advantages of asynchronous communication, it's essential to first grasp the nature of its counterpart: synchronous communication. Understanding both paradigms provides the necessary context for making informed architectural decisions tailored to specific application requirements and performance goals.

Synchronous API Communication: The Direct Approach

Synchronous communication is the most straightforward and often the default model for interacting with an API. When a client makes a synchronous API call, it sends a request and then waits for the server to process that request and return a response before proceeding with any further operations. Think of it like a conversation where you ask a question and remain silent, doing nothing else, until you receive an answer. Only after hearing the response do you formulate your next thought or action.

How it Works:

- Request Sent: The client initiates an API call, sending data to a specific endpoint.

- Blocking State: The client's execution thread enters a blocking state. It pauses all other operations, dedicating its resources to waiting for the server's reply.

- Server Processing: The API server receives the request, processes the data, performs any necessary operations (e.g., database writes, calculations), and prepares a response.

- Response Received: The server sends the response back to the client.

- Unblocking and Continuation: The client receives the response, unblocks its thread, and resumes its normal operations, often using the data from the response to continue its workflow.

Advantages of Synchronous Communication:

- Simplicity: For single, independent API calls, synchronous communication is easy to implement and understand. The flow of control is linear and predictable.

- Immediate Feedback: The client gets an immediate response, which is crucial for operations where the subsequent steps depend directly on the outcome of the API call (e.g., login authentication: you need to know if it succeeded before redirecting the user).

- Easier Debugging: The direct request-response cycle makes it simpler to trace issues, as the problematic step is usually evident from the point of failure.

Disadvantages of Synchronous Communication:

- Latency Accumulation: When calling multiple APIs synchronously, the total response time becomes the sum of the latencies of all individual calls, plus any network overhead. This can lead to significant delays for the end-user.

- Blocking Operations: The client's thread is blocked, meaning it cannot perform other useful work while waiting. In a web server context, this can tie up valuable resources, limiting the number of concurrent requests the server can handle and degrading overall performance and scalability.

- Reduced Resilience: If one API in a chain of synchronous calls becomes slow or unresponsive, it can cause the entire chain to slow down or fail. This "cascading failure" can bring down parts of or even the entire application.

- Tight Coupling: Synchronous calls often imply a direct dependency between the calling service and the called service. Changes in one can directly impact the other, making system evolution more challenging.

- Resource Consumption: Maintaining open connections and waiting threads consumes memory and CPU cycles, especially under high load.

Asynchronous API Communication: The Concurrent Approach

Asynchronous communication, in contrast, allows the client to send a request and then immediately continue with other tasks without waiting for a response. The client expresses its interest in a future response and delegates the waiting to another mechanism, freeing its primary thread to perform other work. When the response eventually arrives, a predefined callback or event handler processes it. This is analogous to sending an email or leaving a voicemail: you send your message and immediately move on to other tasks, expecting a reply at some point in the future, which you'll handle when it arrives.

How it Works (Conceptual):

- Request Sent: The client initiates an API call.

- Non-Blocking State: Instead of waiting, the client's execution thread immediately returns control, continuing with other operations. The API call is essentially handed off to a background process or mechanism.

- Server Processing: The API server processes the request.

- Notification/Callback: Once the server completes the processing, it sends a response back. This response might trigger a callback function on the client side, push a message to a queue, or trigger an event.

- Response Handling: The client, at a later point, receives and processes the response through the designated mechanism (e.g., an event listener, a promise resolution, or a message consumer).
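The conceptual flow above can be sketched in a few lines of JavaScript. This is only an illustration of the ordering of events: `callApi` is a hypothetical stand-in that simulates an API with a timer rather than making a network request.

```javascript
// Hypothetical stand-in for an API call: resolves after `delayMs` milliseconds.
function callApi(name, delayMs) {
  return new Promise((resolve) => {
    setTimeout(() => resolve(`${name} responded`), delayMs);
  });
}

function run() {
  const events = [];
  events.push('request sent');

  // Register a handler for the eventual response; this does not block.
  const pending = callApi('API A', 20).then((response) => {
    events.push(`response handled: ${response}`);
  });

  // Control returns immediately, so this runs before the response arrives.
  events.push('continuing with other work');

  // Resolve with the recorded order once the response has been handled.
  return pending.then(() => events);
}

run().then((events) => console.log(events));
```

The recorded order shows the handler running last, even though it was registered before the "other work" line executed.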

Advantages of Asynchronous Communication:

- Improved Responsiveness: The calling application remains responsive, as its main thread is not blocked waiting for API responses. This leads to a smoother user experience and better resource utilization.

- Enhanced Scalability: By not blocking threads, an application can handle many more concurrent API requests and other operations. This allows systems to scale more effectively to meet increasing demand.

- Increased Fault Tolerance: If one API call fails or is slow, it doesn't necessarily block other operations or cause a cascade of failures. Individual failures can be isolated and handled gracefully (e.g., retries, dead-letter queues).

- Decoupling: Asynchronous patterns naturally promote decoupling between services. The caller often doesn't need to know the intimate details of the callee's availability or processing time, only that the message will eventually be delivered and processed.

- Resource Optimization: Fewer blocking threads mean less memory and CPU consumption per request, making more efficient use of underlying hardware.

- Parallel Processing: Multiple asynchronous API calls can be initiated almost simultaneously and proceed concurrently, so the total time approaches the latency of the slowest call rather than the sum of all calls, as it would with sequential synchronous calls.

Disadvantages of Asynchronous Communication:

- Increased Complexity: Asynchronous patterns introduce more moving parts (callbacks, promises, queues, event handlers, state management across requests). This can make the code harder to write, debug, and reason about, especially for developers new to the paradigm.

- Eventual Consistency: Since operations are decoupled and occur independently, data across different systems might not be immediately consistent. There's a delay before all systems reflect the latest state, leading to "eventual consistency." Applications must be designed to handle this temporal discrepancy.

- Debugging Challenges: Tracing the flow of an asynchronous operation across multiple services and time can be significantly more challenging than debugging a linear synchronous flow. Distributed tracing tools become essential.

- Monitoring Complexity: Keeping track of the status of multiple outstanding asynchronous operations and ensuring they all complete successfully requires sophisticated monitoring and alerting systems.

In the context of sending information to two APIs, the choice between synchronous and asynchronous becomes even more critical. While a synchronous approach might seem simpler at first glance, the compounded latency and increased fragility often make it impractical in real-world scenarios. Asynchronous communication offers a powerful alternative, enabling applications to perform multiple API interactions efficiently, reliably, and without sacrificing user experience.
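The latency difference can be made concrete with a small timing sketch. It assumes two hypothetical APIs that each take about 100 ms, simulated here with timers (`fakeApi` is not a real client). In JavaScript, sequential `await` is the closest analogue of a synchronous chain.

```javascript
// Hypothetical stand-in for a remote API: resolves after `delayMs` milliseconds.
const fakeApi = (name, delayMs) =>
  new Promise((resolve) => setTimeout(() => resolve(name), delayMs));

// Sequential: the second call starts only after the first has finished.
async function sendSequentially() {
  const start = Date.now();
  await fakeApi('API A', 100);
  await fakeApi('API B', 100);
  return Date.now() - start; // roughly the sum of both latencies
}

// Concurrent: both calls are started before either is awaited.
async function sendConcurrently() {
  const start = Date.now();
  await Promise.all([fakeApi('API A', 100), fakeApi('API B', 100)]);
  return Date.now() - start; // roughly the latency of the slower call
}

async function main() {
  console.log('sequential ms:', await sendSequentially());
  console.log('concurrent ms:', await sendConcurrently());
}

main();
```

With equal latencies the concurrent version should take about half as long. With unequal latencies, it is bounded by the slower of the two calls.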

Why Asynchronously Send Information to Two APIs? Compelling Use Cases

The decision to send information asynchronously to two or more APIs isn't merely an architectural preference; it's often a strategic necessity driven by common business requirements and the pursuit of optimal system performance, resilience, and user experience. Let's explore some compelling scenarios where this pattern proves invaluable.

1. Data Replication and Synchronization Across Disparate Systems

Many enterprise applications operate with multiple specialized systems, each managing a specific domain of data. For instance, a customer relationship management (CRM) system might store detailed customer profiles, while a separate marketing automation platform handles email campaigns and lead nurturing. When a customer updates their contact information in the CRM, this change ideally needs to be reflected in the marketing platform to ensure consistent communication.

- Scenario: A user updates their email address in a web application.

- API Calls:

- Update User Profile API (Internal Database/CRM)

- Update Contact in Marketing Automation API (External System like Mailchimp, Salesforce Marketing Cloud)

- Why Asynchronous: Waiting for the marketing platform API to respond synchronously would unnecessarily delay the user's perception of their profile update being complete. Furthermore, if the marketing API is temporarily unavailable, the primary user profile update shouldn't fail. Asynchronously, the core profile update can succeed immediately, and the marketing update can be retried or processed in the background.
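A minimal in-process sketch of this scenario follows. The two API functions are hypothetical stand-ins (the marketing one fails on purpose), and `pendingRetries` is an illustrative in-memory list. A production system would more likely hand the secondary update to a durable queue.

```javascript
// Hypothetical stand-ins for the two APIs. The marketing API fails here on
// purpose to show that its failure does not affect the primary update.
async function updateProfileApi(user) {
  return { ok: true, email: user.email };
}
async function updateMarketingApi(user) {
  throw new Error('marketing API unavailable');
}

// Failed secondary updates are recorded so a background process can retry them.
const pendingRetries = [];

async function updateEmail(user) {
  // The primary update is awaited: the user needs to know it succeeded.
  const result = await updateProfileApi(user);

  // The secondary update is started but not awaited. Its errors are caught
  // so they never surface as a failure of the primary operation.
  const background = updateMarketingApi(user).catch((err) => {
    pendingRetries.push({ user, reason: err.message });
  });

  // `background` is returned only so callers can wait for it if they wish.
  return { result, background };
}

updateEmail({ id: 1, email: 'new@example.com' }).then(({ result }) => {
  console.log('profile updated:', result.ok);
});
```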

2. Event-Driven Workflows and Business Process Automation

Modern applications often orchestrate complex business processes that span multiple services. An event in one system can trigger a series of actions in others. Asynchronous communication is the natural fit for these event-driven architectures, allowing processes to unfold without blocking the initial event source.

- Scenario: A customer places an order on an e-commerce website.

- API Calls:

- Process Payment API (Payment Gateway like Stripe, PayPal)

- Update Inventory API (Internal Stock Management System)

- Notify Shipping API (Third-party Logistics Provider like FedEx, DHL)

- Send Order Confirmation Email API (Email Service like SendGrid, Mailgun)

- Why Asynchronous: The immediate priority is confirming the order to the customer and initiating payment. The inventory update, shipping notification, and email sending are crucial but don't need to happen in real-time while the user waits. Each of these can be triggered asynchronously after the initial order confirmation, allowing the system to respond quickly to the customer while reliably processing all subsequent steps in the background. If the shipping API is slow, it won't impact payment processing or the user's order confirmation page load.

3. Augmenting Data from Multiple Sources

Sometimes, a single request requires enriching data by pulling information from various specialized APIs. Performing these lookups synchronously can lead to noticeable delays, especially if the external services have varying latencies.

- Scenario: Displaying a product page that includes product details, real-time stock availability, and user reviews from different APIs.

- API Calls:

- Get Product Details API (Internal Product Catalog)

- Get Stock Availability API (Warehouse Management System)

- Get User Reviews API (Third-party Review Platform)

- Why Asynchronous: While some initial product details might be fetched synchronously to render the basic page, loading stock availability and user reviews can be done asynchronously and displayed once available. This improves the perceived performance of the page. Even better, if the server-side logic needs to aggregate this data before sending it to the client, initiating all three API calls in parallel asynchronously will drastically reduce the total time compared to sequential synchronous calls. The API gateway pattern, which we will discuss later, is particularly adept at handling such fan-out scenarios, making it easier to orchestrate these parallel calls.
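One way to sketch the server-side aggregation is with `Promise.allSettled`, which waits for every lookup and reports each outcome separately. The three lookup functions below are hypothetical stand-ins, and the review lookup fails deliberately to show partial results.

```javascript
// Hypothetical stand-ins for the three lookups; the review service fails.
const getProductDetails = async (id) => ({ id, name: 'Widget' });
const getStock = async (id) => ({ available: 12 });
const getReviews = async (id) => {
  throw new Error('review platform timed out');
};

async function buildProductPage(id) {
  // Start all three lookups at once. allSettled never rejects, so one
  // failing source cannot discard the results of the others.
  const [details, stock, reviews] = await Promise.allSettled([
    getProductDetails(id),
    getStock(id),
    getReviews(id),
  ]);

  const valueOr = (settled, fallback) =>
    settled.status === 'fulfilled' ? settled.value : fallback;

  return {
    details: valueOr(details, null),
    stock: valueOr(stock, null),
    reviews: valueOr(reviews, []), // degrade to an empty list on failure
  };
}

buildProductPage(42).then((page) => console.log(page));
```

Choosing `allSettled` over `Promise.all` is a design decision: `Promise.all` would reject the whole page as soon as one source failed.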

4. Performance Optimization and User Experience

Minimizing user waiting time is paramount for modern applications. Asynchronous calls inherently improve responsiveness by allowing the primary application thread to remain free, processing other requests or displaying immediate feedback to the user while background tasks complete.

- Scenario: A dashboard needs to display data from several analytical services.

- API Calls:

- Get Sales Data API

- Get Website Traffic API

- Get Social Media Engagement API

- Why Asynchronous: Each data point can be fetched independently and in parallel. The dashboard can then update widgets as the data becomes available, offering a progressive loading experience rather than making the user wait for all data points to load synchronously before rendering anything.
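Progressive loading can be sketched by giving each request its own handler instead of waiting for all of them together. The `source` helper and the widget names are illustrative assumptions, and `render` stands in for whatever updates the UI.

```javascript
// Hypothetical data source: resolves with `value` after `delayMs` milliseconds.
const source = (value, delayMs) =>
  new Promise((resolve) => setTimeout(() => resolve(value), delayMs));

function loadDashboard(render) {
  // Each request gets its own handler, so a widget is rendered as soon as
  // its data arrives rather than after the slowest request completes.
  const requests = {
    sales: source({ total: 1200 }, 30),
    traffic: source({ visits: 5400 }, 10),
    social: source({ likes: 310 }, 20),
  };

  const rendered = Object.entries(requests).map(([widget, request]) =>
    request.then((data) => render(widget, data))
  );

  return Promise.all(rendered); // resolves once every widget has rendered
}

loadDashboard((widget, data) => console.log('render', widget, data));
```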

5. Enhancing System Resilience and Fault Tolerance

Distributed systems are inherently prone to failures – network glitches, service outages, or slow responses are inevitable. Asynchronous communication patterns can build greater resilience into an architecture.

- Scenario: Publishing an article in a CMS that also needs to be cross-posted to a social media platform and indexed by a search service.

- API Calls:

- Publish Article API (CMS Internal)

- Post to Social Media API (e.g., Twitter, Facebook)

- Index Content API (e.g., Elasticsearch, Algolia)

- Why Asynchronous: The primary action (publishing the article) should succeed even if the social media API or indexing service is temporarily down. By making these secondary calls asynchronous, failures can be handled gracefully with retries, logging, or moving to a Dead Letter Queue (DLQ), without blocking the article publication process. The user gets immediate confirmation that their article is live, while the system ensures eventual consistency across all platforms.

In essence, asynchronously sending information to two APIs (or more) is a powerful technique for building systems that are not only faster and more responsive but also more robust, scalable, and decoupled. It enables applications to handle complex workflows gracefully, manage dependencies efficiently, and ultimately deliver a superior experience to the end-users. The choice to embrace asynchrony is a commitment to a more modern, resilient, and performant architecture.

Core Principles of Asynchronous Design

Successfully implementing asynchronous communication, especially when targeting multiple APIs, relies on adhering to several fundamental design principles. These principles guide the architecture and implementation, ensuring that the benefits of asynchrony are fully realized while mitigating its inherent complexities.

1. Decoupling

At the heart of asynchronous design is the principle of decoupling. It means reducing the direct dependencies between different components or services within a system. In a decoupled architecture, a service doesn't need to know the intimate details of another service's internal workings, its immediate availability, or its processing time. It simply needs to know how to send a message or trigger an event.

- Impact on Two APIs: When you asynchronously send information to two APIs, you effectively remove the direct temporal coupling between your calling service and each of the target APIs. Your service doesn't wait for API A to finish before sending to API B, nor does it wait for API B to respond. This allows each API to process information at its own pace, independently.

- Benefits:

- Increased Flexibility: Services can evolve independently. Changes in one API's implementation are less likely to break others.

- Improved Resilience: The failure or slowdown of one API does not necessarily bring down the entire workflow or other dependent services.

- Easier Maintenance: Components can be updated, scaled, or replaced without affecting other parts of the system, simplifying maintenance and deployment.

- Enhanced Scalability: Individual services can be scaled up or down based on their specific load requirements, rather than being constrained by the slowest link in a synchronous chain.

2. Non-Blocking Operations

Non-blocking operations are the technical manifestation of asynchronous behavior. Instead of waiting for an I/O operation (like an API call, database query, or file read/write) to complete, the executing thread immediately relinquishes control or moves on to other tasks. When the I/O operation finishes, a mechanism (e.g., an event loop, callback, or promise) notifies the system, and the corresponding result is processed.

- Impact on Two APIs: When you initiate two API calls asynchronously, your application's primary thread doesn't stop and wait for the first API call to finish before starting the second. Instead, it initiates both calls and immediately becomes available for other work. The responses from these APIs will arrive independently and be handled as they become available.

- Benefits:

- Maximized Resource Utilization: CPU cycles are not wasted waiting for I/O. The system can serve more requests or perform more computations concurrently with fewer resources.

- Improved Responsiveness: The user interface or primary application logic remains fluid and reactive, as it's not held up by potentially slow external interactions.

- Higher Throughput: The system can process a greater volume of requests in a given time frame.

3. Event-Driven Architecture (EDA)

Event-driven architecture is a software design paradigm that focuses on the production, detection, and consumption of events, and on the reactions to them. An event represents a significant change in state, such as "Order Placed," "User Registered," or "Payment Processed." Services communicate by emitting and subscribing to events rather than making direct API calls.

- Impact on Two APIs: Instead of directly calling "API A" and "API B," your service might emit an "Order Placed" event. Other services (or consumers listening to this event) would then react. One consumer might call "API A" (e.g., inventory update), and another might call "API B" (e.g., shipping notification). The original service doesn't even know which APIs are being called; it only knows it emitted an event.

- Benefits:

- Extreme Decoupling: Services don't even need to know about each other's existence, only about the events they are interested in.

- Scalability: New consumers can be added to react to existing events without modifying the event producer.

- Flexibility: Business logic can be easily extended by adding new event handlers.

- Real-time Processing: Well-suited for scenarios requiring real-time responses to system changes.
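The idea can be sketched in a single process with Node.js's built-in `EventEmitter`. This is only an in-process analogy for an event bus: the `order.placed` event name and the two API client functions are hypothetical, and a distributed system would publish events through a broker instead.

```javascript
const { EventEmitter } = require('events');

const bus = new EventEmitter();
const calls = []; // records which hypothetical API each subscriber called

// Hypothetical API clients; real ones would make HTTP requests.
const simulateLatency = () => new Promise((resolve) => setTimeout(resolve, 5));
const inventoryApi = async (order) => {
  await simulateLatency();
  calls.push(`inventory:${order.id}`);
};
const shippingApi = async (order) => {
  await simulateLatency();
  calls.push(`shipping:${order.id}`);
};

// Each subscriber reacts independently and handles its own errors.
bus.on('order.placed', (order) => {
  inventoryApi(order).catch((err) => console.error('inventory failed', err));
});
bus.on('order.placed', (order) => {
  shippingApi(order).catch((err) => console.error('shipping failed', err));
});

// The producer only announces what happened; it names no downstream API.
function placeOrder(order) {
  bus.emit('order.placed', order);
  return 'accepted';
}

console.log(placeOrder({ id: 'A-100' }));
```

Adding a third reaction, such as sending a confirmation email, would mean registering another listener. `placeOrder` itself would not change.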

4. Fault Tolerance

Fault tolerance refers to the ability of a system to continue operating, perhaps in a degraded manner, even when one or more of its components fail. In a distributed, asynchronous environment, failures are a given. Designing for fault tolerance means anticipating these failures and building mechanisms to handle them gracefully.

- Impact on Two APIs: If one of the two target APIs is down or returns an error, an asynchronous design allows you to isolate that failure. The call to the other API can still succeed. Mechanisms like retries with exponential backoff, circuit breakers, and Dead Letter Queues (DLQs) can ensure that the failed call is eventually processed or handled appropriately without impacting the overall system's stability.

- Benefits:

- Increased Reliability: The system is less likely to experience total outages due to isolated component failures.

- Improved User Experience: Users are less likely to encounter "service unavailable" errors for critical functions.

- Graceful Degradation: The system can continue to function partially, providing essential services even when non-critical components are experiencing issues.
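Retries with exponential backoff and a dead-letter fallback can be sketched as follows. The helper name `callWithRetry`, its options, and the two simulated APIs are assumptions made for illustration. The `deadLetters` array stands in for a real Dead Letter Queue.

```javascript
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Retries `operation` with exponentially growing delays. After the final
// attempt fails, the payload is parked in `deadLetters` for later inspection.
async function callWithRetry(operation, payload, options = {}) {
  const { maxAttempts = 3, baseDelayMs = 10, deadLetters = [] } = options;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      return { status: 'delivered', value: await operation(payload), attempt };
    } catch (err) {
      if (attempt === maxAttempts) {
        deadLetters.push({ payload, error: err.message });
        return { status: 'dead-lettered', attempt };
      }
      await sleep(baseDelayMs * 2 ** (attempt - 1)); // 10 ms, then 20 ms, ...
    }
  }
}

// Hypothetical APIs: A is healthy, B fails twice before recovering.
let bFailuresLeft = 2;
const apiA = async (data) => `A stored ${data.id}`;
const apiB = async (data) => {
  if (bFailuresLeft-- > 0) throw new Error('503 from API B');
  return `B stored ${data.id}`;
};

// Each call retries independently; a failure in B does not cause A to fail.
function sendToBoth(data) {
  return Promise.all([callWithRetry(apiA, data), callWithRetry(apiB, data)]);
}

sendToBoth({ id: 7 }).then((results) => console.log(results));
```

Because `callWithRetry` resolves with a status object instead of rejecting, `Promise.all` here never short-circuits on a failed API.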

5. Scalability

Scalability is the ability of a system to handle a growing amount of work by adding resources. Asynchronous designs inherently support scalability by enabling more efficient resource utilization and facilitating horizontal scaling.

- Impact on Two APIs: When using patterns like message queues, the workload of calling the two APIs can be distributed among multiple consumer instances. If the load increases, you can simply add more consumers to process messages faster. The original service calling the APIs doesn't need to change.

- Benefits:

- Horizontal Scaling: Easily add more instances of services or consumers to handle increased load without redesigning the architecture.

- Elasticity: Scale resources up or down dynamically based on demand, optimizing cost and performance.

- Efficient Resource Use: Prevents bottlenecks caused by blocking operations, allowing existing resources to handle more work.
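The competing-consumers idea behind horizontal scaling can be illustrated with an in-memory queue. This is a sketch under simplifying assumptions: the array stands in for a broker queue, the 10 ms sleep stands in for calling a target API, and all "workers" share one process.

```javascript
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Drains `messages` using `consumerCount` concurrent consumers and reports
// which consumer handled each message.
async function drain(messages, consumerCount) {
  const queue = [...messages]; // in-memory stand-in for a broker queue
  const processed = [];

  // Hypothetical consumer: takes messages until the queue is empty.
  async function consumer(name) {
    let message;
    while ((message = queue.shift()) !== undefined) {
      await sleep(10); // simulates the latency of calling the target API
      processed.push({ id: message.id, by: name });
    }
  }

  // Scaling out means starting more consumers; the producer is unchanged.
  const consumers = Array.from({ length: consumerCount }, (_, i) =>
    consumer(`worker-${i + 1}`)
  );
  await Promise.all(consumers);
  return processed;
}

const messages = [1, 2, 3, 4, 5, 6].map((id) => ({ id }));
drain(messages, 3).then((processed) => console.log(processed));
```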

These core principles form the bedrock of robust asynchronous API communication. By keeping them in mind throughout the design and implementation phases, developers can construct systems that are not only performant and responsive but also resilient, maintainable, and capable of evolving with future demands.

Architectural Patterns for Asynchronous API Calls to Multiple Endpoints

When the goal is to send information asynchronously to two or more APIs, various architectural patterns emerge, each with its strengths, complexities, and ideal use cases. The choice among these patterns depends on factors like the desired level of decoupling, consistency requirements, volume of data, and operational overhead.

A. Client-Side Asynchrony (Briefly Mentioned for Context)

While this article primarily focuses on server-side or service-to-service asynchronous communication, it's worth briefly touching upon client-side asynchrony as it forms the foundational understanding for non-blocking operations. In JavaScript, modern constructs like Promises, async/await, and the fetch API allow web browsers and Node.js applications to make multiple API calls concurrently without blocking the UI thread or the main event loop.

- Limitations: While effective for parallelizing calls from a single client, this pattern doesn't address server-side decoupling, fault tolerance for backend processes, or the complex orchestration of long-running operations. For robust server-to-server asynchronous communication, more sophisticated patterns are required.

Example (Node.js/JavaScript):

```javascript
// Sends the same payload to two APIs concurrently and returns both parsed responses.
async function sendDataToTwoAPIs(data) {
  const options = {
    method: 'POST',
    body: JSON.stringify(data),
    headers: { 'Content-Type': 'application/json' },
  };

  try {
    // Start both requests before awaiting either, so they run concurrently.
    const apiCall1 = fetch('https://api.example.com/serviceA', options);
    const apiCall2 = fetch('https://api.example.com/serviceB', options);

    // Promise.all rejects as soon as either request fails at the network level.
    const [response1, response2] = await Promise.all([apiCall1, apiCall2]);

    // fetch resolves on HTTP error statuses too, so check them explicitly.
    if (!response1.ok || !response2.ok) {
      throw new Error(`HTTP error: A=${response1.status}, B=${response2.status}`);
    }

    // Process responses
    const result1 = await response1.json();
    const result2 = await response2.json();
    console.log('API A Response:', result1);
    console.log('API B Response:', result2);
    return { result1, result2 };
  } catch (error) {
    // Handle errors for either API call
    console.error('An error occurred:', error);
  }
}

// Call the function
sendDataToTwoAPIs({ message: 'Hello from client!' });
```

B. Message Queues: The Workhorse for Robust Asynchrony

Message queues are perhaps the most common and powerful pattern for achieving robust asynchronous communication and decoupling in distributed systems. They act as intermediaries, storing messages until they can be processed by a consumer.

- Concept: A message queue operates on a producer-consumer model. A "producer" sends messages to a queue, and a "consumer" retrieves messages from the queue for processing. The queue itself acts as a buffer, ensuring messages are not lost if consumers are temporarily unavailable or overwhelmed.

- How it Works for Two APIs:

- Producer Sends Message: Your application (the producer) creates a message containing the information to be sent to the two APIs. This message is then published to a specific queue (or topic, depending on the message broker). Crucially, after sending the message, the producer's process can immediately continue without waiting.

- Message Stored: The message broker (e.g., RabbitMQ, Kafka, AWS SQS, Azure Service Bus) stores the message reliably in the queue.

- Consumers Process Messages:

- Option 1: Single Message, Two Consumers (Publish/Subscribe): If using a publish/subscribe model (like Kafka topics or RabbitMQ exchanges with fanout), a single message can be published to a topic/exchange. Two different consumers (Consumer A and Consumer B), each responsible for calling one of the target APIs, can subscribe to this topic. Both consumers receive a copy of the message independently and call their respective APIs. With Kafka, this requires each consumer to belong to its own consumer group, since consumers within one group split the messages between them. This offers maximum decoupling.

- Option 2: Single Message, One Orchestrating Consumer: A single consumer retrieves the message. This consumer's logic is then responsible for making the calls to both API A and API B, potentially in parallel using internal asynchronous mechanisms (like Promises in Node.js, `asyncio` in Python, or RxJava in Java). This offers more control over the immediate interaction between the two API calls but introduces a single point of failure and more coupling within the consumer.

- Option 3: Two Separate Messages, Two Separate Queues/Consumers: The producer might send two distinct messages, each tailored for a specific API, to two different queues. Each queue then has its dedicated consumer (or pool of consumers) responsible for calling its corresponding API. This is ideal when the two API calls require different message formats or processing logic.

- Benefits:

- Extreme Decoupling: Producers and consumers have no direct knowledge of each other. Producers don't know who consumes their messages, and consumers don't know who produced them.

- Load Leveling/Buffering: Queues absorb spikes in traffic, protecting downstream services from being overwhelmed.

- Fault Tolerance and Durability: Messages can be persisted in the queue, ensuring they are not lost even if consumers fail. Many message brokers support redelivery of unacknowledged messages and Dead Letter Queues (DLQs) for messages that cannot be processed successfully.

- Scalability: You can easily scale consumers horizontally by adding more instances to process messages from the queue in parallel.

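- Guaranteed Delivery (at least once): Message queues typically ensure that a message is delivered and processed at least once, even in the event of consumer failure. The trade-off is that a message may occasionally be delivered more than once, so consumers that call external APIs should be idempotent.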

- Examples of Message Brokers:

- RabbitMQ: A general-purpose message broker implementing the Advanced Message Queuing Protocol (AMQP). Excellent for complex routing and flexible messaging patterns.

- Apache Kafka: A distributed streaming platform, ideal for high-throughput, fault-tolerant real-time data feeds and event streaming.

- AWS SQS (Simple Queue Service): A fully managed message queuing service by Amazon, great for simple decoupling and scaling microservices.

- Azure Service Bus: Microsoft's fully managed enterprise message broker for integrating applications and services.
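Option 1 (one message, two consumers) can be illustrated without a broker using a small in-memory fan-out topic. `FanoutTopic` is a hypothetical class written for this sketch. It imitates only the publish/subscribe shape and offers none of the durability or delivery guarantees of RabbitMQ, Kafka, SQS, or Service Bus.

```javascript
// Minimal in-memory fan-out topic: every subscriber receives every message.
class FanoutTopic {
  constructor() {
    this.subscribers = [];
  }

  subscribe(handler) {
    this.subscribers.push(handler);
  }

  // Returns as soon as deliveries are scheduled; the producer does not wait
  // for any consumer to finish.
  publish(message) {
    for (const handler of this.subscribers) {
      setImmediate(() => {
        // Each subscriber gets its own shallow copy and its own error handling.
        Promise.resolve()
          .then(() => handler({ ...message }))
          .catch((err) => console.error('consumer failed:', err.message));
      });
    }
  }
}

const received = { a: [], b: [] };
const topic = new FanoutTopic();

// Consumer A and Consumer B would each call one of the two target APIs.
topic.subscribe(async (msg) => { received.a.push(msg.userId); });
topic.subscribe(async (msg) => { received.b.push(msg.userId); });

topic.publish({ userId: 'u-1' });
console.log('published; producer continues immediately');
```

Because each delivery has its own `catch`, an exception in one consumer does not prevent the other from receiving the message.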

C. Task Queues / Job Processors

Task queues are specialized forms of message queues designed to offload time-consuming, CPU-intensive, or background jobs from the main application thread. They often come with richer features like scheduling, retry logic, and monitoring dashboards.

- Concept: When an application needs to perform a background task (e.g., generating a report, sending mass emails, processing an image), it enqueues a "job" onto a task queue. A separate worker process picks up this job and executes it, often calling external APIs as part of the job.

- How it Works for Two APIs:

- Enqueue Jobs: The main application enqueues two separate jobs, `CallAPIAJob` and `CallAPIBJob`, each with the necessary data. Or, it can enqueue a single `OrchestrateTwoAPIsJob` which internally makes the two API calls.

- Worker Processes: Dedicated worker processes (often running on separate servers) poll the task queue.

- Job Execution: A worker picks up a job, executes the defined code (which involves calling one or both APIs), handles any errors or retries, and then marks the job as complete.

- Benefits:

- Simplified Background Processing: Provides a structured way to handle background tasks, often with built-in retry logic and error handling.

- Scheduling: Many task queues allow jobs to be scheduled for future execution.

- Monitoring: Often include dashboards to monitor job status, success/failure rates, and queue depth.

- Examples:

- Celery (Python): A widely used asynchronous task queue/job queue for Python applications.

- Sidekiq (Ruby): A popular background job processor for Ruby applications, powered by Redis.

- BullMQ (Node.js): A robust, fast, and reliable queue system for Node.js, also built on Redis.
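The enqueue, work, and retry cycle described above can be sketched with a small in-memory job queue. `JobQueue` is a hypothetical class for illustration and does not use the API of Celery, Sidekiq, or BullMQ, which add persistence, scheduling, and dashboards on top of this basic loop. The job names come from the text; the handlers are simulated.

```javascript
// Minimal in-memory job queue with per-job retry.
class JobQueue {
  constructor(handlers, maxAttempts = 3) {
    this.handlers = handlers; // job name -> async function(data)
    this.maxAttempts = maxAttempts;
    this.jobs = [];
    this.completed = [];
    this.failed = [];
  }

  enqueue(name, data) {
    this.jobs.push({ name, data, attempts: 0 });
  }

  // A worker loop: take a job, run its handler, re-enqueue it on failure.
  async work() {
    let job;
    while ((job = this.jobs.shift()) !== undefined) {
      job.attempts += 1;
      try {
        await this.handlers[job.name](job.data);
        this.completed.push(job);
      } catch (err) {
        if (job.attempts < this.maxAttempts) this.jobs.push(job);
        else this.failed.push({ ...job, error: err.message });
      }
    }
  }
}

// Hypothetical handlers: the API B job fails on its first attempt.
let bCalls = 0;
const jobQueue = new JobQueue({
  CallAPIAJob: async (data) => `A got ${data.orderId}`,
  CallAPIBJob: async (data) => {
    if (++bCalls === 1) throw new Error('API B timeout');
    return `B got ${data.orderId}`;
  },
});

jobQueue.enqueue('CallAPIAJob', { orderId: 1 });
jobQueue.enqueue('CallAPIBJob', { orderId: 1 });
jobQueue.work().then(() => {
  console.log(jobQueue.completed.map((job) => `${job.name} x${job.attempts}`));
});
```

Enqueueing two separate jobs means a failure in the API B job is retried on its own, without repeating the call to API A.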

D. Serverless Functions (FaaS) & Orchestration

Serverless computing (Function as a Service - FaaS) offers a powerful, event-driven paradigm for executing code in response to events without provisioning or managing servers. Combined with orchestration tools, it becomes an excellent choice for complex asynchronous workflows involving multiple APIs.

- Concept: You write small, stateless functions (e.g., AWS Lambda, Azure Functions, Google Cloud Functions) that are triggered by various events (e.g., an HTTP request, a message in a queue, a file upload). The cloud provider automatically manages the underlying infrastructure, scaling functions up or down based on demand.

- How it Works for Two APIs:

- Triggering Function: An initial event (e.g., an HTTP POST request to an API gateway, a message arriving in an SQS queue) triggers a serverless function.

- Parallel API Calls within a Function: The triggered function can then asynchronously initiate calls to both API A and API B using language-native asynchronous capabilities (e.g., `Promise.all` in a Node.js Lambda, `Task.WhenAll` in a C# Azure Function). It awaits both responses before proceeding or simply fires and forgets if no immediate response handling is needed. This function effectively acts as a lightweight orchestrator.

- Function Chaining/Orchestration: For more complex, multi-step workflows, dedicated serverless orchestration services can be used:

- AWS Step Functions: Allows you to define workflows as state machines, visually chaining together multiple Lambda functions, API calls, and other services. It handles retries, parallel execution, error handling, and state management between steps. You can easily define a parallel state that invokes two distinct Lambda functions, each responsible for one API call.

- Azure Logic Apps / Durable Functions: Similar to Step Functions, Logic Apps provide a visual designer for workflows, while Durable Functions allow you to write orchestrator functions that manage state and execution of other functions over time.

- Google Cloud Workflows: Orchestrates and automates HTTP-based APIs and Google Cloud services with serverless workflows.

- Benefits:

- High Scalability: Functions scale automatically in response to load, up to the concurrency limits set by the provider.

- Reduced Operational Overhead: No servers to provision or patch; you still monitor the functions themselves.

- Cost-Effective: Pay only for the compute time consumed by your functions.

- Inherent Asynchrony: Designed around an event-driven model, making asynchrony natural.

- Built-in Resilience: Orchestration services provide robust error handling, retries, and state management.
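As a rough Python counterpart to the `Promise.all` and `Task.WhenAll` calls mentioned above, the sketch below uses `asyncio.gather` inside a single handler. The `handler` entry point and the two `call_api_*` coroutines are hypothetical; the API calls are simulated with `asyncio.sleep` so the example runs without network access.

```python
import asyncio

async def call_api_a(payload: dict) -> dict:
    await asyncio.sleep(0.05)  # simulated network latency
    return {"api": "A", "status": "ok"}

async def call_api_b(payload: dict) -> dict:
    await asyncio.sleep(0.05)
    raise RuntimeError("API B unavailable")  # simulated failure

def describe(result):
    """Turn a gathered result or exception into something loggable."""
    return f"failed: {result}" if isinstance(result, Exception) else result

async def handler(event: dict) -> dict:
    """Hypothetical function entry point: call both APIs concurrently."""
    result_a, result_b = await asyncio.gather(
        call_api_a(event),
        call_api_b(event),
        return_exceptions=True,  # a failure in one call does not discard the other
    )
    return {"a": describe(result_a), "b": describe(result_b)}

outcome = asyncio.run(handler({"user_id": 42}))
```

With `return_exceptions=True`, the failure of API B is returned as a value rather than raised, so the result from API A is still available to the caller.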

E. The Role of an API Gateway in Asynchronous Communication

An API gateway is a critical component in modern microservices architectures, acting as a single entry point for all client requests. It sits in front of backend services, routing requests, enforcing policies, and handling various cross-cutting concerns. While not an asynchronous mechanism itself in the way message queues are, an API gateway plays a pivotal role in enabling and managing asynchronous flows, especially when sending data to multiple APIs.

- What is an API Gateway? An API gateway is essentially a reverse proxy that accepts API calls, enforces security policies, handles routing, and can potentially transform requests and responses. It serves as an abstraction layer, shielding clients from the complexity of the underlying microservices architecture. It can also manage traffic, handle rate limiting, authentication, and logging for all incoming API traffic.

- How an API Gateway Supports Asynchrony:

- Request Buffering and Decoupling: An API gateway can accept an incoming request from a client, immediately return a "202 Accepted" response, and then place the actual processing request onto a message queue. This decouples the client from the backend processing, providing immediate feedback to the client while the heavy lifting happens asynchronously.

- Fan-out to Multiple APIs: A single incoming request to the API gateway can be configured to trigger calls to multiple backend services or APIs in parallel. The gateway handles initiating these concurrent calls and might aggregate their responses (if synchronous fan-out is desired, which can still be initiated asynchronously from the client's perspective) or simply ensure they are triggered. This is particularly useful for the data augmentation and event-driven scenarios discussed earlier.

- Asynchronous Response Handling (202 Accepted): For long-running operations or processes involving multiple backend APIs, the API gateway can acknowledge the initial request with a `202 Accepted` status. The client is then given a "ticket" or a status URL to poll for the final result, or the result can be pushed back to the client via webhooks or WebSockets when complete. This frees the client from waiting.

- Backend Service Abstraction: The API gateway hides the complexity of multiple backend API calls from the client. The client makes one call to the gateway, and the gateway orchestrates the necessary interactions with two or more underlying APIs.

- Traffic Management and Resilience: Gateways provide features like rate limiting, throttling, load balancing, and circuit breakers. These are crucial for protecting downstream asynchronous systems from being overwhelmed and for preventing cascading failures if one of the target APIs becomes unhealthy.

- Centralized Monitoring and Logging: All requests flowing through the gateway can be logged and monitored centrally. This provides invaluable observability into asynchronous workflows, helping to trace requests across multiple backend API calls.

- Connecting to APIPark: For organizations seeking robust API management and gateway capabilities, especially in a world increasingly reliant on AI services, a platform like APIPark offers comprehensive solutions. As an open-source AI gateway and API management platform, APIPark is designed to simplify the complexities of integrating and managing various AI and REST services. When you need to send information asynchronously to two distinct APIs, particularly if one or both involve AI models, an AI gateway like APIPark can abstract away much of the underlying complexity. APIPark's features such as Quick Integration of 100+ AI Models and Unified API Format for AI Invocation mean that even if your two target APIs include complex AI services, the API gateway provides a standardized and managed interface. It can take a single incoming request, transform it, and fan it out to both a traditional REST API and an AI model endpoint, handling authentication, rate limiting, and even prompt encapsulation (Prompt Encapsulation into REST API) in a transparent manner. This enables developers to build event-driven systems where a simple trigger can asynchronously initiate sophisticated processes involving multiple specialized APIs, ensuring performance and stability. The End-to-End API Lifecycle Management provided by APIPark further assists in regulating these asynchronous API management processes, including traffic forwarding, load balancing, and versioning of published APIs, making it an invaluable tool for orchestrating complex multi-API interactions.
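The "202 Accepted plus status URL" flow described in this section can be sketched in a few lines of Python. Everything here is an illustrative assumption rather than the behavior of any particular gateway product: the in-memory `jobs` store, the `/jobs/<id>` URL shape, and the simulated `call_backend` coroutine are all hypothetical.

```python
import asyncio
import uuid

jobs: dict[str, dict] = {}  # in-memory status store (assumption; use a real store in practice)

async def call_backend(name: str, payload: dict) -> str:
    await asyncio.sleep(0.01)  # simulated backend latency
    return f"{name} received {payload['user_id']}"

async def process(job_id: str, payload: dict) -> None:
    """Fan out to both backends, then record the final state for pollers."""
    results = await asyncio.gather(
        call_backend("api-a", payload),
        call_backend("api-b", payload),
    )
    jobs[job_id] = {"status": "done", "results": list(results)}

def accept(payload: dict, background: set) -> tuple[int, dict]:
    """Acknowledge immediately; the real work runs as a background task."""
    job_id = str(uuid.uuid4())
    jobs[job_id] = {"status": "pending"}
    # Keep a reference to the task so it is not garbage-collected mid-flight.
    background.add(asyncio.create_task(process(job_id, payload)))
    return 202, {"status_url": f"/jobs/{job_id}"}

async def main():
    background: set = set()
    status_code, body = accept({"user_id": 42}, background)
    job_id = body["status_url"].rsplit("/", 1)[-1]
    status_at_accept = jobs[job_id]["status"]  # what a client polling right away would see
    await asyncio.gather(*background)          # stand-in for "some time later"
    return status_code, status_at_accept, jobs[job_id]

status_code, status_at_accept, final_state = asyncio.run(main())
```

The client receives its acknowledgement before either backend has been contacted, and a later poll of the status URL would return the aggregated result.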

Choosing the Right Strategy

The selection of an architectural pattern depends heavily on specific requirements:

- Simple decoupling, high volume, fire-and-forget: Message Queues (Option 1: Publish/Subscribe) are excellent.

- Complex workflows, state management, built-in retries: Serverless Orchestration (Step Functions, Logic Apps) is ideal.

- Exposure of backend services, traffic management, simple fan-out: An API gateway is a foundational layer, often used in conjunction with other patterns.

- Background tasks, scheduling, dedicated workers: Task Queues are a good fit.

Often, a combination of these patterns yields the most robust and scalable asynchronous architecture. For example, an API gateway might accept a request, place it on a message queue, and then a serverless function might consume that message and orchestrate calls to two backend APIs using its internal async/await features.


Implementing Asynchronous Calls: Practical Considerations

Beyond choosing the right architectural pattern, successful implementation of asynchronous API calls, especially to multiple endpoints, requires careful attention to several practical considerations. Neglecting these aspects can lead to systems that are difficult to manage, hard to debug, and ultimately unreliable.

A. Error Handling and Retries

Failures are inevitable in distributed systems. External APIs can be slow, return errors, or be temporarily unavailable. A robust asynchronous system must be designed to handle these eventualities gracefully.

- Idempotency: A critical concept when dealing with retries. An operation is idempotent if executing it multiple times produces the same result as executing it once. For example, setting a value is idempotent (setting `status = 'processed'` multiple times has the same effect), but incrementing a counter is not. When designing API calls that might be retried, ensure they are idempotent where possible. If not, design the consumer logic to check for duplicates or use unique transaction IDs to prevent unintended side effects.

- Exponential Backoff: Instead of immediately retrying a failed API call, implement an exponential backoff strategy. This means increasing the delay between successive retries (e.g., 1 second, then 2, then 4, then 8, up to a maximum). This prevents overwhelming a potentially recovering service and gives it time to stabilize. Most message brokers and serverless orchestration services offer built-in exponential backoff for retries.

- Dead Letter Queues (DLQs): Messages or jobs that consistently fail after a maximum number of retries should be moved to a Dead Letter Queue. This prevents poison messages from endlessly blocking the main processing queue. DLQs allow engineers to inspect failed messages, understand the root cause of the failure, and potentially reprocess them manually or after a fix.

- Circuit Breakers: Implement the circuit breaker pattern. If an external API experiences a high rate of failures, the circuit breaker "opens," preventing further calls to that API for a predefined period. This gives the failing service time to recover and prevents your application from wasting resources on calls that are likely to fail. After a timeout, the circuit breaker enters a "half-open" state, allowing a few test requests to pass through. If they succeed, the circuit closes; otherwise, it opens again.

- Timeouts: Always configure reasonable timeouts for external API calls. This prevents your application from hanging indefinitely if an external service becomes unresponsive. If a timeout occurs, treat it as a temporary failure and apply retry logic.
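To make the retry, backoff, and dead-letter ideas above concrete, here is a small sketch. The names `TransientError`, `backoff_delays`, and `call_with_retries` are invented for this example, the DLQ is just a Python list, and the two "APIs" are local functions that fail on purpose; a real system would rely on its broker's or orchestrator's retry and DLQ features.

```python
import time

class TransientError(Exception):
    """A failure worth retrying (timeout, 503, connection reset, ...)."""

def backoff_delays(base=1.0, factor=2.0, cap=30.0, attempts=5):
    """Delay before each retry: base, base*factor, base*factor^2, ..., capped at cap."""
    return [min(cap, base * factor ** i) for i in range(attempts)]

def call_with_retries(func, message, dead_letters, max_retries=3, base=0.01, sleep=time.sleep):
    """Call func(message); once retries are exhausted, park the message in the DLQ."""
    delays = backoff_delays(base=base, attempts=max_retries)
    for attempt in range(max_retries + 1):
        try:
            return func(message)
        except TransientError as exc:
            if attempt == max_retries:
                dead_letters.append({"message": message, "error": str(exc)})
                return None
            sleep(delays[attempt])  # wait longer after each failed attempt

calls = {"count": 0}

def flaky_api(message):
    # Simulated API that times out twice, then succeeds.
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("timeout")
    return f"accepted {message['id']}"

def broken_api(message):
    # Simulated API that never recovers.
    raise TransientError("503 from API B")

dlq = []
ok = call_with_retries(flaky_api, {"id": 1}, dlq)
failed = call_with_retries(broken_api, {"id": 2}, dlq)
```

The tiny `base` value keeps the demo fast; with the defaults of `backoff_delays`, the waits follow the 1, 2, 4, 8 second progression described above.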

B. Observability and Monitoring

Asynchronous flows, by their nature, are harder to observe than linear synchronous calls. A comprehensive observability strategy is crucial for understanding system behavior, identifying bottlenecks, and troubleshooting issues.

- Distributed Tracing: Implement distributed tracing (e.g., using OpenTelemetry, Jaeger, Zipkin). This allows you to follow a single request or event as it travels across multiple services, queues, and functions, even when the execution is asynchronous. Tracing provides a clear timeline of operations, showing latencies and identifying which service or API call is causing delays or failures.

- Logging: Implement centralized, structured logging. Every significant event, message ingestion, API call (request and response), and error should be logged with sufficient context (e.g., correlation IDs, timestamps, service names, relevant business IDs). Centralized logging tools (e.g., ELK Stack, Splunk, Datadog) enable aggregation, searching, and analysis of logs across the entire distributed system.

- Metrics: Collect and monitor key performance indicators (KPIs) for each component of your asynchronous workflow.

- Queue Metrics: Queue depth, number of messages sent/received, processing rates, message age.

- API Metrics: Latency (response time), error rates (4xx, 5xx), throughput (requests per second) for each external API call.

- Consumer Metrics: Number of active consumers, processing time per message, number of successful/failed processes.

- Alerting: Set up alerts based on these metrics. Thresholds for queue depth, API error rates, consumer lag, or sudden drops in throughput should trigger notifications to operations teams. Alerts should be actionable and provide enough context to diagnose issues quickly.

- APIPark's Detailed API Call Logging and Powerful Data Analysis: Platforms like APIPark inherently offer Detailed API Call Logging, recording every detail of each API call. This is invaluable in an asynchronous setup, allowing businesses to quickly trace and troubleshoot issues. Furthermore, its Powerful Data Analysis capabilities can analyze historical call data to display long-term trends and performance changes, assisting with preventive maintenance and ensuring the stability of your asynchronous interactions.
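One way to carry a correlation ID through structured logs, as suggested above, is sketched below with the standard `logging`, `json`, and `contextvars` modules. The field names, the `signup-worker` logger name, and the in-memory stream (standing in for a log shipper) are assumptions made for the example.

```python
import contextvars
import io
import json
import logging
import uuid

# Holds the ID of the request or event currently being processed.
correlation_id = contextvars.ContextVar("correlation_id", default="-")

class JsonFormatter(logging.Formatter):
    """Emit one JSON object per log line, tagged with the current correlation ID."""
    def format(self, record):
        return json.dumps({
            "ts": self.formatTime(record),
            "level": record.levelname,
            "service": record.name,
            "correlation_id": correlation_id.get(),
            "message": record.getMessage(),
        })

stream = io.StringIO()  # stands in for stdout or a log shipper
log_handler = logging.StreamHandler(stream)
log_handler.setFormatter(JsonFormatter())
log = logging.getLogger("signup-worker")  # hypothetical service name
log.setLevel(logging.INFO)
log.addHandler(log_handler)
log.propagate = False

def handle_event(event):
    # Reuse the upstream ID if present so logs from every hop can be joined.
    correlation_id.set(event.get("correlation_id") or str(uuid.uuid4()))
    log.info("calling API A")
    log.info("calling API B")

handle_event({"correlation_id": "req-123"})
records = [json.loads(line) for line in stream.getvalue().splitlines()]
```

Because both log lines share the same `correlation_id`, a centralized log search can reassemble the two API calls into one logical request.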

C. Data Consistency (Eventual Consistency)

One of the trade-offs of highly decoupled and asynchronous systems is that data across different services might not be immediately consistent. This is known as "eventual consistency."

- Understanding the Trade-offs: Accept that after an update, it might take some time for all dependent systems to reflect the new state. For many business processes (e.g., sending an order confirmation email after an order is placed), eventual consistency is perfectly acceptable. For others (e.g., critical financial transactions), strong consistency might still be required, necessitating different patterns (like two-phase commit, though this often reintroduces synchronous blocking).

- Strategies for Managing Eventual Consistency:

- Saga Pattern: For complex business transactions involving multiple services, a Saga defines a sequence of local transactions, where each transaction updates data within a single service and publishes an event to trigger the next step. If a step fails, compensation transactions are executed to undo the preceding successful transactions, maintaining business consistency.

- Idempotent Consumers: Ensure consumers can handle duplicate messages, as message queues often guarantee "at-least-once" delivery, which can lead to duplicates.

- Read-Your-Writes Consistency: For user-facing features, ensure that a user can immediately read their own updates, even if those updates haven't propagated everywhere else yet. This often involves caching or specific data retrieval logic.

- User Feedback: Provide clear feedback to the user that an operation has been initiated and is being processed, rather than making them wait for full eventual consistency across all systems.
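The compensation idea behind the Saga pattern can be illustrated with a short sketch. Note the simplification: the description above is event-driven, whereas this example runs the steps in a single process with a hypothetical `run_saga` helper, and the step functions only record what they would do.

```python
def run_saga(steps, context):
    """Run (action, compensation) pairs in order; undo completed steps on failure."""
    completed = []
    for action, compensate in steps:
        try:
            action(context)
            completed.append(compensate)
        except Exception as exc:
            # Roll back in reverse order so later steps are undone first.
            for undo in reversed(completed):
                undo(context)
            return False, str(exc)
    return True, None

audit = []

def create_user(ctx):
    audit.append("create_user")

def delete_user(ctx):
    audit.append("delete_user")

def subscribe_email(ctx):
    raise RuntimeError("email API down")  # simulated failure of the second API

def unsubscribe_email(ctx):
    audit.append("unsubscribe_email")

ok, error = run_saga(
    [(create_user, delete_user), (subscribe_email, unsubscribe_email)],
    {"user_id": 42},
)
```

Only the step that actually succeeded is compensated: the user record is removed, while the email subscription that never happened is left alone.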

D. Security

Security is paramount for any API interaction, especially when orchestrating calls to multiple external APIs. Each API call is a potential attack vector.

- Secure Communication: Always use HTTPS/TLS for all API communications to encrypt data in transit, preventing eavesdropping and tampering.

- Authentication and Authorization: Each external API call must be properly authenticated (e.g., API keys, OAuth tokens, JWTs) and authorized. Your application must securely store and manage these credentials. Avoid embedding secrets directly in code.

- Principle of Least Privilege: Grant only the minimum necessary permissions to your services when interacting with external APIs. If an API key only needs read access, don't give it write access.

- Input Validation: Thoroughly validate all data received from external sources and before sending data to external APIs. This prevents injection attacks and ensures data integrity.

- APIPark's Access Controls: An API gateway like APIPark provides strong security features. It enables Independent API and Access Permissions for Each Tenant, ensuring multi-tenancy security. Crucially, its API Resource Access Requires Approval feature means that callers must subscribe to an API and await administrator approval before they can invoke it, significantly preventing unauthorized API calls and potential data breaches across your asynchronous workflows. This centralizes and simplifies security management for all your APIs.
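Two of the points above, keeping secrets out of code and validating input before it is forwarded, can be sketched as follows. The environment variable name `API_A_TOKEN`, the bearer-token scheme, and the validation rules are assumptions for illustration; real rules depend on the APIs being called.

```python
import os

def auth_headers(env=os.environ):
    """Build request headers from a secret supplied by the environment, not the code."""
    token = env.get("API_A_TOKEN")  # hypothetical variable name
    if not token:
        raise RuntimeError("API_A_TOKEN is not set")
    return {"Authorization": f"Bearer {token}"}

def validate_signup(payload):
    """Return the names of invalid fields; an empty list means the payload is acceptable."""
    errors = []
    email = payload.get("email")
    if not isinstance(email, str) or "@" not in email or len(email) > 254:
        errors.append("email")
    name = payload.get("name")
    if not isinstance(name, str) or not name.strip() or len(name) > 100:
        errors.append("name")
    return errors

# A plain dict stands in for the process environment in this demo.
headers = auth_headers({"API_A_TOKEN": "example-token"})
good = validate_signup({"email": "ada@example.com", "name": "Ada"})
bad = validate_signup({"email": "not-an-email", "name": ""})
```

Validating once, before the fan-out, means neither downstream API receives a payload the other would have rejected.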

E. Scalability and Performance Tuning

While asynchronous systems are inherently more scalable, proper tuning and design decisions are still critical to maximize performance and handle increasing load.

- Horizontal Scaling of Consumers: Design your consumers to be stateless and independently scalable. When load increases, simply add more instances of your consumer application to process messages in parallel from the queue.

- Optimizing Message Payloads: Keep message sizes as small as possible, containing only the essential information needed for the consuming service. Large messages consume more network bandwidth, storage, and processing time.

- Batching Requests (where appropriate): If an external API supports batch operations, and your asynchronous workflow accumulates several similar requests, it might be more efficient to process them in batches rather than individual calls. This reduces API overhead and network chatter.

- Concurrency Limits: While parallelizing is good, be mindful of the concurrency limits of the external APIs you are calling. Overwhelming an API can lead to rate limiting, errors, or even service outages. Implement client-side rate limiting or use circuit breakers to manage the outgoing call rate.

- Efficient Language Constructs: Use appropriate asynchronous programming constructs in your chosen language (e.g., `async/await`, Promises, Goroutines, threads) to efficiently manage concurrency within your consumers or orchestrator functions.
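A simple way to respect an external API's concurrency limit from the client side is a semaphore around each call. In this sketch the limit of three in-flight calls and the simulated `call_api` coroutine are arbitrary assumptions; the `state` dictionary exists only to show that the cap holds.

```python
import asyncio

MAX_IN_FLIGHT = 3  # assumed limit for the external API
state = {"in_flight": 0, "peak": 0}

async def call_api(item, limiter):
    async with limiter:  # waits here while MAX_IN_FLIGHT calls are already running
        state["in_flight"] += 1
        state["peak"] = max(state["peak"], state["in_flight"])
        await asyncio.sleep(0.01)  # simulated request
        state["in_flight"] -= 1
        return item * 2

async def main():
    limiter = asyncio.Semaphore(MAX_IN_FLIGHT)
    # All ten calls are scheduled at once, but only three run at any moment.
    return await asyncio.gather(*(call_api(i, limiter) for i in range(10)))

results = asyncio.run(main())
```

`asyncio.gather` preserves input order, so `results` lines up with the submitted items even though the calls complete in waves.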

By diligently addressing these practical considerations, developers can build asynchronous systems that are not only performant and scalable but also resilient, secure, and manageable in the long term, effectively sending information to two or more APIs with confidence.

Choosing the Right Asynchronous Strategy: A Comparative Table

Selecting the optimal asynchronous strategy for sending information to multiple APIs involves weighing various factors such as complexity, control, cost, and specific use cases. The table below provides a comparative overview of the primary architectural patterns discussed.

| Feature / Strategy | Message Queues (e.g., RabbitMQ, SQS, Kafka) | Serverless Orchestration (e.g., AWS Step Functions, Azure Logic Apps) | API Gateway Fan-out (e.g., APIPark, AWS API Gateway) | Task Queues (e.g., Celery, Sidekiq) |
|---|---|---|---|---|
| Complexity | Moderate (manage infra, consumers, message contracts) | Low-Moderate (visual workflow designer, FaaS functions) | Low (configuration-based, routing rules) | Moderate (manage queue infra, workers, job definitions) |
| Control & Flexibility | High (full control over consumer logic, retry, scaling) | High (explicit state machine, sequential/parallel steps, branching) | Moderate (pre-defined rules, limited custom logic within gateway) | High (full control over worker logic, job retries) |
| Cost Model | Per instance/message (self-hosted) or per message/invocation (managed service) | Per state transition/invocation | Per request/data transfer | Per instance/job (self-hosted) or managed service |
| Primary Use Case | Decoupling, load leveling, reliable delivery, event streaming, background processing | Complex multi-step business workflows, long-running processes, managing state | Exposing APIs, traffic management, authentication, simple request fan-out | Offloading CPU-bound or time-consuming background jobs, scheduling |
| Error Handling | DLQs, manual retries, consumer logic, visibility timeouts | Built-in retries, error paths, compensation logic, state rollback | Basic retries (gateway level), backend specific error responses | Built-in retries, DLQs, monitoring dashboards |
| Latency Impact | Introduces queue latency (milliseconds to seconds) | Generally low for steps, depends on step duration and service limits | Minimal (adds gateway overhead, typically < 50ms) | Introduces queue latency (seconds to minutes for background jobs) |
| Data Consistency | Eventual consistency (high probability) | Can enforce stronger consistency within a workflow, but often eventual | Immediate feedback to client (202 Accepted) with backend eventual | Eventual consistency |
| Ideal Scenarios | High-volume event processing, microservices communication, pub/sub | Business process automation, data transformation pipelines, user onboarding flows | API exposition, rate limiting, authentication, simple parallel calls to known backends | Report generation, image processing, sending notifications, data cleanup |
| APIPark Relevance | Can serve as the entry point before placing messages into queues, or orchestrate consumers. | Can integrate with serverless functions and act as the triggering gateway. | Directly provides this fan-out capability as an API gateway, especially for AI/REST services. | Can be the triggering mechanism for enqueuing tasks. |

This table provides a high-level overview. In practice, multiple strategies are often combined. For example, an API gateway might receive a request, put it on a message queue, and then a serverless function might consume that message to orchestrate calls to two other APIs using its internal async capabilities. The best approach will always depend on the specific architectural requirements and constraints of your project.

Future Trends in Asynchronous API Communication

The landscape of API communication is constantly evolving, driven by the demand for ever-more responsive, resilient, and intelligent applications. Asynchronous patterns, already central to modern architectures, are poised to become even more sophisticated and ubiquitous.

1. Event-Driven APIs (AsyncAPI Specification)

While REST APIs primarily focus on request-response interaction, the future increasingly points towards event-driven APIs. REST is great for fetching data, but less so for real-time notifications or streams of updates.

- Concept: Instead of polling an API endpoint for changes, clients subscribe to streams of events. When something significant happens, an event is pushed to the subscribers. This enables true real-time reactive applications.

- AsyncAPI: Just as OpenAPI (Swagger) defines synchronous REST APIs, the AsyncAPI specification is emerging as the standard for defining event-driven APIs over various protocols like Kafka, RabbitMQ, WebSockets, and MQTT. It allows developers to formally describe the messages, channels, and operations involved in asynchronous communication.

- Impact on Two APIs: Instead of explicitly calling two APIs, your system might emit a single business event. Multiple services (or even external partners) could then subscribe to this event and react independently, each calling their respective APIs or performing other actions. This pushes decoupling to an even higher level, enabling more complex, real-time reactive systems.
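The "emit one business event, let subscribers react" idea can be sketched with a tiny in-process event bus. This is only an illustration of the shape of the pattern: the `subscribe` and `publish` helpers, the `user.signed_up` event name, and the two subscriber services are hypothetical, and a real system would publish to a broker such as Kafka or RabbitMQ instead.

```python
import asyncio
from collections import defaultdict

subscribers = defaultdict(list)  # event type -> list of async handlers

def subscribe(event_type, handler):
    subscribers[event_type].append(handler)

async def publish(event_type, payload):
    """Deliver the event to every subscriber concurrently; the publisher knows none of them."""
    handlers = subscribers[event_type]
    return await asyncio.gather(*(h(payload) for h in handlers), return_exceptions=True)

delivered = []

async def email_service(event):
    await asyncio.sleep(0.01)  # simulated call to an email marketing API
    delivered.append(("email-api", event["user_id"]))

async def analytics_service(event):
    await asyncio.sleep(0.01)  # simulated call to an analytics API
    delivered.append(("analytics-api", event["user_id"]))

subscribe("user.signed_up", email_service)
subscribe("user.signed_up", analytics_service)
asyncio.run(publish("user.signed_up", {"user_id": 42}))
```

Adding a third downstream API becomes one more `subscribe` call; the code that publishes the event does not change.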

2. Serverless-Native Asynchronous Patterns

Cloud providers are continually enhancing their serverless offerings, making them even more powerful for asynchronous workflows.

- Managed Workflows: Services like AWS Step Functions, Azure Logic Apps, and Google Cloud Workflows are becoming more feature-rich, providing visual designers, robust error handling, and direct integrations with a wider array of services. They are evolving into comprehensive platforms for orchestrating complex business processes asynchronously, abstracting away much of the underlying infrastructure complexity.

- Event Filtering and Routing: Enhanced capabilities in event buses (like Amazon EventBridge) allow for more granular filtering and routing of events to specific serverless functions or other targets. This means even more precise control over which events trigger which API calls, reducing unnecessary processing.

- Long-Running Operations: Serverless platforms are extending their capabilities to handle long-running processes (e.g., Azure Durable Functions, longer maximum execution times for AWS Lambda). This reduces the need for external state management when orchestrating multi-step asynchronous API interactions.

3. AI-Driven API Orchestration and Management

The rise of Artificial Intelligence and Machine Learning is profoundly impacting how APIs are designed, consumed, and managed.

- Intelligent Routing and Load Balancing: AI algorithms can analyze real-time traffic patterns, API performance metrics, and historical data to intelligently route requests to the most optimal backend services, improving efficiency and reducing latency in asynchronous calls. An API gateway like APIPark, with its focus on AI model integration, is well-positioned to leverage such capabilities.

- Automated Error Detection and Recovery: AI can be used to predict potential API failures, detect anomalies in API call patterns, and even suggest or automatically trigger recovery actions, making asynchronous systems more robust.

- Generative AI for API Integration: Large Language Models (LLMs) are already assisting developers in generating API integration code and even designing API specifications. In the future, AI could actively help in orchestrating calls to multiple APIs, automatically generating the necessary asynchronous patterns based on high-level business requirements. For instance, an AI could analyze a request, determine which two (or more) APIs need to be called, and construct the appropriate asynchronous workflow using available tools. APIPark's capability to encapsulate prompts into REST APIs suggests a future where AI itself can become an API provider that is seamlessly managed by the gateway, making its orchestration capabilities even more critical.

- Semantic API Understanding: AI could eventually understand the semantics of different APIs, allowing for more intelligent composition and orchestration of services even if they weren't explicitly designed to work together. This would simplify the process of sending data to disparate APIs dramatically.

4. GraphQL Subscriptions and Live Queries

While GraphQL is often associated with synchronous request-response, its "subscriptions" feature is inherently asynchronous, allowing clients to receive real-time updates when data changes. "Live queries" extend this concept, enabling client-side frameworks to automatically re-fetch data when relevant changes occur on the server. This provides a more efficient and reactive way for clients to consume dynamically changing data from potentially multiple backend sources.

These trends highlight a clear trajectory towards more autonomous, intelligent, and real-time asynchronous communication. As systems grow in scale and complexity, the ability to effectively send information to multiple APIs without blocking the main application flow will not just be a best practice, but a foundational requirement for all modern software.

Conclusion

The journey through the intricate world of asynchronously sending information to two (or more) APIs reveals a landscape of architectural possibilities, each designed to address the challenges of building scalable, resilient, and responsive distributed systems. We began by sharply contrasting synchronous and asynchronous communication, clearly demonstrating how the latter liberates applications from the performance bottlenecks and fragility inherent in sequential, blocking operations. The imperative to embrace asynchrony becomes undeniably clear when faced with scenarios like data synchronization across disparate platforms, the orchestration of complex event-driven workflows, the augmentation of data from multiple sources, and the fundamental pursuit of enhanced user experience and system fault tolerance.

Our exploration delved into core asynchronous design principles: the profound importance of decoupling to foster independent service evolution, the efficiency gains of non-blocking operations, the reactive power of event-driven architectures, the necessity of fault tolerance to withstand inevitable failures, and the inherent scalability that underpins modern cloud-native applications. These principles serve as guiding stars for architects and developers navigating the complexities of distributed systems.

We then dissected various architectural patterns, from the robust and highly decoupled message queues (like RabbitMQ or Kafka) that buffer and reliably deliver messages, to the structured and state-managed serverless orchestration services (like AWS Step Functions) that enable complex, multi-step business processes. Task queues offer a specialized approach for offloading heavy background jobs, while serverless functions provide unparalleled scalability for event-driven computations.

Crucially, we examined the pivotal role of an API gateway in this ecosystem. An API gateway is not merely a traffic manager; it can act as an intelligent orchestrator, buffering requests, fanning out calls to multiple backend APIs, and handling essential cross-cutting concerns like security, monitoring, and rate limiting. It effectively abstracts the complexities of multiple asynchronous backend interactions from the client. In this context, platforms like APIPark emerge as powerful tools, particularly for managing a blend of traditional REST APIs and advanced AI services. Its features, such as unified API formats, prompt encapsulation, and robust lifecycle management, enable developers to construct sophisticated asynchronous workflows with greater ease, security, and efficiency.

Finally, we traversed the practical implementation considerations, emphasizing the critical need for comprehensive error handling (idempotency, exponential backoff, circuit breakers, DLQs), robust observability (distributed tracing, centralized logging, metrics, alerting), intelligent data consistency strategies (understanding eventual consistency, Sagas), stringent security measures (HTTPS, authentication, least privilege), and continuous scalability and performance tuning. Looking ahead, the rise of Event-Driven APIs (AsyncAPI), sophisticated serverless-native patterns, and AI-driven API orchestration promises an even more dynamic and capable future for asynchronous communication.

In conclusion, asynchronously sending information to two (or more) APIs is far more than a technical trick; it is a fundamental paradigm shift towards building adaptable, high-performance, and resilient software systems capable of thriving in the interconnected digital age. By meticulously applying the principles, patterns, and best practices outlined in this guide, developers and organizations can confidently construct robust architectures that meet current demands and gracefully evolve with future challenges, delivering superior experiences to users and greater stability to the business.

5 Frequently Asked Questions (FAQs)

1. What is the main benefit of sending information asynchronously to two APIs compared to synchronously?

The main benefit is improved responsiveness and resilience. Asynchronous communication allows your application to initiate calls to multiple APIs without waiting for each one to complete before starting the next. This prevents your primary application thread from blocking, significantly reducing perceived latency for users, especially if one API is slow or unavailable. It also increases fault tolerance, as a failure in one API call won't necessarily halt the entire process or affect other parallel API calls. This leads to a more fluid user experience and a more robust system architecture capable of handling failures gracefully.

2. When should I choose a Message Queue over Serverless Orchestration for asynchronous API calls?

You should generally choose a Message Queue (like Kafka or SQS) when your primary goal is high-volume event streaming, extreme decoupling between services (producers don't know who consumes, consumers don't know who produces), load leveling to protect downstream systems from traffic spikes, or durable storage of messages for reliable "at-least-once" delivery. Serverless Orchestration (like AWS Step Functions) is better suited for complex multi-step business workflows that require explicit state management, built-in retry logic across steps, visual representation of the workflow, and conditional branching, especially when these steps involve various cloud services and serverless functions, including making external API calls. The two are often combined: an API gateway might place an event on a message queue, which then triggers a serverless orchestration.

3. How does an API Gateway like APIPark help with asynchronous communication to multiple APIs?

An API gateway serves as a central entry point that can significantly enhance asynchronous communication. First, it can buffer incoming requests, immediately return a 202 Accepted status to the client, and then asynchronously forward the request to backend services or queue it for later processing. Second, it can be configured to fan out a single incoming request to multiple backend APIs in parallel, acting as an orchestrator for simple parallel calls. Finally, an API gateway handles essential cross-cutting concerns such as authentication, rate limiting, and centralized monitoring and logging, which are vital for managing complex asynchronous flows and for keeping calls to multiple APIs reliable and secure. Platforms like APIPark further simplify the integration and management of both traditional REST and AI APIs in such asynchronous patterns, providing unified formats and powerful management features.
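The accept-then-process flow described above can be sketched in a few lines of Python. This is an in-memory illustration only, not how APIPark or any specific gateway is implemented: `handle_request`, `worker`, `forward`, and the backend names are all hypothetical, and an `asyncio.Queue` stands in for a durable queue.

```python
import asyncio
import uuid


async def forward(backend: str, payload: dict) -> str:
    """Hypothetical backend call; the sleep simulates network latency."""
    await asyncio.sleep(0.05)
    return f"{backend} received {payload['event']}"


async def handle_request(payload: dict, queue: asyncio.Queue) -> dict:
    """Gateway-style handler: enqueue the work and answer immediately."""
    request_id = str(uuid.uuid4())
    await queue.put((request_id, payload))
    return {"status": 202, "request_id": request_id}


async def worker(queue: asyncio.Queue, results: dict) -> None:
    """Background worker: fan each queued request out to both backends."""
    while True:
        request_id, payload = await queue.get()
        results[request_id] = await asyncio.gather(
            forward("crm-api", payload),
            forward("analytics-api", payload),
            return_exceptions=True,
        )
        queue.task_done()


async def demo() -> tuple:
    queue: asyncio.Queue = asyncio.Queue()
    results: dict = {}
    task = asyncio.create_task(worker(queue, results))
    response = await handle_request({"event": "signup"}, queue)
    await queue.join()  # waits only so the demo can show the outcome
    task.cancel()
    return response, results[response["request_id"]]


if __name__ == "__main__":
    response, outcome = asyncio.run(demo())
    print(response["status"], outcome)
```

The client sees the 202 response as soon as the request is queued. The `request_id` gives it a handle for checking the outcome later, for example through a status endpoint or a callback.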

4. What is "eventual consistency" and why is it a trade-off in asynchronous systems?

Eventual consistency is a consistency model in which, after an update, dependent systems or data replicas might not immediately reflect the latest state. Instead, they become consistent eventually, provided that no new updates are made to the same data in the meantime. It is a trade-off: it enables high availability, improved performance, and strong decoupling (services don't need to coordinate synchronously on every update), but it introduces a temporary window in which data across your distributed system might be out of sync. Applications must be designed to accommodate this delay, perhaps by showing "processing" statuses or by using compensation mechanisms to handle cases where an update fails to propagate.

5. What are Dead Letter Queues (DLQs) and why are they important for asynchronous API calls?

Dead Letter Queues (DLQs) are specialized queues where messages are sent after they have failed to be processed successfully a certain number of times by a consumer (e.g., after exhausting all retries). They are critically important for asynchronous API calls because they prevent "poison messages" from endlessly blocking the main processing queue and consuming system resources. By isolating failed messages in a DLQ, operations teams can inspect them, diagnose the root cause of the processing failure (e.g., malformed data, external API outage, bug in consumer logic), and then decide whether to discard, correct, or reprocess the messages. This mechanism significantly improves the resilience and reliability of asynchronous workflows.
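The retry-then-park behavior can be illustrated with a small in-memory sketch. Managed queues usually provide this through configuration rather than hand-written code. The retry budget of three attempts, the `deliver` function, and the message shape here are assumptions chosen for the example.

```python
from collections import deque

MAX_ATTEMPTS = 3  # assumed retry budget before a message is dead-lettered


def deliver(message: dict) -> None:
    """Hypothetical consumer step; rejects messages it cannot process."""
    if "user_id" not in message:
        raise ValueError("malformed message: missing user_id")


def drain(main_queue: deque, dead_letters: list) -> list:
    """Process every (message, attempts) pair, parking repeated failures in the DLQ."""
    delivered = []
    while main_queue:
        message, attempts = main_queue.popleft()
        try:
            deliver(message)
            delivered.append(message)
        except Exception as exc:
            attempts += 1
            if attempts >= MAX_ATTEMPTS:
                # Retries exhausted: isolate the message with diagnostic context.
                dead_letters.append(
                    {"message": message, "error": str(exc), "attempts": attempts}
                )
            else:
                # Requeue at the back so healthy messages are not blocked.
                main_queue.append((message, attempts))
    return delivered


if __name__ == "__main__":
    queue = deque([({"user_id": 1}, 0), ({"bad": True}, 0), ({"user_id": 2}, 0)])
    dlq: list = []
    print("delivered:", drain(queue, dlq))
    print("dead-lettered:", dlq)
```

The malformed message is retried behind the healthy ones and then moved aside with its error attached, so an operator can inspect it without the main queue stalling.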
