Demystifying API Gateway Main Concepts

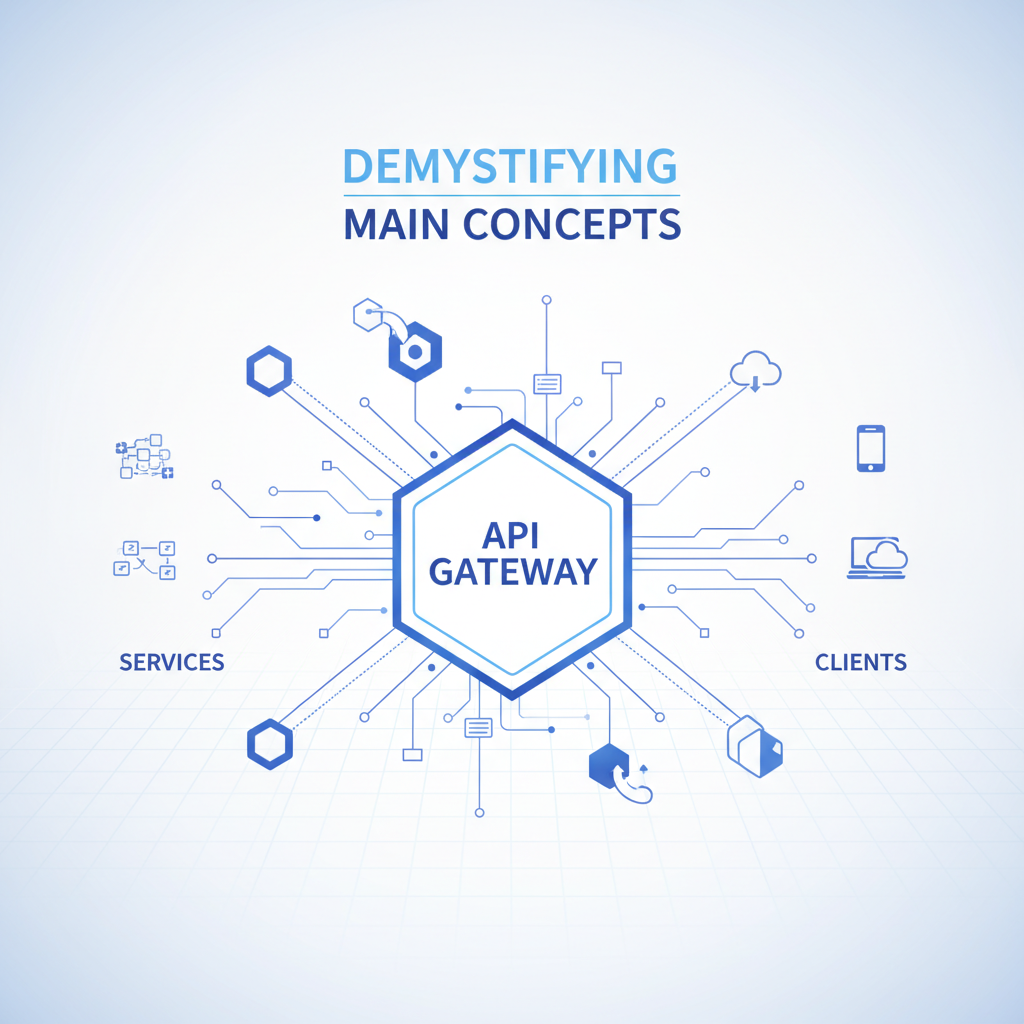

In the sprawling, interconnected landscape of modern software architecture, where microservices dance in intricate choreography and data flows like an unstoppable river, the Application Programming Interface (API) has emerged as the universal language of communication. From the smallest mobile application to the largest enterprise system, APIs form the crucial arteries through which functionality and information are exchanged. However, as the number of APIs proliferates, and the complexity of backend services grows, managing these vital connections can quickly become a daunting challenge. This is precisely where the API Gateway steps onto the stage, not merely as a component, but as the linchpin of a resilient, secure, and scalable digital infrastructure.

Imagine a bustling metropolis with countless buildings, each housing a specialized service. Without a well-organized system of roads, traffic lights, and central dispatch, chaos would reign. The API Gateway serves as this central nervous system for your digital ecosystem, a single entry point for all client requests, effectively acting as a powerful orchestrator standing between your consumers and your multitude of backend services. It is an indispensable architectural pattern that addresses the inherent complexities of distributed systems, offering a unified facade that simplifies client interactions, enhances security, bolsters performance, and provides invaluable insights into API usage.

This comprehensive exploration aims to demystify the main concepts underpinning the API Gateway. We will start with its fundamental purpose and the problems it solves, delve into its core responsibilities and the mechanisms by which it fulfills them, examine various architectural patterns and deployment strategies, and finally look toward the advanced capabilities and future trajectory of this critical component. Whether you are a seasoned architect wrestling with microservices, a developer seeking to understand the tools of the trade, or a business leader aiming to leverage APIs more effectively, this guide will illuminate the profound impact and enduring relevance of the API Gateway in today's digital world.

1. The Genesis of the API Gateway – Why We Needed It

Before the widespread adoption of the API Gateway pattern, applications often communicated directly with various backend services. In simpler, monolithic architectures, this direct interaction was manageable. A single client application would interact with a single, large backend, and the complexities were relatively contained within that one system. However, the advent of microservices, characterized by small, independent, and loosely coupled services, fundamentally altered this paradigm, introducing a new set of challenges that quickly underscored the necessity for a specialized intermediary.

The Era of Direct Service Calls and Tangled Dependencies

In the nascent stages of distributed systems, particularly when moving away from monoliths but before the API Gateway became a standard, client applications (web browsers, mobile apps, or other services) would often make direct calls to individual backend microservices. A typical scenario might involve a client needing data from a user service, an order service, and a product catalog service to render a single page or execute a complex transaction. Each call would require the client to know the specific endpoint, authentication requirements, and data format of each underlying service.

While seemingly straightforward on the surface, this direct interaction model quickly led to a tangled web of dependencies and client-side complexity. Each client became intimately aware of the internal structure and deployment details of the backend services. Any change in a service's API, its network location, or its authentication mechanism would necessitate updates across potentially numerous client applications. This tight coupling made development cycles longer, deployments riskier, and overall system evolution painfully slow. Moreover, the sheer volume of distinct network calls from a single client for a composite view could introduce significant latency, degrade user experience, and strain network resources.

The Amplified Challenges of Microservices

The move towards microservices, while offering undeniable benefits in terms of agility, scalability, and independent deployment, simultaneously magnified these existing problems and introduced new ones:

- Increased Network Overhead: A single user interface action might require interactions with dozens of granular microservices. Without an intermediary, this would translate into numerous separate HTTP requests from the client to various backend endpoints, each adding its own connection and round-trip overhead, leading to higher latency and poorer performance for the end user. Aggregating the results on the client side also becomes a complex, error-prone task.

- Security Vulnerabilities: Exposing every internal microservice directly to the internet is a major security risk. Each service would need its own authentication, authorization, and security protocols, leading to inconsistent security policies and a larger attack surface. Managing these varied security implementations across an expanding fleet of services became an operational nightmare, dramatically increasing the likelihood of oversight and potential breaches.

- Inconsistent APIs and Protocols: Different teams building microservices might use different communication styles and protocols (e.g., REST, gRPC, SOAP), data formats (e.g., JSON, XML), or versioning strategies. Clients would have to adapt to this heterogeneity, adding complexity to their codebase and making client-side development a constant uphill battle against shifting interfaces.

- Lack of Centralized Monitoring and Logging: With direct client-to-service communication, gaining a holistic view of API traffic, performance metrics, and error rates across all services became incredibly challenging. Debugging issues that spanned multiple services was like finding a needle in a distributed haystack, leading to extended mean time to resolution (MTTR) and impaired system observability.

- Difficult Rate Limiting and Throttling: Preventing abuse, ensuring fair usage, and protecting individual services from being overwhelmed by traffic spikes became nearly impossible without a central choke point. Implementing rate limits at each service level was redundant, difficult to coordinate, and prone to inconsistencies, failing to protect the system holistically.

- Version Management Headaches: As services evolved, managing different API versions and ensuring backward compatibility for various client applications became a significant logistical challenge. Clients might depend on older versions while services updated, leading to complex routing and potentially breaking changes.

- Lack of Service Discovery and Resilience: Clients would need to know the exact network locations of services. In dynamic environments where services scale up and down, or fail and restart, managing these endpoints directly from the client becomes impractical. Moreover, implementing resilience patterns like circuit breakers or retries at the client level for every service was an overwhelming burden.

These challenges collectively highlighted a critical architectural gap. There was a desperate need for a dedicated component that could abstract away the complexities of the microservices backend, provide a single, consistent interface to clients, and implement cross-cutting concerns uniformly. This pressing need led to the conceptualization and widespread adoption of the API Gateway. It emerged as a pragmatic solution to bring order, security, and efficiency to the chaotic beauty of distributed systems, offering a unified facade and a powerful control plane that empowers developers and enhances the overall stability and performance of modern applications.

2. Core Responsibilities of an API Gateway

The API Gateway is far more than just a simple proxy; it is a sophisticated traffic manager, a vigilant security guard, and an insightful observer, all rolled into one. Its multifaceted role enables it to centralize various cross-cutting concerns that would otherwise clutter individual microservices or burden client applications. Understanding these core responsibilities is key to appreciating the immense value an API Gateway brings to a modern distributed architecture.

2.1 Routing & Load Balancing

At its fundamental level, an API Gateway acts as the initial point of contact for all client requests. Its primary function, therefore, is to intelligently direct incoming requests to the appropriate backend service. This process, known as routing, involves examining various aspects of the incoming request, such as the URL path, HTTP method, headers, or query parameters, to determine which downstream service is intended to handle it. For instance, a request to /users/{id} might be routed to a "User Service," while a request to /products/{id} goes to a "Product Catalog Service." This abstraction allows clients to interact with a single, uniform endpoint provided by the gateway rather than needing to know the specific network addresses of individual services.
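To make the routing step concrete, here is a minimal Python sketch of prefix-and-method routing. The route table, service names, and the "longest prefix wins" rule are illustrative assumptions; real gateways express the same idea in their own configuration formats.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Route:
    prefix: str          # path prefix to match, e.g. "/users"
    methods: frozenset   # HTTP methods this route accepts
    service: str         # hypothetical upstream service name


# Hypothetical route table for illustration only.
ROUTES = [
    Route("/users", frozenset({"GET", "POST", "PUT", "DELETE"}), "user-service"),
    Route("/products", frozenset({"GET"}), "product-catalog-service"),
    Route("/products/admin", frozenset({"GET", "POST"}), "catalog-admin-service"),
]


def route(method: str, path: str) -> Optional[str]:
    """Return the upstream service for a request, or None if nothing matches."""
    # Check longer prefixes first so specific routes win over general ones.
    for r in sorted(ROUTES, key=lambda r: len(r.prefix), reverse=True):
        # Match whole path segments: "/users" or "/users/...", not "/usersettings".
        if path == r.prefix or path.startswith(r.prefix + "/"):
            if method.upper() in r.methods:
                return r.service
    return None
```

For example, `route("GET", "/users/42")` resolves to `user-service`, while `route("POST", "/products/7")` finds no matching route and returns `None`, which a gateway would typically turn into a 404 or 405 response.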

Beyond simple routing, an API Gateway often incorporates sophisticated load balancing capabilities. In environments where multiple instances of a backend service are running (e.g., for scalability or fault tolerance), the gateway intelligently distributes incoming requests across these available instances. This ensures that no single service instance becomes overloaded, thereby maintaining optimal performance and preventing bottlenecks. Common load balancing algorithms include:

- Round Robin: Requests are distributed sequentially to each server in the pool. It is simple and works well when requests have similar cost and servers have similar capacity.

- Least Connections: The request is sent to the server with the fewest active connections, aiming to balance the current workload.

- IP Hash: The client's IP address is hashed to select a server, so a particular client consistently reaches the same server as long as the pool does not change. This is useful for maintaining session affinity, although clients behind a shared NAT or proxy will all land on the same server.

- Weighted Round Robin/Least Connections: Servers are assigned weights based on their capacity, directing more traffic to more powerful instances.
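The four algorithms above can be sketched in a few lines of Python each. These are in-process illustrations with hypothetical instance names, not any particular gateway's implementation; the weighted variant shown is a "smooth" weighted round robin that interleaves picks rather than sending long runs to the heaviest server, and weighted least connections is left out for brevity.

```python
import hashlib
import itertools
from typing import Dict, List


class RoundRobin:
    """Cycle through instances in a fixed order."""
    def __init__(self, instances: List[str]):
        self._cycle = itertools.cycle(instances)

    def pick(self) -> str:
        return next(self._cycle)


class LeastConnections:
    """Pick the instance with the fewest in-flight requests."""
    def __init__(self, instances: List[str]):
        self.active: Dict[str, int] = {i: 0 for i in instances}

    def acquire(self) -> str:
        instance = min(self.active, key=self.active.get)
        self.active[instance] += 1
        return instance

    def release(self, instance: str) -> None:
        # Call when the proxied request finishes.
        self.active[instance] -= 1


def ip_hash(client_ip: str, instances: List[str]) -> str:
    """Map a client IP to a stable instance while the pool is unchanged."""
    digest = hashlib.sha256(client_ip.encode()).digest()
    return instances[int.from_bytes(digest[:8], "big") % len(instances)]


class WeightedRoundRobin:
    """Smooth weighted round robin: heavier instances are picked more often."""
    def __init__(self, weights: Dict[str, int]):
        self.weights = dict(weights)
        self.current = {i: 0 for i in weights}

    def pick(self) -> str:
        total = sum(self.weights.values())
        # Every instance accumulates its weight; the leader is chosen and
        # pays back the total, which spreads its picks across the cycle.
        for i, w in self.weights.items():
            self.current[i] += w
        best = max(self.current, key=self.current.get)
        self.current[best] -= total
        return best
```

With weights `{"a": 5, "b": 1, "c": 1}`, seven consecutive picks return `a, a, b, a, c, a, a`: the 5:1:1 ratio is preserved, but `b` and `c` are served within the cycle rather than after five `a` requests in a row.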

Effective load balancing also relies on health checks. The API Gateway continuously monitors the health and availability of backend service instances. If a service instance becomes unhealthy or unresponsive, the gateway will temporarily remove it from the load balancing pool, preventing requests from being sent to a failing service. Once the instance recovers, it is automatically re-added. This dynamic adjustment is critical for ensuring high availability and resilience in a microservices ecosystem. By centralizing routing and load balancing, the API Gateway significantly enhances the scalability, reliability, and fault tolerance of the entire system, abstracting away the complexities of service discovery and instance management from client applications.
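A minimal sketch of how health checks can gate the load-balancing pool, assuming a caller-supplied `probe` function (hypothetical here) that returns True when an instance answers its health endpoint. The thresholds of three failures to eject and two successes to re-admit are illustrative; they keep a single dropped probe from flapping an instance in and out of rotation.

```python
from typing import Callable, Dict, List


class HealthCheckedPool:
    """Track instance health and expose only eligible instances."""

    def __init__(self, instances: List[str], probe: Callable[[str], bool],
                 unhealthy_after: int = 3, healthy_after: int = 2):
        self.instances = instances
        self.probe = probe                      # hypothetical health probe
        self.unhealthy_after = unhealthy_after  # consecutive failures to eject
        self.healthy_after = healthy_after      # consecutive passes to re-admit
        self.healthy: Dict[str, bool] = {i: True for i in instances}
        self._fails: Dict[str, int] = {i: 0 for i in instances}
        self._passes: Dict[str, int] = {i: 0 for i in instances}

    def run_checks(self) -> None:
        """Probe every instance once; a real gateway runs this on a timer."""
        for inst in self.instances:
            if self.probe(inst):
                self._fails[inst] = 0
                self._passes[inst] += 1
                if self._passes[inst] >= self.healthy_after:
                    self.healthy[inst] = True
            else:
                self._passes[inst] = 0
                self._fails[inst] += 1
                if self._fails[inst] >= self.unhealthy_after:
                    self.healthy[inst] = False

    def eligible(self) -> List[str]:
        """Instances the load balancer may currently send traffic to."""
        return [i for i in self.instances if self.healthy[i]]
```

A load-balancing algorithm would then choose only among `eligible()` instances, so a failing backend drops out after a few bad probes and returns automatically once it recovers.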

2.2 Authentication & Authorization

Security is paramount in any networked system, and the API Gateway serves as the primary enforcement point for securing access to backend services. It centralizes the authentication and authorization processes, preventing malicious or unauthorized requests from ever reaching the internal microservices. This consolidates security logic, reduces the attack surface, and ensures consistent security policies across all exposed APIs.

Authentication is the process of verifying the identity of a client (who are you?). The gateway can support various authentication mechanisms:

- API Keys: Simple tokens often embedded in request headers or query parameters, used to identify the calling application. While less secure for user authentication, they are common for machine-to-machine communication or identifying developer applications.

- OAuth 2.0: A robust authorization framework that allows third-party applications to obtain limited access to an HTTP service, either on behalf of a resource owner by orchestrating an approval interaction between the resource owner and the HTTP service, or by allowing the third-party application to obtain access on its own behalf. The gateway can act as a resource server, validating tokens issued by an identity provider.

- JSON Web Tokens (JWT): Self-contained, digitally signed tokens that contain claims about the authenticated user or client. The gateway can validate these tokens cryptographically without needing to query an identity provider for every request, improving performance.

- Mutual TLS (mTLS): Provides two-way authentication, where both the client and the server verify each other's digital certificates, establishing a highly secure communication channel.
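The JWT point above, that the gateway can validate tokens without calling the identity provider, can be illustrated with a standard-library-only sketch. It assumes HS256 with a shared secret for simplicity; production gateways more commonly verify asymmetric signatures (e.g., RS256) with keys published by the identity provider, check issuer and audience claims, and rely on a vetted JWT library rather than hand-rolled parsing.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Any, Dict, Optional


class InvalidToken(Exception):
    """Raised when a token fails any check; the gateway would answer 401."""


def _b64url_decode(segment: str) -> bytes:
    # JWT segments are unpadded base64url; restore padding before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def _b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def verify_hs256(token: str, secret: bytes, now: Optional[float] = None) -> Dict[str, Any]:
    """Check signature and expiry locally, with no call to the identity provider."""
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        claims = json.loads(_b64url_decode(payload_b64))
        signature = _b64url_decode(sig_b64)
    except ValueError as exc:
        raise InvalidToken("malformed token") from exc
    # Pin the expected algorithm instead of trusting the token's own header.
    if not isinstance(header, dict) or header.get("alg") != "HS256":
        raise InvalidToken("unexpected algorithm")
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256).digest()
    if not hmac.compare_digest(expected, signature):  # constant-time comparison
        raise InvalidToken("bad signature")
    if not isinstance(claims, dict):
        raise InvalidToken("claims must be a JSON object")
    current = time.time() if now is None else now
    if "exp" in claims and current >= claims["exp"]:
        raise InvalidToken("token expired")
    return claims


def sign_hs256(claims: Dict[str, Any], secret: bytes) -> str:
    """Test helper that issues a token; in practice the identity provider does this."""
    header_b64 = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload_b64 = _b64url_encode(json.dumps(claims).encode())
    sig = hmac.new(secret, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256).digest()
    return f"{header_b64}.{payload_b64}.{_b64url_encode(sig)}"
```

A token signed with a different secret, an expired `exp` claim, or a string that is not three dot-separated segments all raise `InvalidToken`, which maps naturally onto an HTTP 401 response.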

Once a client's identity has been established (authentication), the API Gateway then performs authorization (what are you allowed to do?). This involves checking if the authenticated client has the necessary permissions to access the requested resource or perform the desired operation. Authorization policies can be defined at various granularities:

- Role-Based Access Control (RBAC): Users are assigned roles (e.g., "admin," "user," "guest"), and permissions are associated with these roles.

- Attribute-Based Access Control (ABAC): Access decisions are made based on the attributes of the user, resource, and environment.

The API Gateway acts as a Policy Enforcement Point (PEP), intercepting requests, extracting credentials (e.g., JWTs), validating them against configured identity providers or internal policy stores, and then either allowing the request to proceed to the backend service or rejecting it with an appropriate error (e.g., HTTP 401 Unauthorized or 403 Forbidden). This centralized approach significantly simplifies security management, ensures uniformity, and strengthens the overall security posture of the API landscape, preventing unauthorized access to sensitive data and functionality. Furthermore, it offloads complex security logic from individual microservices, allowing them to focus purely on their business domain.
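The Policy Enforcement Point flow described above (authenticate, then authorize, then forward) can be sketched with a small RBAC table. The policy entries and role names are hypothetical, and `authenticate` is any caller-supplied function that turns a bearer token into claims or returns None. The key detail is the split between 401 (identity not established) and 403 (identity known but not permitted), with deny-by-default when no rule matches.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Set, Tuple

# Hypothetical RBAC policy: (method, path prefix) -> roles allowed.
POLICY: Dict[Tuple[str, str], Set[str]] = {
    ("GET", "/products"): {"guest", "user", "admin"},
    ("POST", "/orders"): {"user", "admin"},
    ("DELETE", "/users"): {"admin"},
}


@dataclass
class Decision:
    status: int   # HTTP status the gateway would return (200 = forward)
    reason: str


def enforce(method: str, path: str, authorization: Optional[str],
            authenticate: Callable[[str], Optional[Dict]]) -> Decision:
    """Authenticate, then authorize, then allow the request to proceed."""
    # 1. Authentication: missing or invalid credentials -> 401.
    if not authorization or not authorization.startswith("Bearer "):
        return Decision(401, "missing bearer token")
    claims = authenticate(authorization[len("Bearer "):])
    if claims is None:
        return Decision(401, "invalid token")
    # 2. Authorization: valid identity but no permitted role -> 403.
    roles = set(claims.get("roles", []))
    for (m, prefix), allowed in POLICY.items():
        if method == m and (path == prefix or path.startswith(prefix + "/")):
            if roles & allowed:
                return Decision(200, "forward to backend")
            return Decision(403, "role not permitted")
    # Deny by default when no rule covers the request.
    return Decision(403, "no matching policy")
```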

2.3 Rate Limiting & Throttling

To ensure fair usage, prevent abuse, and protect backend services from being overwhelmed by traffic spikes, the API Gateway implements robust rate limiting and throttling mechanisms. Without these controls, a sudden surge in requests from a single client, a denial-of-service (DoS) attack, or even legitimate but excessive usage could cripple individual services, leading to degraded performance or complete outages for all users.

Rate Limiting focuses on setting a hard cap on the number of requests a client can make within a specified time window. For instance, a client might be limited to 100 requests per minute. If this limit is exceeded, subsequent requests from that client are immediately rejected with an HTTP 429 Too Many Requests status code, often accompanied by a Retry-After header indicating when the client can safely retry. The algorithms commonly used for rate limiting include:

- Token Bucket: Imagine a bucket with a fixed capacity that tokens are added to at a constant rate. Each request consumes one token. If the bucket is empty, the request is rejected. This allows for small bursts of requests (up to the bucket capacity) but maintains an average rate.

- Leaky Bucket: This is similar to a bucket with a hole at the bottom. Requests are placed into the bucket, and they "leak out" (are processed) at a constant rate. If the bucket overflows, new requests are dropped. This smooths out bursts of traffic into a steady stream.

- Fixed Window Counter: A simple approach where requests are counted within a fixed time window (e.g., 60 seconds). If the count exceeds the limit, further requests are blocked until the next window begins. A drawback is that a burst at the end of one window followed by another burst at the start of the next can let through up to twice the limit within a short span.

- Sliding Window Log/Counter: More sophisticated methods that address the edge cases of fixed window counters by using timestamps of requests or combining multiple fixed windows to provide a more accurate and fair rate limit.
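Two of these algorithms are sketched below in Python: a token bucket and a sliding window log, both keyed per client (for example, by API key). The clock is injectable so the behavior can be tested deterministically. State is kept in memory, which only works for a single gateway instance; clustered gateways typically keep these counters in a shared store. The sliding window also returns a retry delay that could populate the Retry-After header mentioned above.

```python
import time
from collections import defaultdict, deque
from typing import Callable, Deque, Dict, Tuple


class TokenBucket:
    """Per-key token bucket: `capacity` allows bursts, `rate` is tokens/second."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity, self.rate, self.clock = capacity, rate, clock
        self._state: Dict[str, Tuple[float, float]] = {}  # key -> (tokens, last_ts)

    def allow(self, key: str) -> bool:
        now = self.clock()
        tokens, last = self._state.get(key, (self.capacity, now))
        # Refill in proportion to elapsed time, never above capacity.
        tokens = min(self.capacity, tokens + (now - last) * self.rate)
        if tokens >= 1:
            self._state[key] = (tokens - 1, now)
            return True
        self._state[key] = (tokens, now)
        return False


class SlidingWindowLog:
    """Allow at most `limit` requests per key in any trailing `window` seconds."""

    def __init__(self, limit: int, window: float,
                 clock: Callable[[], float] = time.monotonic):
        self.limit, self.window, self.clock = limit, window, clock
        self._log: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, key: str) -> Tuple[bool, float]:
        """Return (allowed, retry_after_seconds)."""
        now = self.clock()
        log = self._log[key]
        # Drop timestamps that have slid out of the window.
        while log and log[0] <= now - self.window:
            log.popleft()
        if len(log) < self.limit:
            log.append(now)
            return True, 0.0
        # The oldest timestamp decides when the next slot frees up.
        return False, log[0] + self.window - now
```

With `TokenBucket(capacity=3, rate=1)`, a client can fire three requests back to back, the fourth is rejected, and one more is allowed after a second of refill. Because the sliding window log remembers exact timestamps, it does not suffer from the double-burst problem at window boundaries.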

Throttling, while related, is subtly different. It's about controlling the overall consumption of resources to maintain a smooth flow and prevent resource exhaustion, rather than just imposing hard limits. For example, a system might throttle all requests to a database if the database load is too high, regardless of individual client rates. Throttling can also be used to enforce commercial agreements, where different subscription tiers allow different request volumes or sustained rates.

The API Gateway centralizes these controls, allowing administrators to define rate limits based on various criteria: per API, per user, per API key, per IP address, or even dynamically based on backend service health. This fine-grained control allows businesses to:

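- Mitigate DoS and DDoS Attacks: By quickly identifying and blocking excessive requests from abusive sources. Rate limits alone cannot absorb a large distributed attack, but they blunt many common forms of abuse.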

- Ensure Fair Usage: Guaranteeing that one "noisy neighbor" client doesn't monopolize resources to the detriment of others.

- Protect Backend Services: Shielding downstream microservices from unexpected spikes in traffic, maintaining their stability and performance.

- Manage Costs: For cloud-based services, limiting requests can help control operational expenses.

- Monetize APIs: Offering tiered access levels based on request limits, providing premium access for higher-paying customers.

By intelligently managing the flow of requests, the API Gateway acts as a crucial traffic cop, ensuring the stability, fairness, and economic viability of the entire API ecosystem.

2.4 Request/Response Transformation

One of the most powerful capabilities of an API Gateway is its ability to perform real-time request and response transformations. In a diverse ecosystem of microservices, different services might expect or produce data in varying formats, or clients might require specific data structures that differ from the raw service output. The gateway acts as a flexible adapter, bridging these inconsistencies without requiring changes to the backend services or the client applications themselves.

Request Transformation involves modifying an incoming client request before forwarding it to the backend service. This can include:

- Header Manipulation: Adding, removing, or modifying HTTP headers. For instance, injecting an X-Client-ID header based on authentication information or stripping sensitive headers before sending to the backend.

- Query Parameter Modification: Adding default query parameters, renaming existing ones, or removing unnecessary parameters.

- Body Transformation: Converting the request body from one format to another (e.g., XML to JSON, or a simplified JSON structure from a mobile client to a more verbose internal JSON structure). This is particularly useful when integrating with legacy systems that might only understand older data formats or when optimizing data payloads for specific client types.

- Content Enrichment: Adding additional data to the request, such as user role information derived from an authentication token, before sending it to the backend service.
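The four request transformations above can be combined into one function. This Python sketch assumes JSON bodies and hypothetical policy values (which headers to strip, which fields to rename, a default `limit` parameter, a `client_id` claim); an XML-to-JSON conversion would slot into the body step in the same way.

```python
import json
from typing import Dict, Tuple
from urllib.parse import parse_qsl, urlencode

# Hypothetical policy values for illustration.
STRIP_HEADERS = {"cookie", "x-debug"}                 # never forwarded upstream
FIELD_RENAMES = {"uid": "userId", "qty": "quantity"}  # client names -> internal names


def transform_request(headers: Dict[str, str], query: str, body: bytes,
                      claims: Dict) -> Tuple[Dict[str, str], str, bytes]:
    """Apply header, query, body, and enrichment transforms before forwarding."""
    # Header manipulation: drop sensitive headers, inject identity from the token.
    out_headers = {k: v for k, v in headers.items() if k.lower() not in STRIP_HEADERS}
    out_headers["X-Client-ID"] = str(claims["client_id"])

    # Query parameter modification: add a default page size, drop a tracking param.
    params = dict(parse_qsl(query))
    params.setdefault("limit", "20")
    params.pop("utm_source", None)
    out_query = urlencode(params)

    # Body transformation: rename compact client fields to internal names.
    payload = json.loads(body) if body else {}
    payload = {FIELD_RENAMES.get(k, k): v for k, v in payload.items()}
    # Content enrichment: attach role information derived from the token.
    payload["callerRoles"] = claims.get("roles", [])
    return out_headers, out_query, json.dumps(payload).encode()
```

For instance, a mobile request with body `{"uid": 7, "qty": 2}` and query `utm_source=ad&page=2` would reach the backend as `{"userId": 7, "quantity": 2, "callerRoles": [...]}` with query `page=2&limit=20`, and without its Cookie header.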

Response Transformation involves modifying the response from a backend service before sending it back to the client. This is equally crucial for adapting service outputs to client expectations:

- Header Manipulation: Adding security headers, removing internal headers that expose implementation details, or modifying cache control headers.

- Body Transformation: Converting a backend service's response (e.g., a detailed internal JSON structure) into a format suitable for a specific client (e.g., a simplified JSON for a mobile app, or a different XML structure for a legacy client). This allows services to maintain a consistent internal API while catering to diverse external consumers.

- Data Aggregation/Composition: In more advanced scenarios, the gateway can make multiple calls to different backend services, combine their responses, and present a single, unified response to the client. This is closely related to the "Backend for Frontend" (BFF) pattern applied at the gateway level, and it reduces client-side complexity and the number of network requests the client has to make. For example, a client requesting user details might receive a response that aggregates data from a "User Profile Service," an "Order History Service," and a "Payment Service" into a single, cohesive payload.
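A minimal sketch of the aggregation example above, using Python's `asyncio` to call the three services concurrently. The fetcher functions are hypothetical stand-ins for HTTP calls, and the choice to treat the profile as mandatory while letting orders and payments degrade to `None` is an illustrative design decision, not a rule.

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict

Fetcher = Callable[[str], Awaitable[Dict[str, Any]]]


async def aggregate_user_view(user_id: str, profile: Fetcher, orders: Fetcher,
                              payments: Fetcher, timeout: float = 2.0) -> Dict[str, Any]:
    """Call three backends concurrently and compose one response."""
    results = await asyncio.gather(
        asyncio.wait_for(profile(user_id), timeout),
        asyncio.wait_for(orders(user_id), timeout),
        asyncio.wait_for(payments(user_id), timeout),
        return_exceptions=True,  # collect failures instead of aborting the batch
    )
    prof, ords, pays = results
    # The profile is mandatory: without it there is nothing useful to return.
    if isinstance(prof, BaseException):
        raise prof
    # Optional sections degrade to None when their backend fails or times out.
    return {
        "user": prof,
        "orders": None if isinstance(ords, BaseException) else ords,
        "payments": None if isinstance(pays, BaseException) else pays,
    }
```

The client makes one request and receives one payload; the total latency is roughly that of the slowest backend (bounded by `timeout`) rather than the sum of all three.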

The ability to perform these transformations significantly enhances the flexibility and maintainability of the entire system. It allows backend service developers to focus on their domain logic without worrying about the diverse needs of various clients. Similarly, client developers can interact with a simplified, consistent API, regardless of the underlying backend complexities. This decoupling is a cornerstone of agile development and promotes independent evolution of both client and service layers.

2.5 Caching

Improving performance and reducing the load on backend services are critical concerns for any scalable application. Caching is a highly effective strategy to achieve these goals, and the API Gateway is an ideal location to implement it. By storing frequently accessed responses, the gateway can serve subsequent identical requests directly from its cache, bypassing the need to invoke the backend service entirely. This significantly reduces latency for clients and decreases the processing load on downstream systems.

The caching mechanism at the API Gateway typically works as follows:

- When an incoming request arrives, the gateway first checks its cache to see if a valid response for that specific request (identified by factors like URL, headers, and query parameters) already exists.

- If a cached response is found and is still considered fresh (i.e., not expired or invalidated), the gateway serves this response directly to the client, resulting in a "cache hit." Because no backend call is made, this is typically much faster than a full round trip to the service and its data stores.

- If no valid cached response is found (a "cache miss"), the gateway forwards the request to the appropriate backend service.

- Once the backend service returns a response, the gateway can store this response in its cache for a specified duration, along with relevant metadata like its expiration time, before forwarding it to the client.
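The steps above correspond to a read-through cache with a time-to-live. Here is a minimal in-memory Python sketch; the `(status, body)` response shape and the rule of caching only 200 responses are simplifying assumptions, and the clock is injectable for testing.

```python
import time
from typing import Callable, Dict, Tuple

Response = Tuple[int, bytes]  # (status, body), kept minimal for the sketch


class ResponseCache:
    """Read-through TTL cache: serve fresh hits, fetch and store on misses."""

    def __init__(self, ttl: float, clock: Callable[[], float] = time.monotonic):
        self.ttl, self.clock = ttl, clock
        self._store: Dict[str, Tuple[float, Response]] = {}  # key -> (expires_at, resp)
        self.hits = self.misses = 0

    def get_or_fetch(self, key: str, fetch: Callable[[], Response]) -> Response:
        now = self.clock()
        entry = self._store.get(key)
        if entry and entry[0] > now:       # present and fresh: cache hit
            self.hits += 1
            return entry[1]
        self.misses += 1                   # missing or stale: go to the backend
        resp = fetch()
        if resp[0] == 200:                 # only cache successful responses
            self._store[key] = (now + self.ttl, resp)
        return resp
```

Two identical requests within the TTL invoke `fetch` once; after the TTL expires, the next request goes back to the backend and refreshes the entry.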

Key considerations for implementing caching in an API Gateway include:

- Cache Invalidation Strategies: Determining when a cached item is no longer valid. Common approaches include:

  - Time-To-Live (TTL): Responses are cached for a fixed period. After this time, they are considered stale and must be re-fetched from the backend.

  - Event-Driven Invalidation: Backend services can explicitly notify the gateway to invalidate specific cached entries when their underlying data changes. This ensures data freshness but requires tighter coupling between services and the gateway.

  - Cache-Control Headers: Backend services can use HTTP Cache-Control headers (e.g., max-age, no-cache) to instruct the gateway on how to cache their responses.

- Cache Scope: Caches can be local to a single gateway instance or distributed across multiple gateway instances to ensure consistency and availability in a clustered environment.

- Cache Keys: Carefully defining what constitutes a unique request for caching purposes (e.g., URL path, query parameters, specific headers).

- Sensitive Data: Caching should be carefully managed for responses containing sensitive or personalized data to avoid security risks. Often, only public, non-user-specific data is cached.
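Cache keys, Cache-Control directives, and sensitive data interact, so here is a Python sketch of both decisions. The set of headers that vary the response is a hypothetical choice, and the Cache-Control handling is a simplification of the HTTP caching rules: `no-cache` is treated as "do not store" (a full cache could store and revalidate instead), and responses to authenticated requests are skipped unless explicitly marked `public`.

```python
from typing import Dict, Optional
from urllib.parse import parse_qsl, urlencode

VARY_HEADERS = ("accept", "accept-language")  # hypothetical: headers that change the response


def cache_key(method: str, path: str, query: str, headers: Dict[str, str]) -> str:
    """Build a key where reordered query params map to the same entry."""
    norm_query = urlencode(sorted(parse_qsl(query)))
    lower = {k.lower(): v for k, v in headers.items()}
    vary = "|".join(f"{h}={lower.get(h, '')}" for h in VARY_HEADERS)
    return f"{method.upper()} {path}?{norm_query} [{vary}]"


def cacheable_ttl(cache_control: Optional[str], default_ttl: int,
                  has_auth: bool) -> Optional[int]:
    """Return a TTL in seconds, or None if the response must not be cached."""
    directives = {}
    for part in (cache_control or "").split(","):
        name, _, value = part.strip().partition("=")
        if name:
            directives[name.lower()] = value.strip('"')
    # no-store forbids caching; private marks per-user data a shared cache must skip.
    if "no-store" in directives or "private" in directives:
        return None
    # Simplification: treat authenticated responses as personalized unless public.
    if has_auth and "public" not in directives:
        return None
    if "no-cache" in directives:
        return None  # simplification: skip rather than store-and-revalidate
    # s-maxage targets shared caches such as a gateway and overrides max-age.
    for name in ("s-maxage", "max-age"):
        if name in directives and directives[name].isdigit():
            return int(directives[name])
    return default_ttl
```

With these rules, `public, s-maxage=300, max-age=60` yields a 300-second TTL even for an authenticated request, while a plain `max-age=60` on an authenticated request is not cached at all, which matches the cautious approach to personalized data described above.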

The benefits of API Gateway caching are substantial:

- Reduced Latency: Faster response times for clients, leading to improved user experience.

- Reduced Backend Load: Less strain on databases, application servers, and other downstream resources, which can lead to cost savings and higher system stability.

- Increased Throughput: The gateway can handle a higher volume of requests by serving many of them from cache.

- Improved Resilience: Even if a backend service experiences a temporary outage, the gateway might still be able to serve stale (but possibly acceptable) cached responses, offering a degree of fault tolerance.

By strategically caching API responses, the API Gateway acts as a powerful performance accelerator, optimizing resource utilization and delivering a superior experience for API consumers.

2.6 Monitoring, Logging & Analytics

To ensure the health, performance, and security of an API ecosystem, robust observability is indispensable. The API Gateway, being the single entry point for all API traffic, is uniquely positioned to centralize and aggregate vital monitoring data, logs, and analytics. This capability transforms the gateway into an invaluable source of operational intelligence, providing deep insights into how APIs are being used and how the backend services are performing.

Monitoring involves collecting real-time metrics about API traffic and gateway operations. Key metrics typically include:

- Request Volume: Total number of requests, requests per second (RPS).

- Latency: Response times for API calls, broken down by API, client, or backend service. This helps identify performance bottlenecks.

- Error Rates: Percentage of requests resulting in various HTTP error codes (e.g., 4xx, 5xx), providing early warnings of issues.

- CPU/Memory Usage: Resources consumed by the gateway itself.

- Cache Hit Ratio: Percentage of requests served from cache, indicating caching effectiveness.

These metrics are typically exported to dedicated monitoring systems (e.g., scraped by Prometheus or shipped to Datadog), where they can be visualized on dashboards and trigger alerts when thresholds are breached.
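To show how the listed metrics are derived, here is an in-memory Python sketch. It keeps raw latency samples for simplicity; a real gateway would instead update counters and histograms in a metrics library and let the monitoring system compute aggregates. Latency percentiles use the nearest-rank method, which is one of several common definitions.

```python
import math
from typing import Dict, List


class GatewayMetrics:
    """Request volume, error rate, latency percentiles, and cache hit ratio."""

    def __init__(self) -> None:
        self.requests = 0
        self.errors_by_class: Dict[str, int] = {"4xx": 0, "5xx": 0}
        self.latencies_ms: Dict[str, List[float]] = {}
        self.cache_hits = 0

    def record(self, api: str, status: int, latency_ms: float, cache_hit: bool) -> None:
        """Call once per completed request."""
        self.requests += 1
        if 400 <= status < 500:
            self.errors_by_class["4xx"] += 1
        elif status >= 500:
            self.errors_by_class["5xx"] += 1
        self.latencies_ms.setdefault(api, []).append(latency_ms)
        self.cache_hits += int(cache_hit)

    def error_rate(self) -> float:
        return sum(self.errors_by_class.values()) / self.requests if self.requests else 0.0

    def cache_hit_ratio(self) -> float:
        return self.cache_hits / self.requests if self.requests else 0.0

    def percentile(self, api: str, p: float) -> float:
        """Nearest-rank latency percentile for one API (e.g. p=95)."""
        samples = sorted(self.latencies_ms.get(api, []))
        if not samples:
            return 0.0
        rank = max(1, math.ceil(p * len(samples) / 100))
        return samples[rank - 1]
```

Alerting rules would then compare values such as `error_rate()` or the p95 latency against thresholds, for example paging when 5xx responses exceed a few percent of traffic.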

Logging involves recording detailed information about each API call that passes through the gateway. This includes:

- Request Details: Client IP, timestamp, requested URL, HTTP method, headers, request body.

- Response Details: Status code, response headers, response body (or part thereof), response time.

- Authentication/Authorization Outcomes: Whether a request was allowed or denied, and why.

- Error Messages: Detailed information about any errors encountered during processing.

These logs are crucial for troubleshooting, auditing, security analysis, and compliance. Centralized log management systems (e.g., ELK Stack, Splunk) are commonly used to aggregate, store, and allow for efficient searching and analysis of these vast volumes of log data.
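Log pipelines such as those above work best with one structured record per request. This Python sketch renders a single-line JSON access-log entry and redacts credential-bearing headers before the record leaves the gateway. The field names and the redaction list are hypothetical, and request and response bodies are deliberately left out because they often contain personal data.

```python
import json
import time
from typing import Dict, Optional

# Hypothetical list of headers whose values must never reach the log store.
REDACTED_HEADERS = {"authorization", "cookie", "x-api-key"}


def access_log_entry(client_ip: str, method: str, url: str, headers: Dict[str, str],
                     status: int, latency_ms: float, auth_outcome: str,
                     error: Optional[str] = None, ts: Optional[float] = None) -> str:
    """Render one request as a single-line JSON record for a log pipeline."""
    safe_headers = {
        k: ("[REDACTED]" if k.lower() in REDACTED_HEADERS else v)
        for k, v in headers.items()
    }
    record = {
        "ts": time.time() if ts is None else ts,
        "client_ip": client_ip,
        "method": method,
        "url": url,
        "headers": safe_headers,
        "status": status,
        "latency_ms": latency_ms,
        "auth": auth_outcome,   # e.g. "allowed" or "denied:expired_token"
        "error": error,
    }
    return json.dumps(record, sort_keys=True)
```

Because each line is self-describing JSON, a log store can index fields like `status`, `auth`, or `client_ip` directly, which makes queries such as "all denied requests from this IP in the last hour" straightforward.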

Analytics goes beyond raw metrics and logs, transforming them into actionable business intelligence. By analyzing historical call data, the API Gateway can provide insights such as:

- API Usage Patterns: Which APIs are most popular, when are they used, and by whom?

- Client Behavior: Identify top consumers, understand their usage trends, and detect anomalies.

- Performance Trends: Long-term changes in latency or error rates, helping identify degrading services or capacity issues.

- Cost Analysis: For pay-per-use APIs, analytics can track consumption for billing.

- Security Audits: Identify potential attack vectors, suspicious access patterns, or policy violations.

APIPark, for example, is specifically designed to offer detailed API call logging, recording every detail of each interaction. This robust logging capability enables businesses to quickly trace and troubleshoot issues in API calls, thereby ensuring system stability and enhancing data security. Furthermore, APIPark's powerful data analysis features allow organizations to analyze historical call data to display long-term trends and performance changes, which is invaluable for proactive maintenance and identifying potential issues before they impact users.

By centralizing monitoring, logging, and analytics, the API Gateway provides a comprehensive observability layer for the entire API ecosystem. This unified view empowers operations teams to quickly detect and diagnose problems, security teams to identify threats, and business teams to understand product usage, making informed decisions that drive continuous improvement and ensure the reliability and success of API-driven initiatives.

2.7 Security Policies & Firewalling

Beyond authentication and authorization, the API Gateway acts as a crucial first line of defense, implementing a range of security policies and firewalling capabilities to protect backend services from various cyber threats. By consolidating these security controls at the edge, organizations can ensure a consistent and robust security posture for all their APIs, minimizing the risk of breaches and malicious attacks.

Common security policies and firewalling capabilities include:

- Web Application Firewall (WAF) Integration: Many API Gateways either incorporate WAF-like functionalities or integrate seamlessly with external WAF solutions. A WAF monitors and filters HTTP traffic between web applications and the internet, protecting web applications from a variety of attacks, including cross-site scripting (XSS), SQL injection, command injection, path traversal, and other vulnerabilities listed in the OWASP Top 10. By scrutinizing incoming requests for known attack patterns, the gateway can block malicious traffic before it reaches sensitive backend services.

- IP Whitelisting/Blacklisting: Administrators can configure the gateway to allow requests only from specific IP addresses or ranges (whitelisting) or to block requests from known malicious IP addresses (blacklisting). This is an effective way to control access at a network level and mitigate attacks from suspicious origins.

- Bot Protection: Identifying and mitigating automated attacks from bots, such as credential stuffing, brute-force attacks, or content scraping. This can involve techniques like CAPTCHA challenges, behavioral analysis, or IP reputation checks.

- Payload Validation: Ensuring that the structure and content of incoming request bodies conform to expected schemas (e.g., JSON Schema validation). This prevents malformed requests that could exploit vulnerabilities in backend parsers or introduce invalid data.

- Threat Detection and Prevention: Advanced API Gateways can integrate with threat intelligence feeds and utilize machine learning to detect anomalous behavior or emerging threats in real-time. This includes identifying unusual traffic patterns, multiple failed authentication attempts, or rapid access to sensitive endpoints.

- SSL/TLS Termination and Management: The gateway typically terminates SSL/TLS connections, offloading the encryption/decryption overhead from backend services. It also centralizes certificate management for public-facing endpoints, ensuring that all API traffic is encrypted in transit from the client to the gateway and simplifying certificate rotation. Where traffic between the gateway and internal services must also be protected, the gateway can re-encrypt it or use mTLS toward the backends.

- Data Masking/Redaction: For certain responses, the gateway can be configured to mask or redact sensitive data (e.g., credit card numbers, personal identifiers) before sending it to the client, ensuring that only authorized information is exposed.
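Two of these controls, IP allow/deny lists (the whitelisting and blacklisting described above) and data masking, fit in a short Python sketch using the standard `ipaddress` and `re` modules. The network ranges are hypothetical documentation addresses. The card-number pattern is a rough heuristic without a Luhn check, so it may mask some non-card digit runs; a production redaction policy would be more precise.

```python
import ipaddress
import re

# Hypothetical network policy (documentation address ranges).
ALLOWLIST = [ipaddress.ip_network(n) for n in ("10.0.0.0/8", "203.0.113.0/24")]
DENYLIST = [ipaddress.ip_network(n) for n in ("203.0.113.66/32",)]


def ip_permitted(client_ip: str) -> bool:
    """Deny list wins over allow list; malformed addresses are rejected."""
    try:
        addr = ipaddress.ip_address(client_ip)
    except ValueError:
        return False
    if any(addr in net for net in DENYLIST):
        return False
    return any(addr in net for net in ALLOWLIST)


# 13 to 19 digits, optionally separated by single spaces or dashes.
CARD_RE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def mask_cards(text: str) -> str:
    """Redact card-like numbers in a response body, keeping the last four digits."""
    def _mask(match) -> str:
        digits = re.sub(r"\D", "", match.group())
        return "*" * (len(digits) - 4) + digits[-4:]
    return CARD_RE.sub(_mask, text)
```

For example, `mask_cards("card 4111 1111 1111 1111 ok")` returns `"card ************1111 ok"`, while short numbers such as order IDs pass through unchanged.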

By implementing these comprehensive security policies, the API Gateway acts as a powerful security enforcement point, centralizing and standardizing the protection of API resources. This significantly reduces the burden on individual microservices to implement complex security logic, allowing them to focus on their core business functions while relying on the gateway to filter out most threats at the perimeter. This layered security approach is crucial for maintaining trust, ensuring compliance, and protecting valuable digital assets.

2.8 Versioning

In a dynamic software development environment, APIs are rarely static. They evolve over time, gaining new features, changing data structures, or sometimes even deprecating old functionalities. Managing these changes gracefully, without breaking existing client applications, is a significant challenge. The API Gateway plays a vital role in simplifying API versioning, allowing backend services to evolve independently while maintaining compatibility with diverse client needs.

The API Gateway facilitates several common API versioning strategies:

- URL Versioning: The version number is included directly in the URL path (e.g., /v1/users, /v2/users). The gateway can then route requests based on this version identifier to the appropriate backend service instance or even different versions of the same service.

- Header Versioning: The version number is specified in a custom HTTP header (e.g., X-API-Version: 1). The gateway inspects this header and routes the request accordingly. This approach keeps the URL cleaner but requires clients to manage custom headers.

- Query Parameter Versioning: The version is passed as a query parameter (e.g., /users?api-version=1). Similar to header versioning, this keeps the base URL clean but can make URLs less intuitive.

- Content Negotiation (Accept Header): The client specifies the desired media type, which can include a version, in the Accept header (e.g., Accept: application/vnd.myapi.v1+json). The gateway can then use this to route to the correct service version.
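The four strategies can be combined in a single resolution step. The Python sketch below checks the URL path first, then the custom header, the query parameter, and finally the Accept header, and maps the result to an upstream. The precedence order, the default version, the backend URLs, and the vnd.myapi media type are hypothetical choices made for illustration.

```python
import re
from typing import Mapping, Optional, Tuple

# Hypothetical upstreams for each supported major version of a users API.
BACKENDS = {
    "1": "http://users-v1.internal:8080",
    "2": "http://users-v2.internal:8080",
}
DEFAULT_VERSION = "2"  # assumption: unversioned calls get the latest version

PATH_VERSION = re.compile(r"^/v(\d+)(/.*)?$")
ACCEPT_VERSION = re.compile(r"application/vnd\.myapi\.v(\d+)\+json")


def resolve_version(
    path: str, headers: Mapping[str, str], query: Mapping[str, str]
) -> Tuple[str, str]:
    """Return (version, path to forward) using the four strategies in turn.

    Header names are assumed to be normalized already (e.g. "X-API-Version").
    """
    # 1. URL versioning: /v1/users -> version "1", forwarded path "/users".
    match = PATH_VERSION.match(path)
    if match:
        return match.group(1), match.group(2) or "/"
    # 2. Custom header versioning: X-API-Version: 1
    version: Optional[str] = headers.get("X-API-Version")
    # 3. Query parameter versioning: /users?api-version=1
    if version is None:
        version = query.get("api-version")
    # 4. Content negotiation: Accept: application/vnd.myapi.v1+json
    if version is None:
        accept = ACCEPT_VERSION.search(headers.get("Accept", ""))
        version = accept.group(1) if accept else None
    return version or DEFAULT_VERSION, path


def upstream_url(path: str, headers: Mapping[str, str], query: Mapping[str, str]) -> str:
    """Map an incoming request to the backend URL for its API version."""
    version, forward_path = resolve_version(path, headers, query)
    if version not in BACKENDS:
        raise LookupError(f"unsupported API version: {version}")
    return BACKENDS[version] + forward_path
```

Stripping the /v1 prefix before forwarding lets each backend version serve plain /users paths; whether to strip or keep the prefix is a per-deployment decision.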

The gateway's role in versioning is primarily to abstract this complexity from the client. A client always calls the gateway with a specific version indicator. The gateway then uses its routing rules to direct that request to the correct backend service endpoint that supports that version. This means:

- Backward Compatibility: Older clients can continue to use older API versions exposed by the gateway, while newer clients can leverage the latest features, even if the underlying backend services have undergone significant changes. The gateway can even perform transformations (as discussed in Section 2.4) to bridge differences between old client expectations and new service interfaces.

- Graceful Deprecation: While an old API version is being phased out, the API Gateway can attach deprecation warnings to its responses (for example, a Sunset header announcing the retirement date), and once the version is retired it can answer with an HTTP status code such as 410 Gone, guiding clients to migrate to newer versions. This allows for a controlled transition period rather than abrupt breakage.

- Independent Service Evolution: Backend teams can develop and deploy new versions of their services without immediately forcing all clients to upgrade. The gateway provides the necessary decoupling layer.

- A/B Testing and Canary Releases: The gateway can even route a small percentage of traffic to a new API version (or a new implementation of a service), allowing developers to compare behavior, gather feedback, and catch regressions before a full rollout.
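Deprecation and gradual rollout can both be expressed as per-version policy that the gateway evaluates before forwarding. In this hypothetical Python sketch, retired versions are answered with 410 Gone, deprecated versions are forwarded with a Sunset header (defined in RFC 8594), and a fixed percentage of clients is sent to a canary backend. The VersionPolicy and Decision types and the backend names are invented for the example. Hashing the client ID keeps each client on the same variant across requests, which matters for A/B comparisons.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, Mapping, Optional


@dataclass
class VersionPolicy:
    status: str                           # "active", "deprecated" or "retired"
    sunset: Optional[str] = None          # HTTP-date when a deprecated version is retired
    canary_backend: Optional[str] = None  # alternative implementation under test
    canary_percent: int = 0               # share of clients (0-100) sent to the canary


@dataclass
class Decision:
    backend: Optional[str]                # None means the gateway answers itself
    status_code: Optional[int] = None     # status returned when not forwarding
    headers: Dict[str, str] = field(default_factory=dict)


def bucket(client_id: str) -> int:
    """Map a client ID to a stable bucket in [0, 100) so each client stays sticky."""
    digest = hashlib.sha256(client_id.encode()).digest()
    return int.from_bytes(digest[:8], "big") % 100


def decide(
    version: str,
    client_id: str,
    policies: Mapping[str, VersionPolicy],
    backends: Mapping[str, str],
) -> Decision:
    policy = policies.get(version)
    if policy is None:
        return Decision(None, 404)
    if policy.status == "retired":
        # Retired versions are answered by the gateway instead of being forwarded.
        return Decision(None, 410)
    headers: Dict[str, str] = {}
    if policy.status == "deprecated" and policy.sunset:
        # The Sunset header (RFC 8594) announces when the version goes away.
        headers["Sunset"] = policy.sunset
    backend = backends[version]
    if policy.canary_backend and bucket(client_id) < policy.canary_percent:
        backend = policy.canary_backend
    return Decision(backend, None, headers)
```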

By centralizing version management, the API Gateway ensures that API evolution is a smooth and controlled process. It empowers both service providers to innovate and clients to integrate reliably, fostering a stable and adaptable API ecosystem.

Here's a summary table of the core responsibilities of an API Gateway:

| Responsibility | Description | Key Benefits |
|---|---|---|
| Routing & Load Balancing | Directs incoming client requests to the correct backend service and distributes traffic across multiple instances to prevent overload. | Enhances scalability, improves reliability, ensures high availability, abstracts service locations from clients. |
| Authentication & Authorization | Verifies client identity (authN) and checks permissions (authZ) before allowing access to backend services. | Centralizes security logic, reduces attack surface, ensures consistent security policies, offloads security from microservices. |
| Rate Limiting & Throttling | Controls the number of requests a client can make within a given timeframe to prevent abuse, ensure fair usage, and protect backend services. | Mitigates abuse and some denial-of-service attacks, ensures system stability, allocates resources fairly, enables API monetization. |
| Request/Response Transformation | Modifies request data before sending to backend and response data before sending to client (e.g., format conversion, header manipulation). | Bridges API inconsistencies, simplifies client-side development, enables integration with legacy systems, optimizes data payloads. |
| Caching | Stores frequently accessed responses to serve subsequent identical requests directly from memory, bypassing backend service calls. | Reduces latency, decreases load on backend services, increases throughput, improves resilience during backend outages. |
| Monitoring, Logging & Analytics | Collects real-time metrics, detailed logs, and usage patterns to provide insights into API performance, health, and security. | Facilitates troubleshooting, enables proactive problem detection, supports security audits, informs business decisions. |
| Security Policies & Firewalling | Implements WAF-like protections, IP whitelisting/blacklisting, payload validation, and SSL/TLS termination to guard against cyber threats. | Provides a robust first line of defense, strengthens overall security posture, centralizes threat mitigation. |
| Versioning | Manages different API versions, allowing clients to access specific versions while backend services evolve independently. | Ensures backward compatibility, enables graceful deprecation, supports independent service evolution, facilitates A/B testing. |

3. Architectural Patterns and Deployment Models

The implementation of an API Gateway can take various forms, each with its own advantages and considerations, largely depending on the scale, complexity, and specific requirements of the underlying architecture. The choice of architectural pattern and deployment model significantly impacts the system's performance, resilience, management overhead, and developer autonomy.

3.1 Centralized Gateway

The most straightforward and widely adopted pattern involves deploying a single, centralized API Gateway that acts as the sole entry point for all client requests into the entire backend microservices ecosystem. All traffic from external clients first hits this single gateway, which then handles routing, authentication, rate limiting, and other cross-cutting concerns before forwarding requests to the appropriate internal services.
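Conceptually, a centralized gateway is a chain of cross-cutting filters followed by a routing step. The following Python sketch assumes a hypothetical X-API-Key header, an in-memory key store, a simple fixed-window rate limiter, and a prefix-based route table. It only reports the chosen upstream instead of proxying the request, so it illustrates the control flow rather than a production gateway.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Request:
    path: str
    headers: Dict[str, str] = field(default_factory=dict)


@dataclass
class Response:
    status: int
    body: str = ""


# A filter returns a Response to short-circuit the request, or None to continue.
Filter = Callable[[Request], Optional[Response]]

VALID_KEYS = {"key-123": "client-a"}  # hypothetical credential store
ROUTES = {                            # hypothetical path prefix -> upstream
    "/users": "http://user-service:8080",
    "/orders": "http://order-service:8080",
}


def authenticate(req: Request) -> Optional[Response]:
    if req.headers.get("X-API-Key") not in VALID_KEYS:
        return Response(401, "unauthorized")
    return None


def make_rate_limiter(
    limit: int, window_s: float, clock: Callable[[], float] = time.time
) -> Filter:
    """Fixed-window limiter per API key (old windows are never pruned in this sketch)."""
    counts: Dict[Tuple[Optional[str], int], int] = {}

    def rate_limit(req: Request) -> Optional[Response]:
        key = (req.headers.get("X-API-Key"), int(clock() // window_s))
        counts[key] = counts.get(key, 0) + 1
        return Response(429, "too many requests") if counts[key] > limit else None

    return rate_limit


def route(req: Request) -> Response:
    for prefix, upstream in ROUTES.items():
        if req.path == prefix or req.path.startswith(prefix + "/"):
            # A real gateway would proxy here; the sketch just reports the target.
            return Response(200, upstream + req.path)
    return Response(404, "no route")


def handle(req: Request, filters: List[Filter]) -> Response:
    """Run cross-cutting filters in order, then route to a backend."""
    for check in filters:
        response = check(req)
        if response is not None:
            return response
    return route(req)
```

Because every request passes through the same handle function, a policy added to the filter list applies uniformly to all routes, which is exactly the consistency a centralized gateway offers, and also why its own capacity becomes critical.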

Pros:

- Simplicity and Consistency: Provides a single, unified point for managing all API traffic, leading to easier configuration and consistent application of policies (e.g., security, rate limiting) across the entire system.

- Reduced Client Complexity: Clients only need to know the URL of the single gateway, significantly simplifying client-side development and reducing coupling to backend services.

- Centralized Observability: All traffic passes through one point, making it easier to collect comprehensive monitoring data, logs, and analytics for the entire API landscape.

- Easier Security Enforcement: A single point of entry simplifies the enforcement of security policies and acts as a choke point for external threats.

Cons:

- Single Point of Failure (SPOF): If the centralized gateway fails, the entire application becomes unreachable. This necessitates robust high availability (HA) measures, such as clustering, replication, and sophisticated failover mechanisms, which add their own complexity.

- Performance Bottleneck: As all traffic flows through a single component, the gateway itself can become a performance bottleneck if not adequately scaled. This requires careful capacity planning and horizontal scalability.

- Tight Coupling with Gateway Team: Any change to gateway configurations or policies requires coordination with the team managing the central gateway, potentially slowing down individual microservice teams.

- "God Object" Anti-Pattern Risk: There's a risk of the gateway accumulating too many responsibilities, becoming overly complex and difficult to maintain, resembling a "god object" anti-pattern in object-oriented design.

Despite the potential drawbacks, a centralized gateway remains a popular choice for many organizations, especially when starting with microservices, due to its initial simplicity and ease of management.

3.2 Decentralized Gateway (Micro-Gateways or Backend for Frontend - BFF)

In contrast to a centralized approach, a decentralized gateway strategy involves deploying multiple, smaller API Gateways, often dedicated to specific business domains, teams, or even client types. A common manifestation of this pattern is the "Backend for Frontend" (BFF) pattern, where each type of client (e.g., web app, iOS app, Android app) has its own dedicated API Gateway tailored to its specific needs. These micro-gateways typically handle only the concerns relevant to their particular client or domain.

Pros:

- Increased Autonomy and Agility: Different teams can manage and deploy their own domain-specific gateways independently, aligning with the microservices philosophy. This speeds up development and deployment cycles.

- Reduced Blast Radius: The failure of one micro-gateway only impacts a subset of clients or services, not the entire system.

- Optimized for Specific Clients: BFFs can be specifically designed to serve the unique data formats, query structures, and aggregation needs of a particular client application, reducing client-side code complexity.

- Avoids "God Object": Responsibilities are distributed, preventing a single gateway from becoming overly complex and a bottleneck for development.

Cons:

- Increased Operational Overhead: Managing multiple gateway instances introduces more complexity in terms of deployment, monitoring, and maintenance.

- Inconsistent Policies: Ensuring consistent security, rate limiting, and other cross-cutting policies across multiple micro-gateways can be challenging without proper governance.

- Potential for Duplication: Common functionalities (like core authentication) might need to be implemented or integrated into multiple gateways, leading to code duplication.

- Client Management: Clients still need to know which specific gateway to call, although this is usually simpler than knowing individual service endpoints.

Decentralized gateways are often favored by larger organizations with many independent teams and diverse client applications, where the benefits of agility and reduced coupling outweigh the increased operational complexity.

3.3 Hybrid Approaches

Many organizations find that a pure centralized or decentralized approach doesn't perfectly fit their needs. Instead, they adopt a hybrid model, aiming to combine the benefits of both. A common hybrid strategy involves:

- A Central "Edge" Gateway: This external-facing gateway handles basic, common concerns like global rate limiting, initial authentication, SSL/TLS termination, and potentially WAF protection for all incoming traffic.

- Internal Micro-Gateways/BFFs: Behind the edge gateway, domain-specific or client-specific micro-gateways further process requests, handle more granular routing, service composition, and client-specific transformations before forwarding to the actual microservices.

This approach provides a strong perimeter defense and centralized control over universal concerns, while still allowing for the agility and specialization of decentralized gateways closer to the services.

3.4 Sidecar Pattern

While not strictly an API Gateway in the traditional sense of a central entry point, the sidecar pattern is a powerful architectural concept often used in conjunction with or as an alternative to external gateways for certain cross-cutting concerns within a service mesh context. A sidecar is a secondary container deployed alongside a primary application container (e.g., a microservice) within the same pod in Kubernetes. This sidecar intercepts all incoming and outgoing network traffic for the main application.

- Explanation: The sidecar acts as a proxy (often based on Envoy or similar technology) for its primary application. All communication to and from the microservice goes through its sidecar proxy.

- Benefits:

- Decoupling: Cross-cutting concerns like traffic management, monitoring, security, and resilience (e.g., circuit breakers, retries) are offloaded from the application code into the sidecar. This keeps the microservice clean and focused on business logic.

- Language Agnostic: Since the sidecar is a separate process, it can be written in any language and configured externally, allowing services written in different programming languages to share common infrastructure concerns without language-specific libraries.

- Observability: Provides rich telemetry (metrics, logs, traces) for each service's network interactions.

- Resilience: Implements patterns like circuit breakers, retries, and timeouts directly at the service level.

- Relationship to Service Mesh: When sidecars are deployed across an entire application, managed by a control plane (like Istio, Linkerd), this forms a "service mesh." A service mesh extends many API Gateway functionalities (routing, load balancing, security, observability) to internal, service-to-service communication within the cluster. An external API Gateway typically handles north-south (external client to internal service) traffic, while a service mesh handles east-west (internal service to internal service) traffic. They are often complementary.
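Sidecar proxies such as Envoy expose retries and timeouts as configuration rather than code, but the logic they apply is roughly the following. This Python sketch is not Envoy's implementation; it is a generic retry loop with exponential backoff and full jitter, where the attempt count, delays, and the set of retryable exceptions are assumed defaults.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


class RetriesExhausted(Exception):
    """Raised when every attempt failed with a retryable error."""


def call_with_retries(
    call: Callable[[], T],
    max_attempts: int = 3,
    base_delay_s: float = 0.1,
    max_delay_s: float = 2.0,
    retry_on: Tuple[Type[BaseException], ...] = (ConnectionError, TimeoutError),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry transient failures with exponential backoff and full jitter.

    The per-attempt timeout is assumed to live inside `call` (for example a
    socket timeout that raises TimeoutError); errors not listed in
    `retry_on` propagate immediately.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return call()
        except retry_on as exc:
            if attempt == max_attempts - 1:
                raise RetriesExhausted(f"gave up after {max_attempts} attempts") from exc
            # Full jitter: wait a random time in [0, min(cap, base * 2**attempt)].
            sleep(random.uniform(0, min(max_delay_s, base_delay_s * 2 ** attempt)))
    raise AssertionError("unreachable")
```

Retries are only safe for idempotent requests (such as GET) unless the backend deduplicates; retrying a non-idempotent POST can apply the same operation twice.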

3.5 Deployment Options

API Gateways can be deployed in various environments, each with its own set of operational considerations:

- On-premise: For organizations with strict data sovereignty requirements or existing extensive data centers. This offers maximum control but requires significant operational expertise for hardware, networking, and software management.

- Cloud-based: Leverages public cloud providers (AWS, Azure, Google Cloud) for scalability, managed services, and reduced operational burden. This is often the default choice for new applications due to flexibility and cost efficiency.

- Hybrid Cloud: Combines on-premise and cloud deployments, allowing sensitive data or legacy systems to remain on-premise while leveraging cloud resources for scalability and agility. This often involves gateways that can bridge these environments.

- Serverless: In some cases, lightweight gateway functionalities can be implemented using serverless functions (e.g., AWS Lambda, Azure Functions) to create highly scalable and cost-effective event-driven gateways.

Choosing the right architectural pattern and deployment model for your API Gateway is a strategic decision that should align with your organization's development philosophy, security requirements, operational capabilities, and the specific needs of your application ecosystem. Factors such as team structure, existing infrastructure, budget, and desired levels of autonomy and control all play a role in this critical choice.


4. Beyond the Basics – Advanced API Gateway Concepts

While the core responsibilities form the foundation, modern API Gateways often extend their capabilities significantly, delving into more sophisticated patterns and features that further enhance the manageability, resilience, and monetization potential of an API ecosystem. These advanced concepts empower organizations to build more robust, intelligent, and business-aligned API programs.

4.1 Service Discovery Integration

In a dynamic microservices environment, service instances are constantly scaling up, scaling down, restarting, or even failing. Their network locations (IP addresses and ports) are not static. For an API Gateway to effectively route requests, it needs to know which service instances are currently available and where they are located. This is where Service Discovery Integration becomes crucial.

Instead of maintaining a static list of service endpoints, which would be brittle and require manual updates, an API Gateway integrates with a service discovery mechanism. This mechanism could be:

- Client-Side Discovery: The client (in this case, the API Gateway) queries a service registry (e.g., Eureka, Consul, Apache ZooKeeper) to get the locations of available service instances.

- Server-Side Discovery: A load balancer (which the gateway might sit behind or integrate with) queries the service registry and routes requests to healthy instances.

- Kubernetes Service Discovery: In Kubernetes environments, the gateway can leverage Kubernetes' native service discovery: cluster DNS resolves a Service name to a stable virtual IP, and kube-proxy forwards traffic to the pods that are currently ready (a headless Service instead resolves directly to the ready pod IPs).

The API Gateway dynamically fetches the list of available instances for a target service from the registry. This enables automatic updates to routing tables, ensuring that requests are always sent to healthy and available service instances, even as the backend landscape changes. This dynamic routing capability is fundamental for achieving high availability, scalability, and resilience in cloud-native applications, as it allows the system to gracefully handle service failures and elastic scaling without manual intervention.
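A toy version of client-side discovery helps show how routing stays current without manual updates. In this Python sketch, instances register and renew themselves with heartbeats, entries that miss the TTL are treated as unhealthy, and the gateway round-robins over whatever remains. The ServiceRegistry and RoundRobinRouter classes are hypothetical stand-ins for systems like Eureka or Consul, whose real APIs differ.

```python
import time
from typing import Callable, Dict, List


class ServiceRegistry:
    """Toy in-memory registry: instances must heartbeat within ttl_s to stay healthy."""

    def __init__(self, ttl_s: float = 30.0, clock: Callable[[], float] = time.monotonic):
        self.ttl_s = ttl_s
        self.clock = clock
        self._last_seen: Dict[str, Dict[str, float]] = {}  # service -> address -> time

    def heartbeat(self, service: str, address: str) -> None:
        # Registering and renewing are the same operation in this sketch.
        self._last_seen.setdefault(service, {})[address] = self.clock()

    def deregister(self, service: str, address: str) -> None:
        self._last_seen.get(service, {}).pop(address, None)

    def healthy(self, service: str) -> List[str]:
        now = self.clock()
        instances = self._last_seen.get(service, {})
        return sorted(a for a, seen in instances.items() if now - seen <= self.ttl_s)


class RoundRobinRouter:
    """Gateway-side load balancing over whatever the registry reports as healthy."""

    def __init__(self, registry: ServiceRegistry):
        self.registry = registry
        self._counters: Dict[str, int] = {}

    def pick(self, service: str) -> str:
        instances = self.registry.healthy(service)  # re-read on every request
        if not instances:
            raise LookupError(f"no healthy instances of {service}")
        n = self._counters.get(service, 0)
        self._counters[service] = n + 1
        return instances[n % len(instances)]
```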

4.2 Circuit Breaker & Fallback

Resilience is a critical concern in distributed systems. When a backend service becomes slow or unresponsive, repeated requests to that service can exhaust resources (e.g., connection pools) in the calling service (the API Gateway), potentially leading to cascading failures across the entire system. The Circuit Breaker pattern, often implemented within the API Gateway, is a powerful mechanism to prevent such scenarios.

- Circuit Breaker: Imagine an electrical circuit breaker. When a fault occurs, it trips, interrupting the flow of electricity to prevent further damage. Similarly, an API Gateway with a circuit breaker monitors calls to a particular backend service. If the error rate or latency for calls to that service exceeds a predefined threshold (e.g., 50% failures in the last 10 seconds), the circuit "trips" and enters an OPEN state.

- In the OPEN state, the gateway immediately stops sending requests to the failing service. Instead of waiting for a timeout, it quickly fails the request and returns an error (or a fallback response) to the client. This prevents the gateway from wasting resources attempting to call an unhealthy service and gives the backend service time to recover.

- After a configurable timeout period (e.g., 30 seconds), the circuit enters a HALF-OPEN state. The gateway allows a small number of "test" requests to pass through to the backend service. If these test requests succeed, it indicates the service has recovered, and the circuit closes (CLOSED state). If they fail, the circuit returns to the OPEN state for another timeout period.

- In the CLOSED state, requests flow normally, and the gateway continues to monitor the service.

- Fallback: When a circuit breaker is open (or if a service call otherwise fails), simply returning an error to the client might not always be the best user experience. A fallback mechanism allows the API Gateway to provide an alternative response or invoke a different, less critical service when the primary service is unavailable. For example, if a recommendation engine is down, the gateway might return a static list of popular products instead of an empty recommendations section. This degrades gracefully, ensuring a minimal, but still functional, experience for the user.

By implementing circuit breakers and fallbacks, the API Gateway significantly enhances the fault tolerance and resilience of the entire system, preventing isolated failures from cascading and ensuring a more stable experience for API consumers.
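The state machine described above fits in a small class. This Python sketch is a simplified illustration: it trips after a number of consecutive failures instead of the error-rate window mentioned earlier, lets a single trial request through in HALF_OPEN, and is not thread-safe. The thresholds and the injectable clock are assumptions made so the behavior is easy to test.

```python
import time
from typing import Any, Callable


class CircuitBreaker:
    """Minimal CLOSED / OPEN / HALF_OPEN circuit breaker (not thread-safe).

    Simplification: it trips after `failure_threshold` consecutive failures
    instead of an error-rate-over-a-window rule, and HALF_OPEN admits one
    trial call.
    """

    def __init__(self, failure_threshold: int = 5, reset_timeout_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout_s = reset_timeout_s
        self.clock = clock
        self.state = "CLOSED"
        self.failures = 0
        self.opened_at = 0.0

    def call(self, fn: Callable[[], Any], fallback: Callable[[], Any]) -> Any:
        if self.state == "OPEN":
            if self.clock() - self.opened_at < self.reset_timeout_s:
                return fallback()          # fail fast without touching the backend
            self.state = "HALF_OPEN"       # timeout elapsed: let one trial through
        try:
            result = fn()
        except Exception:
            self._record_failure()
            return fallback()
        self.state, self.failures = "CLOSED", 0  # success closes the circuit
        return result

    def _record_failure(self) -> None:
        self.failures += 1
        if self.state == "HALF_OPEN" or self.failures >= self.failure_threshold:
            self.state = "OPEN"
            self.opened_at = self.clock()
```

For the recommendation example, fn would call the recommendation service and fallback would return the static list of popular products.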

4.3 API Composition & Orchestration

Modern applications often require data from multiple backend services to fulfill a single client request. For example, rendering a user's dashboard might require fetching profile information from a User Service, recent orders from an Order Service, and recommended products from a Product Catalog Service. Making these multiple calls directly from the client can lead to increased latency, complex client-side code, and higher network overhead.

The API Gateway can mitigate these issues by offering API Composition and Orchestration capabilities. This means the gateway itself can:

- Fan-out Calls: Receive a single client request, internally make multiple parallel or sequential calls to various backend microservices.

- Aggregate Data: Collect responses from these multiple services.

- Compose a Unified Response: Combine, transform, and format the aggregated data into a single, cohesive response that is tailored to the client's needs, before sending it back to the client.

This pattern is often closely associated with the "Backend for Frontend" (BFF) pattern, where the API Gateway effectively becomes the backend tailored for a specific frontend. For example, a /dashboard endpoint on the gateway might internally call /users/{id} on the User Service, /orders?user_id={id} on the Order Service, and /recommendations?user_id={id} on the Product Service, then merge all the data into a single JSON object for the client.
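The /dashboard example can be sketched with Python's asyncio, which runs the three backend calls concurrently and merges the results. The fetch function stands in for whatever HTTP client the gateway uses and is assumed, as are the response field names. Treating the profile as mandatory and the other two sections as optional is one possible design choice, not a rule.

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict

# Hypothetical async HTTP helper: fetch(service, path) -> decoded JSON body.
Fetch = Callable[[str, str], Awaitable[Any]]


async def dashboard(user_id: str, fetch: Fetch) -> Dict[str, Any]:
    """Fan out to three services concurrently and compose one response."""
    profile, orders, recs = await asyncio.gather(
        fetch("user-service", f"/users/{user_id}"),
        fetch("order-service", f"/orders?user_id={user_id}"),
        fetch("product-service", f"/recommendations?user_id={user_id}"),
        return_exceptions=True,  # return failures as results instead of raising the first
    )
    if isinstance(profile, BaseException):
        raise profile  # the profile is essential: fail the whole request
    return {
        "user": profile,
        # Non-essential sections degrade to empty lists rather than failing.
        "recentOrders": [] if isinstance(orders, BaseException) else orders,
        "recommendations": [] if isinstance(recs, BaseException) else recs,
    }
```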

Benefits include:

- Reduced Client Complexity: The client doesn't need to know about the internal microservices or perform complex data aggregation. It just makes one simple call to the gateway.

- Optimized Network Traffic: Fewer network calls from the client, leading to lower latency and better performance.

- Enhanced Performance: The gateway can often make internal service calls more efficiently than a remote client (e.g., within the same data center, over optimized internal networks).

- Flexibility: The composition logic can be easily modified at the gateway level without impacting backend services or client applications.

By acting as an orchestrator, the API Gateway transforms a fragmented microservices landscape into a streamlined and client-friendly interface, simplifying interactions and improving overall user experience.

4.4 Developer Portal & Documentation

While technical capabilities are crucial, the success of an API strategy heavily relies on how easily developers can discover, understand, and integrate with the APIs. This is where a Developer Portal comes into play, often integrated with or provided by the API Gateway. A developer portal is a self-service platform designed to empower API consumers, making it simple for them to find, test, and utilize available APIs.

Key features of a robust developer portal include:

- Centralized API Catalog: A discoverable list of all available APIs, often categorized by domain or business function.

- Interactive Documentation: Automatically generated and up-to-date documentation (e.g., using OpenAPI/Swagger specifications) that provides detailed information about endpoints, parameters, request/response formats, and authentication requirements. Many portals offer interactive "Try It Out" features that allow developers to make live API calls directly from the documentation.

- API Key Management: A self-service mechanism for developers to register applications, generate and manage API keys, and monitor their usage.

- Usage Analytics: Dashboards showing API consumption metrics for specific applications or users, allowing developers to monitor their own integration's performance.

- Code Samples and SDKs: Providing ready-to-use code snippets and software development kits (SDKs) in various programming languages to accelerate integration.

- Support and Community Features: FAQs, forums, or direct support channels to help developers overcome integration challenges.

- Onboarding Workflows: Streamlined processes for new developers to register, get access to APIs, and start building.
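For self-service key management, one common practice is to show a newly generated key to the developer once and persist only a hash of it. The Python sketch below assumes an in-memory store and a made-up ak_ key prefix. Because the keys come from a cryptographically secure random generator, a fast hash such as SHA-256 is a reasonable choice here; slow password hashes are mainly needed for low-entropy, human-chosen secrets.

```python
import hashlib
import secrets
from typing import Dict, Optional


class ApiKeyStore:
    """Issue API keys and keep only their SHA-256 digests (in-memory sketch)."""

    def __init__(self) -> None:
        self._digests: Dict[str, str] = {}  # key digest -> application id

    @staticmethod
    def _digest(key: str) -> str:
        return hashlib.sha256(key.encode()).hexdigest()

    def issue(self, app_id: str) -> str:
        key = "ak_" + secrets.token_urlsafe(32)  # "ak_" prefix is a made-up convention
        self._digests[self._digest(key)] = app_id
        return key  # shown to the developer once, never stored in plaintext

    def revoke(self, key: str) -> None:
        self._digests.pop(self._digest(key), None)

    def lookup(self, presented: str) -> Optional[str]:
        """Return the owning application for a presented key, or None."""
        return self._digests.get(self._digest(presented))
```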

Platforms like APIPark exemplify this, functioning as an all-in-one AI gateway and API developer portal. They facilitate API service sharing within teams by offering a centralized display of all API services, making it remarkably easy for different departments and teams to find and use the required API services. Furthermore, APIPark empowers enterprises with the capability for independent API and access permissions for each tenant, allowing for multiple teams (tenants) to operate with independent applications, data, user configurations, and security policies while sharing underlying infrastructure, which greatly improves resource utilization and reduces operational costs. The option for API resource access requiring approval adds another layer of security, ensuring controlled access to sensitive APIs.

By providing a comprehensive and user-friendly developer portal, the API Gateway (or its associated platform) fosters a vibrant API ecosystem, accelerates developer adoption, reduces the support burden on API providers, and ultimately drives the business value of APIs.

4.5 Monetization & API Productization

For many businesses, APIs are not just technical interfaces but strategic products that can generate revenue or enable new business models. The API Gateway plays a crucial role in supporting API Monetization and Productization by providing the infrastructure needed to meter usage, enforce service level agreements (SLAs), and offer different commercial tiers.

Key aspects include:

- Usage Metering and Billing: The gateway accurately tracks API calls, data transfer, and other consumption metrics for each API consumer. This data is then fed into billing systems to generate invoices based on predefined pricing models (e.g., pay-per-call, tiered usage, subscription models).

- Tiered Access: Offering different levels of service based on subscription plans. For example, a "Free" tier might have lower rate limits and fewer features, while a "Premium" tier offers higher limits, dedicated support, and access to advanced APIs. The gateway enforces these tier-specific policies.

- Service Level Agreements (SLAs): Defining and enforcing guarantees around API availability, performance (e.g., latency), and error rates. The gateway monitors these metrics and can be configured to alert if SLAs are breached. For premium tiers, the gateway might prioritize traffic to ensure SLA compliance.

- Customization and Bundling: Allowing businesses to create custom API bundles for specific customer segments, combining multiple APIs into a single product offering managed and exposed through the gateway.

- Revenue Generation Analytics: Beyond basic usage, the gateway can provide insights into which APIs are generating the most revenue, which tiers are most popular, and customer lifetime value, informing business strategy.
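Metering and tier enforcement meet at the same point in the request path: the gateway counts each admitted call and checks it against the consumer's plan. The following Python sketch uses two hypothetical tiers, a hard-capped free plan and a premium plan billed for overage, with made-up prices and quotas; Decimal avoids floating-point rounding in the invoice.

```python
from collections import defaultdict
from dataclasses import dataclass
from decimal import Decimal
from typing import Dict


@dataclass(frozen=True)
class Tier:
    monthly_fee: Decimal
    monthly_quota: int        # calls included in the fee
    overage_price: Decimal    # price per call beyond the quota
    hard_cap: bool            # True: reject calls beyond the quota instead of billing


# Hypothetical plans; real prices and limits are a business decision.
TIERS: Dict[str, Tier] = {
    "free": Tier(Decimal("0"), 1_000, Decimal("0"), hard_cap=True),
    "premium": Tier(Decimal("49"), 100_000, Decimal("0.001"), hard_cap=False),
}


class Meter:
    """Counts calls per consumer for the current billing period."""

    def __init__(self) -> None:
        self.calls: Dict[str, int] = defaultdict(int)

    def admit(self, consumer: str, tier_name: str) -> bool:
        """Record the call if the tier allows it; False maps to e.g. HTTP 429."""
        tier = TIERS[tier_name]
        if tier.hard_cap and self.calls[consumer] >= tier.monthly_quota:
            return False
        self.calls[consumer] += 1
        return True

    def invoice(self, consumer: str, tier_name: str) -> Decimal:
        """Monthly fee plus overage for calls beyond the included quota."""
        tier = TIERS[tier_name]
        overage = max(0, self.calls[consumer] - tier.monthly_quota)
        return tier.monthly_fee + overage * tier.overage_price
```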

By providing these capabilities, the API Gateway transforms APIs from mere technical interfaces into well-managed, monetizable products. It empowers businesses to create new revenue streams, foster partnerships, and leverage their digital assets in innovative ways, turning their API infrastructure into a profit center.

5. Implementing and Managing Your API Gateway

Selecting, deploying, and maintaining an API Gateway is a critical undertaking that requires careful planning, robust design, and continuous operational vigilance. The success of your API strategy, and indeed your entire microservices architecture, can hinge on how effectively you implement and manage this central component.

5.1 Choosing an API Gateway

The market offers a diverse range of API Gateway solutions, from open-source projects to commercial offerings and cloud-managed services. The "best" choice is highly contextual and depends on several factors:

- Features: Does it support all the core responsibilities and advanced concepts you need (routing, authentication, rate limiting, transformation, caching, monitoring, versioning, WAF, developer portal, etc.)? Are there specific integrations required (e.g., identity providers, service meshes, serverless platforms)?

- Scalability and Performance: Can it handle your projected traffic volumes with low latency? Does it support horizontal scaling and distributed deployment? For instance, APIPark's maintainers report performance rivaling Nginx, with over 20,000 TPS on an 8-core CPU and 8GB of memory, and it supports cluster deployment to handle large-scale traffic, which would make it a strong contender for high-throughput environments.

- Deployment Options: Does it support your preferred deployment environment (on-premise, public cloud, hybrid, Kubernetes)? How easy is it to deploy and manage? APIPark, for example, highlights its quick deployment, which takes about 5 minutes with a single command.

- Extensibility and Customization: How easily can you extend its functionality with custom plugins or logic? Can you integrate it with your existing tools and systems?

- Operational Maturity and Ecosystem: Is it a mature product with a strong community or vendor support? What's the ecosystem of tools, documentation, and skilled professionals like?

- Cost: Licensing fees, operational costs, and resource consumption. Open-source solutions often reduce direct licensing costs but may require more internal operational expertise.

- Security: How robust are its inherent security features? Does it have a good track record for security updates and vulnerability management?

Popular choices include:

- Open-source: Kong, Apache APISIX, Tyk, Express Gateway, Nginx (used as a basic gateway).

- Cloud-managed: AWS API Gateway, Azure API Management, Google Cloud Apigee.

- Commercial: IBM API Connect, MuleSoft Anypoint Platform, Eolink (the company behind APIPark).

For startups and small to medium-sized businesses, an open-source solution like APIPark, which is released under the Apache 2.0 license, can provide a robust foundation for basic API resource management. For leading enterprises requiring advanced features and professional technical support, a commercial version of the same platform may offer those expanded capabilities.

5.2 Design Considerations

Once an API Gateway solution is chosen, its design and configuration require careful thought:

- Performance: Optimize routing rules, judiciously apply caching, and ensure the gateway infrastructure itself is adequately provisioned and scaled. Avoid excessive transformations or complex logic that could introduce latency.

- Security: Implement strong authentication and authorization, integrate with WAF, ensure robust TLS configuration, and regularly audit security policies. The API Gateway is a critical security perimeter.

- Observability: Configure comprehensive monitoring, logging, and tracing. Ensure metrics are exported, logs are centralized, and distributed tracing is enabled to effectively diagnose issues.

- Maintainability and Governance: Establish clear guidelines for defining API routes, policies, and versions. Use Infrastructure as Code (IaC) to manage gateway configurations, ensuring consistency and reproducibility.

- High Availability and Disaster Recovery: Design for redundancy. Deploy gateway instances across multiple availability zones/regions, use load balancers in front, and plan for automated failover.

- API Lifecycle Management: Consider how the gateway integrates with your broader API lifecycle, from design and development to deployment, versioning, and deprecation. Platforms like APIPark assist with managing the entire lifecycle of APIs, including design, publication, invocation, and decommission, helping to regulate processes, manage traffic forwarding, load balancing, and versioning of published APIs.

5.3 Operational Best Practices

Managing an API Gateway effectively demands ongoing operational excellence:

- Continuous Monitoring and Alerting: Actively monitor key metrics (latency, error rates, request volume, resource utilization) and set up alerts for anomalies. Proactive detection is crucial.

- Automated Deployment (CI/CD): Treat API Gateway configurations as code. Integrate them into your CI/CD pipelines to automate testing, deployment, and versioning of gateway policies.

- Regular Security Audits: Continuously review security configurations, update vulnerability patches, and perform penetration testing on the gateway itself.

- Capacity Planning: Regularly assess traffic growth and performance trends to proactively scale gateway resources and prevent bottlenecks.

- Documentation: Maintain up-to-date documentation for gateway configurations, operational procedures, and troubleshooting guides.

- Rollback Strategy: Have a clear plan and automated processes for rolling back gateway configuration changes in case of issues.
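The monitoring and alerting practice can be sketched as a rule evaluated over a sliding window of recent requests. This is a minimal illustration that assumes in-process samples. The 60-second window, 5% error-rate threshold, and 500 ms p95 threshold are arbitrary example values. In practice this logic usually lives in a metrics and alerting system rather than in the gateway itself.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass


@dataclass
class Sample:
    ts: float          # completion time, in seconds
    latency_ms: float  # end-to-end latency seen at the gateway
    is_error: bool     # e.g. the upstream returned a 5xx


class AlertWindow:
    """Evaluates error-rate and p95-latency rules over the last `window_s` seconds."""

    def __init__(self, window_s: float = 60.0,
                 max_error_rate: float = 0.05, max_p95_ms: float = 500.0) -> None:
        self.window_s = window_s
        self.max_error_rate = max_error_rate
        self.max_p95_ms = max_p95_ms
        self.samples: deque[Sample] = deque()

    def record(self, sample: Sample) -> None:
        """Add a sample (assumed to arrive in time order) and evict expired ones."""
        self.samples.append(sample)
        while self.samples and self.samples[0].ts <= sample.ts - self.window_s:
            self.samples.popleft()

    def alerts(self) -> list[str]:
        """Return a human-readable message for each rule currently breached."""
        n = len(self.samples)
        if n == 0:
            return []
        fired: list[str] = []
        error_rate = sum(s.is_error for s in self.samples) / n
        if error_rate > self.max_error_rate:
            fired.append(f"error rate {error_rate:.1%} exceeds {self.max_error_rate:.1%}")
        latencies = sorted(s.latency_ms for s in self.samples)
        rank = (95 * n + 99) // 100  # nearest-rank p95: ceil(0.95 * n) in integer math
        p95 = latencies[rank - 1]
        if p95 > self.max_p95_ms:
            fired.append(f"p95 latency {p95:.0f} ms exceeds {self.max_p95_ms:.0f} ms")
        return fired
```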

5.4 Scalability Challenges and Solutions

Scalability is a primary motivator for using an API Gateway, but the gateway itself can become a bottleneck if not properly managed.

- Challenge: Single Point of Failure/Bottleneck: A single gateway instance can be overwhelmed or fail.

- Solution: Horizontal scaling. Deploy multiple gateway instances behind a load balancer. Use distributed caching and state management (e.g., using Redis or shared databases) to ensure consistency across instances.

- Challenge: High Latency: The gateway adds an extra hop.

- Solution: Optimize gateway configuration, minimize unnecessary transformations, use efficient protocols (e.g., gRPC for internal communication), and leverage caching aggressively for static or frequently accessed data.

- Challenge: Complex Configuration Management: Many routes, policies, and transformations can become unwieldy.

- Solution: Use declarative configuration (e.g., YAML, Kubernetes CRDs) kept under version control, and apply it through configuration management tooling or CI/CD pipelines rather than manual edits.

- Challenge: Resource Contention: The gateway might compete with backend services for resources in a shared environment.

- Solution: Isolate gateway workloads (e.g., on dedicated nodes or a separate cluster) and enforce resource limits and quotas.
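The horizontal-scaling solution depends on shared state, and the sketch below shows why. It implements a fixed-window rate limiter whose counters live in a store that every gateway instance consults. `SharedStore` is a hypothetical in-memory stand-in for a shared store such as Redis, where an atomic increment plus a key expiry would play the same role. It is not a Redis client, and key expiry is omitted for brevity.

```python
from __future__ import annotations

import threading


class SharedStore:
    """Hypothetical in-process stand-in for a shared key-value store with atomic increment."""

    def __init__(self) -> None:
        self._data: dict[str, int] = {}
        self._lock = threading.Lock()

    def incr(self, key: str) -> int:
        # Atomic read-modify-write, like INCR in a real shared store.
        with self._lock:
            self._data[key] = self._data.get(key, 0) + 1
            return self._data[key]


class GatewayInstance:
    def __init__(self, name: str, store: SharedStore,
                 limit: int, window_s: int) -> None:
        self.name = name
        self.store = store
        self.limit = limit
        self.window_s = window_s

    def allow(self, client_id: str, now: float) -> bool:
        """Fixed-window check: at most `limit` requests per client per window."""
        # Every instance derives the same key for the same client and window,
        # so they all increment one shared counter instead of private ones.
        window = int(now // self.window_s)
        count = self.store.incr(f"rl:{client_id}:{window}")
        return count <= self.limit
```

If each instance kept its own counter instead, a client could get up to `limit` requests through every instance, so N instances would silently multiply the effective limit by N.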

By meticulously planning, designing, and operating your API Gateway, you can unlock its full potential, transforming it from a mere component into a strategic asset that underpins the reliability, security, and agility of your entire digital platform. The ease of deployment offered by solutions like APIPark, which provides a quick start with a single command, exemplifies how modern platforms strive to simplify this initial hurdle, allowing teams to focus more on configuration and operational excellence.

6. The Future of API Gateways

The digital landscape is in constant flux, driven by emerging technologies and evolving architectural paradigms. The API Gateway, as a foundational element of modern distributed systems, is likewise undergoing continuous evolution, adapting its capabilities to meet new demands and integrate with innovative solutions. Its future trajectory points towards greater intelligence, deeper integration, and a more pervasive role in the edge-to-cloud continuum.

6.1 AI Integration: Smart Gateways

The rise of Artificial Intelligence and Machine Learning (AI/ML) is poised to fundamentally transform the capabilities of API Gateways. We can expect to see gateways becoming "smarter" through:

- Intelligent Routing and Traffic Management: AI algorithms can analyze real-time traffic patterns, backend service health, and historical performance data to dynamically optimize routing decisions, predict congestion, and even reroute traffic to prevent failures before they occur. This goes beyond static load balancing to truly adaptive traffic steering.

- Anomaly Detection and Predictive Analytics: ML models can identify unusual API usage patterns (e.g., sudden spikes, atypical access times) that might indicate a security breach, a DDoS attack, or an emerging performance issue. Predictive analytics can forecast future traffic loads, enabling proactive scaling of gateway resources or backend services.

- Automated Policy Enforcement: AI can assist in dynamically adjusting rate limits, security policies, and even authentication challenges based on contextual risk assessments, rather than rigid, predefined rules.

- Enhanced API Security: AI-powered WAFs may help detect zero-day exploits and sophisticated attacks by analyzing behavior and flagging subtle deviations from normal patterns, offering a more adaptive defense against evolving threats.

- Automated A/B Testing and Rollouts: AI can intelligently manage staged rollouts of new API versions, automatically monitoring key performance indicators (KPIs) and rolling back if negative impacts are detected.
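Production anomaly detection would rely on trained models. The core idea, comparing live traffic against a learned baseline, can be shown with a much simpler statistical stand-in. This sketch flags a per-minute request count whose z-score against recent history exceeds a threshold. The threshold of 3.0 and the sample numbers are illustrative assumptions, and the technique itself is plain statistics rather than machine learning.

```python
from __future__ import annotations

import statistics


def is_anomalous(baseline: list[float], observed: float,
                 threshold: float = 3.0) -> bool:
    """Flag `observed` if it lies more than `threshold` sample standard
    deviations away from the mean of `baseline`."""
    if len(baseline) < 2:
        return False  # too little history to estimate spread
    mean = statistics.fmean(baseline)
    stdev = statistics.stdev(baseline)
    if stdev == 0:
        return observed != mean  # flat baseline: any change is unusual
    return abs(observed - mean) / stdev > threshold
```

For example, with a history of `[100, 102, 98, 101, 99]` requests per minute, a reading of 103 passes while a sudden 400 is flagged.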

Products like APIPark are already at the forefront of this trend, positioning themselves as open-source AI Gateways. Their ability to quickly integrate 100+ AI models with a unified management system for authentication and cost tracking, and to offer a unified API format for AI invocation, demonstrates a clear direction towards AI-centric API management. Furthermore, the ability to encapsulate prompts into REST APIs points to a future where the gateway itself becomes a platform for easily creating and exposing AI-powered microservices.

6.2 Serverless Gateways & Event-Driven Architectures

The adoption of serverless computing is accelerating, with functions-as-a-service (FaaS) becoming a popular choice for building highly scalable, event-driven microservices. API Gateways are adapting to this paradigm:

- Direct Integration with Serverless Functions: Cloud-native API Gateways (e.g., AWS API Gateway) integrate tightly with serverless platforms (e.g., AWS Lambda), allowing APIs to trigger functions directly without traditional backend servers.

- Event-Driven Routing: Beyond simple HTTP routing, gateways are evolving to handle event-driven interactions, acting as event brokers or connecting to message queues/streaming platforms (e.g., Kafka, Kinesis) to process and route asynchronous events.

- Cost Efficiency: Serverless gateways offer a pay-per-execution model, aligning costs directly with usage and eliminating idle resource charges.

This shift moves the gateway beyond merely forwarding HTTP requests to orchestrating a broader range of real-time events and computations, blurring the lines between API management and event streaming.
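Event-driven routing can be sketched as a gateway that turns a request into an event instead of calling a backend synchronously. It publishes the event to a topic chosen from a routing table and returns `202 Accepted`. `InMemoryBroker` is a hypothetical stand-in for a platform such as Kafka or Kinesis, and the topic names are invented for the example. Note that this stand-in delivers events synchronously, whereas a real broker decouples producers and consumers in time.

```python
from __future__ import annotations

import json
import uuid
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class InMemoryBroker:
    """Hypothetical stand-in for a message broker: topic -> subscriber callbacks."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        for handler in self._subscribers[topic]:
            handler(event)


# Illustrative routing table: (HTTP method, path) -> topic.
EVENT_ROUTES = {
    ("POST", "/orders"): "orders.created",
    ("POST", "/payments"): "payments.requested",
}


def handle_request(broker: InMemoryBroker, method: str, path: str,
                   body: str) -> tuple[int, dict]:
    topic = EVENT_ROUTES.get((method, path))
    if topic is None:
        return 404, {"error": "no route"}
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return 400, {"error": "invalid JSON"}
    event = {"id": str(uuid.uuid4()), "topic": topic, "payload": payload}
    broker.publish(topic, event)
    # The client receives an acknowledgement, not the processing result.
    return 202, {"event_id": event["id"]}
```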

6.3 Edge Computing & IoT: Gateways Closer to the Data

With the proliferation of IoT devices and the demand for real-time processing, API Gateways are extending their presence closer to the data source, moving from centralized data centers to the network edge:

- Edge Gateways: Deploying lightweight API Gateways on edge devices or local networks enables data processing, filtering, and aggregation closer to where the data is generated (e.g., smart factories, autonomous vehicles). This reduces latency, conserves bandwidth, and enhances privacy.

- Offline Capabilities: Edge gateways can provide limited functionality even when disconnected from the central cloud, buffering data and applying local policies.

- Security for IoT: At the edge, gateways provide crucial security for potentially vulnerable IoT devices, handling authentication, encryption, and anomaly detection before data is sent to the cloud.

This decentralization of the gateway extends its role beyond the traditional datacenter, making it a vital component in increasingly distributed and latency-sensitive environments.
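The offline capability of an edge gateway comes down to store-and-forward buffering. This is a minimal sketch that assumes a hypothetical `send` callable for the upstream link and an in-memory buffer capped at a fixed size, with the oldest readings dropped first. A real edge deployment would likely persist the buffer to local storage so it survives restarts.

```python
from __future__ import annotations

from collections import deque
from typing import Callable


class EdgeBuffer:
    """Store-and-forward buffer for an edge gateway's upstream link."""

    def __init__(self, send: Callable[[dict], None], max_items: int = 1000) -> None:
        self.send = send  # hypothetical upstream sender
        self.online = True
        # A deque with maxlen discards the oldest item when a new one arrives while full.
        self.pending: deque[dict] = deque(maxlen=max_items)

    def submit(self, reading: dict) -> None:
        if self.online:
            self.send(reading)
        else:
            self.pending.append(reading)

    def set_online(self, online: bool) -> None:
        self.online = online
        if online:
            self.flush()

    def flush(self) -> None:
        # Forward the backlog in arrival order.
        while self.pending:
            self.send(self.pending.popleft())
```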

6.4 API Governance and Compliance

As APIs become the bedrock of digital business, regulatory scrutiny and the need for robust governance are intensifying. Future API Gateways will feature more sophisticated capabilities for:

- Automated Compliance Checks: Helping ensure APIs adhere to regulations and industry standards (e.g., GDPR, HIPAA, PCI DSS) through automated policy enforcement and auditing.

- Centralized Policy Management: Providing a unified platform to define, manage, and enforce security, data privacy, and usage policies across a vast array of APIs.

- API Lifecycle Automation: Deeper integration with API design tools, testing frameworks, and deployment pipelines to ensure consistency and quality throughout the API lifecycle, from inception to deprecation.

- Auditability: Enhanced logging and reporting features that provide verifiable, tamper-evident records of API access, usage, and compliance for regulatory purposes.
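Auditability is often backed by a tamper-evident log. One common technique is hash chaining: each record stores the SHA-256 hash of its predecessor, so editing or deleting an earlier entry breaks every later link. The sketch below uses illustrative record fields. It demonstrates the technique only and is not a complete compliance solution.

```python
from __future__ import annotations

import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record: dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes identically.
    data = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(data.encode("utf-8")).hexdigest()


def append(log: list[dict], record: dict) -> None:
    """Append a record linked to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash,
                "hash": _digest(record, prev_hash)})


def verify(log: list[dict]) -> bool:
    """Recompute every link; any edited or removed entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True
```

Hash chaining only detects tampering if the latest hash is also kept somewhere the log writer cannot rewrite, for example by periodically copying it to a separate system. Otherwise an attacker with write access could recompute the entire chain.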

The API Gateway is evolving from a technical intermediary to a strategic platform that underpins the entire digital business. Its future is one of intelligence, ubiquity, and deeper integration, continuing to shape how applications communicate, how data flows, and how businesses leverage their digital assets in an increasingly interconnected world. The journey of demystifying the API Gateway reveals not just its current power, but its boundless potential as the critical orchestrator of tomorrow's digital ecosystem.

Conclusion

In the intricate tapestry of modern distributed systems, the API Gateway stands as an indispensable architectural pattern, a testament to the continuous evolution of software design in response to escalating complexity. We began by tracing its genesis, understanding how the proliferation of microservices and the inherent challenges of direct client-to-service communication necessitated a sophisticated intermediary. What emerged was a component designed not just to simplify, but to fortify and optimize.

Our deep dive into the core responsibilities illuminated the multifaceted nature of the API Gateway. From its fundamental role in routing and intelligent load balancing that ensures scalability and resilience, to its vigilant oversight of authentication, authorization, and security policies that safeguard precious digital assets, the gateway centralizes critical cross-cutting concerns. It acts as a master translator, performing request and response transformations that bridge disparate data formats, and a performance accelerator through judicious caching. Moreover, its pervasive vantage point enables unparalleled monitoring, logging, and analytics, providing the insights crucial for operational health and strategic decision-making. Finally, its role in managing API versioning allows services to evolve gracefully, shielding existing clients from breaking changes and preserving long-term compatibility.

We explored the diverse architectural patterns, from the simplicity of a centralized gateway to the agility of decentralized micro-gateways and the nuanced efficiency of hybrid approaches. The emergence of the sidecar pattern within service meshes further underscores the distribution of gateway-like functionalities closer to the services themselves. We also considered the critical factors in choosing, designing, and operating an API Gateway, emphasizing the importance of performance, security, observability, and robust deployment strategies, exemplified by solutions that prioritize ease of deployment and high performance.

Looking ahead, the future of the API Gateway is vibrant and transformative. It is evolving into a more intelligent entity with AI integration, poised to offer predictive analytics, adaptive security, and smart traffic management. Its growing embrace of serverless and event-driven architectures positions it as a key orchestrator of asynchronous processes, while its expansion into edge computing and IoT highlights its increasing ubiquity. Ultimately, the API Gateway is transcending its technical role to become a strategic platform for API governance, compliance, and monetization, firmly embedding itself as the indispensable nerve center for the digital enterprise.

To demystify the API Gateway is to recognize its profound impact on building secure, scalable, and manageable applications in the age of microservices. It is not merely a tool, but a fundamental paradigm shift in how we conceive and execute our digital strategies, promising continued innovation and simplification in an ever more complex digital world.

Frequently Asked Questions (FAQs)

1. What is an API Gateway, and why is it essential for modern architectures? An API Gateway is a central entry point for all client requests into a microservices-based application. It acts as a proxy, routing requests to the appropriate backend services while also handling cross-cutting concerns like authentication, authorization, rate limiting, and caching. It's essential because it simplifies client applications, enhances security by consolidating enforcement points, improves performance and scalability, and provides centralized observability, all of which are critical for managing the complexity of distributed systems.

2. What are the key benefits of using an API Gateway? The primary benefits include improved security through centralized authentication and authorization, enhanced performance via caching and load balancing, simplified client-side development by abstracting backend complexities, greater scalability due to efficient traffic management, and better observability through aggregated monitoring and logging. It also facilitates easier API versioning and can act as an enforcement point for service level agreements (SLAs).

3. How does an API Gateway differ from a traditional Load Balancer or a Service Mesh? While a traditional load balancer distributes traffic across multiple instances of a single service, an API Gateway operates at a higher level, routing requests to different services based on API paths, and performs advanced functions like authentication, request transformation, and rate limiting. A service mesh, conversely, focuses on east-west (service-to-service) communication within a cluster, providing similar cross-cutting concerns (e.g., traffic management, observability) but primarily for internal service interactions, whereas an API Gateway typically handles north-south (external client to internal service) traffic. They are often complementary.

4. What are some common challenges in implementing and managing an API Gateway? Common challenges include potential for a single point of failure (if not designed for high availability), becoming a performance bottleneck (if not adequately scaled), managing complex configurations, ensuring consistent security policies across a large number of APIs, and integrating with diverse backend services. Choosing the right API Gateway solution and implementing robust operational best practices (like CI/CD, monitoring, and capacity planning) are crucial to overcome these challenges.