Caching vs. Stateless Operation: A Deep Dive for Optimal Performance

The relentless pursuit of optimal performance stands as a cornerstone in the development of modern software systems. In an era where milliseconds dictate user satisfaction and business success, architects and developers are constantly seeking strategies to build applications that are not just functional but also lightning-fast, highly scalable, and resilient. Among the myriad of techniques available, two fundamental paradigms — caching and stateless operation — emerge as powerful, yet often misunderstood, allies in this quest. While seemingly disparate, often discussed in opposition, a deeper understanding reveals that these two approaches, when wielded effectively, can synergistically drive profound improvements in system efficiency and responsiveness.

This article embarks on an exhaustive exploration of caching and stateless operation, dissecting their core principles, advantages, challenges, and the contexts in which each excels. We will delve into how these concepts manifest across various layers of a distributed architecture, paying particular attention to their critical role within gateway and api gateway implementations. Furthermore, we will examine their growing importance in the burgeoning field of artificial intelligence, especially concerning the emerging needs of an AI Gateway. By the end of this deep dive, the goal is to equip the reader with a nuanced perspective, enabling informed architectural decisions that balance performance, consistency, and complexity in the pursuit of truly optimal systems.

The Foundations of Performance Optimization: Why Speed Matters

In today's hyper-connected world, performance is no longer merely a desirable trait; it is a fundamental expectation. Users, accustomed to instantaneous responses from their digital interactions, exhibit dwindling patience for slow-loading pages or sluggish application behavior. This user-centric imperative directly translates into significant business implications, impacting everything from customer retention and conversion rates to operational costs and competitive positioning.

User Experience and Engagement: The most immediate impact of performance is on the end-user experience. A system that responds quickly feels responsive, reliable, and professional. Conversely, even minor delays can lead to frustration, reduced engagement, and ultimately, user abandonment. Studies consistently show a direct correlation between page load times and bounce rates, underscoring how performance directly influences the usability and perceived quality of a service. For instance, an e-commerce platform with a two-second delay might see a significant drop in conversion rates, directly affecting revenue.

Scalability and Resilience: Beyond individual user interactions, performance is intrinsically linked to a system's ability to scale. An inefficient system will buckle under increased load much faster than an optimized one, requiring disproportionately more resources (servers, bandwidth, database connections) to handle growth. Good performance optimization often involves reducing the workload on critical components, thereby extending their capacity and enabling horizontal scaling. Moreover, a high-performing system tends to be more resilient; it can better absorb unexpected traffic spikes or transient failures without collapsing, maintaining availability even under stress.

Operational Costs: Resource consumption directly translates to operational costs. In cloud-native environments, where resources are billed on a pay-as-you-go model, inefficiencies can lead to unexpectedly high infrastructure expenses. Optimizing performance through techniques like caching or designing for statelessness can significantly reduce the CPU, memory, storage, and network bandwidth required to serve a given number of requests, leading to substantial cost savings over time. This is particularly relevant for high-traffic api gateway deployments that process millions of requests daily.

Competitive Advantage: In crowded markets, performance can be a key differentiator. A faster, more reliable service can attract and retain users who might otherwise gravitate towards competitors. This is especially true for APIs, where developers choose providers based on ease of integration, reliability, and crucially, response times. An API Gateway that consistently delivers low-latency responses will be favored over one that introduces noticeable delays.

Key Performance Metrics: To effectively optimize, one must first define and measure success. Key performance indicators (KPIs) include:

- Latency: The time taken for a single request to travel from the client, be processed by the server, and return a response. Often measured in milliseconds.

- Throughput: The number of requests or transactions a system can process per unit of time (e.g., requests per second, TPS).

- Response Time: The total time from a user initiating a request until they receive the complete response. This often includes network latency, server processing time, and client-side rendering.

- Availability: The percentage of time a system is operational and accessible to users. While not a direct performance metric, highly performant systems are often more available due to better resource utilization and resilience.

- Error Rate: The percentage of requests that result in an error. While not directly performance, high error rates can mask underlying performance issues or lead to poor user experience.

Understanding these metrics provides a quantifiable basis for evaluating the impact of architectural decisions, including the strategic adoption of caching and stateless operations. These two approaches offer distinct yet complementary avenues for improving these metrics, each with its own set of trade-offs and ideal use cases.

Caching: The Art of Remembering for Speed

Caching is a fundamental optimization technique that revolves around the simple yet profound idea of remembering computation results or frequently accessed data so that subsequent requests for the same information can be served more quickly, without having to re-compute or re-fetch it from its original source. It capitalizes on the principles of temporal locality (data that has been accessed recently is likely to be accessed again soon) and spatial locality (data that is near recently accessed data is also likely to be accessed soon). By storing copies of data closer to the consumer or the processing unit, caching significantly reduces latency, decreases the load on backend systems, and ultimately enhances overall system throughput.

What is Caching? Definition and Core Principles

At its core, a cache is a temporary storage area that holds copies of data. When a request for data arrives, the system first checks the cache. If the data is found in the cache (a "cache hit"), it is returned immediately, bypassing the slower, more resource-intensive process of retrieving it from its original source (e.g., a database, an external API, or a complex computation). If the data is not in the cache (a "cache miss"), the system retrieves it from the source, serves it to the requester, and then stores a copy in the cache for future use.

The efficacy of caching hinges on several factors:

- Hit Rate: The percentage of requests that result in a cache hit. A higher hit rate indicates a more effective cache.

- Latency Reduction: The difference in time taken to serve data from the cache versus the original source.

- Cache Size: The amount of data the cache can hold. Larger caches can store more data but require more memory.

- Eviction Policy: The strategy used to remove data from the cache when it reaches its capacity (e.g., Least Recently Used (LRU), Least Frequently Used (LFU)).

Types of Caching: A Layered Approach

Caching can be implemented at various layers of a system, forming a hierarchy where data moves closer to the user as it becomes more frequently accessed or predicted to be needed.

- Client-Side Caching (Browser Cache): This is the closest cache to the end-user. Web browsers store copies of static assets (HTML, CSS, JavaScript, images) and even API responses based on HTTP headers (e.g.,

Cache-Control,Expires). This significantly speeds up subsequent visits to the same website by reducing the need to download assets from the server. For dynamic content, browser caches can also store responses, provided the server explicitly allows it. - CDN Caching (Content Delivery Network): CDNs are geographically distributed networks of servers that cache static and sometimes dynamic content at "edge locations" close to users. When a user requests content, it's served from the nearest CDN node, drastically reducing network latency and offloading traffic from the origin server. CDNs are indispensable for global applications, ensuring fast content delivery irrespective of the user's location. They act as a massive, distributed

gatewayfor content. - Reverse Proxy/Load Balancer Caching: A reverse proxy or

API Gatewaycan cache responses before they ever reach the backend application servers. This is particularly effective for highly accessed public APIs or static content that would otherwise be served by the application. This layer can cache entire HTTP responses, significantly reducing the load on upstream services and acting as a primary line of defense against traffic spikes. AnAPI Gatewaycan serve as an intelligent caching layer, storing common responses to alleviate stress on origin servers. - Application-Level Caching: Within the application code itself, developers can implement caches for frequently used objects, database query results, or computationally expensive function outputs. This can be an in-memory cache (e.g., using a hash map or specialized libraries like Caffeine/Ehcache) or an embedded cache. This cache is localized to a specific application instance.

- Distributed Caching: As applications scale horizontally across multiple instances, local application caches become inconsistent. Distributed caches, such as Redis or Memcached, provide a centralized, shared cache that can be accessed by all application instances. They are designed for high throughput and low latency, offering features like data persistence, replication, and clustering. These are critical for

api gatewaydeployments and microservices architectures where state needs to be shared across many stateless instances, allowing them to collectively benefit from cached data. For anAI Gateway, a distributed cache could store results of common AI model invocations, prompt variations, or even pre-computed embeddings. - Database Caching: Databases often have their own internal caching mechanisms (e.g., buffer pools, query caches) to store frequently accessed data blocks or query results. ORMs (Object-Relational Mappers) can also implement caching layers to store entity objects, reducing direct database calls.

Benefits of Caching

The strategic deployment of caching offers a multitude of benefits that directly contribute to superior system performance:

- Reduced Latency: By serving data from a cache that is physically or logically closer to the requester, the round-trip time is dramatically reduced. This leads to faster response times for individual requests.

- Increased Throughput: With a significant portion of requests being handled by the cache, the backend systems are freed up to process other, more complex requests. This allows the overall system to handle a higher volume of traffic without degrading performance.

- Lower Backend Load: Caching acts as a buffer, shielding databases and application servers from the full onslaught of requests. This reduces CPU, memory, and I/O utilization on these critical components, allowing them to operate more efficiently and reliably.

- Cost Savings: By reducing the load on backend infrastructure, caching can lead to substantial cost savings. Fewer database transactions, less server processing, and lower bandwidth usage directly translate to reduced infrastructure costs, especially in cloud environments where resource consumption is directly billed.

- Improved User Experience: Faster loading times and more responsive applications directly translate to happier users, leading to higher engagement, better conversion rates, and increased customer satisfaction.

- Enhanced Resilience: By reducing the dependency on the origin server for every request, caching can make the system more resilient to backend failures. If the primary database or application server experiences an outage, the cached data can still be served for a period, maintaining partial service availability.

Challenges and Considerations for Caching: The Hard Problems

Despite its immense benefits, caching introduces its own set of complexities and challenges that, if not carefully managed, can negate its advantages or even introduce new problems.

- Cache Invalidation: The "Hardest Problem in Computer Science": This phrase, popularized by computer scientist Phil Karlton, highlights the difficulty of ensuring that cached data remains fresh and consistent with the source of truth.

- Time-To-Live (TTL): The simplest strategy. Data is stored for a fixed duration and then automatically expires. After expiration, the next request will trigger a fetch from the original source. While easy to implement, it can lead to stale data if the source changes before the TTL expires, or inefficient use of cache if the data is rarely accessed within its TTL.

- Write-Through: Data is written to both the cache and the backing store simultaneously. This ensures consistency but adds latency to write operations.

- Write-Back: Data is written only to the cache initially, and then asynchronously written to the backing store at a later point. This offers low write latency but introduces the risk of data loss if the cache fails before data is persisted.

- Cache-Aside (Lazy Loading): The application is responsible for reading and writing data to the cache. On a read, it checks the cache first. On a write, it updates the backing store and then invalidates or updates the cache. This is a common and flexible pattern.

- Event-Driven Invalidation: When the source data changes, an event is published (e.g., via a message queue), and the cache listener invalidates the corresponding entry. This offers strong consistency but adds complexity with eventing infrastructure.

- Cache Coherency: In distributed systems with multiple caches (e.g., a CDN cache, a

gatewaycache, and application caches), ensuring all copies of data are consistent becomes a significant challenge. Mechanisms like distributed locks, cache invalidation messages, or versioning are required.

- Stale Data: The inherent trade-off in caching is between performance and data freshness. Tolerating some degree of stale data is often acceptable for read-heavy, non-critical information (e.g., news feeds, product descriptions). However, for sensitive or critical data (e.g., financial transactions, inventory levels), stale data can lead to serious errors. Architects must carefully assess the acceptable level of staleness for different data types.

- Cache Warm-up: When a cache is initially deployed or restarted, it's empty. Requests will result in cache misses, potentially overwhelming the backend system until the cache is populated. "Cache warm-up" strategies involve pre-populating the cache with frequently accessed data, either proactively or by simulating user requests.

- Memory Management and Eviction Policies: Caches have finite capacity. When full, a strategy must be in place to decide which data to remove to make space for new data. Common policies include:

- LRU (Least Recently Used): Evicts the item that has not been accessed for the longest time. Highly effective for temporal locality.

- LFU (Least Frequently Used): Evicts the item with the lowest access count.

- FIFO (First-In, First-Out): Evicts the item that was added first. Simplest but often least efficient.

- Random: Evicts a random item.

- Single Point of Failure (for non-distributed caches): A local in-memory cache within a single application instance can become a single point of failure. If that instance crashes, the cache is lost, leading to increased load on backend systems during recovery. Distributed caches mitigate this by offering replication and fault tolerance.

- Complexity: Adding a caching layer introduces additional complexity to the system architecture. Developers must manage cache keys, expiration policies, invalidation logic, and potentially a separate caching infrastructure (like Redis clusters). This overhead must be justified by the performance gains. Debugging issues with cached data can also be more challenging.

Caching in the Context of Gateways and AI Gateways

API Gateway deployments are prime candidates for leveraging caching due to their position as the first point of contact for external traffic.

- Edge Caching at the Gateway Level: An

API Gatewaycan cache responses for common API calls, reducing the burden on backend microservices or monolithic applications. For instance, if an API provides a list of product categories that changes infrequently, thegatewaycan cache this response for minutes or hours, serving millions of requests without ever touching the backend. This offloads significant traffic from the core services. - Caching Authentication Tokens and Authorization Decisions: Repeated validation of authentication tokens (e.g., JWTs) can be computationally expensive. An

API Gatewaycan cache the results of token validation or authorization decisions for a short period, significantly accelerating subsequent requests from the same authenticated user. This means thegatewaycan quickly determine if a request is valid and authorized without needing to re-consult an identity provider or authorization service for every single API call. - Rate Limit Data: The

gatewayoften enforces rate limits. Storing rate limit counters in a fast, in-memory distributed cache (like Redis) allows for highly efficient and synchronized rate limiting across multiplegatewayinstances. - Configuration Caching: An

API Gatewayneeds access to its routing rules, policies, and service definitions. Caching these configurations in memory allows for extremely fast routing decisions without frequent trips to a configuration database or service discovery system.

For an AI Gateway, caching takes on an even more specialized role:

- Caching AI Model Responses: Many AI models, especially large language models (LLMs) or image recognition models, are computationally intensive. If a common prompt or input is received repeatedly, an

AI Gatewaycould cache the model's response. For instance, if users frequently ask for "summarize this paragraph" with the same paragraph, theAI Gatewaycould return the cached summary without re-invoking the LLM. - Caching Intermediate AI Processing Results: Complex AI workflows might involve multiple steps. Caching intermediate results (e.g., sentiment analysis score for a given text, entity extraction results) can prevent redundant computation in subsequent stages or for related queries.

- Caching Model Embeddings or Pre-computed Features: For search or recommendation systems powered by AI, pre-computed embeddings for frequently queried items or user profiles can be cached to accelerate vector similarity searches.

- Prompt Caching: While the model output might change based on dynamic input, the structured part of a prompt or the "system instructions" might be static. An

AI Gatewaymight cache parsed prompt structures to speed up request preparation.

The decision to cache and what to cache within an AI Gateway requires careful consideration of the AI model's determinism, the cost of inference, the frequency of identical inputs, and the acceptable latency vs. freshness trade-off for AI-driven insights.

Stateless Operation: The Power of Forgetting for Scale

In stark contrast to caching's philosophy of remembering, stateless operation embraces the power of forgetting. A stateless system, or a stateless component within a larger system, is one that does not store any client-specific context or session data between requests. Each request from a client to a server must contain all the information necessary for the server to fulfill that request, without relying on any prior interactions or stored state on the server itself. The server processes the request based solely on the data provided in that single request.

What is Statelessness? Definition and Core Principles

The fundamental principle of statelessness dictates that every request is an independent, self-contained transaction. The server treats each request as if it were the very first, completely oblivious to any previous requests from the same client. This means:

- No Session State: The server does not maintain session objects, session IDs, or any other form of client-specific state in its memory or on its local disk between requests.

- Self-Contained Requests: All necessary information (e.g., user identity, authentication credentials, request parameters, context) must be included with each request.

- Idempotency (often): While not strictly required, stateless operations often lend themselves to idempotency, meaning performing the same operation multiple times with the same inputs will produce the same result without unintended side effects.

Contrast this with stateful systems, where servers remember client interactions. For instance, a traditional web application often stores user session data (e.g., logged-in status, shopping cart contents) on the server. If the user makes a subsequent request, the server uses this stored session data to understand the context of the new request.

Characteristics of Stateless Systems

Stateless systems typically exhibit several defining characteristics:

- No Sticky Sessions: Because no client state is held on the server, there's no need to route subsequent requests from the same client to the same server instance (i.e., "sticky sessions"). Any available server can handle any request.

- Horizontal Scalability: This is perhaps the most significant advantage. To scale a stateless service, one simply adds more identical instances. Load balancers can then distribute requests evenly across these instances without concern for session affinity.

- Fault Tolerance/Resilience: If a server instance fails, it simply stops processing requests. Other instances can pick up the slack immediately, as no client state is lost within the failed server. Clients might need to retry a request, but they won't lose their entire session context.

- Simplicity of Server Logic (for state management): Developers don't need to write complex code to manage, synchronize, or persist session state on the server. The server's logic focuses purely on processing the immediate request.

- RESTful Principle: Representational State Transfer (REST), the architectural style for distributed hypermedia systems, explicitly mandates that communication between client and server must be stateless.

Benefits of Statelessness

The adoption of stateless principles yields substantial architectural advantages, primarily centered around scalability and resilience:

- Effortless Horizontal Scalability: This is the paramount benefit. When servers don't hold state, any server can handle any request. This makes adding or removing server instances trivial, allowing systems to scale out or in dynamically in response to fluctuating load. A load balancer can distribute traffic uniformly across all available instances, maximizing resource utilization without complex session management. For an

API Gatewaythat might need to handle bursts of traffic, statelessness is foundational to its ability to scale quickly. - Enhanced Reliability and Resilience: In a stateless architecture, the failure of an individual server instance has minimal impact. There's no session data tied to that specific server to be lost, corrupted, or migrated. Other healthy instances can immediately take over, ensuring continuous service. This dramatically improves fault tolerance and overall system uptime.

- Simplified Load Balancing: Without the need for sticky sessions, load balancing becomes much simpler. Basic algorithms like round-robin or least connections can be used effectively, as any server is equally capable of serving any request. This reduces the complexity of

gatewayor load balancer configurations. - Simplified Deployment and Management: Deploying new versions or scaling up/down is easier in stateless systems. Instances can be spun up or down without worrying about disrupting active user sessions on specific servers. This contributes to faster continuous integration/continuous deployment (CI/CD) pipelines.

- Improved Resource Utilization: Because requests can be distributed more evenly and any server can handle any request, resources across the server pool are generally utilized more efficiently. There's less chance of "hot spots" where some servers are overloaded while others are idle because of sticky session requirements.

Challenges and Considerations for Stateless Operation

While statelessness offers significant benefits, it also presents its own set of challenges and trade-offs that require careful architectural planning:

- Increased Request Size/Overhead: Since each request must carry all necessary context, the size of individual requests can increase. For example, instead of a small session ID, a stateless approach might require sending a full JSON Web Token (JWT) containing user claims with every request. While often negligible for small pieces of data, for very chatty APIs or large contextual payloads, this could lead to increased network bandwidth usage and serialization/deserialization overhead.

- Client-Side or External State Management: The state doesn't disappear; it merely shifts location. In stateless systems, state management responsibilities typically fall to:

- The Client: The client (e.g., browser, mobile app) is responsible for maintaining its own state and including it in requests. This can introduce security concerns if sensitive data is stored client-side without proper encryption or validation.

- A Separate, Shared State Store: A dedicated, highly available, and potentially distributed data store (e.g., a database, a distributed cache like Redis, a key-value store) is used to store session-like data, which the stateless services can query when needed. This centralizes state management but introduces a dependency on an external service, which then becomes a critical component that needs its own high availability and scalability.

- Security Implications: When state is transferred via tokens (like JWTs) in stateless communication, careful attention must be paid to token security. Tokens must be signed (to prevent tampering), potentially encrypted (for sensitive data), and have appropriate expiration times. Revoking compromised tokens can be challenging in a purely stateless system, often requiring external blacklist mechanisms or short TTLs.

- Developer Experience/Complexity for Client-Side State: Developers building client applications might find it more complex to manage application state and ensure it's correctly passed with every request, especially for complex user flows.

- Potential for Performance Overhead (for certain workloads): While generally good for scalability, the need to re-transmit and re-parse context with every request can introduce minor performance overhead compared to a stateful system where context is readily available in memory. However, this is usually outweighed by the scalability benefits.

Statelessness in the Context of Gateways and AI Gateways

Stateless design is almost a de facto standard for robust and scalable API Gateway implementations.

- Core Principle for API Gateways: An

API Gatewayis positioned at the edge of a system, handling a massive volume of requests. Its primary functions – routing, authentication, authorization, rate limiting – benefit immensely from a stateless design. When agatewayreceives a request, it should perform its logic (e.g., validate a token, check rate limits, apply routing rules) based solely on the information in that request. It should not rely on internal state tied to a specific client session. This allows any instance of thegatewayto handle any request, making it incredibly scalable and resilient. - JWTs for Apparent State: JSON Web Tokens (JWTs) are a common pattern that enables stateless communication while still providing clients with an "apparent" session. After authentication, an identity provider issues a JWT to the client. This token contains claims about the user (e.g., user ID, roles, expiration time). The client then sends this JWT with every subsequent request. The

API Gatewaycan cryptographically verify the JWT's signature (without needing to contact the identity provider for every request), extract the claims, and make authorization decisions. Thegatewayitself remains stateless; it doesn't store the session, but rather trusts the self-contained token. - Distributed Rate Limiting: While rate limit counters are a form of state, a truly stateless

gatewaywould externalize this state to a distributed cache (like Redis). Thegatewayinstances themselves remain stateless; they simply query and update the shared cache for rate limit data. This pattern exemplifies how stateless services often rely on external state stores. - Configuration as External State: The routing rules and policies that an

API Gatewayapplies are essentially configuration state. A statelessgatewaywill load this configuration at startup or fetch it from a configuration service. It doesn't modify this configuration internally based on client interactions. This separation allows thegatewayinstances to remain identical and interchangeable.

For an AI Gateway, statelessness is equally critical, particularly for scalability of AI inference.

- Distributing AI Inference Tasks: AI model inference can be computationally intensive and vary widely in duration. An

AI Gatewaythat is stateless can easily distribute AI requests across a pool of AI model servers (e.g., GPU instances). If a request takes a long time, thegatewaycan simply forward it and move on to the next, without holding up resources tied to a specific client session. This ensures that theAI Gatewayitself does not become a bottleneck. - Handling Bursts of AI Queries: The demand for AI inference can be spiky. A stateless

AI Gatewaycan rapidly scale up its backend AI model instances to handle sudden bursts of queries, then scale down when demand subsides, optimizing resource usage and cost. - Unified API Format and Model Agnosticism: A product like APIPark, an open-source

AI gatewayandAPI management platform, emphasizes a unified API format for AI invocation. This approach naturally encourages statelessness. Thegatewaytransforms client requests into a standardized format for the AI model and routes it. It doesn't need to remember which specific client asked for which specific model configuration; each request is complete in itself, allowingAPIParkto effectively manage, integrate, and deploy AI services at scale.

The Synergistic Dance: Caching and Statelessness in Harmony

At first glance, caching and stateless operation might appear to be opposing forces. Caching explicitly involves remembering data, while statelessness emphasizes forgetting. However, this superficial dichotomy belies a profound truth: these two paradigms are not mutually exclusive but rather deeply complementary. When skillfully combined, they form a powerful architectural duo, delivering optimal performance, scalability, and resilience in complex distributed systems. The art lies in understanding when and where to apply each, allowing them to enhance rather than conflict with one another.

Are They Mutually Exclusive? No, They Are Complementary

The key to their coexistence lies in perspective. Statelessness primarily concerns the server's internal state regarding client sessions. A stateless server does not store contextual information that ties a client's sequence of requests together. Caching, on the other hand, is about data availability and access speed. It concerns storing copies of data that might be expensive to retrieve or compute, regardless of whether that data is client-specific or general.

A server can be stateless concerning client sessions while simultaneously leveraging caching for shared, immutable, or frequently accessed data. For instance, an API Gateway can process each client request independently (stateless), using JWTs to authenticate without storing session data on the gateway itself. Concurrently, that same API Gateway can cache the responses of a backend service that provides static configuration data, or the results of token validation checks for performance (caching). The gateway isn't storing client state, but it is remembering frequently accessed system data or shared resource data.

When to Cache in a Stateless World

In a world where statelessness reigns supreme for scalability, caching becomes particularly valuable for specific scenarios:

- Read-Heavy Operations with Stable Data: This is the classic use case for caching. If an API endpoint or a backend service is primarily read-oriented and the data it provides changes infrequently, caching its responses dramatically reduces the load on the source system and improves response times. Examples include product catalogs, news articles, public profiles, or configuration data.

- Expensive Computations: If processing a request involves heavy computation (e.g., complex database queries, report generation, AI model inference), caching the results for identical inputs can save significant computational resources and time. An

AI Gatewaywill find immense value in caching common AI model predictions if they are deterministic and expensive to re-compute. - Authentication and Authorization Results: While the

API Gatewayitself might be stateless, validating authentication tokens (e.g., JWTs) can involve cryptographic checks or database lookups (for revocation lists). Caching the results of these validations for a short period can significantly speed up the authorization process for subsequent requests from the same token. This allows thegatewayto make quick, trusted decisions without repeated overhead. - Rate Limit Counters and Quotas: Although these represent a form of state, they are usually shared system-wide. Storing them in a fast, distributed cache (e.g., Redis) allows multiple stateless

gatewayinstances to coordinate rate limiting policies without introducing sticky sessions or server-specific state. Thegatewayqueries the cache and remains stateless regarding its internal processing. - Microservice Configuration: In a microservices architecture, each service is often stateless. However, these services need to retrieve their configuration (e.g., database connection strings, feature flags) at startup or periodically. Caching this configuration locally (or at the

gatewaylevel) prevents constant round-trips to a configuration service.

Architectural Patterns Combining Both

The most robust and high-performing systems often employ sophisticated architectural patterns that strategically combine caching and statelessness:

- Stateless API Gateway with Distributed Caching for Backend Services:

- Architecture: An

API Gateway(e.g., APIPark) is deployed as a stateless component, capable of horizontal scaling and handling requests without session affinity. - Caching Layer: Behind the

gateway, or even within thegatewayfor certain responses, a distributed cache (like Redis) is used. - Function: The

gatewayforwards requests to stateless backend microservices. However, for frequently accessed data that these microservices provide, thegatewayor the microservices themselves might interact with the distributed cache. For example, thegatewaymight cache API responses, while a microservice might cache database query results in Redis. - Benefit: This setup ensures high availability and scalability at the

gatewaylevel, while also reducing the load on databases and backend services through caching.

- Architecture: An

- CDN + Stateless API Gateway + Stateless Microservices:

- Architecture: Content Delivery Networks (CDNs) provide edge caching for static assets and often for dynamic API responses at geographically distributed points. Requests then hit a stateless

API Gateway, which routes them to stateless backend microservices. - Caching Layer: Multiple layers of caching are employed: CDN at the outermost edge, potentially

API Gatewaycaching for certain API responses, and application-level or distributed caching within the microservices. - Function: Static content is served directly from the CDN. Dynamic requests pass through the CDN (which might also cache some API responses), then to the stateless

API Gatewayfor routing, authentication, and policy enforcement, and finally to the appropriate stateless microservice. - Benefit: Achieves extremely low latency for global users due to multi-layered caching, while maintaining the scalability and resilience benefits of a fully stateless backend.

- Architecture: Content Delivery Networks (CDNs) provide edge caching for static assets and often for dynamic API responses at geographically distributed points. Requests then hit a stateless

- Serverless Functions with External State Stores and Caches:

- Architecture: Serverless functions (e.g., AWS Lambda, Azure Functions) are inherently stateless; each invocation is independent. They rely on external services for persistence and state.

- Caching Layer: These functions often interact with managed databases (e.g., DynamoDB, Cosmos DB) and managed caching services (e.g., ElastiCache, Azure Cache for Redis) to store and retrieve data efficiently.

- Function: A serverless function performs its task based on the input event and any data it retrieves from external state stores or caches. It doesn't maintain any state between invocations.

- Benefit: Extreme scalability, pay-per-execution cost model, and simplified operational overhead, underpinned by the combination of stateless compute and managed state/caching.

Choosing the Right Approach: A Decision Matrix

The decision of where to cache and where to remain strictly stateless is not one-size-fits-all. It requires careful consideration of various factors:

| Feature/Consideration | Primarily Stateless Operations | Strategic Caching |

|---|---|---|

| Primary Goal | Maximize scalability, resilience, simplify load balancing. | Reduce latency, increase throughput, offload backend systems. |

| Data Volatility | Ideal for highly volatile, frequently changing data (e.g., shopping cart updates, transaction processing) where freshness is paramount. | Best for immutable, slowly changing, or static data where some staleness is acceptable. |

| Read/Write Patterns | Suitable for balanced read/write ratios or write-heavy operations. | Highly advantageous for read-heavy workloads. |

| Consistency Requirements | Strong consistency is easier to achieve as every request goes to the source of truth (or a single source of truth external state store). | Eventual consistency is often tolerated. Strong consistency with caching is complex and introduces significant overhead (e.g., distributed invalidation). |

| Scalability | Enables horizontal scaling with ease; simply add more instances. | Enhances scalability by offloading backend, but the cache itself (especially if distributed) needs to be scalable and highly available. |

| Complexity | Simplifies server-side logic by removing state management concerns. Shifts state management to client or external state store. | Adds complexity: cache invalidation, coherency, eviction policies, infrastructure for distributed caches. Requires careful design and monitoring. |

| Network Overhead | Potentially higher request size due to sending full context (e.g., JWT). | Lower network overhead for cache hits, as data is served locally or from a closer node. |

| Security | Requires careful handling of tokens (signing, encryption, expiration, revocation) if sensitive data is transmitted with each request. | Requires careful management of sensitive data in cache; ensure proper encryption at rest/in transit for cache entries. Cache invalidation is crucial for security-sensitive data. |

| Best Use Cases | Transaction processing, real-time updates, user authentication/authorization flow (where tokens are stateless but represent state), highly dynamic APIs. Primarily for API Gateway core logic. |

Static content delivery (CDN), frequently accessed API responses, database query results, computationally expensive AI model outputs (for an AI Gateway), authentication token validation results, rate limit counters. |

The most effective architectures are often hybrids, leveraging statelessness for the core processing units (like gateway instances and microservices) to ensure maximum scalability and resilience, while strategically applying caching at various layers (client, CDN, API Gateway, application, distributed cache) for specific data types or computationally intensive results to maximize performance and reduce backend load. This nuanced approach allows systems to achieve both unparalleled speed and robust scalability.

APIPark is a high-performance AI gateway that allows you to securely access the most comprehensive LLM APIs globally on the APIPark platform, including OpenAI, Anthropic, Mistral, Llama2, Google Gemini, and more.Try APIPark now! 👇👇👇

Practical Applications and Real-world Scenarios

To fully appreciate the interplay between caching and stateless operations, it is insightful to examine their application in common real-world scenarios. From consumer-facing applications to intricate backend systems, these two paradigms form the bedrock of high-performing, scalable architectures.

E-commerce Platforms: Balancing Dynamic and Static

E-commerce websites present a classic case study for the combined application of caching and statelessness. These platforms handle a vast array of operations, from browsing static product catalogs to dynamic, user-specific actions like adding items to a cart or processing payments.

- Caching for Product Catalogs and Static Content: Product pages, category listings, static images, CSS, and JavaScript files are often read-heavy and change infrequently. These are ideal candidates for caching. CDNs (Content Delivery Networks) will cache static assets at the edge, drastically speeding up page load times for users worldwide.

API Gatewaysor reverse proxies might cache responses for API endpoints that retrieve product details or category information, reducing the load on the backend product service. This ensures that the most frequently requested data is served with minimal latency. - Statelessness for Cart and Checkout Processes: Conversely, operations involving a user's shopping cart, checkout flow, or order placement are highly dynamic and user-specific. These are typically handled by stateless services. When a user adds an item to their cart, the request is sent to a stateless backend service that updates the cart data, usually stored in a highly available, external database or a distributed key-value store (e.g., Redis). Each subsequent request (e.g., updating quantity, proceeding to checkout) carries the necessary context (e.g., cart ID, user token), allowing any available instance of the stateless service to process it. This ensures that the system can scale effortlessly during peak shopping seasons, as no single server holds critical, transient user state. The

API Gatewayorchestrating these requests remains stateless, simply routing them to the appropriate backend service.

Content Delivery Networks (CDNs): Massive Edge Caching

CDNs are perhaps the most pervasive example of large-scale caching. They are built on the principle of distributed edge caching, delivering content from servers physically closest to the end-user.

- Global Caching Layer: CDNs strategically place cache servers (PoPs – Points of Presence) around the globe. When a user requests a web asset (image, video, script), the request is routed to the nearest PoP. If the content is cached there, it's served immediately, bypassing the origin server entirely.

- Offloading the Origin Server (which is often stateless): The origin server (where the original content resides) benefits immensely. It only needs to serve content for cache misses or for truly dynamic requests that cannot be cached. This allows the origin server, often a stateless web server or

API Gatewayserving dynamic content, to handle a much higher volume of unique requests without being overwhelmed. The CDN essentially acts as a massive, intelligent, and geographically distributedgatewaythat selectively caches content, allowing the backend to remain stateless and highly scalable.

Microservices Architectures: The Stateless Foundation

The microservices architectural style is inherently aligned with stateless principles, particularly for the service instances themselves.

- Stateless Microservice Instances: Each microservice is typically designed to be stateless regarding client sessions. If a user interacts with a "User Profile Service," the service instances don't maintain a session. Instead, they operate on incoming requests, fetching or storing data in a shared, persistent backend (e.g., a database, an object store). This enables independent scaling of each microservice.

- API Gateway as the Orchestrator: An

API Gatewaysits in front of these microservices, acting as the entry point. It receives requests, performs authentication, authorization, rate limiting (often in a stateless manner by externalizing state to distributed caches), and then routes them to the appropriate stateless microservice. - Strategic Caching within Microservices: While the microservice instances are stateless, they can still utilize caching internally. A "Product Service" might cache frequently accessed product details in an in-memory cache or a distributed cache (like Redis) to reduce database load. The caching layer here doesn't introduce client-specific state to the service instance but rather optimizes access to shared, domain-specific data.

The Critical Role of API Gateways

The API Gateway acts as a crucial control point where both caching and statelessness converge to deliver optimal performance and architectural robustness.

- Stateless Core for Routing and Policy Enforcement: The core functionality of an

API Gateway—request routing, authentication, authorization, rate limiting, traffic management—is fundamentally stateless. Eachgatewayinstance operates independently, processing requests based on the incoming request's data (e.g., HTTP headers, body, JWTs) and its loaded configuration. This stateless design is what allowsAPI Gatewaysolutions to achieve immense scalability and high availability, making them resilient to individual instance failures and capable of handling fluctuating traffic. - Strategic Caching for Performance Boosts: While its core is stateless, an

API Gatewayis also an ideal place to implement strategic caching. It can cache responses from slow backend services, authentication token validation results, or frequently accessed internal configurations. This is particularly valuable for public APIs that expose data consumed by many clients. Thegatewayacts as a smart cache at the system's edge, preventing redundant calls to upstream services, thereby reducing their load and improving overall response times for clients.

A concrete example of this balance is seen in products like APIPark. As an open-source AI gateway and API management platform, APIPark is designed for high performance and scalability. Its ability to rival Nginx in performance, achieving over 20,000 TPS with modest hardware, strongly indicates an architecture that leans heavily on stateless operations for its core routing, traffic forwarding, and load balancing capabilities. This inherent statelessness allows APIPark to be deployed in clusters, supporting large-scale traffic and ensuring resilience – any instance can handle any incoming API or AI invocation request.

Simultaneously, APIPark's advanced features suggest areas where intelligent caching would be invaluable. For instance, its capability to integrate 100+ AI models and simplify AI invocation with a unified API format means it's handling complex, potentially expensive AI inference requests. An AI Gateway like APIPark could internally leverage caching for: * Frequent AI Model Responses: If a common prompt or query is sent to an AI model repeatedly, APIPark could cache the model's output, significantly reducing latency and computational cost for subsequent identical requests. * Authentication and Authorization Outcomes: Similar to a standard API Gateway, caching the results of API key validation or user token verification for API access requests within APIPark would accelerate access control decisions. * API and AI Model Configuration: Caching the routing rules, policies, and AI model specifics (e.g., prompt templates, model IDs) allows APIPark to make rapid routing and transformation decisions without constant database lookups.

By combining a stateless core with strategic, intelligent caching for specific data or expensive operations, APIPark demonstrates how these two powerful concepts work in synergy to deliver a high-performance, scalable, and efficient AI Gateway and API management solution. Its detailed API call logging and powerful data analysis also benefit from understanding which operations are hitting caches versus those that are fully processed, informing further optimization.

Advanced Considerations and Future Trends

The landscape of software architecture is constantly evolving, and with it, the sophisticated interplay of caching and statelessness. Emerging technologies and architectural patterns continue to redefine how we approach performance optimization.

Edge Computing and Caching

Edge computing pushes computation and data storage closer to the source of data generation and consumption. This paradigm inherently champions caching. Edge nodes act as local caches, processing requests and storing data locally to minimize latency and bandwidth usage to central cloud data centers. An API Gateway or AI Gateway deployed at the edge would primarily leverage caching for frequently accessed data and AI model inference results relevant to that specific geographic region, while still relying on a stateless core to process individual requests. This multi-layered, geographically distributed caching strategy is crucial for low-latency experiences in IoT, autonomous vehicles, and real-time gaming.

Serverless Architectures and their Inherently Stateless Nature

Serverless functions (FaaS - Function as a Service) are a prime example of inherently stateless compute. Each function invocation is independent, stateless, and short-lived. This simplifies scaling dramatically – the cloud provider automatically scales functions up and down based on demand without requiring developers to manage servers or worry about session state. However, serverless functions still need to interact with state. This is typically done through external, managed services like:

- Managed Databases: (e.g., AWS DynamoDB, Azure Cosmos DB, Google Cloud Firestore)

- Managed Caching Services: (e.g., AWS ElastiCache, Azure Cache for Redis, Google Cloud Memorystore)

- Object Storage: (e.g., AWS S3, Azure Blob Storage, Google Cloud Storage)

Thus, serverless architectures epitomize the combination: stateless compute (the functions) leveraging external, managed state and caching services for performance and persistence. The API Gateway often serves as the entry point for serverless functions, further reinforcing the pattern.

Intelligent Caching (ML-driven Prediction)

Traditional caching relies on simple policies like LRU or TTL. However, with advancements in machine learning, "intelligent caching" is emerging. This involves using ML models to predict which data is most likely to be requested next or which items should be evicted, based on historical access patterns, user behavior, and contextual information. For an AI Gateway, this could mean proactively caching results for common follow-up questions or popular AI requests based on real-time traffic analysis, rather than waiting for a cache miss. This predictive approach can significantly increase cache hit rates and further optimize performance.

Stateful vs. Stateless Functions in Event-Driven Systems

While statelessness is generally preferred for scalability, some modern architectural patterns, particularly in event-driven and stream processing systems, are exploring "stateful functions." These are functions designed to retain state across invocations, often managed by a framework that ensures fault tolerance and consistency (e.g., Apache Flink, Akka Cluster Sharding). This reintroduces state at the compute layer but in a highly controlled and resilient manner, suitable for scenarios requiring complex, long-running processes or aggregations over data streams where externalizing state for every small interaction would be inefficient. However, for an API Gateway or AI Gateway primarily focused on request/response, statelessness remains the dominant and preferred model.

The Rise of AI Gateway and Specialized Caching for AI Models

The emergence of the AI Gateway as a distinct architectural component highlights a critical need for specialized caching strategies. AI model inference, especially with large foundation models, can be very resource-intensive (GPU cycles, memory) and incur significant costs.

- Semantic Caching: Beyond simple key-value caching of exact prompts, an

AI Gatewaycould implement "semantic caching." This involves understanding the meaning of a query. If two different prompts have semantically similar meanings and would likely result in very similar AI model outputs, theAI Gatewaycould serve a cached response even if the prompts aren't exact matches. This requires embedding models and similarity search within the cache logic. - Caching Prompt Engineering Outputs: As prompts become more complex and involve chains of thought or specific formatting, caching the results of intermediate prompt processing steps (e.g., variable substitution, template rendering) can save valuable time before the actual model invocation.

- Model Load Caching: Loading an AI model into memory (especially large ones) can be slow. An

AI Gatewaymight manage a pool of pre-loaded models, effectively "caching" the loaded state of the model to reduce cold-start latencies for inference requests. - Cost-Aware Caching: Given the per-token or per-inference cost of many AI models, an

AI Gatewaycan implement cost-aware caching strategies, prioritizing caching for the most expensive or frequently invoked AI tasks to optimize operational expenditures.

These advanced considerations demonstrate that the discussion around caching and statelessness is not static. As technology progresses, so too do the methods and strategies for optimizing performance, continuously pushing the boundaries of what distributed systems can achieve.

Decision Framework and Best Practices

Navigating the complexities of caching and stateless operations requires a systematic approach. Architects and developers must integrate these concepts into a broader decision framework to design systems that are not only performant but also maintainable, secure, and cost-effective.

A Consolidated Guide: How to Decide

The ultimate decision on whether to prioritize statelessness or leverage caching, and where, is a balancing act influenced by several factors:

- Analyze Data Characteristics:

- Volatility: How frequently does the data change? (High volatility = lean towards stateless, less caching; Low volatility = good candidate for caching).

- Freshness Requirements: How critical is it for users to see the absolute latest data? (Strict freshness = stateless with immediate data retrieval; Eventual consistency acceptable = good for caching).

- Read/Write Ratio: Is the data primarily read, or is there a high volume of writes? (High reads = strong candidate for caching; High writes = stateless operation with direct write-through to persistent storage).

- Data Size: Does the data need to be transferred frequently? (Large, frequently transferred data = caching can reduce network overhead).

- Evaluate Performance Goals:

- Latency Targets: What are the acceptable response times for different operations? (Low latency goals = caching is crucial).

- Throughput Requirements: How many requests per second must the system handle? (High throughput = stateless for horizontal scaling, caching to reduce backend load).

- Scalability Needs: How rapidly must the system scale up or down? (Rapid, elastic scaling = statelessness is paramount).

- Consider Architectural Layer:

- Client/Edge: For static assets and general public data, client-side and CDN caching are almost always beneficial.

- API Gateway: A

gatewayshould be stateless for its core routing and policy enforcement. However, it's an excellent candidate for caching common API responses, authentication outcomes, and rate limit data to protect backend services. - Backend Services/Microservices: Services should generally be stateless regarding client sessions for maximum scalability. They can, however, use local or distributed caches for internal data or expensive computations.

- AI Gateway: An

AI Gatewayshould be stateless for distributing AI inference tasks efficiently but can gain significant performance and cost benefits from caching AI model outputs for common prompts or intermediate processing results.

- Assess Complexity vs. Benefit:

- Caching Complexity: Understand that implementing robust caching, especially with complex invalidation or coherency requirements, adds significant architectural and operational complexity. The performance gains must outweigh this complexity.

- Statelessness Complexity: While simplifying server-side state, it shifts state management to the client or an external store, introducing new challenges (e.g., token security, external store reliability).

- Security Implications:

- Caching: Ensure sensitive data in caches is properly encrypted. Plan for secure cache invalidation for security-critical data.

- Statelessness: If using tokens (e.g., JWTs), ensure they are signed, have appropriate expiration, and that revocation mechanisms (if needed) are in place (e.g., via a distributed blacklist cache).

Best Practices for Implementation

- Cache What Matters Most: Don't cache everything. Focus on data that is frequently accessed, expensive to retrieve/compute, and relatively stable.

- Embrace Immutability: Immutable data is far easier to cache correctly. If data can't be truly immutable, strive for eventual consistency models for cached data.

- Design for Cache Invalidation from Day One: Don't treat cache invalidation as an afterthought. Choose an appropriate strategy (TTL, event-driven, write-through) based on data freshness requirements.

- Externalize State for Stateless Services: When designing stateless services, identify what "state" they might need (e.g., user sessions, configuration, counters) and plan to store it in a highly available, scalable external service (e.g., distributed cache, database).

- Use Distributed Caches for Shared State: For microservices or

API Gatewayinstances that need to share state (like rate limits or cached authentication tokens), use a robust distributed cache like Redis or Memcached. - Monitor Cache Performance: Continuously monitor cache hit rates, eviction rates, and latency. Low hit rates indicate an ineffective cache or poor eviction policy.

- Observability for Stateless Systems: Ensure robust logging and tracing for stateless systems. Since requests are independent, good observability is crucial for understanding user journeys and debugging issues across multiple service calls.

- Implement Fallbacks: Design your system to gracefully degrade if the cache is unavailable or if an external state store fails. Don't let a cache failure bring down your entire application.

- Security First: Always prioritize security. Encrypt sensitive data in caches, properly sign and validate tokens in stateless communication, and implement strict access controls for both caches and external state stores.

- Iterate and Optimize: Performance optimization is rarely a one-time task. Continuously monitor your system, identify bottlenecks, and iterate on your caching and statelessness strategies. A/B testing can reveal the real-world impact of your optimizations.

Conclusion

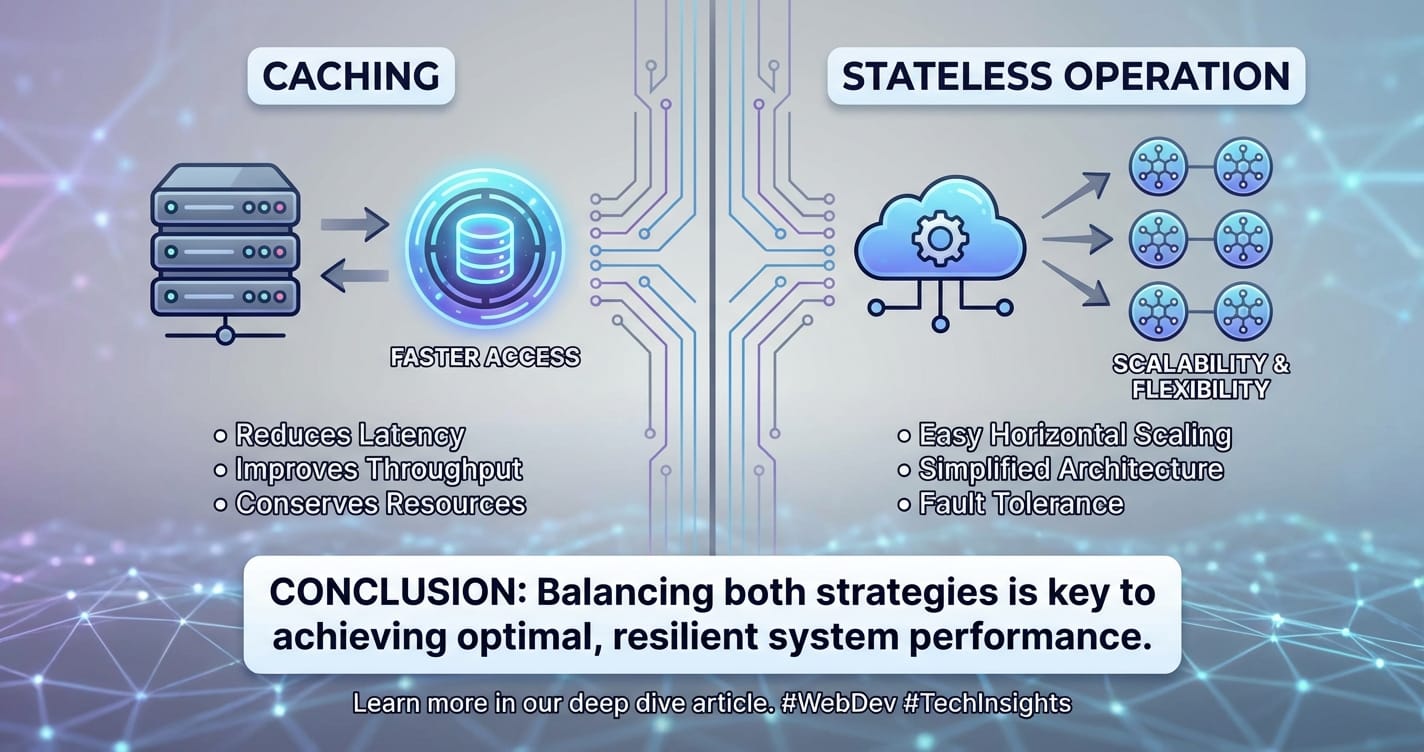

The journey into caching versus stateless operation reveals that these are not opposing forces in the architectural cosmos, but rather two potent and complementary strategies in the ceaseless quest for optimal system performance. Statelessness provides the bedrock for unparalleled horizontal scalability, resilience, and operational simplicity for core services, particularly for infrastructure components like the gateway and api gateway. It ensures that systems can flex and adapt to fluctuating demands without crumbling under the weight of persistent session state.

Conversely, caching, the art of strategic remembrance, acts as a powerful accelerator, dramatically reducing latency and offloading strain from backend systems. Whether at the browser, CDN, API Gateway, application, or distributed cache layer, judicious caching can transform sluggish operations into lightning-fast interactions, conserving valuable computational resources and enhancing user satisfaction. Its importance is only amplified in the context of an AI Gateway, where expensive AI model inferences can be intelligently cached to boost performance and manage costs effectively.

The most successful modern architectures are not defined by an exclusive commitment to one paradigm over the other, but by a thoughtful, deliberate, and nuanced combination of both. It is in the synergistic dance of stateless components leveraging intelligent caching that true performance optimization is realized. This requires architects and developers to deeply understand the characteristics of their data, the demands of their workloads, and the trade-offs involved in terms of consistency, complexity, and security. By applying a robust decision framework and adhering to best practices, organizations can construct systems that are not only performant and scalable but also resilient, maintainable, and poised for future innovation. In the end, the mastery lies not in choosing between remembering and forgetting, but in discerning precisely what to remember and what to forget, and when.

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between caching and stateless operation? The fundamental difference lies in their purpose regarding data retention. Stateless operation means a server does not store any client-specific context or session data between requests; each request must contain all necessary information. It emphasizes forgetting past interactions to achieve scalability and resilience. Caching, on the other hand, is about remembering frequently accessed or computationally expensive data (regardless of client session) closer to the point of use to reduce latency and backend load. A system can be stateless (regarding client sessions) while still utilizing caching for shared data.

2. Why is statelessness so important for API Gateways and microservices? Statelessness is crucial for API Gateway and microservices architectures primarily because it enables horizontal scalability and resilience. Since no client state is tied to a specific server instance, any instance can handle any request. This makes it trivial to add or remove servers to cope with varying loads, and the failure of a single instance doesn't lead to session loss. This simplifies load balancing and ensures high availability, making the system robust against traffic spikes and server failures.

3. Can caching introduce new problems into a system? Yes, while highly beneficial, caching introduces complexities. The "hardest problem" is cache invalidation, ensuring cached data remains consistent with the source of truth. Other challenges include stale data (when cached data is out of date), cache coherency (keeping multiple caches consistent), cache warm-up (populating a new cache), and memory management (deciding what to evict when the cache is full). Incorrect caching strategies can lead to data inconsistencies, debugging difficulties, and even performance degradation.

4. How does an AI Gateway benefit from both statelessness and caching? An AI Gateway like APIPark benefits immensely. Its core function of routing AI invocation requests to various models is stateless, allowing it to scale horizontally and distribute computationally intensive AI inference tasks efficiently without being a bottleneck. Simultaneously, it can strategically leverage caching for frequently requested AI model responses or intermediate processing results. For example, if many users ask the same prompt to an LLM, the AI Gateway can cache the output, drastically reducing latency, computational cost, and API call expenses for subsequent identical queries.

5. When should I prioritize strong consistency over performance (and thus limit caching)? You should prioritize strong consistency when dealing with data where even momentary staleness could lead to significant negative consequences. This includes scenarios like financial transactions, inventory management (where selling out-of-stock items is critical), user authentication state changes (e.g., password resets), or any data where legal or business rules demand absolute accuracy and real-time updates. In these cases, it's often better to ensure every request goes to the single source of truth, even if it incurs slightly higher latency, rather than risking inconsistencies due to cached data. For such data, stateless operations that directly interact with a highly consistent database are generally preferred, or caching would be implemented with very short TTLs and aggressive invalidation strategies.

🚀You can securely and efficiently call the OpenAI API on APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in 5 minutes.

APIPark is developed based on Golang, offering strong product performance and low development and maintenance costs. You can deploy APIPark with a single command line.

curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh

In my experience, you can see the successful deployment interface within 5 to 10 minutes. Then, you can log in to APIPark using your account.

Step 2: Call the OpenAI API.