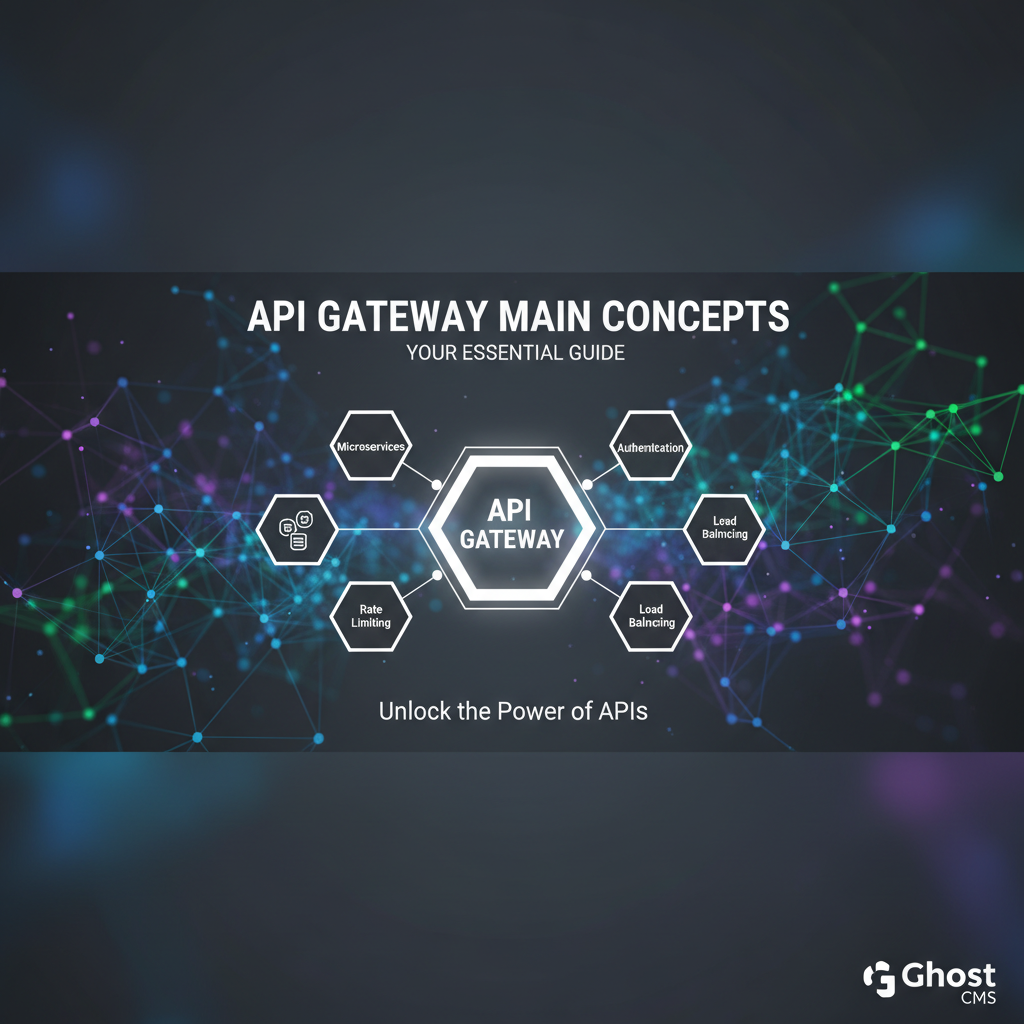

API Gateway Main Concepts: Your Essential Guide

In an increasingly interconnected digital world, where applications, services, and data constantly communicate, the architecture of modern software systems has grown profoundly intricate. The paradigm shift towards microservices, cloud-native deployments, and distributed systems has unlocked unprecedented agility and scalability for businesses. However, this evolution also ushers in a new set of complexities, particularly in how these numerous, disparate services expose their capabilities to external clients and internal consumers. Navigating a labyrinth of individual service endpoints, managing diverse security policies, ensuring optimal performance, and maintaining consistent observability across a sprawling ecosystem can quickly become an overwhelming endeavor.

Imagine a bustling metropolis, filled with countless buildings, each housing a unique service. Without a well-orchestrated traffic management system—clear roads, signposts, and dedicated entry points—chaos would inevitably ensue. Clients attempting to reach a specific service would need to know the exact address of each building, understand its unique access protocols, and somehow navigate the city's entire infrastructure independently. This scenario, scaled up to hundreds or even thousands of digital services, precisely illustrates the challenge that modern application architectures face. This is where the API Gateway emerges not merely as a convenience, but as an indispensable architectural component.

The API Gateway acts as the singular, intelligent front door to your backend services, centralizing the management of various cross-cutting concerns that would otherwise need to be redundantly implemented across every single service. It forms a crucial abstraction layer, shielding clients from the underlying complexity and dynamic nature of the backend while simultaneously enforcing critical policies and optimizing communication flows. From bolstering security and enhancing performance to simplifying development workflows and providing invaluable insights into API usage, the API Gateway has cemented its position as a cornerstone of robust, scalable, and manageable distributed systems.

This comprehensive guide will delve deep into the fundamental concepts underpinning API Gateways, exploring their critical functionalities, architectural patterns, deployment considerations, and best practices. We will uncover why this piece of infrastructure is not just a helpful tool but an essential strategic asset for any organization leveraging APIs in their digital strategy. By the end of this journey, you will possess a profound understanding of how to effectively harness the power of an API Gateway to build more resilient, secure, and high-performing applications.

Chapter 1: Understanding the API Gateway – The Digital Front Door

To truly grasp the significance of an API Gateway, we must first establish a clear definition and understand the architectural evolution that necessitated its existence. It’s more than just a proxy; it’s an intelligent intermediary, a command center for your API traffic.

What is an API Gateway?

At its core, an API Gateway is a server that acts as a single entry point for a group of backend services. It sits between the client applications (web browsers, mobile apps, third-party systems) and the backend services, intercepting all API requests, applying a set of policies, and then routing those requests to the appropriate backend service. Once the backend service responds, the API Gateway can also process the response before sending it back to the client.

Think of an API Gateway as the central dispatch for all inbound and outbound API calls. Instead of clients needing to know the specific network addresses, protocols, or authentication mechanisms for each individual service in your backend, they only interact with the gateway. This single point of contact significantly simplifies the client-side interaction model.

Unlike a basic load balancer, which often works at the transport layer (layer 4), or a traditional reverse proxy, which forwards layer 7 traffic using only simple routing rules, an API Gateway is API-aware. It understands the structure and semantics of API requests and responses. This intelligence allows it to perform a rich array of functions directly related to API management, such as request transformation, protocol mediation, detailed authentication checks, and sophisticated traffic management rules based on API semantics. It’s akin to a concierge at a grand hotel, directing guests, verifying credentials, ensuring smooth transitions, and providing bespoke services, rather than just a doorman who simply opens and closes the main door.

The primary purpose of an API Gateway is to abstract the complexity of your backend services from your clients. In a world of microservices, where a single user request might involve interactions with dozens of individual, specialized services, the gateway acts as a facade. It masks the internal architecture, allowing backend services to evolve independently without forcing changes on client applications. This abstraction not only simplifies client development but also enhances security by preventing direct exposure of internal service endpoints.

Why Do We Need an API Gateway?

The necessity for an API Gateway has become increasingly evident with the widespread adoption of microservices and cloud-native architectures. Before the rise of API Gateways, clients often communicated directly with each backend service. While seemingly simpler for a small number of services, this direct communication model quickly becomes untenable as the number of services grows.

Here are the compelling reasons why an API Gateway is not just beneficial but often essential:

- Managing Complexity of Microservices: In a microservices architecture, an application is broken down into numerous small, independent services. Without a gateway, clients would have to manage multiple URLs, understand different authentication schemes for each service, and potentially aggregate data from several services themselves. The API Gateway consolidates these interactions, providing a single, coherent API façade. It reduces the number of round trips between the client and backend, leading to better performance and a simpler client-side codebase.

- Centralized Security Enforcement: Security is paramount. Implementing robust authentication and authorization mechanisms for every microservice individually is repetitive, error-prone, and difficult to manage consistently. An API Gateway centralizes these crucial security checks. It can authenticate requests, authorize access based on roles or permissions, and even enforce advanced security policies like WAF (Web Application Firewall) rules, ensuring that only legitimate and authorized requests reach your backend services. This consolidation significantly enhances the overall security posture and simplifies compliance efforts.

- Performance Optimization: API Gateways can significantly improve the performance of your applications. They can implement caching strategies for frequently accessed data, reducing the load on backend services and speeding up response times for clients. They also handle rate limiting, preventing individual clients from overwhelming backend services with excessive requests, thereby maintaining system stability and responsiveness for all users. Furthermore, by aggregating multiple backend calls into a single response, they reduce network overhead and latency for clients.

- Enhanced Observability and Monitoring: Understanding the health and behavior of your distributed system is critical for operational excellence. An API Gateway is a natural choke point through which all API traffic passes. This strategic position makes it an ideal place to collect comprehensive metrics, logs, and traces for every incoming request and outgoing response. This centralized data collection simplifies monitoring, debugging, and performance analysis, providing invaluable insights into API usage, error rates, and latency across your entire ecosystem.

- Improved Developer Experience: For developers building client applications, interacting with a single, well-documented API Gateway endpoint is far simpler than juggling dozens of disparate service endpoints. The gateway can provide a consistent API contract, handle versioning, and abstract away internal architectural changes. This simplification accelerates client-side development, reduces onboarding time for new developers, and minimizes the risk of integration errors, ultimately fostering a more productive development environment.

In essence, an API Gateway acts as a sophisticated abstraction layer that tackles the challenges inherent in distributed systems by centralizing common cross-cutting concerns. It transforms a complex web of individual service interactions into a streamlined, secure, and performant experience for both clients and backend services.

Chapter 2: Core Concepts and Functionalities of API Gateways

The true power of an API Gateway lies in the rich array of functionalities it provides, each designed to address specific challenges in API management and distributed system architectures. These functionalities transform the gateway from a mere traffic director into an intelligent control plane for your API ecosystem.

2.1. Request Routing and Load Balancing

One of the most fundamental responsibilities of an API Gateway is to intelligently direct incoming API requests to the correct backend service instance. This involves both sophisticated routing logic and robust load balancing capabilities.

When a client sends a request to the API Gateway, the gateway inspects various elements of the request, such as the URL path, HTTP method, headers, and query parameters. Based on predefined routing rules, it determines which backend service is intended to handle the request. For example, a request to /users/{id} might be routed to a "User Service," while a request to /products/{id} goes to a "Product Catalog Service." The gateway can also handle more complex routing scenarios, such as routing requests based on an API version specified in a header (X-API-Version: v2) or directing traffic to different backend environments (e.g., staging vs. production).
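
The routing rules described above can be sketched as a small lookup table. This is a minimal illustration, not how any particular gateway product stores its configuration: the upstream names, the `ROUTES` table, and the convention of appending the version to the upstream name are all assumptions made for the example.

```python
import re
from typing import Optional

# Hypothetical routing table: (HTTP method, path pattern, upstream service name).
ROUTES = [
    ("GET", re.compile(r"^/users/(?P<id>[^/]+)$"), "user-service"),
    ("GET", re.compile(r"^/products/(?P<id>[^/]+)$"), "product-catalog-service"),
]


def resolve_route(method: str, path: str, headers: dict) -> Optional[str]:
    """Return the upstream name for a request, or None if no rule matches."""
    for rule_method, pattern, upstream in ROUTES:
        if method == rule_method and pattern.match(path):
            # Header-based version routing: X-API-Version selects the upstream
            # version and defaults to v1 when the header is absent (an assumption).
            version = headers.get("X-API-Version", "v1")
            return f"{upstream}-{version}"
    return None


print(resolve_route("GET", "/users/42", {}))
print(resolve_route("GET", "/products/7", {"X-API-Version": "v2"}))
```

A request that matches no rule returns `None`, which a real gateway would typically translate into a 404 response.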

Once the target service is identified, the API Gateway employs load balancing to distribute requests efficiently across multiple instances of that service. This ensures high availability and prevents any single service instance from becoming a bottleneck. Common load balancing strategies include:

- Round Robin: Distributes requests sequentially to each server in the pool.

- Least Connections: Directs requests to the server with the fewest active connections.

- IP Hash: Uses a hash of the client's IP address so that requests from the same client go to the same server for as long as the server pool is unchanged, which can be useful for session persistence.

- Weighted Load Balancing: Assigns different weights to servers based on their capacity or performance, sending more traffic to stronger servers.
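
The four strategies above can each be sketched in a few lines. The class and function names here are illustrative only, and the weighted variant uses the simplest possible approach (repeating each server in the rotation according to its weight) rather than the smoother algorithms production gateways tend to use.

```python
import hashlib
import itertools


class RoundRobin:
    """Hand out servers in a fixed repeating order."""

    def __init__(self, servers):
        self._cycle = itertools.cycle(servers)

    def pick(self):
        return next(self._cycle)


class LeastConnections:
    """Pick the server with the fewest in-flight requests."""

    def __init__(self, servers):
        self.active = {server: 0 for server in servers}

    def pick(self):
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        # Call when the proxied request finishes.
        self.active[server] -= 1


def ip_hash(servers, client_ip):
    """Map a client IP to a stable server index while the pool is unchanged."""
    digest = hashlib.sha256(client_ip.encode()).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]


def weighted_round_robin(weights):
    """Naive weighting: {'big': 2, 'small': 1} cycles big, big, small."""
    expanded = [server for server, weight in weights.items() for _ in range(weight)]
    return itertools.cycle(expanded)
```

Note that `ip_hash` remaps most clients whenever the pool size changes; consistent hashing is the usual remedy when that matters.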

Modern API Gateways often integrate with service discovery mechanisms (like Consul, Eureka, or Kubernetes Service Discovery). This allows the gateway to dynamically discover available service instances, automatically adjusting its routing and load balancing decisions as services scale up or down, or as new instances become available. This dynamic capability is crucial for elastic and resilient microservices architectures.

2.2. Authentication and Authorization

Security is arguably the most critical function of an API Gateway. By centralizing authentication and authorization, the gateway acts as the first line of defense, ensuring that only legitimate and authorized users or applications can access your backend services.

Authentication is the process of verifying a client's identity. The API Gateway can support various authentication mechanisms:

- API Keys: A simple, often less secure method where clients include a unique key in their requests.

- OAuth2 / OpenID Connect: Industry-standard protocols for secure delegated access. The gateway can validate access tokens (e.g., JSON Web Tokens, or JWTs) issued by an Identity Provider by checking their signature, expiry, and scope.

- Mutual TLS (mTLS): Provides two-way authentication, verifying both the client and the server using digital certificates.
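
To make the token validation step concrete, here is a minimal sketch of checking a JWT's signature, expiry, and scope using only the Python standard library. It assumes an HS256 (shared-secret) token and a space-separated `scope` claim; real deployments more commonly verify RS256 or ES256 tokens against the Identity Provider's published keys with a maintained JWT library. `sign_hs256` exists only to stand in for the Identity Provider in the demo.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def _b64url_decode(segment: str) -> bytes:
    # JWT segments drop base64 padding, so restore it before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def sign_hs256(claims: dict, secret: bytes) -> str:
    """Stand-in for the Identity Provider: issue a demo HS256 token."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    signature = hmac.new(secret, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url_encode(signature)}"


def verify_hs256(token: str, secret: bytes, required_scope: str,
                 now: Optional[float] = None) -> Optional[dict]:
    """Return the claims if signature, expiry, and scope all check out, else None."""
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        claims = json.loads(_b64url_decode(payload_b64))
        signature = _b64url_decode(signature_b64)
    except ValueError:
        return None  # malformed token
    if not isinstance(header, dict) or not isinstance(claims, dict):
        return None
    if header.get("alg") != "HS256":
        return None  # only accept the algorithm the gateway is configured for
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return None
    current = time.time() if now is None else now
    exp = claims.get("exp")
    if not isinstance(exp, (int, float)) or exp <= current:
        return None  # missing or expired
    if required_scope not in str(claims.get("scope", "")).split():
        return None
    return claims
```

A gateway would run a check like this before routing and reject the request with a 401 or 403 when it returns `None`.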

Once authenticated, Authorization determines what actions the authenticated client is permitted to perform. The API Gateway can enforce fine-grained access control policies based on various factors:

- Role-Based Access Control (RBAC): Clients are assigned roles (e.g., "admin," "user," "guest"), and the gateway allows or denies access to specific API endpoints or operations based on these roles.

- Attribute-Based Access Control (ABAC): More dynamic and flexible, ABAC evaluates attributes of the user, resource, action, and environment to make authorization decisions.

- Subscription Management: In scenarios where API access is managed through subscriptions, the gateway can verify if a client has an active subscription to a particular API product.
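
As a rough illustration of role-based checks at the gateway, the sketch below maps roles to permitted (method, resource) pairs. The role names follow the examples above; the `orders` resource and the permission table itself are hypothetical.

```python
# Hypothetical permission table: role -> set of (HTTP method, resource) pairs.
ROLE_PERMISSIONS = {
    "admin": {("GET", "orders"), ("POST", "orders"), ("DELETE", "orders")},
    "user": {("GET", "orders"), ("POST", "orders")},
    "guest": {("GET", "orders")},
}


def is_authorized(roles, method: str, resource: str) -> bool:
    """Allow the call if any of the caller's roles grants the operation."""
    return any((method, resource) in ROLE_PERMISSIONS.get(role, set()) for role in roles)


print(is_authorized(["guest"], "GET", "orders"))
print(is_authorized(["guest"], "DELETE", "orders"))
```

An ABAC policy would replace the static table with a function over attributes of the user, resource, action, and environment.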

This centralized security enforcement offloads the burden from individual microservices, which can then trust that any request reaching them has already been authenticated and authorized. This drastically simplifies security implementation across the entire backend, reduces the attack surface, and ensures consistent policy application.

For instance, platforms like APIPark offer features such as "API Resource Access Requires Approval." This means that before a caller can even invoke an API, they must subscribe to it, and an administrator's approval is necessary. This additional layer of control, enforced at the gateway level, effectively prevents unauthorized API calls and significantly mitigates potential data breaches, offering a robust security posture for valuable API resources.

2.3. Rate Limiting and Throttling

To protect backend services from overload, ensure fair usage, and prevent malicious attacks like denial-of-service (DoS), API Gateways implement rate limiting and throttling.

Rate limiting restricts the number of requests a client can make within a specified time window. If a client exceeds this limit, the gateway rejects subsequent requests for a certain period, typically returning an HTTP 429 "Too Many Requests" status code. This prevents a single client from monopolizing resources or causing a service disruption.

Throttling is a more nuanced form of rate limiting, often used for monetization or tiered service access. For example, a "free" tier user might be limited to 100 requests per minute, while a "premium" user gets 1000 requests per minute. Throttling can also involve delaying requests rather than outright rejecting them, queuing them to be processed when resources become available.
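
A fixed-window counter is one of the simplest ways to implement the limits described above. The sketch below uses the tier limits from the example (100 and 1000 requests per minute); the class name and the in-memory state are assumptions, and a clustered gateway would normally keep the counters in a shared store instead.

```python
import time
from typing import Callable, Dict, Tuple

# Requests allowed per window, taken from the tier example in the text.
TIER_LIMITS = {"free": 100, "premium": 1000}


class FixedWindowLimiter:
    def __init__(self, window_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self._state: Dict[str, Tuple[int, int]] = {}  # client -> (window index, count)

    def check(self, client_id: str, tier: str) -> int:
        """Return the HTTP status the gateway would use: 200 to proceed, 429 to reject."""
        window_index = int(self.clock() // self.window_seconds)
        stored_index, count = self._state.get(client_id, (window_index, 0))
        if stored_index != window_index:
            count = 0  # a new window has started, so the counter resets
        if count >= TIER_LIMITS[tier]:
            self._state[client_id] = (window_index, count)
            return 429
        self._state[client_id] = (window_index, count + 1)
        return 200
```

Fixed windows allow short bursts at window boundaries; sliding-window or token-bucket algorithms smooth that out at the cost of slightly more bookkeeping.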

API Gateways can apply these limits at various granularities:

- Per API: Different limits for different API endpoints (e.g., a "read" API might have higher limits than a "write" API).

- Per User/Application: Limits based on the authenticated user or the calling application.

- Per IP Address: Prevents a single IP from overwhelming the system, useful against unauthenticated abuse.

Implementing rate limiting at the gateway shields backend services from direct exposure to excessive traffic, allowing them to focus on their core business logic without needing to implement complex traffic management logic.

2.4. Caching

Caching is a powerful technique employed by API Gateways to improve performance, reduce latency, and offload processing from backend services. By storing frequently accessed API responses closer to the client, the gateway can serve subsequent identical requests much faster without needing to query the backend again.

The gateway can cache responses for GET requests where the data is relatively static or changes infrequently. When a request comes in, the gateway first checks its cache. If a valid, unexpired response is found, it's immediately returned to the client. If not, the request is forwarded to the backend, and the response, once received, is stored in the cache for future use.

Key considerations for gateway caching include:

- Cache Invalidation: Strategies for removing stale data from the cache (e.g., time-to-live (TTL), event-driven invalidation).

- Cache Scope: Whether the cache is local to the gateway instance (in-memory) or distributed across multiple gateway instances (e.g., using Redis or Memcached).

- Cache Keys: How requests are identified as "identical" for caching purposes (e.g., URL, headers, query parameters).
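
The check-then-forward flow and the three considerations above can be sketched together. This is a local in-memory cache with TTL-based invalidation; the function names and the choice to normalize query-parameter order in the cache key are assumptions for illustration.

```python
import time
from typing import Callable
from urllib.parse import parse_qsl, urlsplit


def cache_key(method: str, url: str, vary_headers: dict) -> tuple:
    """Treat requests as identical if method, path, query, and chosen headers match."""
    parts = urlsplit(url)
    query = tuple(sorted(parse_qsl(parts.query)))  # ignore parameter order
    headers = tuple(sorted((name.lower(), value) for name, value in vary_headers.items()))
    return (method, parts.path, query, headers)


class TTLCache:
    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._store = {}  # key -> (expiry time, cached response)

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self.clock() >= expires_at:
            del self._store[key]  # stale entry: evict and report a miss
            return None
        return value

    def put(self, key, value):
        self._store[key] = (self.clock() + self.ttl_seconds, value)


def handle_get(cache: TTLCache, url: str, vary_headers: dict, fetch_backend):
    """Serve from cache when possible, otherwise call the backend and store the result."""
    key = cache_key("GET", url, vary_headers)
    cached = cache.get(key)
    if cached is not None:
        return cached, "HIT"
    response = fetch_backend(url)
    cache.put(key, response)
    return response, "MISS"
```

Swapping `TTLCache` for a client to a shared store such as Redis would turn this into the distributed variant without changing `handle_get`.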

Effective caching at the API Gateway can dramatically reduce backend load, lower operational costs, and significantly enhance the responsiveness of API calls for end-users, especially in high-traffic scenarios with repetitive data access.

2.5. API Transformation and Orchestration

The API Gateway serves as a vital intermediary for adapting API requests and responses, allowing clients to interact with services in a standardized or simplified manner, regardless of the backend's specific requirements. This includes both data transformation and request orchestration.

API Transformation involves modifying the request before it reaches the backend service or modifying the response before it's sent back to the client. This is incredibly useful for:

- Protocol Translation: Converting requests from one protocol to another (e.g., a REST request from the client might be translated into a gRPC call to a microservice, or even a SOAP call to a legacy system).

- Data Format Conversion: Transforming data payloads (e.g., converting a JSON request from the client into XML for a legacy backend, or vice versa for responses).

- Header Manipulation: Adding, removing, or modifying HTTP headers (e.g., adding a correlation ID for tracing, stripping sensitive headers).

- Payload Enrichment/Reduction: Adding additional data to the request (e.g., user context) or stripping unnecessary data from the response to reduce bandwidth.
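
Two of the transformations above, data format conversion and header manipulation, are easy to sketch. The example assumes a flat JSON object whose keys are valid XML element names; the header names in `SENSITIVE_HEADERS` and the `X-Correlation-ID` header are illustrative choices, not a standard.

```python
import json
import uuid
import xml.etree.ElementTree as ET

# Hypothetical set of headers the gateway should not forward to backends.
SENSITIVE_HEADERS = {"cookie", "x-internal-debug"}


def json_to_xml(payload: str, root_tag: str = "request") -> str:
    """Convert a flat JSON object into a simple XML document for a legacy backend."""
    data = json.loads(payload)
    root = ET.Element(root_tag)
    for key, value in data.items():
        child = ET.SubElement(root, key)
        child.text = str(value)
    return ET.tostring(root, encoding="unicode")


def rewrite_headers(headers: dict) -> dict:
    """Strip sensitive headers and ensure a correlation ID is present for tracing."""
    forwarded = {k: v for k, v in headers.items() if k.lower() not in SENSITIVE_HEADERS}
    forwarded.setdefault("X-Correlation-ID", str(uuid.uuid4()))
    return forwarded


print(json_to_xml('{"id": 7, "name": "widget"}'))
# <request><id>7</id><name>widget</name></request>
```

Nested objects, arrays, and XML namespaces need a richer mapping than this, which is usually where a gateway's templating or policy language comes in.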

API Orchestration (sometimes called API Aggregation) allows the API Gateway to aggregate data from multiple backend services into a single response for the client. Instead of a client making several calls to different microservices, it makes one call to the gateway. The gateway then fans out requests to the necessary backend services, gathers their responses, combines or transforms them, and sends a consolidated response back to the client. This kind of aggregation is central to the "Backend for Frontend" (BFF) pattern, which is often implemented at the gateway. It significantly reduces client-side complexity and network chatter, which is especially beneficial for mobile applications.
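
The fan-out and aggregation step can be sketched with `asyncio.gather`, which runs the backend calls concurrently. The three `fetch_*` coroutines are stubs standing in for real HTTP calls, and the response shape is made up for the example.

```python
import asyncio


# Stub backends: in a real gateway these would be HTTP or gRPC calls.
async def fetch_product(product_id: str) -> dict:
    await asyncio.sleep(0)
    return {"id": product_id, "name": "Widget"}


async def fetch_inventory(product_id: str) -> dict:
    await asyncio.sleep(0)
    return {"in_stock": 12}


async def fetch_reviews(product_id: str) -> list:
    await asyncio.sleep(0)
    return [{"rating": 5}, {"rating": 4}]


async def product_detail(product_id: str) -> dict:
    """Fan out to three services concurrently and merge the results."""
    product, inventory, reviews = await asyncio.gather(
        fetch_product(product_id),
        fetch_inventory(product_id),
        fetch_reviews(product_id),
    )
    return {
        **product,
        "in_stock": inventory["in_stock"],
        "review_count": len(reviews),
    }


print(asyncio.run(product_detail("p1")))
```

Because the calls run concurrently, the client-visible latency is roughly that of the slowest backend rather than the sum of all three. A production version would also need per-call timeouts and a policy for partial failures.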

Furthermore, API Gateways are crucial for API version management. They can allow different versions of an API (e.g., /v1/users, /v2/users) to coexist, routing requests to the appropriate backend service version. This enables smooth API evolution without breaking existing client applications, providing a controlled transition path.

In the rapidly evolving landscape of Artificial Intelligence, API Gateways are taking on new transformation roles. Products like APIPark exemplify this by offering "Unified API Format for AI Invocation" and "Prompt Encapsulation into REST API." This means that regardless of the underlying AI models (which often have disparate input/output formats and invocation methods), clients can interact with them via a consistent RESTful interface provided by the gateway. The gateway handles the complex task of translating standard REST requests into specific AI model prompts and then transforming the AI model's output back into a standardized REST response. This simplifies AI usage and significantly reduces maintenance costs for applications integrating multiple AI models.

2.6. Monitoring, Logging, and Analytics

Visibility into the performance and behavior of your API ecosystem is paramount for operational stability, troubleshooting, and business insights. The API Gateway, being the central point of ingress and egress for all API traffic, is an ideal location to collect comprehensive observability data.

Logging: The API Gateway can capture detailed logs for every API request and response. These logs typically include information such as:

- Timestamp of the request

- Client IP address

- Requested API endpoint

- HTTP method

- HTTP status code of the response

- Request and response body (potentially sanitized for sensitive data)

- Latency (time taken for the gateway to process and forward, and for the backend to respond)

- Authentication and authorization outcomes

These logs are invaluable for debugging issues, auditing access, and ensuring compliance. They can be integrated with centralized logging systems (like ELK Stack, Splunk, Datadog) for aggregation, searching, and analysis.

Metrics: Beyond raw logs, API Gateways can collect and expose various metrics about API traffic and gateway performance. These often include:

- Total request count (throughput)

- Error rates (e.g., 4xx and 5xx errors)

- Latency distributions (average, p90, p95, p99 latencies)

- Resource utilization of the gateway itself (CPU, memory, network I/O)

- Cache hit rates

These metrics are crucial for real-time monitoring, creating dashboards, and setting up alerts for abnormal behavior. They can be pushed to monitoring systems like Prometheus, Grafana, or cloud-specific monitoring services.
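
To show how the latency and error-rate figures above are derived from raw samples, here is a small sketch using the nearest-rank percentile method. Monitoring systems such as Prometheus typically estimate percentiles from histogram buckets instead, so treat this as an illustration of the definitions rather than of any tool's implementation.

```python
from typing import Dict, Sequence


def percentile(samples: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, (pct * len(ordered) + 99) // 100)  # integer ceiling of pct * n / 100
    return ordered[rank - 1]


def summarize(latencies_ms: Sequence[float], status_codes: Sequence[int]) -> Dict[str, float]:
    """Compute the headline gateway metrics for one reporting interval."""
    errors = sum(1 for code in status_codes if code >= 400)
    return {
        "request_count": len(status_codes),
        "error_rate": errors / len(status_codes),
        "avg_ms": sum(latencies_ms) / len(latencies_ms),
        "p90_ms": percentile(latencies_ms, 90),
        "p95_ms": percentile(latencies_ms, 95),
        "p99_ms": percentile(latencies_ms, 99),
    }
```

Tail percentiles matter because an average can look healthy while a small share of requests is very slow.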

Analytics: By aggregating and analyzing the collected logs and metrics over time, API Gateways enable powerful data analytics. This allows organizations to understand API usage patterns, identify trends in performance or errors, anticipate potential issues, and make data-driven decisions about API evolution and infrastructure scaling. For example, analytics can reveal which APIs are most popular, which clients are generating the most traffic, or where performance bottlenecks are emerging.

APIPark specifically emphasizes these capabilities, offering "Detailed API Call Logging" that records every detail of each API call. This feature is vital for quick tracing and troubleshooting, thereby ensuring system stability and data security. Furthermore, its "Powerful Data Analysis" capability analyzes historical call data to display long-term trends and performance changes. This proactive insight helps businesses with preventive maintenance, addressing issues before they impact users and operations.

2.7. Security Policies (WAF, DDoS Protection)

While authentication and authorization handle who can access your APIs and what they can do, API Gateways extend their security perimeter to protect against broader web-based threats and network attacks.

Web Application Firewall (WAF) Integration: Many API Gateways either include integrated WAF capabilities or can easily integrate with external WAF solutions. A WAF monitors HTTP/S traffic to and from web applications and APIs, identifying and blocking common web vulnerabilities and attacks, such as:

- SQL Injection

- Cross-Site Scripting (XSS)

- Command Injection

- Broken Authentication

- Sensitive Data Exposure

By inspecting request payloads, headers, and parameters, a WAF can detect malicious patterns and prevent them from reaching backend services, adding a critical layer of application-level security.

DDoS Protection: Distributed Denial of Service (DDoS) attacks aim to overwhelm a service with a flood of traffic, making it unavailable to legitimate users. While dedicated DDoS protection services are often used at the network edge, an API Gateway can contribute to DDoS mitigation strategies by:

- Rate Limiting: As discussed, this can block excessive requests from individual or groups of IPs.

- IP Whitelisting/Blacklisting: Allowing traffic only from known, trusted IP ranges (whitelisting) or blocking traffic from known malicious IPs (blacklisting).

- Bot Protection: Identifying and mitigating requests from automated bots that might be part of a DDoS attack.
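
IP-based filtering is straightforward to sketch with Python's `ipaddress` module. The ranges below come from the address blocks reserved for documentation and are placeholders; the rule that the block list always wins over the allow list is a design assumption.

```python
import ipaddress

# Placeholder ranges (reserved documentation blocks), not real policy.
ALLOWED_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]
BLOCKED_NETWORKS = [ipaddress.ip_network("203.0.113.66/32")]


def is_ip_permitted(client_ip: str) -> bool:
    """Reject blocked or unparseable addresses, then require an allow-list match."""
    try:
        address = ipaddress.ip_address(client_ip)
    except ValueError:
        return False  # not a valid IP address
    if any(address in network for network in BLOCKED_NETWORKS):
        return False
    return any(address in network for network in ALLOWED_NETWORKS)


print(is_ip_permitted("203.0.113.10"))
print(is_ip_permitted("203.0.113.66"))
```

When the gateway sits behind another proxy or load balancer, the client address has to be taken from a trusted forwarding header rather than the socket peer, or this check will only ever see the proxy's IP.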

Implementing these broader security policies at the gateway centralizes threat detection and mitigation, acting as a robust shield for your backend services and ensuring continuous availability and data integrity.

2.8. Developer Portal Integration

For organizations that expose their APIs to external developers, partners, or even internal teams, an API Gateway often integrates with or provides a Developer Portal. This portal is a self-service platform designed to streamline the API consumption experience.

A typical Developer Portal offers:

- API Documentation: Comprehensive, interactive documentation (often based on OpenAPI/Swagger specifications) that describes each API, its endpoints, parameters, request/response formats, and examples.

- API Discovery: A catalog or marketplace where developers can browse and search for available APIs.

- API Key Management: A self-service interface for developers to generate, revoke, and manage their API keys or access tokens.

- Subscription Workflows: Mechanisms for developers to subscribe to API products, request access, and review their usage.

- Code Samples and SDKs: Resources to help developers quickly integrate APIs into their applications.

- Support and Community Forums: Channels for developers to get help, share feedback, and interact with the API provider.

Integrating a Developer Portal with the API Gateway ensures that documentation, access policies, and API keys are synchronized and consistently managed. It significantly improves the developer experience, fosters API adoption, and reduces the support burden on the API provider. By making APIs easily discoverable and consumable, organizations can accelerate innovation and build thriving API ecosystems.

APIPark serves as an API developer portal, specifically highlighting its feature for "API Service Sharing within Teams." This capability allows for the centralized display of all API services, making it effortless for different departments and teams to find and utilize the required API services. This fosters collaboration and efficiency across an enterprise, ensuring that valuable API resources are easily accessible to those who need them.

Chapter 3: Architectural Patterns and Deployment Considerations

The effective deployment and architectural integration of an API Gateway are crucial for maximizing its benefits. There isn't a single "right" way to deploy a gateway; the optimal approach depends heavily on the specific organizational needs, existing infrastructure, and desired level of decentralization.

3.1. Centralized vs. Decentralized Gateways

The choice between a centralized and decentralized gateway model dictates how API traffic is routed and managed across your services.

Centralized Gateway (Monolithic Gateway): In this traditional pattern, a single API Gateway instance (or a cluster of instances for high availability) acts as the sole entry point for all API traffic to all backend services.

- Pros:

  - Simplicity of management: A single point of configuration and deployment for all cross-cutting concerns.

  - Consistent policy enforcement: Ensures all APIs adhere to the same security, rate limiting, and other policies.

  - Reduced operational overhead: Easier to monitor and troubleshoot a single gateway component.

  - Unified Developer Experience: Clients only need to know one gateway address.

- Cons:

  - Single point of failure: If the gateway goes down, all APIs become inaccessible (mitigated by clustering).

  - Potential bottleneck: All traffic flows through it, requiring significant scaling and robust hardware/software.

  - Tight coupling: A change in one API's configuration might inadvertently affect others.

  - Organizational overhead: A central team might become a bottleneck for API owners who want to deploy changes quickly.

Decentralized Gateways (Micro-Gateways / Sidecar Pattern): This approach involves deploying smaller, more specialized gateway instances alongside or very close to individual services or groups of related services. This could manifest as a "Backend for Frontend" (BFF) gateway per client application type (e.g., one for web, one for mobile), or even a sidecar proxy per microservice.

- Pros:

  - Increased resilience: Failure of one micro-gateway only affects a subset of APIs.

  - Scalability: Each micro-gateway can scale independently with its associated services.

  - Loose coupling: Services have more autonomy over their API configuration and deployment.

  - Tailored policies: Policies can be highly customized for specific services or client needs.

- Cons:

  - Increased operational complexity: Managing many small gateway instances, each with its own configuration.

  - Potential for inconsistency: Ensuring uniform policy enforcement across numerous gateways can be challenging.

  - Higher resource consumption: More gateway instances mean more overall CPU/memory usage.

  - Duplication of effort: Common policies might need to be implemented multiple times.

Many organizations adopt a hybrid approach, where a central API Gateway handles initial authentication, routing, and common security, while smaller, client-specific gateways (BFFs) or specialized gateways handle aggregation and transformations closer to their respective services or consumers.

3.2. Deployment Models

The chosen deployment model for your API Gateway will heavily influence its performance, scalability, and integration with your infrastructure.

- On-Premise: Deploying API Gateways on your own physical servers or private cloud infrastructure. This offers maximum control over the environment and data sovereignty but comes with higher operational overhead for hardware, networking, and maintenance. Often favored by organizations with stringent security or compliance requirements.

- Cloud-Native Gateways: Public cloud providers offer fully managed API Gateway services (e.g., Amazon API Gateway, Azure API Management, Google Cloud's Apigee). These services abstract away much of the infrastructure management, offering high scalability, availability, and integration with other cloud services. They are generally pay-as-you-go, reducing upfront costs and operational burden. However, they can lead to vendor lock-in and may have specific feature limitations.

- Containerized (Docker, Kubernetes): Deploying API Gateways as Docker containers orchestrated by Kubernetes is a popular and flexible model. This offers excellent portability, scalability, and resilience. Gateways like Kong, Envoy, or even open-source solutions like APIPark are designed to run efficiently in containerized environments. This model allows for Infrastructure as Code (IaC) and integrates seamlessly with CI/CD pipelines, enabling rapid deployment and updates. The ease of deployment is a significant advantage, as demonstrated by APIPark's quick start: a single command line allows for deployment in just 5 minutes, significantly reducing setup time and complexity for developers.

- Serverless: In a serverless architecture, the API Gateway can be integrated directly with serverless functions (e.g., AWS Lambda, Azure Functions). The gateway handles routing incoming HTTP requests directly to the appropriate function, managing the request/response lifecycle. This offers extreme scalability and a true pay-per-execution cost model, eliminating server management entirely. However, it might introduce cold start latencies for infrequently invoked functions and has specific operational considerations.

The choice of deployment model should align with your overall infrastructure strategy, existing toolchains, and expertise within your organization.

3.3. API Gateway in a Microservices Architecture

The API Gateway is arguably one of the most critical components in a microservices architecture, serving as the bridge between client applications and a potentially vast number of backend services.

- Abstraction of Microservices: It hides the internal complexity of hundreds of microservices, their specific network locations, and their internal communication patterns from client applications. Clients only see the gateway's façade.

- Client-Specific Gateways (Backend for Frontend - BFF): A common pattern in microservices is to have specialized gateways for different types of client applications (e.g., a gateway optimized for mobile clients, another for web applications, and yet another for third-party integrations). These BFF gateways can aggregate data specifically for their respective clients, transforming and combining responses from multiple microservices to fit the client's UI or data needs. This reduces client-side logic and network calls, optimizing the user experience.

- Orchestration and Aggregation: As discussed, the API Gateway can orchestrate calls to multiple microservices in response to a single client request, gathering data from each and compiling a unified response. This is essential for features that draw data from various domains (e.g., a product detail page pulling info from product, inventory, and review services).

- Decoupling Clients from Services: By acting as an intermediary, the API Gateway allows microservices to evolve independently. Internal refactoring, version updates, or even swapping out an entire microservice can happen without necessarily affecting client applications, as long as the gateway's external API contract remains consistent.
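The aggregation pattern described above can be sketched in a few lines. This is a minimal illustration, not a production gateway: the three `fetch_*` coroutines are hypothetical stand-ins for calls to product, inventory, and review services, and the response shape is an assumption made for the example.

```python
import asyncio

# Hypothetical stand-ins for three backend microservices.
# A real gateway or BFF would issue HTTP or gRPC calls here.
async def fetch_product(product_id: str) -> dict:
    await asyncio.sleep(0.01)  # simulate network latency
    return {"id": product_id, "name": "Example Widget"}

async def fetch_inventory(product_id: str) -> dict:
    await asyncio.sleep(0.01)
    return {"in_stock": 12}

async def fetch_reviews(product_id: str) -> list:
    await asyncio.sleep(0.01)
    return [{"rating": 5, "text": "Works as described"}]

async def product_detail(product_id: str) -> dict:
    """Call the three services concurrently and merge the results."""
    product, inventory, reviews = await asyncio.gather(
        fetch_product(product_id),
        fetch_inventory(product_id),
        fetch_reviews(product_id),
        return_exceptions=True,  # collect failures instead of aborting
    )
    if isinstance(product, Exception):
        raise product  # the core product record is required
    return {
        "product": product,
        # Non-critical sections fall back to empty values on failure.
        "inventory": None if isinstance(inventory, Exception) else inventory,
        "reviews": [] if isinstance(reviews, Exception) else reviews,
    }
```

Calling `asyncio.run(product_detail("sku-123"))` returns one combined document, so the client makes a single request instead of three. Running the calls concurrently keeps the added latency close to that of the slowest backend rather than the sum of all three.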

APIPark, an open-source AI gateway and API management platform, is designed precisely for this kind of dynamic environment. While it excels at integrating and managing AI models, its foundational capabilities extend to managing and governing any REST services, making it a versatile choice for organizations building and scaling their microservices ecosystems.

3.4. API Gateway vs. Service Mesh

It's common to confuse an API Gateway with a Service Mesh, or to wonder if one replaces the other. In reality, they address different concerns and often complement each other within a sophisticated distributed system.

API Gateway:

- Focus: North-South traffic (traffic originating from outside the cluster/network and entering it, e.g., client to services).

- Primary Concerns: Authentication, authorization, rate limiting, caching, routing, API transformation, security at the edge, developer experience, exposure of APIs to external consumers.

- Layer: Operates at the application layer (Layer 7), understanding HTTP, API contracts, and business logic concerns.

Service Mesh:

- Focus: East-West traffic (traffic between services within the cluster/network).

- Primary Concerns: Service-to-service communication, traffic management (retries, timeouts, circuit breakers), observability (metrics, logging, tracing for inter-service calls), mTLS for internal communication, traffic shaping, chaos engineering.

- Layer: Typically operates at both the transport layer (Layer 4) and the application layer (Layer 7), usually through sidecar proxies deployed next to each service, abstracting network concerns for internal service communication.

Complementary Roles: An API Gateway and a Service Mesh are not mutually exclusive; in fact, they often work together effectively. The API Gateway handles all incoming external requests, applies its security and traffic management policies, and then routes them to the appropriate (often first-tier) microservice within the network. From there, the Service Mesh takes over, managing the internal communication between that first-tier microservice and any other dependent microservices.

- The gateway protects the entrance to your application.

- The service mesh manages and secures the internal highways of your application.

This combination provides a layered defense and comprehensive traffic management, addressing both external API consumers and internal service interactions effectively.

Chapter 4: Choosing the Right API Gateway – Factors to Consider

Selecting the appropriate API Gateway is a critical decision that can have significant long-term implications for your architecture's scalability, security, and maintainability. With numerous commercial products, open-source solutions, and cloud-native offerings available, it's essential to evaluate them against a comprehensive set of criteria.

4.1. Performance and Scalability

The API Gateway is a central piece of your infrastructure, meaning its performance characteristics directly impact the responsiveness of your entire system. It must be able to handle high volumes of concurrent requests with low latency.

- Throughput (Requests per Second - RPS/TPS): How many requests can the gateway process within a given timeframe? This is a fundamental metric.

- Latency: The additional delay introduced by the gateway itself. A good gateway should add minimal latency, ideally in the single-digit milliseconds.

- Resource Utilization: How much CPU, memory, and network I/O does the gateway consume under various loads? Efficient resource usage is key to cost-effectiveness.

- Horizontal Scalability: Can the gateway be easily scaled out by adding more instances? This is crucial for handling unpredictable traffic spikes and ensuring high availability.

- Fault Tolerance and High Availability: Does the gateway support clustering, active-passive, or active-active deployments to ensure continuous operation even if an instance fails?

For demanding environments, raw performance is a significant differentiator. For instance, APIPark boasts "Performance Rivaling Nginx," claiming to achieve over 20,000 TPS with just an 8-core CPU and 8GB of memory. This level of performance, coupled with support for cluster deployment, indicates its capability to handle large-scale traffic and ensure high responsiveness, making it suitable for enterprises with substantial API loads.

4.2. Feature Set

The range of functionalities offered by an API Gateway varies widely. It's crucial to align the gateway's capabilities with your specific organizational needs, both current and projected.

- Core API Management: Routing, load balancing, authentication (OAuth2, JWT, API keys), authorization (RBAC, ABAC), rate limiting, caching. These are table stakes for most modern gateways.

- Transformation and Orchestration: Ability to modify request/response payloads, perform protocol translation, and aggregate multiple backend calls.

- Security Features: WAF integration, DDoS mitigation, IP whitelisting/blacklisting, bot protection.

- Observability: Comprehensive logging, metrics collection (Prometheus, OpenTelemetry integration), distributed tracing support.

- Developer Experience: Integrated developer portal, automated documentation generation (OpenAPI), SDK generation, self-service API key management.

- AI Integration: For platforms dealing with AI services, specialized features like unified API formats for AI invocation, prompt encapsulation into REST APIs, and centralized cost tracking for AI models become crucial. This is where a solution like APIPark shines, with its explicit focus on integrating 100+ AI models and simplifying their management.

- Lifecycle Management: Support for API versioning, publishing, deprecation, and retirement.

Carefully evaluate which features are essential for your operations. Overly complex gateways with unnecessary features can increase configuration overhead, while under-featured ones might require custom development to fill gaps.

4.3. Extensibility and Customization

No single API Gateway will perfectly fit every unique use case out of the box. The ability to extend and customize its behavior is often a significant factor.

- Plugin Architecture: Does the gateway support plugins (or "policies") that can be easily added to extend functionality?

- Custom Logic: Can you inject custom code (e.g., Lua scripts, JavaScript, Python) to implement bespoke routing, transformation, or security logic?

- Integration Points: How well does it integrate with your existing identity providers, monitoring systems, logging infrastructure, and CI/CD pipelines?

- Policy Granularity: Can policies be applied at different levels (globally, per API, per consumer)?
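To make the plugin idea concrete, here is a minimal sketch of a plugin chain. It does not reflect any particular gateway's plugin API; the `Request`, `Response`, and `handle` names, and the two example plugins, are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

@dataclass
class Request:
    path: str
    headers: dict = field(default_factory=dict)

@dataclass
class Response:
    status: int
    body: str = ""

# A plugin inspects (and may modify) the request. It returns a Response to
# short-circuit the chain, or None to pass control to the next plugin.
Plugin = Callable[[Request], Optional[Response]]

def require_api_key(request: Request) -> Optional[Response]:
    if "x-api-key" not in request.headers:
        return Response(401, "missing API key")
    return None

def add_request_id(request: Request) -> Optional[Response]:
    # A fixed ID keeps the example deterministic; real code would generate one.
    request.headers.setdefault("x-request-id", "req-0001")
    return None

def handle(request: Request, plugins: List[Plugin],
           upstream: Callable[[Request], Response]) -> Response:
    """Run each plugin in order, then forward to the upstream service."""
    for plugin in plugins:
        early = plugin(request)
        if early is not None:
            return early
    return upstream(request)
```

Policy granularity then becomes a question of which plugin list is passed to `handle`: one list applied globally, with further plugins appended per API or per consumer.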

Extensibility ensures that the gateway can adapt to evolving requirements and integrate smoothly into your existing technology stack without requiring significant forks or workarounds.

4.4. Ease of Use and Management

The operational overhead of an API Gateway can be substantial if it's difficult to configure, monitor, or troubleshoot.

- User Interface (UI) / Management Console: Is there an intuitive web-based interface for managing APIs, policies, and users?

- Configuration: Is the configuration declarative (e.g., YAML, JSON) and easy to understand? Can it be managed via Infrastructure as Code (IaC)?

- Documentation: Is the documentation comprehensive, clear, and up-to-date?

- Deployment Simplicity: How easy is it to deploy and get started? Solutions like APIPark pride themselves on quick deployment (e.g., "5 minutes with a single command line"), which significantly lowers the barrier to entry and speeds up time to value.

- Troubleshooting: Are logging and metrics easily accessible and actionable? Are there tools to help diagnose issues quickly?

A gateway that is easy to manage reduces operational costs and allows teams to focus more on developing core business logic.

4.5. Cost and Licensing

The financial implications of an API Gateway can range from minimal to substantial, depending on the chosen solution.

- Open Source: Solutions like APIPark (under Apache 2.0 license) offer a cost-effective entry point, providing the core software for free. However, "free" doesn't mean zero cost. You still need to account for infrastructure costs, internal development time for setup and customization, and potentially purchasing commercial support.

- Commercial Products: These often come with a license fee, subscription model, or consumption-based pricing. They typically include professional support, a richer feature set, and enterprise-grade tooling.

- Cloud-Native Services: Follow a pay-as-you-go model, often based on request volume, data transfer, and number of APIs. While seemingly flexible, costs can escalate with high traffic or complex configurations, requiring careful monitoring.

Consider the total cost of ownership (TCO), which includes not just licensing but also infrastructure, operational expenses, and the cost of skilled personnel required to manage the gateway. APIPark exemplifies a tiered approach by offering a powerful open-source version for basic needs, alongside a commercial version with advanced features and professional technical support for leading enterprises, catering to different organizational scales and requirements.

4.6. Community and Support

The availability of a strong community and reliable support can be invaluable, especially when encountering complex issues or seeking best practices.

- Active Community: For open-source solutions, a vibrant community (forums, GitHub discussions, chat channels) means readily available help, shared knowledge, and frequent updates.

- Vendor Support: For commercial products, evaluate the quality, responsiveness, and service level agreements (SLAs) of the vendor's technical support.

- Documentation and Training: Availability of tutorials, guides, and training programs can accelerate adoption and skill development.

- Ecosystem and Integrations: The breadth of third-party integrations (e.g., with CI/CD tools, monitoring platforms) and a healthy ecosystem indicate a mature and widely adopted product.

APIPark benefits from being launched by Eolink, a leading API lifecycle governance solution company. This background implies a strong foundation of expertise, a commitment to the API ecosystem, and potentially robust commercial support options for its enterprise version, leveraging Eolink's experience in serving over 100,000 companies globally. This backing provides confidence in the product's longevity and reliability.


Chapter 5: Best Practices for API Gateway Implementation and Management

Implementing and managing an API Gateway effectively goes beyond simply deploying the software. It requires careful planning, adherence to best practices, and continuous attention to ensure it serves its purpose as a secure, performant, and reliable intermediary.

5.1. Design for Resilience

The API Gateway is a critical component, and its failure can bring down your entire API ecosystem. Therefore, designing for resilience is paramount.

- Redundancy and Failover: Deploy multiple gateway instances across different availability zones or regions. Implement robust failover mechanisms so that if one instance or zone fails, traffic is automatically routed to healthy instances without manual intervention. This often involves load balancers sitting in front of the gateway instances.

- Circuit Breakers: Implement circuit breakers within the gateway (or configure them in backend services) to prevent cascading failures. If a backend service becomes unhealthy or unresponsive, the circuit breaker "trips," stopping the gateway from sending further requests to that service for a period. This gives the troubled service time to recover and prevents the gateway from becoming overloaded while waiting for timeouts.

- Timeouts and Retries: Configure appropriate timeouts for backend service calls from the gateway to prevent long-running requests from consuming resources indefinitely. Implement intelligent retry mechanisms for transient failures, but with exponential backoff to avoid exacerbating the problem.

- Graceful Degradation: Design your APIs and gateway to degrade gracefully. For non-critical functionalities, if a backend service is unavailable, the gateway could return a cached response, a default response, or inform the client without causing a hard error.
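The circuit breaker and backoff behavior described above can be sketched as follows. This is a simplified illustration under stated assumptions: the thresholds are arbitrary, the half-open state lets every request through after the cool-down rather than a single probe, and jitter is omitted from the backoff schedule.

```python
import time

class CircuitBreaker:
    """Trips after consecutive failures, then allows traffic again after a cool-down."""

    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock          # injectable so the breaker can be tested
        self.failures = 0
        self.opened_at = None       # None means the circuit is closed

    def allow_request(self) -> bool:
        if self.opened_at is None:
            return True
        # Once the cool-down has elapsed, let trial requests through (half-open).
        return self.clock() - self.opened_at >= self.reset_timeout

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()  # open (or re-open) the circuit

def backoff_delays(base=0.1, factor=2.0, retries=4, cap=2.0):
    """Exponential backoff schedule in seconds, capped at `cap`."""
    return [min(cap, base * factor ** attempt) for attempt in range(retries)]
```

The gateway would check `allow_request()` before each backend call and report the outcome with `record_success()` or `record_failure()`. With the defaults, `backoff_delays()` yields waits of roughly 0.1, 0.2, 0.4, and 0.8 seconds; adding random jitter to each delay is a common refinement to avoid synchronized retries.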

5.2. Robust Security Policies

Security must be deeply ingrained in your API Gateway strategy, not an afterthought.

- Least Privilege Principle: Configure access controls such that clients and internal services only have the minimum necessary permissions to perform their functions. Avoid granting broad access where specific, limited access suffices.

- Regular Security Audits and Vulnerability Scanning: Periodically audit your gateway configurations, policies, and underlying infrastructure for security weaknesses. Conduct regular vulnerability scans and penetration testing.

- Keep Dependencies Updated: Ensure the API Gateway software, its plugins, and underlying operating system components are regularly updated to patch known vulnerabilities. Automate this process where possible.

- Centralized Secrets Management: Do not hardcode API keys, database credentials, or other sensitive information directly into gateway configurations. Use a centralized secrets management solution (e.g., HashiCorp Vault, AWS Secrets Manager, Kubernetes Secrets) and integrate the gateway with it.

- Strict Input Validation: While backend services should also validate inputs, the API Gateway can perform initial, basic validation to filter out malformed or malicious requests early, protecting backend services from unnecessary processing.

- TLS Everywhere: Enforce TLS (Transport Layer Security) for all communication—between clients and the gateway, and between the gateway and backend services. Use strong ciphers and up-to-date TLS versions.
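As an illustration of the early input validation mentioned above, the sketch below rejects obviously malformed requests before they reach a backend. The allowed methods, the size limit, and the assumption that header names arrive lowercased are all choices made for this example, not requirements of any specific gateway.

```python
import json

ALLOWED_METHODS = {"GET", "POST", "PUT", "PATCH", "DELETE"}
MAX_BODY_BYTES = 1_000_000  # hypothetical limit for this example

def validate_request(method: str, headers: dict, body: bytes) -> list:
    """Return a list of validation errors; an empty list means the request passes."""
    errors = []
    if method not in ALLOWED_METHODS:
        errors.append(f"method not allowed: {method}")
    if len(body) > MAX_BODY_BYTES:
        errors.append("body too large")
    # Assumes header names were normalized to lowercase upstream.
    content_type = headers.get("content-type", "")
    if body and content_type.startswith("application/json"):
        try:
            json.loads(body)
        except ValueError:  # covers both JSON syntax and decoding errors
            errors.append("body is not valid JSON")
    return errors
```

A gateway would typically answer with a 4xx status when the list is non-empty. Checks like these complement, rather than replace, validation in the backend services.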

5.3. Comprehensive Monitoring and Alerting

You cannot manage what you cannot measure. Robust monitoring is essential for operational visibility and proactive problem-solving.

- Collect Key Metrics: Gather all crucial metrics such as request rates, error rates, latency percentiles (P50, P90, P99), cache hit ratios, and gateway resource utilization (CPU, memory, network I/O).

- Centralized Logging: Aggregate all gateway logs into a centralized logging system (e.g., ELK Stack, Splunk, Datadog). Ensure logs are structured and contain enough detail for effective debugging, while also redacting sensitive information.

- Distributed Tracing: Implement distributed tracing (e.g., OpenTelemetry, Jaeger, Zipkin) across your gateway and backend services. This allows you to trace a single request's journey through multiple services, pinpointing bottlenecks or errors.

- Proactive Alerting: Set up alerts for deviations from normal behavior. This includes high error rates, increased latency, significant drops in throughput, or unusual resource spikes. Alerts should be actionable and notify the appropriate teams.

- Establish Baselines: Understand the "normal" operational behavior of your gateway and APIs. This baseline is crucial for detecting anomalies and effectively setting alert thresholds.
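For readers who want to see how the key metrics above are derived, the following sketch computes latency percentiles and an error rate from raw samples using only the standard library. The `summarize` function and its input shape are assumptions for illustration; in practice a metrics system such as Prometheus usually performs this aggregation.

```python
from statistics import quantiles

def summarize(latencies_ms, status_codes):
    """Compute P50/P90/P99 latency and the share of 5xx responses."""
    # n=100 returns 99 cut points; index k-1 approximates the k-th percentile.
    cuts = quantiles(latencies_ms, n=100)
    server_errors = sum(1 for code in status_codes if code >= 500)
    return {
        "p50": cuts[49],
        "p90": cuts[89],
        "p99": cuts[98],
        "error_rate": server_errors / len(status_codes),
    }
```

Comparing these values against an established baseline is what turns them into alerts: for example, a P99 that stays well above its usual level for several minutes is a more reliable signal than a single slow request.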

As highlighted earlier, APIPark offers "Detailed API Call Logging" and "Powerful Data Analysis" precisely for these purposes, enabling businesses to quickly identify and resolve issues, and even anticipate problems based on historical trends.

5.4. Versioning Strategies

As your APIs evolve, managing different versions is crucial to prevent breaking existing client applications. The API Gateway plays a central role here.

- URI Versioning: Include the version number directly in the API's URI (e.g., `/v1/users`, `/v2/users`). This is a common and straightforward approach. The gateway can then route traffic based on this path segment.

- Header Versioning: Include the API version in an HTTP header (e.g., `Accept-Version: v2` or a custom header). This keeps the URI cleaner but requires clients to manage headers.

- Content Negotiation: Use the `Accept` header to specify the desired content type, which can implicitly include a version (e.g., `Accept: application/vnd.example.v2+json`).

- Phased Rollouts: Use the gateway to route a small percentage of traffic to a new API version (canary release) while the majority still uses the older version. This allows for testing in production and minimizes risk.

- Clear Deprecation Policy: When deprecating older API versions, the gateway can help by returning appropriate warnings (e.g., `Warning` header, custom headers) or eventually rejecting requests to truly deprecated versions, guiding clients to migrate.
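The versioning strategies above can be combined in a single resolution step at the gateway. The sketch below is one possible ordering (URI first, then header, then content negotiation), not a standard; the default version, the `vnd.example` media type, and the canary helper are assumptions for illustration. Header names are matched exactly here, whereas a real gateway would treat them case-insensitively.

```python
import random
import re

DEFAULT_VERSION = "v1"  # hypothetical default when the client specifies nothing

def resolve_version(path: str, headers: dict) -> str:
    """Determine the requested API version from the path or headers."""
    # 1. URI versioning: /v2/users
    match = re.match(r"^/(v\d+)/", path)
    if match:
        return match.group(1)
    # 2. Header versioning: Accept-Version: v2
    if "Accept-Version" in headers:
        return headers["Accept-Version"]
    # 3. Content negotiation: Accept: application/vnd.example.v2+json
    match = re.search(r"application/vnd\.example\.(v\d+)\+json",
                      headers.get("Accept", ""))
    if match:
        return match.group(1)
    return DEFAULT_VERSION

def canary_route(stable: str, canary: str, canary_percent: float,
                 rng=random.random) -> str:
    """Send roughly `canary_percent` percent of requests to the canary backend."""
    return canary if rng() * 100 < canary_percent else stable
```

For example, `resolve_version("/v2/users", {})` yields `"v2"`, while a request to `/users` with no version hints falls back to the default. Passing a custom `rng` makes the canary split deterministic in tests.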

APIPark's "End-to-End API Lifecycle Management" directly supports this, assisting with the design, publication, invocation, and decommissioning of APIs, and helping regulate processes like traffic forwarding, load balancing, and versioning of published APIs.

5.5. Document Your APIs Thoroughly

Comprehensive and up-to-date documentation is essential for API consumers, whether they are internal teams or external partners.

- OpenAPI/Swagger Specifications: Use a standardized format like OpenAPI to describe your APIs. This allows for automated documentation generation, generation of client SDKs, and integration with developer portals.

- Integrated Developer Portal: Leverage your API Gateway's (or a complementary product's) developer portal to host interactive documentation, provide code samples, and offer a self-service experience for API key management and subscription.

- Clear Examples and Use Cases: Provide practical examples of requests and responses, along with clear explanations of how to use each API endpoint and its expected behavior.

- Version-Specific Documentation: Ensure documentation is versioned alongside your APIs, so consumers always see relevant information for the version they are using.

Good documentation reduces the learning curve for API consumers, accelerates integration, and minimizes support requests.

5.6. Adopt a DevOps Culture

Integrating API Gateway management into your DevOps practices ensures agility, reliability, and automation.

- Infrastructure as Code (IaC): Manage your API Gateway's configuration (routing rules, policies, security settings) as code using tools like Terraform, CloudFormation, or Kubernetes manifests. This ensures consistency, repeatability, and version control.

- Automated Deployment: Incorporate API Gateway deployment and configuration updates into your CI/CD pipelines. This allows for rapid, reliable, and consistent changes without manual intervention.

- Automated Testing: Implement automated tests for your APIs through the gateway. This includes functional tests, integration tests, performance tests, and security tests to catch issues early in the development lifecycle.

- Collaboration: Foster collaboration between development, operations, and security teams in designing, implementing, and managing the API Gateway.
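One way to combine configuration-as-code with automated testing is to lint the gateway's route definitions in the CI pipeline before they are deployed. The sketch below assumes a hypothetical route schema (`path`, `upstream`, `timeout_s`, `auth`); real gateways each have their own configuration format, so the field names and rules here are illustrative only.

```python
# Hypothetical declarative route definitions, as they might be kept in version control.
ROUTES = [
    {"path": "/v1/users", "upstream": "http://users.internal:8080",
     "timeout_s": 5, "auth": "jwt"},
    {"path": "/v1/orders", "upstream": "http://orders.internal:8080",
     "timeout_s": 10, "auth": "api-key"},
]

def lint_routes(routes):
    """Return a list of human-readable problems found in the route config."""
    problems = []
    seen_paths = set()
    for route in routes:
        path = route.get("path", "")
        if not path.startswith("/"):
            problems.append(f"{path!r}: path must start with '/'")
        if path in seen_paths:
            problems.append(f"{path!r}: duplicate route")
        seen_paths.add(path)
        if not route.get("upstream"):
            problems.append(f"{path!r}: missing upstream")
        if route.get("auth") in (None, "none"):
            problems.append(f"{path!r}: no authentication policy")
        if not 0 < route.get("timeout_s", 0) <= 30:
            problems.append(f"{path!r}: timeout must be between 0 and 30 seconds")
    return problems
```

A pipeline step can fail the build whenever `lint_routes(ROUTES)` returns a non-empty list, which catches missing authentication policies or unbounded timeouts before they reach production.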

A DevOps approach to API Gateway management leads to faster delivery, fewer errors, and a more robust API ecosystem.

5.7. Regular Performance Testing

Even with robust design, real-world traffic can reveal unforeseen bottlenecks. Regular performance testing is critical.

- Load Testing: Simulate expected peak loads to verify that the API Gateway and backend services can handle the traffic without degrading performance.

- Stress Testing: Push the system beyond its expected limits to identify breaking points and understand its behavior under extreme conditions.

- Soak Testing (Endurance Testing): Run tests over extended periods to detect memory leaks, resource exhaustion, or other performance degradation issues that might only appear over time.

- Identify Bottlenecks: Use the data from performance tests (metrics, logs, traces) to identify bottlenecks, whether they are in the gateway itself, network, database, or specific backend services.
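The mechanics of a load test can be sketched with a small concurrent driver. This example is only a toy: `fake_gateway_call` is a hypothetical stand-in that sleeps instead of sending a request, and dedicated load-testing tools are a better fit for real measurements. It does show the core idea of bounding concurrency and recording throughput and latency.

```python
import asyncio
import time

async def fake_gateway_call() -> int:
    """Stand-in for an HTTP request through the gateway; returns a status code."""
    await asyncio.sleep(0.005)
    return 200

async def run_load(total_requests: int, concurrency: int, call=fake_gateway_call):
    """Issue `total_requests` calls with at most `concurrency` in flight."""
    semaphore = asyncio.Semaphore(concurrency)
    latencies, statuses = [], []

    async def one_request():
        async with semaphore:
            start = time.perf_counter()
            status = await call()
            latencies.append(time.perf_counter() - start)
            statuses.append(status)

    started = time.perf_counter()
    await asyncio.gather(*(one_request() for _ in range(total_requests)))
    elapsed = time.perf_counter() - started
    return {
        "requests": total_requests,
        "errors": sum(status >= 500 for status in statuses),
        "rps": total_requests / elapsed,
        "max_latency_s": max(latencies),
    }
```

Running `asyncio.run(run_load(1000, 50))` reports the achieved requests per second and the slowest call. Raising `total_requests` and `concurrency` past expected peaks turns the same driver into a stress test, and running it for hours approximates a soak test.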

Proactive performance testing helps ensure that your API Gateway infrastructure is ready for production traffic and can scale effectively as your API usage grows.

Chapter 6: The Evolving Landscape – API Gateways and AI Integration

The digital landscape is in perpetual motion, and few shifts have been as transformative in recent years as the explosion of Artificial Intelligence (AI) and Machine Learning (ML). From large language models (LLMs) to sophisticated image recognition and data analysis algorithms, AI capabilities are rapidly becoming integral to a vast array of applications. This surge in AI adoption presents both incredible opportunities and unique challenges for developers and enterprises, particularly concerning how these intelligent services are integrated, managed, and exposed.

6.1. Rise of AI Services and Models

The proliferation of powerful AI models, many accessible via APIs (e.g., OpenAI's GPT, Google's Gemini, various open-source models), has democratized access to advanced intelligence. Developers are no longer required to possess deep expertise in machine learning to imbue their applications with capabilities like natural language understanding, sentiment analysis, code generation, content creation, and predictive analytics. This has led to a demand for streamlined ways to incorporate these diverse AI models into existing software architectures.

However, the very diversity that makes AI so powerful also introduces complexity. Each AI model might have its own unique API endpoint, authentication mechanism, data input format, and output structure. Managing a dozen different AI models directly from an application can quickly become a tangled web of specialized integrations.

6.2. Challenges in Integrating AI Models

Integrating multiple AI models directly into an application poses several significant challenges:

- Varied APIs and Protocols: AI models often expose different APIs, some RESTful, some gRPC, others perhaps custom binary protocols. The formats of input prompts and output responses can vary wildly.

- Complex Authentication: Each AI service might require different authentication tokens, keys, or OAuth flows, necessitating complex management within the client application.

- Cost Tracking and Management: Monitoring and controlling the costs associated with invoking different AI models can be difficult without a centralized mechanism.

- Prompt Engineering and Evolution: As AI models and best practices for prompt engineering evolve, direct integrations require frequent application-level changes to adapt.

- Version Control: Managing different versions of AI models and ensuring backward compatibility is a constant concern.

- Performance and Scalability: Ensuring reliable access to AI models, handling retries, and load balancing across instances for self-hosted models.

These challenges underscore the need for an intelligent intermediary, much like how API Gateways solved the complexity of microservices.

6.3. API Gateways as AI Gateways

This is precisely where the API Gateway evolves into an "AI Gateway," offering a specialized solution to manage the unique demands of AI service integration. An AI Gateway extends the traditional API Gateway functionalities to specifically address the complexities of AI models.

Platforms like APIPark are at the forefront of this evolution, positioning themselves as an "open-source AI gateway and API management platform." They exemplify how an API Gateway can be specifically tailored to manage the nuances of AI services:

- Quick Integration of 100+ AI Models: APIPark offers the capability to integrate a vast array of AI models from different providers (e.g., OpenAI, Google, AWS, various open-source models). This centralizes the point of integration, allowing developers to access a diverse toolkit of AI capabilities through a single interface. It provides a unified management system for authentication and cost tracking across all integrated AI models. This means developers don't have to learn the specific nuances of each AI provider's billing or credential system.

- Unified API Format for AI Invocation: A core value proposition of an AI Gateway like APIPark is standardizing the request data format across all integrated AI models. This abstraction layer is invaluable. Regardless of whether an underlying AI model expects a JSON object with specific keys or a plain text prompt, the client application interacts with a consistent RESTful API provided by APIPark. The gateway handles the complex translation from the unified format to the specific AI model's required input and transforms the AI model's diverse output back into a predictable, standardized format for the application. This ensures that changes in underlying AI models or prompt engineering techniques do not necessitate changes in the application or microservices, thereby simplifying AI usage and significantly reducing maintenance costs.

- Prompt Encapsulation into REST API: APIPark enables users to quickly combine AI models with custom prompts to create new, purpose-built APIs. For example, a user could define a prompt that instructs an LLM to perform sentiment analysis on a piece of text. APIPark can then encapsulate this combination (AI model + specific prompt) into a standard REST API. Clients can simply call this new "Sentiment Analysis API" with their text, and APIPark handles sending the text to the AI model with the predefined prompt, returning the sentiment result. This simplifies the creation and consumption of AI-powered functionalities, transforming complex AI model interactions into easy-to-use, reusable APIs.
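The unified-format and prompt-encapsulation ideas above can be illustrated with a small adapter layer. This is a conceptual sketch, not APIPark's implementation: the provider names, payload shapes, model name, and prompt template are all invented for the example and do not correspond to any real provider's API.

```python
# Hypothetical provider payload shapes, invented purely for illustration.
def to_provider_a(model: str, prompt: str) -> dict:
    return {"model": model, "messages": [{"role": "user", "content": prompt}]}

def to_provider_b(model: str, prompt: str) -> dict:
    return {"engine": model, "input_text": prompt}

ADAPTERS = {"provider-a": to_provider_a, "provider-b": to_provider_b}

def translate(unified: dict) -> dict:
    """Convert one unified request into the selected provider's payload."""
    adapter = ADAPTERS[unified["provider"]]
    return adapter(unified["model"], unified["prompt"])

# Prompt encapsulation: a fixed prompt plus a model becomes a purpose-built API.
SENTIMENT_TEMPLATE = (
    "Classify the sentiment of the following text as positive, "
    "negative, or neutral:\n\n{text}"
)

def sentiment_api_request(text: str, provider: str = "provider-a",
                          model: str = "example-model") -> dict:
    """Build the upstream payload for a hypothetical 'Sentiment Analysis API'."""
    prompt = SENTIMENT_TEMPLATE.format(text=text)
    return translate({"provider": provider, "model": model, "prompt": prompt})
```

The client only supplies `text`. Switching the backing model from `provider-a` to `provider-b`, or revising the prompt template, changes nothing on the client side, which is the maintenance benefit the unified format is meant to deliver.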

By acting as a sophisticated control plane for AI models, an AI Gateway streamlines the development lifecycle for AI-powered applications. It significantly reduces the complexity associated with integrating, managing, and scaling diverse AI capabilities, freeing developers to focus on application logic rather than the intricate details of AI service consumption. This paradigm shift, led by platforms like APIPark, makes advanced AI more accessible, manageable, and cost-effective for enterprises.

Conclusion

In the labyrinthine world of modern software architectures, where microservices proliferate and APIs form the very arteries of digital communication, the API Gateway has unequivocally emerged as an indispensable architectural cornerstone. Far more than a mere proxy, it acts as the intelligent, secure, and resilient front door to your entire backend ecosystem, centralizing critical functionalities that would otherwise fragment and complicate your distributed systems.

Throughout this guide, we have explored the profound impact of the API Gateway on abstracting backend complexity, enhancing security through centralized authentication and authorization, optimizing performance with caching and rate limiting, and providing unparalleled observability into API traffic. We delved into its crucial role in API transformation and orchestration, enabling seamless communication across disparate services and protocols, and its integration with developer portals to foster a vibrant API economy. Whether deployed centrally or in a decentralized pattern, on-premise or in the cloud, the strategic implementation of an API Gateway is directly correlated with an organization's ability to scale efficiently, innovate rapidly, and maintain robust system integrity.

The principles and best practices discussed, from designing for resilience and implementing robust security policies to embracing comprehensive monitoring and a DevOps culture, are not just technical guidelines; they are fundamental tenets for building and maintaining a healthy, high-performing API infrastructure. As digital transformation continues to accelerate and new paradigms emerge, the adaptability of the API Gateway remains critical. Its evolution into specialized roles, such as the AI Gateway, further underscores its dynamic utility. Platforms like APIPark exemplify this adaptability, providing an open-source solution that not only manages traditional REST APIs but also specifically addresses the burgeoning complexities of integrating and orchestrating diverse AI models, unifying their access and simplifying their consumption.

Ultimately, the API Gateway is a strategic asset that streamlines operations, bolsters security, and empowers developers, serving as the essential control point that enables organizations to confidently navigate the complexities of today's interconnected digital landscape. Its role is not just about managing traffic; it's about orchestrating success.

Key API Gateway Capabilities and Their Benefits

| Capability | Description | Primary Benefits |
|---|---|---|
| Request Routing | Directs incoming requests to the correct backend service based on various criteria (path, headers, etc.). | Simplifies client interaction, abstracts backend complexity, enables flexible service updates. |
| Load Balancing | Distributes traffic across multiple instances of a service. | Enhances high availability, improves performance, prevents service overload. |
| Authentication & Authorization | Verifies client identity and permissions before allowing access to services. | Centralized security, reduces security burden on individual services, prevents unauthorized access. |
| Rate Limiting & Throttling | Controls the number of requests a client can make within a period. | Protects backend services from abuse/overload, ensures fair resource usage, improves system stability. |
| Caching | Stores frequently accessed API responses to serve subsequent requests faster. | Reduces latency, improves performance, decreases load on backend services, lowers operational costs. |
| Transformation & Orchestration | Modifies request/response payloads; aggregates calls to multiple services into one response. | Standardizes API contracts, reduces client-side complexity, enables protocol mediation, simplifies versioning. |
| Monitoring & Logging | Collects detailed logs, metrics, and traces for all API traffic. | Provides operational visibility, aids in troubleshooting, enables performance analysis, supports auditing. |
| Security Policies (WAF) | Filters malicious traffic and protects against common web vulnerabilities. | Adds an extra layer of defense, protects backend services from application-level attacks. |
| Developer Portal | Provides self-service documentation, API key management, and subscription workflows. | Enhances developer experience, promotes API adoption, reduces support overhead. |
| AI Integration | Unifies access, formats, and management for diverse AI models (e.g., LLMs). | Simplifies AI model consumption, standardizes AI interactions, reduces maintenance costs for AI-powered apps. |
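
To make two of the rows above concrete, here is a minimal Python sketch of request routing combined with per-client rate limiting. It is an illustration under stated assumptions, not how any particular gateway product implements these features: the `Gateway` and `TokenBucket` classes, the route table, and the `*.internal` upstream URLs are all hypothetical, and the token bucket is only one of several common rate-limiting algorithms.

```python
import time


class TokenBucket:
    """Allows bursts of up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, capacity, rate, clock):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self):
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class Gateway:
    """Hypothetical gateway core: rate-limit first, then route by path prefix."""

    def __init__(self, routes, capacity=5, rate=1.0, clock=time.monotonic):
        # Check longer prefixes first so the most specific route wins.
        self.routes = sorted(routes.items(), key=lambda item: len(item[0]), reverse=True)
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.buckets = {}  # one token bucket per client ID

    def handle(self, client_id, path):
        """Return (status, upstream_url); upstream_url is None unless status is 200."""
        if client_id not in self.buckets:
            self.buckets[client_id] = TokenBucket(self.capacity, self.rate, self.clock)
        if not self.buckets[client_id].allow():
            return 429, None  # Too Many Requests
        for prefix, upstream in self.routes:
            if path == prefix or path.startswith(prefix + "/"):
                return 200, upstream + path
        return 404, None  # no route matched


if __name__ == "__main__":
    # Assumed route table; the upstream hosts are placeholders.
    gateway = Gateway(
        {"/orders": "http://orders.internal:8080", "/users": "http://users.internal:8080"},
        capacity=2,
        rate=1.0,
    )
    for _ in range(3):
        print(gateway.handle("client-a", "/orders/42"))
```

With a burst capacity of 2, the first two calls in the usage loop resolve to an upstream URL and the third, arriving almost immediately, should typically be rejected with 429. Checking the limit before routing is a design choice: it means even requests for unknown paths count against a client's budget, which helps against scanning.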

Frequently Asked Questions (FAQ)

- What is the fundamental difference between an API Gateway and a traditional Reverse Proxy? While both intercept traffic, an API Gateway is API-aware, meaning it understands the structure and semantics of API requests (e.g., HTTP methods, paths, headers, body content). It performs API-specific functions like authentication based on API keys or JWTs, request/response transformation, and API versioning. A traditional reverse proxy, by contrast, mainly forwards traffic based on hostnames or URL paths, often adding TLS termination and basic load balancing, and typically does not inspect or transform API payloads or enforce per-consumer API policies. The gateway adds that layer of API-specific logic and management on top of plain forwarding.

- Can an API Gateway replace a Service Mesh, or are they used together? No, an API Gateway cannot replace a Service Mesh; they serve different, albeit complementary, purposes. An API Gateway manages "north-south" traffic (external client to services, acting as the edge of your network) and focuses on external concerns like public API exposure, client authentication, and rate limiting. A Service Mesh, on the other hand, manages "east-west" traffic (internal service-to-service communication within your network) and addresses concerns like internal traffic routing, inter-service resilience (circuit breakers, retries), and internal observability. They are typically used together, with the API Gateway directing external traffic into the service mesh, which then governs the communication between microservices.

- What are the key benefits of centralizing API security with an API Gateway? Centralizing API security with an API Gateway offers several significant advantages: it provides a single point of enforcement for authentication and authorization, reducing redundant security implementations across individual services. This leads to more consistent security policies, fewer configuration errors, and a smaller attack surface. It also simplifies compliance audits and allows backend services to focus purely on their business logic, knowing that the gateway has already handled initial security checks.

- How does an API Gateway help in adopting a microservices architecture? An API Gateway supports a microservices architecture by providing a unified API façade that hides the complexity of numerous backend services from client applications. It allows services to evolve independently without affecting clients, routes each request to the correct service, aggregates data from multiple services, and centralizes cross-cutting concerns like security, rate limiting, and monitoring. This simplifies client-side development, improves overall system manageability, and makes microservices easier to adopt and scale.

- With the rise of AI, how is the role of an API Gateway evolving? With the explosion of AI models, the API Gateway is evolving into an "AI Gateway," taking on specialized functions to manage AI services. This includes providing a unified API format for invoking diverse AI models, encapsulating complex AI prompts into simple REST APIs, and centralizing authentication, cost tracking, and lifecycle management for AI resources. By standardizing access and simplifying integration, an AI Gateway (like APIPark) enables developers to easily leverage various AI capabilities in their applications without wrestling with the unique complexities of each individual AI model.
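
The "unified API format" idea from the last answer can be sketched as a small adapter layer. This is a hedged illustration only: `UnifiedRequest`, the `chat-vendor` and `text-vendor` provider names, and both payload shapes are hypothetical and do not describe APIPark's or any real provider's actual request format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UnifiedRequest:
    """The single request shape clients send to the (hypothetical) AI gateway."""
    model: str  # "<provider>/<model-name>", e.g. "chat-vendor/small-1"
    prompt: str
    max_tokens: int = 256


def to_chat_style(req, model_name):
    # Assumed shape for a provider that expects a list of chat messages.
    return {
        "model": model_name,
        "messages": [{"role": "user", "content": req.prompt}],
        "max_tokens": req.max_tokens,
    }


def to_completion_style(req, model_name):
    # Assumed shape for a provider that expects a flat text input.
    return {
        "model": model_name,
        "input": req.prompt,
        "parameters": {"max_new_tokens": req.max_tokens},
    }


# Hypothetical provider registry; a real gateway would load this from configuration.
ADAPTERS = {
    "chat-vendor": to_chat_style,
    "text-vendor": to_completion_style,
}


def translate(req):
    """Map a unified request to (provider, provider-specific payload)."""
    provider, _, model_name = req.model.partition("/")
    if provider not in ADAPTERS or not model_name:
        raise ValueError(f"unsupported model: {req.model!r}")
    return provider, ADAPTERS[provider](req, model_name)


if __name__ == "__main__":
    request = UnifiedRequest(model="text-vendor/base-7b", prompt="Summarize this ticket.")
    print(translate(request))
```

Because clients only ever build a `UnifiedRequest`, swapping the model behind an application becomes a change to the `model` string rather than to the calling code, which is the maintenance benefit the answer above describes.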

🚀You can securely and efficiently call the OpenAI API on APIPark in just two steps:

Step 1: Deploy the APIPark AI gateway in 5 minutes.

APIPark is written in Go (Golang), which gives it strong performance and keeps development and maintenance costs low. You can deploy APIPark with a single command:

curl -sSO https://download.apipark.com/install/quick-start.sh; bash quick-start.sh

In my experience, you can see the successful deployment interface within 5 to 10 minutes. Then, you can log in to APIPark using your account.

Step 2: Call the OpenAI API.