API Gateway Main Concepts: An Essential Guide

In modern software architecture, where distributed systems, microservices, and many applications communicate constantly, the API gateway has become an indispensable component. No longer a mere add-on, it is the front line for all external and often internal traffic, acting as the intermediary between clients and the many backend services they need to reach. This guide covers the fundamental concepts behind an API gateway: its architecture, core functionalities, benefits, and the pivotal role it plays in securing, optimizing, and simplifying the consumption of APIs.

The evolution of software development has dramatically shifted from monolithic applications to highly distributed, independently deployable microservices. This paradigm shift, while offering unparalleled flexibility and scalability, introduced a new layer of complexity: how do clients reliably discover, securely interact with, and efficiently consume services that might be scattered across different hosts, written in various languages, and constantly evolving? This is precisely the challenge an API gateway addresses, centralizing concerns that would otherwise be duplicated across numerous client applications or backend services, thereby streamlining operations and bolstering overall system resilience.

Understanding the main concepts of an API gateway is not just beneficial for architects and developers; it is crucial for anyone involved in building, maintaining, or consuming networked applications in today's digital economy. From enhancing security posture to optimizing performance, and from simplifying client-side logic to enabling sophisticated analytics, the API gateway is the linchpin that transforms a collection of disparate services into a coherent, manageable, and highly performant API ecosystem.

The Genesis and Evolution of the API Gateway

To truly appreciate the significance of an API gateway, it is imperative to understand the architectural shifts that necessitated its rise. For decades, monolithic applications dominated the software landscape. In this model, all functionalities – user interface, business logic, and data access layer – were bundled into a single, indivisible unit. Clients would typically interact directly with this monolithic application, often through a single entry point. While simpler to develop initially for smaller projects, monoliths soon buckled under the weight of growing complexity, team sizes, and the relentless demand for faster innovation cycles. Deploying a minor change often required redeploying the entire application, leading to slow release cycles and high risk.

The advent of Service-Oriented Architecture (SOA) marked the first major step towards breaking down monoliths into smaller, loosely coupled services. Enterprise Service Buses (ESBs) became popular in this era, serving as a central hub for message routing, transformation, and protocol mediation. While ESBs provided valuable integration capabilities, they often became complex, heavyweight, and ironically, new monoliths themselves, centralizing too much logic and becoming bottlenecks.

The true catalyst for the API gateway as we know it today was the rise of microservices architecture. Microservices advocate for decomposing an application into a collection of small, autonomous services, each responsible for a specific business capability, running in its own process, and communicating via lightweight mechanisms, typically HTTP APIs. While this architectural style offers immense benefits in terms of scalability, resilience, and independent development, it introduces a significant challenge for clients. Instead of interacting with one large application, clients now face dozens, if not hundreds, of granular services, each with its own endpoint, authentication requirements, and data formats.

Direct client-to-microservice communication became unwieldy and impractical for several reasons:

* Too many endpoints: Clients would need to manage connections to numerous services, complicating client-side code.
* Security fragmentation: Each service would need to implement its own authentication and authorization, leading to duplication and potential inconsistencies.
* Network latency: Multiple requests from a client to compose a single view could lead to increased latency.
* Protocol mismatch: Clients might require different protocols or data formats than the backend services provide.
* Refactoring pains: As microservices evolve, their endpoints might change, breaking client applications.

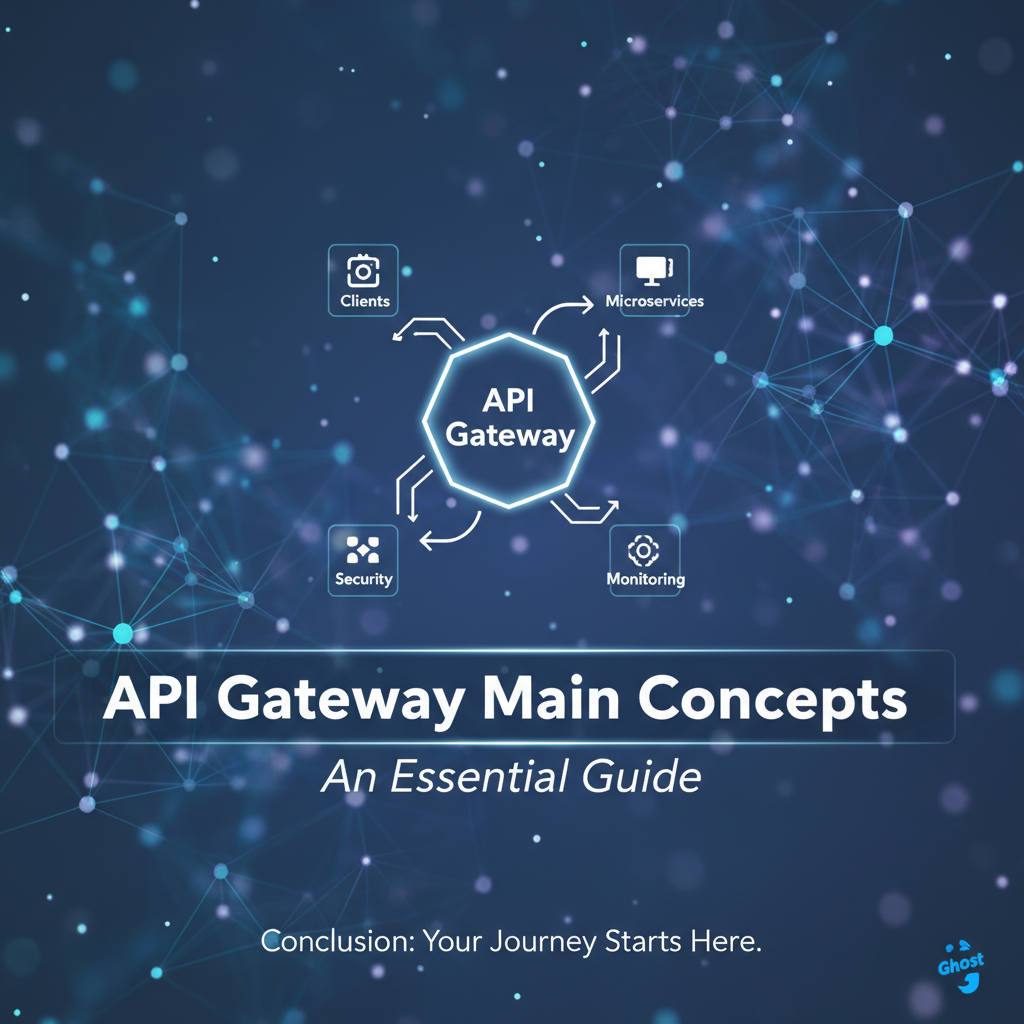

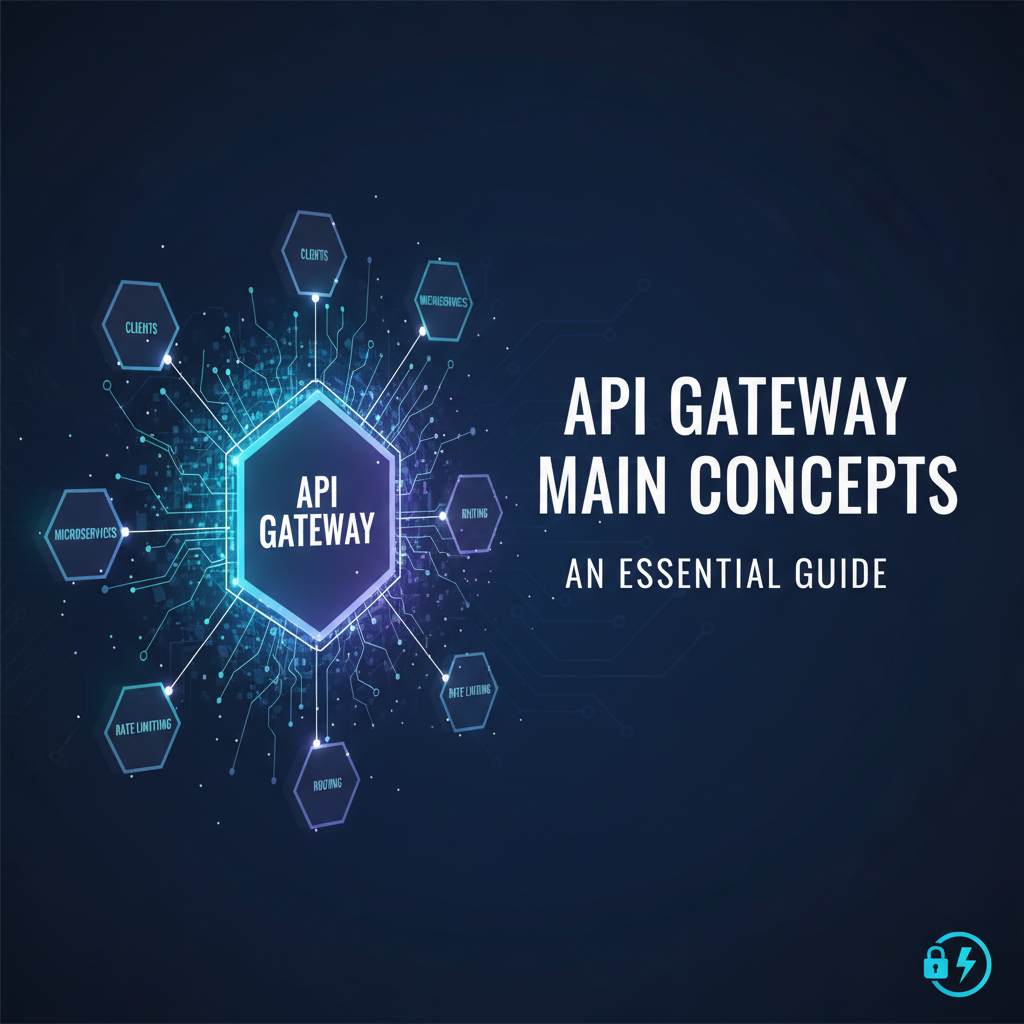

The API gateway emerged as the elegant solution to these problems. It acts as a single, centralized entry point for all client requests, abstracting the complexity of the backend microservices. Instead of clients directly interacting with individual services, they communicate solely with the API gateway, which then intelligently routes, transforms, and secures these requests before forwarding them to the appropriate backend services. This evolution transformed the gateway from a simple router into a powerful traffic manager, security enforcer, and API aggregator.

What Exactly Is an API Gateway? A Deep Dive

At its core, an API gateway is a server that acts as an API frontend, sitting between client applications and backend services. It is the single entry point for a group of APIs, centralizing common functionalities that might otherwise be implemented redundantly across multiple backend services or client applications. Think of it as the highly efficient, multilingual concierge of a vast, multi-floor hotel (your microservices architecture) that directs guests (clients) to the right room (service), handles their requests, ensures their security, and provides a seamless experience, all without them needing to know the complex inner workings of the hotel.

The API gateway is more than just a reverse proxy, although reverse proxying is a fundamental part of its functionality. A reverse proxy simply forwards requests to an upstream server; an API gateway also applies a rich set of functions before and after forwarding. It encapsulates the internal structure of the application or backend services and provides an aggregated, simplified, and consistent API for clients. This abstraction allows backend services to evolve independently without affecting external clients.

Crucially, an API gateway understands the context of an API request. It can inspect headers, query parameters, request bodies, and even the identity of the caller to make intelligent decisions about routing, security, and policy enforcement. This deep understanding allows it to apply various policies and transformations tailored to the specific needs of the API and the client.

The strategic placement of an API gateway within an architecture is typically at the edge of the network, acting as the perimeter defense and traffic director. All incoming requests from external clients first hit the gateway. After processing, the gateway then communicates with internal backend services, which are often isolated within a private network, enhancing security by limiting direct exposure. This pattern effectively decouples the client from the backend implementation details, creating a robust and flexible system boundary.

In essence, an API gateway serves as:

* A single entry point: All client requests are directed to one known endpoint.
* An abstraction layer: It hides the complexity and number of backend services from clients.
* A policy enforcement point: It applies security, rate limiting, and other operational policies.
* A traffic manager: It routes requests efficiently and can balance loads across instances.
* An API aggregator: It can combine responses from multiple services into a single response for clients, reducing client-side chatter.

This central role makes the API gateway a powerhouse for managing the lifecycle, security, and performance of modern API ecosystems.

Key Concepts and Core Components of an API Gateway

The robust functionality of an API gateway is built upon a foundation of several interconnected concepts and components, each playing a vital role in its overall operation. Understanding these individual elements is key to grasping how an API gateway delivers its multifaceted value proposition.

1. Reverse Proxy and Intelligent Routing

At its most fundamental level, an API gateway functions as a reverse proxy. This means it intercepts client requests and forwards them to the appropriate backend service. However, an API gateway goes far beyond a simple pass-through. It implements intelligent routing, which involves directing requests to specific backend services based on a variety of criteria:

* URL path: e.g., /users goes to the User Service, /products goes to the Product Service.
* HTTP method: Different methods (GET, POST, PUT, DELETE) for the same path might be routed to different handlers or services.
* Headers: Custom headers can indicate the client type, tenant ID, or preferred version, influencing routing decisions.
* Query parameters: Specific parameters might trigger routing to specialized service instances.
* Service discovery: The gateway integrates with service discovery mechanisms (e.g., Consul, Eureka, Kubernetes DNS) to dynamically locate available instances of a backend service, providing resilience and flexibility.
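Here is a minimal sketch of matching by path, method, and header. It assumes an in-memory route table. The service names and the `X-Client-Channel` header are hypothetical. Query-parameter matching and service discovery are left out, and a real gateway would resolve `upstream` to live instances through its discovery integration.

```python
from dataclasses import dataclass, field
from typing import Mapping, Optional, Sequence


@dataclass(frozen=True)
class Route:
    """One routing rule; every criterion that is set must match."""
    path_prefix: str
    upstream: str                                   # backend service name (hypothetical)
    methods: frozenset = frozenset()                # empty means any method
    headers: Mapping[str, str] = field(default_factory=dict)


def _prefix_matches(prefix: str, path: str) -> bool:
    # Match whole path segments: "/users" matches "/users/42" but not "/usersettings".
    prefix = prefix.rstrip("/")
    return prefix == "" or path == prefix or path.startswith(prefix + "/")


def match_route(routes: Sequence[Route], method: str, path: str,
                headers: Mapping[str, str]) -> Optional[str]:
    """Return the upstream of the most specific matching route, or None (a 404)."""
    lowered = {k.lower(): v for k, v in headers.items()}  # header names are case-insensitive
    candidates = [
        r for r in routes
        if _prefix_matches(r.path_prefix, path)
        and (not r.methods or method.upper() in r.methods)
        and all(lowered.get(k.lower()) == v for k, v in r.headers.items())
    ]
    if not candidates:
        return None
    # Most specific wins: longest prefix, then more header conditions, then a method restriction.
    best = max(candidates, key=lambda r: (len(r.path_prefix), len(r.headers), bool(r.methods)))
    return best.upstream


# Hypothetical route table mirroring the examples in the text.
ROUTES = [
    Route("/users", "user-service"),
    Route("/products", "product-service"),
    Route("/products", "product-service-beta", headers={"X-Client-Channel": "beta"}),
    Route("/orders", "order-read-service", methods=frozenset({"GET"})),
    Route("/orders", "order-write-service", methods=frozenset({"POST", "PUT", "DELETE"})),
]
```

The "most specific wins" rule means the order of the table doesn't matter. A header-restricted rule can sit next to a general one for the same path without shadowing it.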

This intelligent routing keeps requests flowing to healthy, appropriate service instances even as the backend landscape changes. It also enables canary releases, by directing a small percentage of traffic to a new service version, and blue/green deployments, by switching traffic from the current environment to a fully deployed new one.
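One common way to implement a canary split is to hash a stable key, such as a user ID, into 100 buckets. The sketch below assumes that approach, and the version labels are illustrative. It makes each user's assignment sticky across requests.

```python
import hashlib


def canary_bucket(key: str) -> int:
    """Map a stable key (for example a user ID) to a bucket from 0 to 99."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def choose_version(key: str, canary_percent: int, stable: str, canary: str) -> str:
    """Send roughly canary_percent% of keys to the canary; a given key always lands on the same side."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    return canary if canary_bucket(key) < canary_percent else stable
```

A key's bucket never changes. Raising `canary_percent` from 5 to 25 therefore only moves additional users onto the canary, and nobody flips back and forth. A blue/green cutover is the degenerate case of jumping from 0 to 100 at once.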

2. Load Balancing

Closely tied to intelligent routing is load balancing. When multiple instances of a backend service are available, the API gateway distributes incoming requests across these instances to ensure optimal resource utilization and prevent any single service instance from becoming overloaded. Common load balancing algorithms include:

* Round Robin: Sends requests to each server in the group in turn.
* Least Connections: Sends each request to the server with the fewest active connections.
* IP Hash: Selects the server from a hash of the client's IP address, so the same client keeps reaching the same server (sticky sessions) when needed.
* Weighted Load Balancing: Assigns each server a weight, so servers with more processing capacity or available resources receive proportionally more requests.

Effective load balancing enhances the scalability and availability of backend services, allowing them to handle increased traffic volumes gracefully.
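Below are minimal single-process sketches of the four algorithms listed above. Instance names are placeholders. The weighted variant follows the "smooth" interleaving scheme used by Nginx's upstream module, which is one of several ways to do weighted balancing.

```python
import hashlib
import itertools
from typing import Dict, List


class RoundRobin:
    """Hand out instances in a fixed cyclic order."""

    def __init__(self, instances: List[str]):
        self._cycle = itertools.cycle(instances)

    def pick(self) -> str:
        return next(self._cycle)


class LeastConnections:
    """Pick the instance with the fewest in-flight requests."""

    def __init__(self, instances: List[str]):
        self.active: Dict[str, int] = {name: 0 for name in instances}

    def acquire(self) -> str:
        name = min(self.active, key=self.active.get)  # ties go to the first listed instance
        self.active[name] += 1
        return name

    def release(self, name: str) -> None:
        self.active[name] -= 1  # call when the proxied response completes


def ip_hash(client_ip: str, instances: List[str]) -> str:
    """Map a client IP to a fixed instance (session affinity while the pool is unchanged)."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    return instances[int.from_bytes(digest[:8], "big") % len(instances)]


class SmoothWeightedRoundRobin:
    """Weighted round robin that interleaves picks instead of sending bursts to one server."""

    def __init__(self, weights: Dict[str, int]):
        self.weights = dict(weights)
        self.current = {name: 0 for name in weights}
        self.total = sum(weights.values())

    def pick(self) -> str:
        # Every server gains its weight; the leader is chosen and pays back the total.
        for name, weight in self.weights.items():
            self.current[name] += weight
        chosen = max(self.current, key=self.current.get)
        self.current[chosen] -= self.total
        return chosen
```

With weights `{"a": 5, "b": 1, "c": 1}`, seven picks yield `a a b a c a a`, so `a` never gets more than two consecutive requests. The modulo in `ip_hash` has a trade-off: adding or removing an instance remaps most clients. Consistent hashing is the usual remedy when that matters.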

3. Authentication and Authorization

Security is paramount for any API, and the API gateway serves as the primary enforcement point for authentication and authorization. Centralizing these concerns at the gateway offers significant advantages:

* Authentication: The gateway verifies the identity of the client making the request. This can involve checking API keys, validating JSON Web Tokens (JWTs), OAuth tokens, or integrating with identity providers (IdPs) like Okta, Auth0, or Azure AD. By offloading authentication to the gateway, individual backend services do not need to implement this logic, reducing complexity and potential vulnerabilities.
* Authorization: Once a client's identity is verified, the gateway determines if the authenticated client has the necessary permissions to access the requested resource or perform the desired action. This can involve checking scopes in a JWT, querying an authorization service, or applying role-based access control (RBAC) policies. Granular authorization rules can be applied based on the client's role, the requested resource, or even specific data attributes.

This centralized security management streamlines the development process, ensures consistent security policies across all APIs, and provides a single audit point for access control decisions.

4. Rate Limiting and Throttling

To protect backend services from abuse, excessive traffic, or denial-of-service (DoS) attacks, the API gateway implements rate limiting and throttling.

* Rate Limiting: This mechanism restricts the number of requests a client can make within a specified time window (e.g., 100 requests per minute). If a client exceeds this limit, subsequent requests are rejected, often with an HTTP 429 Too Many Requests status.
* Throttling: This is a more controlled form of rate limiting, where requests beyond a certain threshold are not immediately rejected but might be queued, delayed, or processed with lower priority. Throttling is often used to manage resource consumption and ensure fair usage among different client tiers (e.g., free vs. premium users).

Rate limiting and throttling are essential for maintaining the stability and availability of backend services, preventing resource exhaustion, and ensuring a predictable quality of service for all consumers.
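A token bucket is one common way to implement the rate-limiting rule described above. The sketch below assumes per-client buckets held in one process's memory. A gateway running several instances would need a shared store instead. It only rejects with 429; throttling would delay or queue the request instead. The clock is injectable so the behavior can be tested deterministically.

```python
import math
import time
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass
class TokenBucket:
    capacity: float      # maximum burst size
    refill_rate: float   # tokens added per second
    tokens: float
    updated: float

    def try_take(self, now: float) -> bool:
        # Refill in proportion to the elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.refill_rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class RateLimiter:
    """Per-client token buckets; capacity=100, refill_rate=100/60 allows about 100 requests per minute."""

    def __init__(self, capacity: float, refill_rate: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.refill_rate = refill_rate
        self.clock = clock
        self.buckets: Dict[str, TokenBucket] = {}

    def check(self, client_id: str) -> Tuple[int, Dict[str, str]]:
        """Return (status, extra_headers): 200 means forward, 429 means reject."""
        now = self.clock()
        bucket = self.buckets.get(client_id)
        if bucket is None:  # new clients start with a full bucket
            bucket = TokenBucket(self.capacity, self.refill_rate, self.capacity, now)
            self.buckets[client_id] = bucket
        if bucket.try_take(now):
            return 200, {}
        # Seconds until one whole token is available again, rounded up for Retry-After.
        wait = (1 - bucket.tokens) / self.refill_rate
        return 429, {"Retry-After": str(max(1, math.ceil(wait)))}
```

Unlike a fixed per-minute counter, the bucket allows a burst of up to `capacity` requests. Beyond that, it enforces a smooth average rate, which avoids a rush of requests at each window boundary.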

5. Request and Response Transformation

Backend services may have specific requirements for request formats or may return responses that are not ideal for direct client consumption. The API gateway can perform transformations on both incoming requests and outgoing responses:

* Request Transformation:
  * Header manipulation: Adding, removing, or modifying HTTP headers (e.g., adding a unique request ID, injecting security tokens for backend services).
  * Payload transformation: Converting data formats (e.g., XML to JSON, or simplifying complex JSON structures), remapping field names, or enriching payloads with additional data.
  * Query parameter manipulation: Adding default values, removing sensitive parameters, or reformatting them.
* Response Transformation:
  * Data masking: Hiding sensitive information before sending the response to the client (e.g., showing only the last four digits of a credit card number).
  * Format conversion: Converting backend responses to a format preferred by the client (e.g., gRPC to REST, or a specific JSON schema).
  * Aggregating responses: Combining data from multiple backend service calls into a single, unified response.

These transformations decouple the client's view of the API from the backend's implementation details, enabling greater flexibility and easier integration for diverse clients.
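As an illustration, here is a sketch of a few of the transformations listed above, applied to plain dictionaries. The field names (`email`/`emailAddress`, `cardNumber`, underscore-prefixed internal fields) and the `X-Internal-User` header are hypothetical conventions chosen for the example.

```python
import copy
import uuid
from typing import Dict, Tuple


def transform_request(headers: Dict[str, str], body: dict) -> Tuple[Dict[str, str], dict]:
    """Inbound: strip a spoofable internal header, add a request ID, rename a field."""
    # Clients must not be able to set headers that backends trust implicitly.
    out_headers = {k: v for k, v in headers.items() if k.lower() != "x-internal-user"}
    if not any(k.lower() == "x-request-id" for k in out_headers):
        out_headers["X-Request-ID"] = str(uuid.uuid4())
    out_body = copy.deepcopy(body)  # never mutate the caller's payload
    # Hypothetical mapping: the public API says "email", the backend expects "emailAddress".
    if "email" in out_body:
        out_body["emailAddress"] = out_body.pop("email")
    return out_headers, out_body


def mask_card_number(number: str) -> str:
    """Replace all but the last four digits with '*'."""
    digits = "".join(ch for ch in number if ch.isdigit())
    return "*" * max(0, len(digits) - 4) + digits[-4:]


def transform_response(body: dict) -> dict:
    """Outbound: drop internal fields (leading underscore) and mask card numbers."""
    out = {k: v for k, v in body.items() if not k.startswith("_")}
    if "cardNumber" in out:
        out["cardNumber"] = mask_card_number(out["cardNumber"])
    return out
```

Keeping each transformation a small pure function makes it easy to test in isolation. It also keeps the logic thin, in line with the caution against pushing business logic into the gateway.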

6. Caching

To improve performance and reduce the load on backend services, an API gateway can implement caching. Frequently accessed data or responses that do not change often can be stored directly at the gateway. When a subsequent request for the same resource arrives, the gateway can serve the cached response immediately, bypassing the backend service entirely.

* Benefits:
  * Reduced latency: Clients receive responses much faster.
  * Lower backend load: Backend services are hit less frequently, freeing up their resources.
  * Cost savings: For cloud-based APIs, reduced backend calls can translate to lower operational costs.

Caching strategies can include time-to-live (TTL) policies, cache invalidation mechanisms, and conditional caching based on request headers.
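The three strategies just mentioned can be combined in a small in-memory cache. The sketch below assumes that only GET responses are cached and that the cache key includes selected request headers (in the spirit of HTTP `Vary`). `fetch` is a placeholder for the backend call, and the clock is injectable for testing.

```python
import time
from typing import Callable, Dict, Iterable, Mapping, Tuple

CacheKey = Tuple[str, Tuple[Tuple[str, str], ...]]


class ResponseCache:
    """TTL cache for GET responses, keyed on the path plus selected request headers."""

    def __init__(self, ttl_seconds: float, vary: Iterable[str] = (),
                 clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.vary = tuple(h.lower() for h in vary)   # e.g. ("accept-language",)
        self.clock = clock
        self._store: Dict[CacheKey, Tuple[float, bytes]] = {}

    def get_or_fetch(self, method: str, path: str, headers: Mapping[str, str],
                     fetch: Callable[[], bytes]) -> Tuple[bytes, bool]:
        """Return (body, cache_hit); fetch() stands in for the call to the backend."""
        lowered = {k.lower(): v for k, v in headers.items()}
        # Only GETs are cached; treating "Cache-Control: no-cache" as a bypass is a simplification.
        if method.upper() != "GET" or "no-cache" in lowered.get("cache-control", ""):
            return fetch(), False
        key = (path, tuple((h, lowered.get(h, "")) for h in self.vary))
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:  # entry = (expires_at, body)
            return entry[1], True
        body = fetch()
        self._store[key] = (now + self.ttl, body)
        return body, False

    def invalidate(self, path: str) -> None:
        """Drop every cached variant of a path, e.g. after a write to that resource."""
        for key in [k for k in self._store if k[0] == path]:
            del self._store[key]
```

Including `Accept-Language` in the key prevents a German client from receiving a cached English response. Explicit `invalidate` lets a write take effect before the TTL expires.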

7. Monitoring, Logging, and Tracing

Observability is critical for any distributed system, and the API gateway is a prime location for collecting valuable operational data.

* Monitoring: The gateway can collect metrics such as request counts, error rates, latency, and resource utilization. This data is invaluable for understanding the health and performance of the API ecosystem, identifying bottlenecks, and triggering alerts.
* Logging: Every request and response passing through the gateway can be logged, providing a detailed audit trail. These logs include information like client IP, request path, response status, duration, and any errors encountered. Comprehensive logging is essential for troubleshooting, security auditing, and compliance.
* Tracing: For complex requests that traverse multiple backend services, distributed tracing capabilities (e.g., using OpenTelemetry, Zipkin, Jaeger) can be integrated into the gateway. The gateway can inject correlation IDs into requests, allowing the entire flow of a request through various services to be tracked and visualized, which is invaluable for debugging and performance optimization.
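The correlation-ID idea can be sketched with the W3C Trace Context `traceparent` header, a propagation format that OpenTelemetry supports. This is a simplified sketch. It accepts only version `00` headers and emits a structured JSON access-log line carrying the same trace-id. The log field names are illustrative assumptions, not a standard schema.

```python
import json
import re
import secrets
from typing import Optional

# W3C traceparent: version-traceid-parentid-flags, all lowercase hex.
TRACEPARENT_RE = re.compile(r"00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})")


def propagate_traceparent(incoming: Optional[str]) -> str:
    """Continue the caller's trace if its traceparent is valid, otherwise start a new one.

    The gateway records its own span, so it keeps the trace-id but issues a fresh parent-id.
    """
    m = TRACEPARENT_RE.fullmatch(incoming or "")
    if m and m.group(1) != "0" * 32 and m.group(2) != "0" * 16:  # all-zero IDs are invalid
        trace_id, flags = m.group(1), m.group(3)
    else:
        trace_id, flags = secrets.token_hex(16), "01"  # flags "01" = sampled
    return f"00-{trace_id}-{secrets.token_hex(8)}-{flags}"


def access_log_line(method: str, path: str, status: int, client_ip: str,
                    started: float, finished: float, traceparent: str) -> str:
    """One JSON log record per request; the trace-id links it to backend spans."""
    return json.dumps({
        "client_ip": client_ip,
        "method": method,
        "path": path,
        "status": status,
        "duration_ms": round((finished - started) * 1000, 1),
        "trace_id": traceparent.split("-")[1],
    })
```

The gateway forwards the returned header to the backend. Gateway log lines and backend spans then share a trace-id and can be joined in whatever tracing backend is in use.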

These observability features provide deep insights into API usage and system behavior, empowering teams to proactively manage and troubleshoot their services. Platforms such as APIPark build on them, offering detailed API call logging and data analysis features that help businesses trace and troubleshoot issues, ensuring system stability and data security. APIPark also analyzes historical call data to display long-term trends and performance changes, assisting with preventive maintenance.

8. Security Policies (WAF, DDoS Protection)

Beyond basic authentication and authorization, an API gateway can enforce more advanced security policies, often integrating capabilities found in Web Application Firewalls (WAFs) and DDoS protection services:

* WAF Integration: The gateway can inspect incoming requests for common web vulnerabilities like SQL injection, cross-site scripting (XSS), and other OWASP Top 10 threats, blocking malicious requests before they reach backend services.
* DDoS Protection: By monitoring traffic patterns and identifying anomalous spikes, the gateway can detect and mitigate distributed denial-of-service attacks, protecting backend infrastructure from being overwhelmed.
* IP Whitelisting/Blacklisting: Restricting access based on client IP addresses.
* SSL/TLS Termination: Encrypting and decrypting traffic at the gateway, offloading this CPU-intensive task from backend services and ensuring secure communication.

These advanced security features make the API gateway a formidable first line of defense against a wide array of cyber threats.
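Of these policies, IP whitelisting/blacklisting is simple enough to sketch with the standard `ipaddress` module. The sketch assumes the gateway sees the true client address, which is not the case behind another proxy unless forwarded headers are trusted carefully. It also assumes a precedence rule of deny first, then allow. WAF inspection and DDoS detection need far more than a few lines and are not shown.

```python
import ipaddress
from typing import Iterable


class IpFilter:
    """Deny-list entries always win; if an allow-list is set, only addresses in it pass."""

    def __init__(self, allow: Iterable[str] = (), deny: Iterable[str] = ()):
        self.allow = [ipaddress.ip_network(n) for n in allow]
        self.deny = [ipaddress.ip_network(n) for n in deny]

    def permits(self, client_ip: str) -> bool:
        try:
            addr = ipaddress.ip_address(client_ip)
        except ValueError:
            return False  # unparseable address: fail closed
        # Membership tests are simply False across IPv4/IPv6, so mixed lists are safe.
        if any(addr in net for net in self.deny):
            return False
        return not self.allow or any(addr in net for net in self.allow)
```

Using CIDR ranges rather than single addresses keeps the lists short. This matters because every request is checked against them.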

9. Version Management and API Lifecycle

Managing different versions of an API is a common challenge, especially in microservices environments where services evolve rapidly. The API gateway provides robust mechanisms for API version management:

* URL Versioning: e.g., /v1/users, /v2/users. The gateway routes requests to the appropriate versioned backend service.
* Header Versioning: Using custom HTTP headers (e.g., Accept-Version: v2) to indicate the desired API version.
* Query Parameter Versioning: e.g., /users?api-version=2.
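A gateway can support all three schemes at once by resolving the version before routing. In the sketch below, the precedence (URL, then the `Accept-Version` header, then the `api-version` query parameter), the default version, and the set of supported versions are all assumptions. The versioned prefix is stripped so backends see the unversioned path.

```python
from typing import Mapping, Tuple
from urllib.parse import parse_qs, urlsplit

SUPPORTED_VERSIONS = {"1", "2"}   # hypothetical
DEFAULT_VERSION = "1"             # hypothetical


def resolve_version(url: str, headers: Mapping[str, str]) -> Tuple[str, str]:
    """Return (version, backend_path); raise ValueError for unsupported versions (reply 400)."""
    parts = urlsplit(url)
    segments = parts.path.lstrip("/").split("/", 1)
    first = segments[0]
    if first[:1] == "v" and first[1:].isdigit():
        # 1. URL versioning: /v2/users/42 -> ("2", "/users/42")
        version = first[1:]
        path = "/" + (segments[1] if len(segments) > 1 else "")
    else:
        path = parts.path
        lowered = {k.lower(): v for k, v in headers.items()}
        query = parse_qs(parts.query)
        # 2. Header versioning (Accept-Version: v2), then 3. query (?api-version=2).
        raw = lowered.get("accept-version") or query.get("api-version", [DEFAULT_VERSION])[0]
        version = raw.lstrip("v")
    if version not in SUPPORTED_VERSIONS:
        raise ValueError(f"unsupported API version: {version}")
    return version, path
```

The resolved version can then select the upstream, for example a hypothetical `user-service-v2`. This lets old and new versions run side by side during a migration.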

The API gateway also plays a crucial role in the broader API lifecycle management, assisting with publishing new APIs, deprecating old ones, and managing traffic forwarding during transitions. APIPark, for example, provides end-to-end API lifecycle management covering design, publication, invocation, and decommissioning, and helps regulate management processes, traffic forwarding, load balancing, and versioning of published APIs.

10. Protocol Translation

In heterogeneous environments, clients might communicate using different protocols than backend services. An API gateway can act as a protocol translator:

* REST to gRPC: Converting standard RESTful HTTP requests into gRPC calls for highly performant backend services.
* REST to SOAP: Bridging legacy SOAP services with modern RESTful clients.
* HTTP/1.1 to HTTP/2: Upgrading or downgrading protocols as needed.

This capability significantly enhances interoperability, allowing diverse clients and services to communicate seamlessly without requiring each to understand the other's native protocol.
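As an illustration of the REST-to-SOAP case, the sketch below wraps flat JSON parameters in a SOAP 1.1 envelope and flattens the reply back into a dictionary. It assumes flat, string-valued parameters and results. The operation name and service namespace are hypothetical. A real translation would be driven by the service's WSDL and would handle nested types. REST-to-gRPC requires the service's protobuf definitions and is not shown.

```python
import xml.etree.ElementTree as ET

SOAP_ENV = "http://schemas.xmlsoap.org/soap/envelope/"  # SOAP 1.1 envelope namespace
ET.register_namespace("soap", SOAP_ENV)


def json_to_soap(operation: str, service_ns: str, params: dict) -> bytes:
    """Wrap flat JSON parameters in a SOAP 1.1 request envelope."""
    envelope = ET.Element(f"{{{SOAP_ENV}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_ENV}}}Body")
    op = ET.SubElement(body, f"{{{service_ns}}}{operation}")
    for name, value in params.items():
        ET.SubElement(op, f"{{{service_ns}}}{name}").text = str(value)
    return ET.tostring(envelope, encoding="utf-8", xml_declaration=True)


def soap_to_json(xml_bytes: bytes, service_ns: str, response_element: str) -> dict:
    """Flatten the children of the SOAP response element into a JSON-ready dict."""
    body = ET.fromstring(xml_bytes).find(f"{{{SOAP_ENV}}}Body")
    fault = body.find(f"{{{SOAP_ENV}}}Fault")
    if fault is not None:
        # SOAP 1.1 faultstring is unqualified; the gateway would map this to an HTTP error.
        raise RuntimeError(fault.findtext("faultstring", default="SOAP fault"))
    result = body.find(f"{{{service_ns}}}{response_element}")
    if result is None:
        raise RuntimeError(f"missing {response_element} in SOAP response")
    # Strip the "{namespace}" prefix from each tag; values stay strings (no schema here).
    return {child.tag.rsplit("}", 1)[-1]: child.text for child in result}
```

All values come back as strings. Restoring numeric or boolean types is exactly where schema knowledge, and therefore the WSDL, becomes necessary.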

11. Developer Portal Integration

For organizations offering APIs to external developers or even internal teams, a developer portal is essential for discoverability and ease of use. An API gateway often integrates with or includes features that support a developer portal:

* API Documentation: Publishing interactive API documentation (e.g., OpenAPI/Swagger) directly from the gateway's configuration.
* Subscription Management: Allowing developers to register, subscribe to APIs, and manage their API keys. This can include approval workflows, as supported by APIPark, where API resource access requires administrator approval, preventing unauthorized calls.
* Usage Analytics: Providing developers with insights into their API consumption.
* Self-Service: Empowering developers to onboard themselves, generate API keys, and test APIs.
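The approval workflow described above can be modeled as a small state machine that the gateway consults before forwarding a call. The sketch below is a generic, hypothetical model. It is not APIPark's API, and the class, method, and state names are illustrative.

```python
from enum import Enum
from typing import Dict, Tuple


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


class SubscriptionRegistry:
    """Hypothetical subscribe -> approve -> invoke workflow keyed by (app_id, api_id)."""

    def __init__(self):
        self._subs: Dict[Tuple[str, str], Status] = {}

    def subscribe(self, app_id: str, api_id: str) -> Status:
        # Re-subscribing returns the existing state instead of resetting it.
        return self._subs.setdefault((app_id, api_id), Status.PENDING)

    def decide(self, app_id: str, api_id: str, approve: bool) -> None:
        """Administrator action: approve or reject a pending subscription."""
        key = (app_id, api_id)
        if self._subs.get(key) is not Status.PENDING:
            raise ValueError("no pending subscription to decide")
        self._subs[key] = Status.APPROVED if approve else Status.REJECTED

    def gate(self, app_id: str, api_id: str) -> int:
        """HTTP status the gateway applies before forwarding: 200 proceeds, 403 blocks."""
        return 200 if self._subs.get((app_id, api_id)) is Status.APPROVED else 403
```

Because `gate` only admits the explicit `APPROVED` state, unknown, pending, and rejected callers are all blocked by default.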

Integration with a developer portal streamlines the developer experience, fostering broader adoption and efficient consumption of APIs. For instance, APIPark allows for centralized display of all API services, making it easy for different departments and teams to find and use required API services, enhancing team collaboration and efficiency.

The following table summarizes these core functionalities, highlighting their purpose and benefit within an API gateway:

| API Gateway Core Functionality | Purpose | Key Benefits |
|---|---|---|
| Reverse Proxy & Routing | Intercepts requests, forwards to correct backend service. | Abstracts backend complexity, enables flexible service discovery, supports blue/green deployments. |
| Load Balancing | Distributes traffic across multiple service instances. | Improves scalability, ensures high availability, prevents service overload, optimizes resource utilization. |
| Authentication & Authorization | Verifies client identity and permissions. | Centralized security, consistent policy enforcement, reduces backend security burden, enhanced auditability. |
| Rate Limiting & Throttling | Controls request volume from clients. | Protects backend services from abuse/overload, ensures fair usage, maintains service stability, manages resource consumption. |
| Request/Response Transformation | Modifies data formats, headers, or payloads. | Decouples client from backend, facilitates integration with diverse clients, simplifies backend service logic, enables data masking/enrichment. |
| Caching | Stores frequently accessed responses. | Reduces latency, lowers backend load, improves overall performance, potentially reduces infrastructure costs. |
| Monitoring, Logging, Tracing | Collects operational data and tracks request flow. | Provides deep insights into API usage/performance, facilitates troubleshooting, enables security auditing, supports proactive maintenance and issue resolution (e.g., APIPark's detailed logging and analysis). |
| Security Policies | Enforces advanced security rules (WAF, DDoS). | Protects against common web vulnerabilities, mitigates DDoS attacks, enhances overall system resilience and security posture. |
| Version Management | Manages different API versions concurrently. | Allows seamless API evolution, minimizes client breaking changes, supports phased rollouts of new features. |
| Protocol Translation | Converts requests/responses between different protocols. | Enhances interoperability between heterogeneous systems, connects modern clients to legacy services, supports diverse communication patterns. |
| Developer Portal Integration | Provides self-service for API consumers. | Improves developer experience, increases API adoption, centralizes documentation, enables self-service onboarding and subscription management (e.g., APIPark's team sharing and approval features). |

Benefits of Using an API Gateway

The strategic adoption of an API gateway brings a multitude of advantages to organizations, impacting everything from security and performance to development efficiency and operational complexity. These benefits underscore why an API gateway is not merely an optional component but a foundational element in modern distributed architectures.

1. Improved Security Posture

Perhaps one of the most compelling reasons to deploy an API gateway is the significant enhancement it brings to an organization's security posture. By serving as the single entry point for all API traffic, the gateway acts as the first line of defense.

* Centralized Security Enforcement: Instead of implementing authentication, authorization, and other security checks in every microservice, these concerns are consolidated at the gateway. This prevents security logic duplication, reduces the risk of inconsistencies or oversights, and simplifies security auditing.
* Reduced Attack Surface: Backend services can be isolated within a private network, accessible only by the gateway. This significantly reduces their exposure to the public internet, making them less vulnerable to direct attacks.
* Advanced Threat Protection: Features like Web Application Firewall (WAF) capabilities, DDoS protection, IP whitelisting/blacklisting, and bot detection can be implemented at the gateway layer, providing robust protection against a wide range of cyber threats before they even reach the core business logic.
* SSL/TLS Offloading: The gateway can handle SSL/TLS termination, decrypting incoming encrypted traffic and re-encrypting outgoing traffic. This offloads computationally intensive tasks from backend services, allowing them to focus purely on business logic.

2. Enhanced Performance and Scalability

An API gateway can dramatically improve the overall performance and scalability of an API ecosystem.

* Caching: As discussed, caching frequently accessed responses at the gateway reduces the load on backend services and significantly decreases response times for clients, leading to a much snappier user experience.
* Load Balancing: By intelligently distributing incoming requests across multiple instances of backend services, the gateway ensures optimal resource utilization and prevents any single service from becoming a bottleneck, enabling graceful scaling under heavy loads.
* Reduced Network Latency: For clients that need to aggregate data from multiple backend services to compose a single response, the API gateway can perform this aggregation internally. This reduces the number of round trips between the client and various backend services, minimizing network latency and improving perceived performance.
* Throttling and Rate Limiting: By preventing service overload, these features ensure that services remain responsive and performant even during traffic spikes, maintaining a consistent quality of service.
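The aggregation point can be sketched with `asyncio`. The gateway fans out to several backends concurrently, so the composed response takes roughly as long as the slowest call rather than the sum of all of them. The backend functions below are simulated stand-ins. The policy of returning `None` for a failed or timed-out call is an assumption. Aggregation that is deeply tied to business logic may still belong in a dedicated service behind the gateway.

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict

Fetch = Callable[[], Awaitable[Any]]


async def aggregate(calls: Dict[str, Fetch], timeout: float = 1.0) -> Dict[str, Any]:
    """Run backend calls concurrently and merge the results under their keys.

    A call that fails or exceeds the timeout contributes None instead of failing the
    whole response, so one slow service degrades the view rather than breaking it.
    """
    async def guarded(fetch: Fetch) -> Any:
        try:
            return await asyncio.wait_for(fetch(), timeout)
        except Exception:  # includes the timeout error raised by wait_for
            return None

    results = await asyncio.gather(*(guarded(f) for f in calls.values()))
    return dict(zip(calls.keys(), results))


# Simulated backends standing in for user, order, and recommendation services.
async def fetch_user() -> dict:
    await asyncio.sleep(0.05)
    return {"id": 42, "name": "Ada"}


async def fetch_orders() -> list:
    await asyncio.sleep(0.05)
    return [{"id": 1}, {"id": 2}]


async def fetch_recommendations() -> list:
    await asyncio.sleep(5)  # far slower than the gateway's timeout
    return ["never returned"]
```

Calling `asyncio.run(aggregate({"user": fetch_user, "orders": fetch_orders, "recommendations": fetch_recommendations}, timeout=0.2))` returns the user and orders after about 0.2 seconds, with `None` for the recommendations. The client still makes only one round trip.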

3. Simplified API Management and Operations

Managing a growing number of APIs and their underlying services can quickly become a complex operational nightmare. The API gateway centralizes many management tasks, simplifying operations considerably.

* Unified API Entry Point: Clients interact with a single, well-defined endpoint, abstracting away the dynamic and complex topology of backend microservices. This simplifies client-side development and reduces maintenance burden.
* API Versioning: The gateway handles different API versions, allowing backend services to evolve independently without forcing clients to immediately migrate. This enables smoother transitions and reduces breaking changes.
* Centralized Monitoring and Logging: All API traffic passes through the gateway, making it an ideal point to collect comprehensive metrics, logs, and traces. This centralized observability simplifies troubleshooting, performance analysis, and security auditing across the entire API landscape.
* Developer Experience: With features like integrated developer portals, documentation generation, and self-service API key management, the API gateway significantly enhances the experience for API consumers, fostering adoption and ease of use. Platforms like APIPark provide an open-source AI gateway and API developer portal that streamlines the integration and management of both AI and REST services, offering features like quick integration of 100+ AI models and end-to-end API lifecycle management.

4. Decoupling and Flexibility

One of the most profound benefits of an API gateway is the strong decoupling it provides between clients and backend services.

* Backend Abstraction: Clients are shielded from the internal architectural details, technologies, and scaling strategies of backend services. If a backend service needs to be refactored, replaced, or scaled, clients remain unaffected as long as the API gateway maintains the external API contract.
* Protocol Agnostic Clients: Through request and response transformations, the gateway can bridge mismatches in protocols or data formats, allowing clients to use their preferred communication style while backend services operate in their optimal environment.
* Innovation Without Disruption: This decoupling fosters agility. Backend teams can rapidly iterate on their services without worrying about breaking existing client applications, accelerating innovation cycles.

5. Multi-Tenant Support and Granular Control

For businesses that serve multiple clients, departments, or even external partners through their APIs, an API gateway can offer crucial multi-tenancy capabilities.

* Tenant Isolation: The gateway can be configured to create logical separation between different tenants. This means that each tenant can have independent API access, data, user configurations, and security policies, even when sharing underlying infrastructure. This is a core feature of APIPark, which enables the creation of multiple teams (tenants), each with independent applications, data, user configurations, and security policies, while sharing underlying applications and infrastructure to improve resource utilization and reduce operational costs.
* Granular Access Control: Beyond simple authorization, the gateway can apply very specific policies based on the tenant, client application, or even specific user attributes. This allows for fine-grained control over who can access what, under what conditions, and at what rate.
* Subscription Management with Approval: For controlled access, the gateway can enforce subscription approval processes. As mentioned with APIPark, callers must subscribe to an API and await administrator approval before they can invoke it, preventing unauthorized API calls and potential data breaches.

These benefits collectively make the API gateway a strategic investment for any organization building and managing a robust API ecosystem, enabling them to deliver secure, performant, and easily consumable APIs at scale.

Challenges and Considerations

While the benefits of an API gateway are substantial, it is not a silver bullet; it brings its own set of challenges and considerations. Organizations must be aware of these potential pitfalls to effectively implement and manage a gateway solution.

1. Single Point of Failure (SPOF)

By consolidating all incoming traffic to a single component, the API gateway inherently becomes a potential single point of failure. If the gateway goes down, all APIs become unreachable, effectively crippling the entire system.

* Mitigation: To address this, high availability (HA) configurations are crucial. This typically involves deploying multiple instances of the gateway behind a network load balancer, ensuring that if one instance fails, others can take over seamlessly. Redundancy across different availability zones or regions is also critical for disaster recovery.

2. Increased Latency

Introducing an additional layer (the API gateway) in the request path inevitably adds a small amount of latency to each request. While often negligible for individual requests, this can accumulate for highly latency-sensitive applications or requests that require chaining multiple services behind the gateway.

* Mitigation: Optimize gateway performance by using efficient routing algorithms, implementing aggressive caching strategies where appropriate, and ensuring the gateway itself is performant. Technologies known for high throughput, such as Nginx, set the benchmark here; APIPark, for example, states that its performance rivals Nginx, at over 20,000 TPS with modest resources. Avoid overly complex transformation logic within the gateway that could introduce significant processing overhead. For extreme low-latency requirements, direct service access might be considered for specific, internal communications, though this sacrifices other gateway benefits.

3. Operational Complexity

Deploying, configuring, monitoring, and maintaining an API gateway adds a new layer of operational complexity to the infrastructure.

* Configuration Management: Managing routing rules, security policies, rate limits, and transformations across potentially hundreds of APIs can become complex, requiring robust configuration management tools and practices (e.g., GitOps).
* Monitoring and Alerting: While the gateway centralizes observability, it also becomes a critical component to monitor intensely. Effective alerting mechanisms for gateway health and performance are essential.
* Skilled Personnel: Operating an API gateway often requires specialized skills in networking, security, and the specific gateway technology chosen.

4. Vendor Lock-in (for Commercial Gateways)

Choosing a commercial API gateway solution can lead to vendor lock-in, making it difficult and costly to switch to a different provider later. This can impact flexibility, pricing, and the ability to customize the gateway to specific needs.
* Mitigation: Evaluate open-source API gateway options (like APIPark) that offer greater flexibility and community support. If opting for commercial solutions, carefully assess their extensibility, integration capabilities, and exit strategies. Cloud-native gateways often integrate deeply with their respective ecosystems, which can be a strength but also a form of lock-in.

5. Over-engineering and Monolithic Gateway

There is a risk of transforming the API gateway itself into a new monolith if too much business logic, complex transformation, or service orchestration is packed into it. This "Smart Gateway" anti-pattern can lead to the very problems microservices were designed to solve: tight coupling, reduced agility, and difficult maintenance.
* Mitigation: The API gateway should primarily focus on cross-cutting concerns (security, routing, rate limiting, caching) and thin transformations. Business logic should reside within the backend microservices. The gateway should be kept as "dumb" as possible, acting as a traffic cop and policy enforcer, not a domain expert. Complex orchestration or aggregation that is deeply tied to business logic might be better handled by a dedicated aggregation service behind the gateway.

6. Performance vs. Features Trade-off

Every feature enabled on an API gateway (e.g., deep packet inspection for WAF, complex transformations, extensive logging) consumes resources and adds some degree of latency. It's a constant balancing act between enabling rich features for security and management, and maintaining optimal performance.
* Mitigation: Carefully assess which features are truly necessary for each API and disable or simplify those that are not. Profile the gateway's performance under various loads and with different feature sets enabled to understand the impact. Optimize configurations and hardware resources as needed.

By acknowledging and proactively addressing these challenges, organizations can harness the full power of an API gateway while minimizing its potential drawbacks, leading to a robust and efficient API ecosystem.


Types of API Gateways

The market for API gateway solutions is diverse, offering options that range from cloud-native services to open-source software and specialized deployments. Understanding these different types helps in choosing the right gateway for specific architectural and business needs.

1. Cloud-Native API Gateways

Cloud providers offer fully managed API gateway services that deeply integrate with their respective cloud ecosystems. These services are highly scalable, often serverless, and come with built-in features for security, monitoring, and deployment.
* Examples:
  * AWS API Gateway: A highly scalable, serverless service that enables developers to create, publish, maintain, monitor, and secure APIs at any scale. It integrates seamlessly with other AWS services like Lambda, EC2, and IAM.
  * Azure API Management: A fully managed service that allows organizations to publish, secure, transform, maintain, and monitor APIs. It offers a developer portal, analytics, and robust security features, integrating with Azure Active Directory and other Azure services.
  * Google Cloud Apigee: A comprehensive, enterprise-grade API management platform that includes an API gateway, developer portal, analytics, and advanced security capabilities. Apigee can manage APIs across hybrid and multi-cloud environments.
* Pros: High scalability, managed service (reduced operational burden), deep cloud integration, strong security features.
* Cons: Vendor lock-in, potentially higher cost for heavy usage, less control over underlying infrastructure.

2. Open Source API Gateways

Open-source API gateway solutions provide flexibility, community support, and the ability to self-host and customize. They are often preferred by organizations that require greater control, have specific deployment environments, or wish to avoid vendor lock-in.
* Examples:
  * Kong Gateway: A popular open-source API gateway built on Nginx and LuaJIT. It's known for its extensibility via plugins, high performance, and ability to manage both traditional REST APIs and modern microservices.
  * Tyk Gateway: Another robust open-source API gateway that offers a comprehensive set of features including authentication, rate limiting, quotas, analytics, and a developer portal. It's written in Go.
  * Gloo Edge: An open-source API gateway built on Envoy Proxy, designed for hybrid and multi-cloud environments, and supporting various protocols including HTTP, gRPC, and GraphQL. It's particularly strong for Kubernetes environments.
  * Ocelot: A lightweight .NET Core API gateway that provides basic routing, authentication, and service discovery features for microservices written in .NET.
  * Spring Cloud Gateway: A project from the Spring ecosystem designed to provide a simple yet powerful way to route to APIs and apply cross-cutting concerns to them. Ideal for Spring Boot microservices.
* Pros: Flexibility, control, community support, no license costs (though operational costs exist), avoids vendor lock-in.
* Cons: Requires more operational expertise, self-management of infrastructure, no direct vendor support for the open-source version (though commercial versions or paid support are often available).

3. Self-Managed / On-Premise API Gateways

Organizations with specific compliance requirements, existing data center infrastructure, or extreme performance needs might opt for self-managed or on-premise API gateway deployments. This can involve deploying open-source solutions on their own hardware or virtual machines.
* Pros: Maximum control over environment, data locality, integration with existing on-premise systems, suitable for highly regulated industries.
* Cons: High operational burden, significant infrastructure management overhead, scaling can be more challenging.

4. Specialized API Gateways (e.g., AI Gateways)

As technology evolves, specialized API gateways are emerging to cater to specific needs, such as managing Artificial Intelligence (AI) models and services. These gateways extend the core functionalities with features tailored for their niche.

A prime example of a specialized gateway is APIPark. APIPark is an open-source AI gateway and API management platform designed specifically to manage, integrate, and deploy both AI and REST services with ease.
* Key Features of APIPark:
  * Quick Integration of 100+ AI Models: It offers a unified management system for authentication and cost tracking across a diverse range of AI models.
  * Unified API Format for AI Invocation: APIPark standardizes the request data format for AI models, ensuring that application logic remains unaffected by changes in underlying AI models or prompts.
  * Prompt Encapsulation into REST API: Users can quickly combine AI models with custom prompts to create new, specialized APIs (e.g., sentiment analysis, translation).
  * End-to-End API Lifecycle Management: As a comprehensive API management platform, it assists with the entire lifecycle of APIs, from design and publication to invocation and decommissioning, including traffic forwarding, load balancing, and versioning.
  * Team Sharing and Multi-Tenancy: Facilitates API service sharing within teams and provides independent API and access permissions for each tenant, optimizing resource utilization.
  * Performance: APIPark claims performance rivaling Nginx, capable of over 20,000 TPS with an 8-core CPU and 8GB memory, supporting cluster deployment for large-scale traffic.
  * Detailed Call Logging and Data Analysis: Provides comprehensive logging and powerful data analysis to trace issues, monitor performance trends, and assist with preventive maintenance.
* Pros (for specialized gateways like APIPark): Tailored features for specific domains (e.g., AI), simplifies complex integrations, high performance for target workloads, open-source flexibility combined with enterprise-grade features.
* Cons: Might be overkill for general-purpose API management without the specific specialized need.
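
A unified AI invocation format can be sketched as an adapter layer. The application always sends one request shape, and the gateway translates it for each provider. This is a hypothetical illustration, not APIPark's actual format or code. The provider payloads are simplified versions of the OpenAI Chat Completions and Anthropic Messages request bodies, and the model names are placeholders.

```python
from dataclasses import dataclass


@dataclass
class UnifiedChatRequest:
    """Hypothetical provider-neutral request the application always sends."""
    provider: str
    model: str
    system: str
    user_message: str
    max_tokens: int = 256


def to_provider_payload(req):
    """Translate the unified request into a simplified provider-specific body."""
    if req.provider == "openai":
        # OpenAI-style chat: the system prompt travels as a message with role "system".
        return {
            "model": req.model,
            "max_tokens": req.max_tokens,
            "messages": [
                {"role": "system", "content": req.system},
                {"role": "user", "content": req.user_message},
            ],
        }
    if req.provider == "anthropic":
        # Anthropic-style messages: the system prompt is a top-level field.
        return {
            "model": req.model,
            "max_tokens": req.max_tokens,
            "system": req.system,
            "messages": [{"role": "user", "content": req.user_message}],
        }
    raise ValueError(f"unsupported provider: {req.provider}")


req = UnifiedChatRequest(provider="anthropic", model="model-x",  # placeholder model name
                         system="Answer in one sentence.", user_message="What is an API gateway?")
print(to_provider_payload(req)["system"])  # Answer in one sentence.
```

Switching providers changes only the `provider` field. The calling application never sees the provider-specific shapes.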

The choice of an API gateway type depends heavily on factors like existing infrastructure, budget, compliance requirements, desired level of control, and the specific nature of the APIs being managed.

Choosing an API Gateway: Key Considerations

Selecting the right API gateway is a critical decision that can profoundly impact the long-term success of an organization's API strategy. It's not a one-size-fits-all choice and requires careful consideration of several factors.

1. Scalability Requirements

How much traffic is your API expected to handle now, and in the future?
* High Throughput: If your APIs need to process thousands or tens of thousands of requests per second (TPS), you'll need a gateway known for its high performance and efficient resource utilization (e.g., Kong, Tyk, or cloud-native options like AWS API Gateway). APIPark is a strong contender here, touting Nginx-rivaling performance with over 20,000 TPS.
* Elastic Scalability: For unpredictable or bursty traffic patterns, a gateway that can automatically scale up and down (like serverless cloud gateways) is highly advantageous.
* Horizontal Scalability: Ensure the gateway supports horizontal scaling, allowing you to add more instances to handle increased load.
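
For horizontal scaling, a rough instance count follows from three inputs: target peak throughput, measured per-instance capacity, and a headroom margin. This is a minimal sketch. The 20,000 TPS input is simply the vendor-quoted APIPark figure. The 30% headroom and the single spare instance (N+1) are assumptions, not recommendations from any product.

```python
import math


def gateway_instances_needed(peak_tps, per_instance_tps, headroom_pct=30, spare=1):
    """Instances needed so peak load uses at most (100 - headroom_pct)% of capacity,
    plus `spare` extra instances to survive a failure (N+1)."""
    usable_tps = per_instance_tps * (100 - headroom_pct) / 100
    return math.ceil(peak_tps / usable_tps) + spare


print(gateway_instances_needed(peak_tps=50_000, per_instance_tps=20_000))  # 5
```

Measured capacity under your own feature set (authentication, logging, transformations) should replace any vendor benchmark figure.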

2. Security Needs

Security is non-negotiable. What level of security enforcement is required?
* Authentication & Authorization: Does the gateway support your existing identity providers (IdPs) and authentication mechanisms (OAuth2, JWT, API Keys)? Can it enforce fine-grained authorization policies?
* Threat Protection: Are advanced security features like WAF, DDoS protection, and bot mitigation necessary?
* Compliance: Does the gateway help meet industry-specific compliance standards (e.g., GDPR, HIPAA, PCI DSS)?
* Data Masking/Encryption: Can it perform data transformation or SSL/TLS termination to protect sensitive data?

3. Integration Capabilities

How well does the gateway integrate with your existing and future ecosystem?
* Service Discovery: Does it integrate with your service discovery mechanism (Kubernetes, Consul, Eureka)?
* Monitoring & Logging: Can it forward metrics and logs to your preferred monitoring and logging platforms (Prometheus, Grafana, ELK stack, Splunk)? APIPark provides detailed API call logging and powerful data analysis, which is crucial for comprehensive monitoring.
* Developer Portal: Does it integrate with or offer a developer portal for API documentation, subscription, and key management?
* Backend Protocols: Can it handle various backend protocols (REST, gRPC, SOAP, GraphQL)?
* AI Models: If you are integrating AI services, a specialized AI gateway like APIPark offers quick integration of 100+ AI models and unified invocation formats, making it a powerful choice.

4. Cost Implications

Cost is always a factor, encompassing more than just licensing.
* Licensing/Subscription Fees: For commercial products.
* Infrastructure Costs: For cloud-native gateways, usage-based billing; for self-managed, hardware, hosting, and operational overhead.
* Operational Costs: The cost of managing, maintaining, and troubleshooting the gateway and its infrastructure.
* Hidden Costs: Training for new tools, integration efforts, and potential vendor lock-in.

5. Vendor Support and Community

What level of support do you require?
* Commercial Support: For mission-critical applications, enterprise-grade support and SLAs from a vendor might be essential. APIPark offers a commercial version with advanced features and professional technical support for leading enterprises, alongside its open-source product.
* Community Support: For open-source solutions, a vibrant community can be invaluable for troubleshooting, finding solutions, and contributing to development.

6. Developer Experience

How easy is it for developers to use and integrate with the gateway?
* Ease of Configuration: Is the configuration intuitive and manageable (e.g., via declarative configuration, GUI, or CLI)?
* Documentation: Is the documentation clear, comprehensive, and up-to-date?
* Self-Service: Can developers easily discover APIs, obtain API keys, and test functionality?
* API Lifecycle Management: Does it simplify the management of API versions, deprecation, and new API publication? APIPark assists with managing the entire API lifecycle from design to decommission.

7. Specific Features and Use Cases

Are there any unique requirements for your APIs?
* Multi-Tenancy: Do you need to support multiple teams or customers with independent API access and policies, as provided by APIPark?
* Edge Computing: Is the gateway needed closer to the client for extremely low latency?
* Microservices Orchestration: While generally discouraged for a "dumb" gateway, some advanced use cases might necessitate limited orchestration capabilities.
* AI/ML Specifics: If your application heavily relies on AI models, a specialized AI gateway like APIPark, with its unified API format for AI invocation and prompt encapsulation into REST APIs, might be a game-changer, simplifying AI usage and reducing maintenance costs.
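
Multi-tenant policy enforcement usually comes down to keeping separate state for each tenant. This minimal sketch gives every tenant its own token-bucket rate limit. The tenant names and limits are hypothetical, and time is passed in explicitly so the example is deterministic.

```python
class TokenBucket:
    def __init__(self, rate_per_sec, capacity, now=0.0):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)  # start full so an initial burst is allowed
        self.last = now

    def allow(self, now):
        # Refill based on elapsed time, capped at the bucket capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class TenantRateLimiter:
    def __init__(self, limits):
        # limits: tenant id -> (requests per second, burst capacity)
        self.buckets = {tenant: TokenBucket(rate, cap) for tenant, (rate, cap) in limits.items()}

    def allow(self, tenant, now):
        bucket = self.buckets.get(tenant)
        if bucket is None:
            return False  # unknown tenants are denied by default
        return bucket.allow(now)


limiter = TenantRateLimiter({"team-a": (1.0, 2), "team-b": (5.0, 5)})
print([limiter.allow("team-a", now=0.0) for _ in range(3)])  # [True, True, False]
print(limiter.allow("team-a", now=1.0))                      # True
```

Because each tenant has its own bucket, a burst from `team-a` never consumes `team-b`'s allowance.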

By carefully evaluating these considerations against your organization's specific context, you can make an informed decision and select an API gateway that best aligns with your strategic objectives and operational capabilities.

Implementing an API Gateway: Best Practices

Successful implementation of an API gateway goes beyond simply deploying the software; it involves thoughtful design, strategic configuration, and robust operational practices. Adhering to best practices ensures that the gateway delivers its intended benefits without introducing new complexities or bottlenecks.

1. Design for High Availability and Resilience

Given the API gateway's role as a single entry point, its availability is paramount.
* Redundancy: Always deploy multiple gateway instances across different availability zones or regions. Use a robust load balancer (e.g., an external cloud load balancer or a hardware load balancer) in front of your gateway instances.
* Automated Failover: Configure health checks and automated failover mechanisms to quickly detect and replace unhealthy gateway instances.
* Self-Healing: Leverage container orchestration platforms like Kubernetes to manage gateway deployments, enabling self-healing capabilities and automated scaling.
* Circuit Breakers and Timeouts: Configure circuit breakers at the gateway to prevent cascading failures to backend services. Implement appropriate timeouts for backend calls to avoid holding resources indefinitely.
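
A circuit breaker can be sketched as a small state machine. After a run of consecutive failures the breaker opens and fails fast. Once a cooldown has passed it enters a simplified half-open state, allowing requests until the next recorded result decides whether it closes or re-opens. The thresholds are assumptions, and the clock is injected to keep the example deterministic.

```python
class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout_s=30.0):
        self.failure_threshold = failure_threshold
        self.reset_timeout_s = reset_timeout_s
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def allow_request(self, now):
        if self.opened_at is None:
            return True
        # Half-open: once the cooldown has elapsed, let trial traffic through.
        return now - self.opened_at >= self.reset_timeout_s

    def record_success(self):
        self.failures = 0
        self.opened_at = None  # close the circuit

    def record_failure(self, now):
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = now  # open, or re-open after a failed trial


cb = CircuitBreaker(failure_threshold=2, reset_timeout_s=10)
cb.record_failure(now=0)
cb.record_failure(now=1)         # second consecutive failure opens the circuit
print(cb.allow_request(now=5))   # False: fail fast while open
print(cb.allow_request(now=11))  # True: cooldown elapsed, trial allowed
cb.record_success()
print(cb.allow_request(now=12))  # True: circuit closed again
```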

2. Keep the Gateway "Dumb"

Resist the temptation to embed complex business logic or extensive data transformations within the API gateway.
* Focus on Cross-Cutting Concerns: The gateway should primarily handle concerns like authentication, authorization, rate limiting, routing, caching, and basic request/response transformations.
* Business Logic in Microservices: All domain-specific business logic and complex data processing should reside within the backend microservices.
* Avoid Orchestration Overload: While the gateway can perform simple aggregation of responses from multiple services, complex orchestration that is specific to a business process should ideally be handled by dedicated aggregation services or a backend-for-frontend (BFF) pattern, which sit behind the gateway.
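
One way to keep the gateway thin is to build it as a chain of small cross-cutting handlers. Each handler either rejects a request or passes it on, and all domain logic stays in the upstream services. This is a minimal sketch. The request dictionary shape, the `x-api-key` header, the key set, and the route table are hypothetical.

```python
import functools


def require_api_key(valid_keys):
    def middleware(request, next_handler):
        if request.get("headers", {}).get("x-api-key") not in valid_keys:
            return {"status": 401, "body": "missing or invalid API key"}
        return next_handler(request)
    return middleware


def route_to(routes):
    # Terminal handler: pick an upstream by path prefix; no business logic here.
    def handler(request):
        for prefix, upstream in routes.items():
            if request["path"].startswith(prefix):
                return {"status": 200, "upstream": upstream}
        return {"status": 404, "body": "no route"}
    return handler


def build_pipeline(middlewares, terminal):
    handler = terminal
    # Wrap from the innermost outwards so middlewares run in list order.
    for mw in reversed(middlewares):
        handler = functools.partial(mw, next_handler=handler)
    return handler


gateway = build_pipeline(
    [require_api_key({"k-123"})],
    route_to({"/orders": "http://orders.internal", "/users": "http://users.internal"}),
)
print(gateway({"path": "/orders/7", "headers": {"x-api-key": "k-123"}}))
# {'status': 200, 'upstream': 'http://orders.internal'}
print(gateway({"path": "/orders/7", "headers": {}})["status"])  # 401
```

New concerns such as logging or header injection become extra middlewares in the list. The upstream services never need to change.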

3. Centralize Configuration Management

Managing routing rules, policies, and security settings across multiple gateway instances can be challenging.
* Declarative Configuration: Use declarative configurations (e.g., YAML, JSON files) that can be version-controlled in Git. This enables GitOps practices, allowing changes to be reviewed, approved, and deployed systematically.
* Automated Deployment: Automate the deployment and configuration updates of the gateway using CI/CD pipelines. This ensures consistency and reduces human error.
* Environment-Specific Configurations: Manage different configurations for development, staging, and production environments using configuration management tools or environment variables.
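
The declarative approach can be sketched with a hypothetical JSON route file kept in Git. The gateway or a CI step validates the file before rollout and merges environment-specific overrides into it. This is a minimal sketch, and the schema is invented for illustration; every gateway product defines its own.

```python
import json

BASE_CONFIG = """
{
  "routes": [
    {"prefix": "/orders", "upstream": "http://orders.internal", "rate_limit_rps": 100},
    {"prefix": "/users",  "upstream": "http://users.internal",  "rate_limit_rps": 50}
  ]
}
"""

# Hypothetical per-environment overrides, keyed by route prefix.
OVERRIDES = {
    "staging": {"/orders": {"upstream": "http://orders.staging.internal"}},
    "production": {},
}


def validate(config):
    """Return a list of problems; an empty list means the config can be deployed."""
    problems = []
    seen_prefixes = set()
    for i, route in enumerate(config.get("routes", [])):
        for field in ("prefix", "upstream"):
            if field not in route:
                problems.append(f"route {i}: missing '{field}'")
        prefix = route.get("prefix")
        if prefix is not None:
            if prefix in seen_prefixes:
                problems.append(f"route {i}: duplicate prefix {prefix}")
            seen_prefixes.add(prefix)
        if route.get("rate_limit_rps", 1) <= 0:
            problems.append(f"route {i}: rate_limit_rps must be positive")
    return problems


def render(environment):
    config = json.loads(BASE_CONFIG)
    for route in config["routes"]:
        route.update(OVERRIDES[environment].get(route["prefix"], {}))
    return config


staging = render("staging")
print(validate(staging))                 # []
print(staging["routes"][0]["upstream"])  # http://orders.staging.internal
```

A CI pipeline would run `validate` on every rendered environment and block the merge if any problem is reported.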

4. Implement Robust Monitoring, Logging, and Alerting

Comprehensive observability is crucial for understanding the gateway's health and performance.
* Detailed Logging: Configure the gateway to log all relevant request/response details, including timestamps, client IPs, request paths, status codes, and latency. Integrate with a centralized logging system (e.g., ELK stack, Splunk, Loki). APIPark provides comprehensive logging capabilities, recording every detail of each API call, enabling quick tracing and troubleshooting.
* Key Metrics Collection: Collect metrics such as request rates, error rates, latency percentiles, CPU/memory utilization, and cache hit rates. Use a monitoring system like Prometheus with Grafana for visualization.
* Proactive Alerting: Set up alerts for critical conditions (e.g., high error rates, sudden drops in traffic, gateway instance failures) to enable rapid response. APIPark's powerful data analysis on historical call data aids in preventive maintenance.
* Distributed Tracing: Implement distributed tracing to track requests as they flow through the gateway and into backend services, invaluable for debugging complex interactions.
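
The metrics side can be illustrated by computing latency percentiles and the error rate from access-log records. This is a minimal sketch. It assumes JSON-lines access logs with a hypothetical `latency_ms` field and uses the nearest-rank definition of a percentile. Monitoring systems such as Prometheus typically estimate percentiles from histograms instead.

```python
import json
import math

ACCESS_LOG = """\
{"path": "/orders", "status": 200, "latency_ms": 12}
{"path": "/orders", "status": 200, "latency_ms": 15}
{"path": "/users", "status": 500, "latency_ms": 230}
{"path": "/users", "status": 200, "latency_ms": 18}
{"path": "/orders", "status": 200, "latency_ms": 11}
"""


def nearest_rank_percentile(values, p):
    """Smallest sample such that at least p% of samples are <= it."""
    ordered = sorted(values)
    rank = math.ceil(p / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


records = [json.loads(line) for line in ACCESS_LOG.splitlines() if line.strip()]
latencies = [r["latency_ms"] for r in records]
error_rate = sum(r["status"] >= 500 for r in records) / len(records)

print(nearest_rank_percentile(latencies, 50))  # 15
print(nearest_rank_percentile(latencies, 99))  # 230
print(error_rate)                              # 0.2
```

A single slow, failing call dominates the p99 while leaving the median untouched. This is why alerting usually watches tail percentiles alongside error rates.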

5. Secure by Default

Security should be built into the gateway from the ground up.
* Least Privilege: Configure the gateway with the minimum necessary permissions to perform its functions.
* Strong Authentication: Enforce strong authentication mechanisms for client access and, if applicable, for gateway management APIs.
* Regular Security Audits: Periodically audit gateway configurations and policies for vulnerabilities and compliance.
* TLS Everywhere: Ensure all communication to and from the gateway, both client-facing and toward backend services, is encrypted with TLS. Terminating client TLS at the gateway offloads certificate handling and decryption from backend services. Re-encrypt the gateway-to-backend hop (for example, with mutual TLS) so that traffic is never sent in plaintext.
* Input Validation: Implement basic input validation at the gateway to filter out obviously malicious or malformed requests.
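
Basic input validation at the edge can be a handful of cheap checks run before a request is forwarded. This is a minimal sketch. The allowed methods, the 1 MiB body limit, and the path rules are assumptions chosen for illustration, not a complete defence; a WAF or schema validation would go further.

```python
from urllib.parse import unquote

ALLOWED_METHODS = {"GET", "POST", "PUT", "DELETE"}
MAX_BODY_BYTES = 1 * 1024 * 1024  # hypothetical 1 MiB limit


def validate_request(method, path, body):
    """Return None if the request looks acceptable, else a (status, reason) tuple."""
    if method not in ALLOWED_METHODS:
        return (405, "method not allowed")
    if len(body) > MAX_BODY_BYTES:
        return (413, "payload too large")
    decoded = unquote(path)  # decode once so "%2e%2e" is seen as ".."
    if not decoded.startswith("/") or ".." in decoded.split("/"):
        return (400, "invalid path")
    if any(ord(ch) < 0x20 for ch in decoded):
        return (400, "control characters in path")
    return None


print(validate_request("GET", "/users/42", b""))                  # None
print(validate_request("GET", "/files/%2e%2e/etc/passwd", b""))   # (400, 'invalid path')
print(validate_request("TRACE", "/", b""))                        # (405, 'method not allowed')
```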

6. Optimize Performance

Continuously monitor and optimize the gateway's performance.
* Caching Strategy: Implement an intelligent caching strategy for static or infrequently changing resources to reduce backend load and improve response times.
* Efficient Transformations: Keep request/response transformations minimal and efficient. Avoid computationally intensive operations within the gateway.
* Resource Allocation: Ensure the gateway instances are adequately provisioned with CPU, memory, and network bandwidth. Vendors now advertise throughput prominently (APIPark, for example, quotes over 20,000 TPS), which shows how central raw performance has become for modern gateways.
* Connection Pooling: Configure efficient connection pooling to backend services to minimize connection overhead.
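
A caching strategy can be sketched as a small response cache keyed by method and path, with a time-to-live on each entry. This minimal sketch assumes that only GET responses are cacheable and injects the clock. Real gateways also honour `Cache-Control` headers and vary cache keys by the relevant request headers.

```python
class TTLResponseCache:
    def __init__(self, ttl_s):
        self.ttl_s = ttl_s
        self._entries = {}  # (method, path) -> (expires_at, response)

    def get(self, method, path, now):
        entry = self._entries.get((method, path))
        if entry is None:
            return None
        expires_at, response = entry
        if now >= expires_at:
            del self._entries[(method, path)]  # evict the stale entry
            return None
        return response

    def put(self, method, path, response, now):
        if method != "GET":
            return  # only cache safe, idempotent reads in this sketch
        self._entries[(method, path)] = (now + self.ttl_s, response)


def handle(cache, method, path, fetch_from_backend, now):
    cached = cache.get(method, path, now)
    if cached is not None:
        return cached, "HIT"
    response = fetch_from_backend(path)
    cache.put(method, path, response, now)
    return response, "MISS"


calls = []


def backend(path):
    calls.append(path)
    return f"payload for {path}"


cache = TTLResponseCache(ttl_s=60)
print(handle(cache, "GET", "/catalog", backend, now=0)[1])   # MISS
print(handle(cache, "GET", "/catalog", backend, now=30)[1])  # HIT
print(handle(cache, "GET", "/catalog", backend, now=61)[1])  # MISS (expired)
print(len(calls))                                            # 2
```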

7. Plan for API Versioning and Lifecycle Management

Anticipate API evolution from the start.
* Consistent Versioning Strategy: Decide on a clear API versioning strategy (e.g., URL path, header, query parameter) and implement it consistently across all APIs managed by the gateway.
* Gradual Rollouts: Use the gateway to facilitate gradual rollouts of new API versions. Canary releases send a small subset of users to the new version first. Blue/green deployments switch traffic between two complete environments and allow a quick rollback.
* Deprecation Policy: Establish a clear deprecation policy and use the gateway to communicate deprecation notices or gracefully redirect old API versions.
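
Gradual rollouts are commonly implemented through weighted routing. This minimal sketch of a canary split assumes that every client carries a stable identifier (a hypothetical user ID), so each user consistently lands on the same version. The bucket is derived with SHA-256 rather than Python's built-in `hash()`, which keeps the assignment stable across processes and restarts.

```python
import hashlib


def canary_bucket(user_id):
    """Map a user ID to a stable bucket in [0, 100)."""
    digest = hashlib.sha256(user_id.encode()).digest()
    return int.from_bytes(digest[:8], "big") % 100


def choose_version(user_id, canary_percent):
    # Users whose bucket falls below the threshold get the new version.
    return "v2" if canary_bucket(user_id) < canary_percent else "v1"


users = [f"user-{i}" for i in range(1000)]
share = sum(choose_version(u, 10) == "v2" for u in users) / len(users)
print(f"{share:.1%} of users routed to v2")
```

The printed share should sit near the configured 10%. Raising `canary_percent` only ever adds users to the canary and never moves an existing canary user back to `v1`.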

By following these best practices, organizations can maximize the value derived from their API gateway implementation, creating a stable, secure, and high-performing foundation for their API ecosystem.

The Future of API Gateways

The landscape of software architecture is in perpetual motion, and the API gateway is evolving alongside it. Several emerging trends are shaping the next generation of API gateway capabilities and deployments.

1. AI and Machine Learning Integration

The explosion of Artificial Intelligence (AI) and Machine Learning (ML) models presents new challenges and opportunities for API gateways.
* AI Model as API: More and more AI models are exposed as APIs. Specialized AI gateways like APIPark are designed to simplify the management and invocation of these models. They offer features like unified API formats for different AI models, prompt encapsulation into REST APIs, and cost tracking for AI usage, making AI integration seamless for developers.
* AI for Gateway Operations: AI and ML can be leveraged within the gateway itself for advanced functionalities:
  * Anomaly Detection: ML models can detect unusual traffic patterns, potential security threats, or performance degradation much faster than static rules.
  * Intelligent Load Balancing: AI can predict traffic surges and proactively adjust load balancing decisions or scale backend services.
  * Adaptive Rate Limiting: ML can dynamically adjust rate limits based on real-time usage patterns and user behavior, offering more flexible protection.
  * Automated Threat Response: AI could potentially trigger automated responses to identified security threats, such as temporarily blocking malicious IPs.
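
A useful baseline for comparison with ML-based anomaly detection is a rolling z-score over per-minute request counts, which already flags sudden spikes or drops. This is a minimal sketch with a hypothetical window size and threshold. It is a simple statistical stand-in, not a machine-learning model.

```python
from collections import deque
from statistics import mean, pstdev


class RateAnomalyDetector:
    def __init__(self, window=10, threshold=3.0):
        self.history = deque(maxlen=window)
        self.threshold = threshold

    def observe(self, count):
        """Return True if `count` deviates strongly from the recent window."""
        anomalous = False
        if len(self.history) == self.history.maxlen:  # only judge once the window is full
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0:
                anomalous = abs(count - mu) / sigma > self.threshold
            else:
                anomalous = count != mu  # flat baseline: any change stands out
        # Anomalies also enter the window in this sketch, so a spike widens the baseline.
        self.history.append(count)
        return anomalous


detector = RateAnomalyDetector(window=5, threshold=3.0)
traffic = [100, 104, 98, 101, 97, 103, 400, 99]  # hypothetical requests per minute
print([detector.observe(c) for c in traffic])
# [False, False, False, False, False, False, True, False]
```

An ML-based detector would replace the fixed mean and standard deviation with a learned model of expected traffic, for example one that accounts for daily seasonality.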

2. Convergence with Service Meshes

Service meshes (e.g., Istio, Linkerd, Consul Connect) have gained prominence in microservices architectures, managing inter-service communication within the cluster. This has prompted discussion about how API gateways and service meshes differ and whether they will converge.
* API Gateway (North-South Traffic): Primarily manages external (North-South) traffic from clients outside the cluster to services inside.
* Service Mesh (East-West Traffic): Primarily manages internal (East-West) traffic between services within the cluster.
* Convergence: Increasingly, API gateways are leveraging service mesh data planes (like Envoy Proxy) for their underlying proxy functionality, or service meshes are offering "ingress gateway" capabilities that blur the lines. The future might see a more unified control plane managing both external and internal traffic, perhaps with specialized proxies at the edge acting as an API gateway layer. This approach can lead to more consistent policy enforcement and observability across the entire system.

3. Edge Computing and Serverless Functions

The decentralization of computing closer to data sources and users (edge computing) and the rise of serverless architectures are impacting API gateway deployments.
* Edge Gateways: Deploying lightweight API gateways at the edge of the network can further reduce latency for geographically dispersed users and handle initial processing or caching closer to the client.
* Serverless Gateways: Cloud-native API gateways often integrate directly with serverless functions (e.g., AWS Lambda, Azure Functions), allowing developers to build highly scalable, event-driven APIs without managing servers. This paradigm shift means the gateway seamlessly scales with function invocations.

4. GraphQL Gateways

While REST APIs remain prevalent, GraphQL has gained significant traction for its efficiency in data fetching.
* GraphQL Gateways: Dedicated GraphQL gateways can aggregate data from multiple backend services (REST, SOAP, databases) and expose them through a single GraphQL endpoint, allowing clients to query precisely what they need. This reduces over-fetching and under-fetching issues common with REST.
* Hybrid Approaches: Many modern API gateways are evolving to support both REST and GraphQL APIs, allowing organizations to adopt a polyglot API strategy.

5. Increased Focus on API Security and Governance

As APIs become the backbone of the digital economy, security and governance will remain paramount.
* Zero Trust Architectures: API gateways will play an even more critical role in enforcing zero-trust principles, verifying every request regardless of its origin.
* Automated Policy Enforcement: More sophisticated policy engines will allow for automated and dynamic policy enforcement based on real-time context, user behavior, and threat intelligence.
* API Security Gateways: Specialization in API security, offering advanced threat detection, vulnerability management, and continuous compliance checks, will become standard.

The API gateway is continuously adapting to meet the demands of increasingly complex and distributed application landscapes. From a foundational component for microservices to an intelligent orchestrator of AI models and a robust security enforcer at the edge, its evolution is far from over, promising even greater capabilities and integrations in the years to come.

Conclusion

The journey through the main concepts of an API gateway reveals it to be far more than a simple traffic router; it is an indispensable component at the heart of modern API architectures. From its humble origins as a necessary abstraction layer for microservices, the API gateway has blossomed into a sophisticated control point, centralizing critical functionalities that are vital for the security, performance, and manageability of any distributed system.

We have explored how an API gateway meticulously handles tasks ranging from intelligent routing and robust load balancing to comprehensive authentication and authorization, rate limiting, and intricate request/response transformations. Its capacity for caching significantly boosts performance, while its centralized monitoring, logging, and tracing capabilities provide unparalleled visibility into the intricate dance of API interactions. Beyond these core functions, advanced security policies, seamless version management, and the ability to bridge diverse protocols underscore its versatility. Furthermore, integrating with developer portals—and in the case of specialized solutions like APIPark, offering powerful features for AI model integration and lifecycle management—enhances the entire API ecosystem for both providers and consumers.

The benefits are clear: an API gateway fortifies security, amplifies performance, simplifies API management, and fosters a flexible, decoupled architecture. However, as with any powerful tool, it demands careful implementation to mitigate challenges such as being a potential single point of failure, managing operational complexity, and avoiding the pitfall of a "monolithic gateway."

As the technological landscape continues to evolve with AI, service meshes, edge computing, and serverless paradigms, the API gateway itself is transforming, poised to integrate even more intelligence and capabilities. It remains the essential frontline, ensuring that the increasingly complex world of distributed systems can be presented to clients as a coherent, secure, and highly performant experience. Understanding these main concepts is not just about comprehending a piece of technology; it's about grasping the fundamental pillars that underpin robust, scalable, and resilient API strategies in the digital age.

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between an API Gateway and a traditional Reverse Proxy?

While an API gateway does function as a reverse proxy, forwarding client requests to backend services, its capabilities extend far beyond simple request forwarding. A traditional reverse proxy primarily handles traffic distribution and basic security (like SSL termination). An API gateway, however, is context-aware; it understands the nature of API requests and can apply sophisticated policies based on API routes, client identities, and request content. It offers advanced features like authentication, authorization, rate limiting, request/response transformation, caching, monitoring, and API version management, centralizing these cross-cutting concerns that would otherwise need to be implemented in each backend service or client.

2. Why can an API Gateway be considered a potential Single Point of Failure (SPOF), and how is this mitigated?

An API gateway acts as the sole entry point for all client requests to backend services. If the gateway itself fails or becomes unavailable, all APIs become inaccessible, effectively crippling the entire system. This makes it a potential Single Point of Failure. To mitigate this, API gateways are deployed with high availability (HA) configurations. This typically involves running multiple gateway instances in parallel, often across different availability zones or data centers. An external load balancer distributes incoming traffic among these instances, and automated health checks ensure that traffic is only routed to healthy gateway instances, with failed instances being automatically replaced or restarted.

3. How does an API Gateway help in managing API versions and the API lifecycle?

An API gateway is instrumental in managing API versions by allowing organizations to expose different versions of an API concurrently without breaking existing client applications. It can route requests to specific backend service versions based on criteria like URL path (e.g., /v1/users, /v2/users), HTTP headers, or query parameters. This enables smooth transitions during API evolution, supporting blue/green deployments or canary releases where new versions are gradually rolled out. For the API lifecycle, the gateway centralizes the publication, discovery, and eventual deprecation of APIs, ensuring that clients always access the correct and supported versions while providing clear guidance for migration.
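
The routing criteria above can be combined into a single version-resolution step. This is a minimal sketch with an assumed precedence: the URL path is checked first, then an `Accept-Version`-style header, and finally a default version applies. The header name and upstream URLs are hypothetical, and header keys are assumed to be lowercased by the gateway.

```python
import re

UPSTREAMS = {
    "v1": "http://users-v1.internal",
    "v2": "http://users-v2.internal",
}
DEFAULT_VERSION = "v1"
_PATH_VERSION = re.compile(r"^/(v\d+)(/.*)?$")


def resolve_version(path, headers):
    """Return (version, path_without_version_prefix)."""
    match = _PATH_VERSION.match(path)
    if match:
        return match.group(1), match.group(2) or "/"
    header_version = headers.get("accept-version")  # hypothetical header name
    if header_version:
        return header_version, path
    return DEFAULT_VERSION, path


def route(path, headers):
    version, backend_path = resolve_version(path, headers)
    upstream = UPSTREAMS.get(version)
    if upstream is None:
        return 400, f"unsupported API version {version}"
    return 200, upstream + backend_path


print(route("/v2/users/7", {}))                          # (200, 'http://users-v2.internal/users/7')
print(route("/users/7", {"accept-version": "v2"}))       # (200, 'http://users-v2.internal/users/7')
print(route("/users/7", {}))                             # (200, 'http://users-v1.internal/users/7')
print(route("/v9/users", {}))                            # (400, 'unsupported API version v9')
```

Deprecating `v1` then comes down to editing the `UPSTREAMS` table and the default. Clients that already pin `v2` are unaffected.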

4. Can an API Gateway replace a Service Mesh in a microservices architecture?

No, an API gateway and a service mesh serve distinct, albeit complementary, purposes. An API gateway primarily handles "North-South" traffic – communication from external clients to services within the cluster. It focuses on external API concerns like security, rate limiting, and client-facing API contracts. A service mesh, on the other hand, manages "East-West" traffic – communication between microservices within the cluster. It focuses on internal service concerns like inter-service authentication, traffic management (retries, timeouts), circuit breaking, and observability for internal service calls. While there can be some overlap (e.g., an ingress gateway in a service mesh can expose services externally), a full-fledged API gateway offers a broader set of features for external API management that a service mesh typically does not.

5. When should an organization consider adopting a specialized API Gateway, such as an AI Gateway like APIPark?

An organization should consider adopting a specialized API gateway when its core business or technical requirements extend beyond generic API management, particularly for specific domains like Artificial Intelligence. For instance, an AI Gateway like APIPark becomes highly beneficial if your application heavily relies on integrating and managing a large number of diverse AI models. Such specialized gateways offer features tailored to AI workloads, such as unified API formats for different AI models, prompt encapsulation into REST APIs, and specific cost tracking for AI invocations. This simplifies complex AI integrations, reduces maintenance costs, and standardizes AI usage, allowing developers to focus on application logic rather than the intricacies of disparate AI service APIs.
